How to automatically redact PII from audio and video files with Python

In this tutorial, we’ll learn how to automatically redact Personally Identifiable Information (PII) from audio and video files in 5 minutes using Python and AssemblyAI.

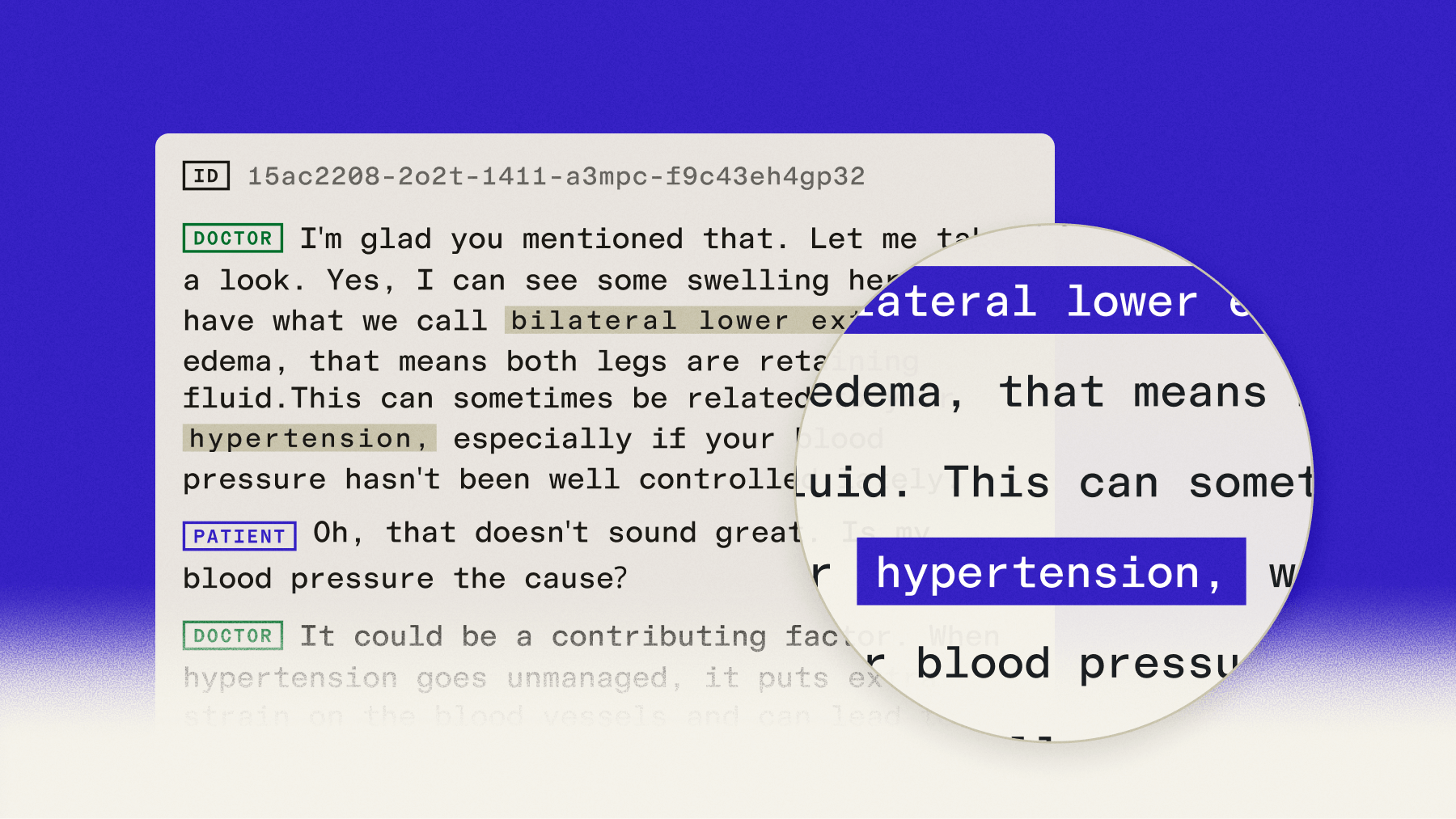

Personally Identifiable Information, or PII, is information about an individual that can be linked to that individual in an identifiable manner. How and by whom PII is handled and accessed is regulated by laws such as HIPAA, GDPR, and CCPA. In fact, data privacy is a top concern for companies building with speech recognition; a recent market survey found that over 30% of respondents cite it as a significant challenge.

Redacting PII from video or audio files (for example, a doctor/patient visit) is a very common need. Luckily, we can use AI to easily redact PII at scale. The efficiency gains are significant; for example, a healthcare case study showed that an AI scribe purpose-built for mental health professionals reduced their documentation time by 90%.

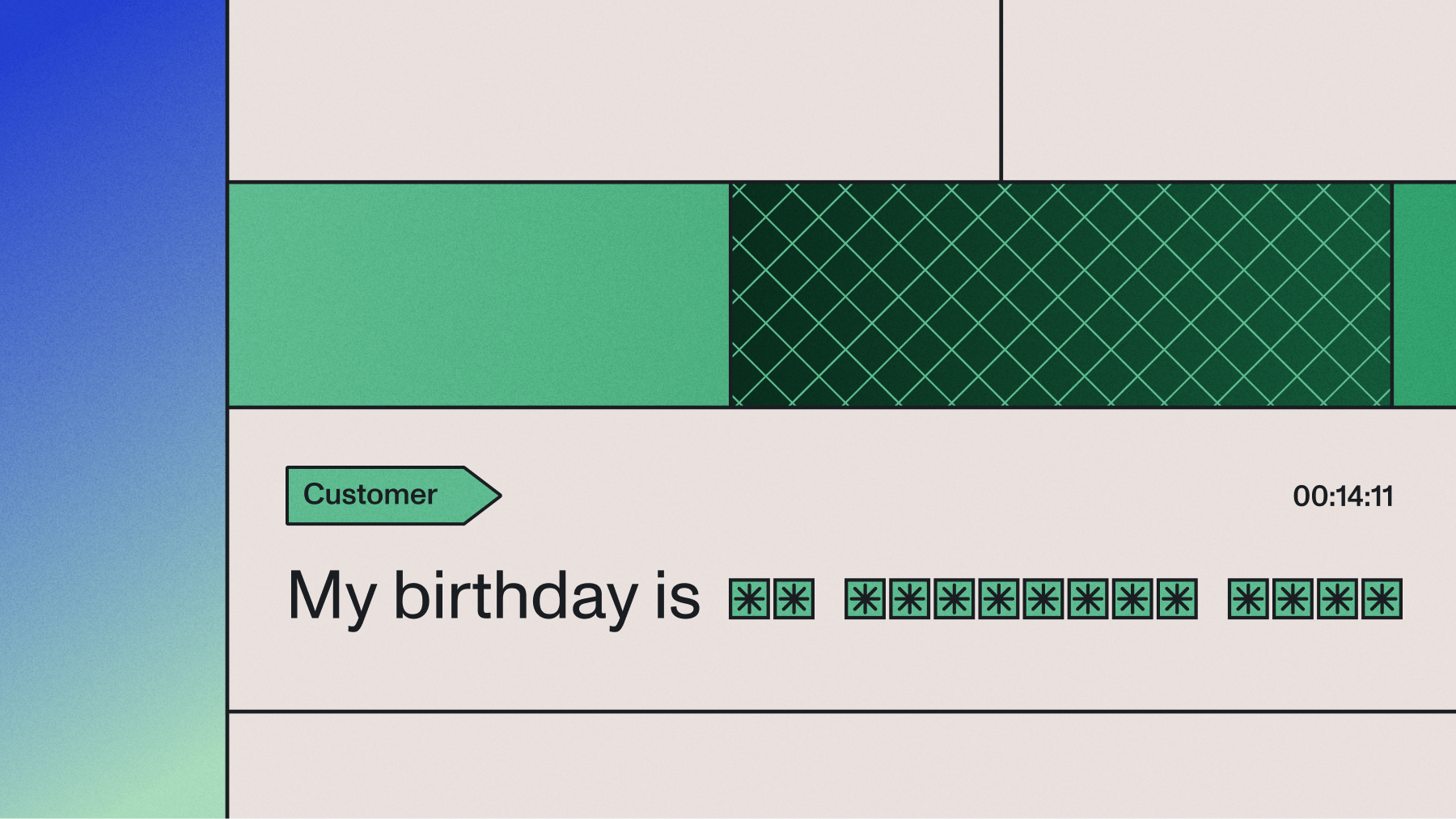

In this tutorial, we’ll learn how to redact a wide range of PII categories, like medical conditions, email addresses, and credit card numbers, from audio and video files as well as their textual transcripts. Here is the final redacted transcript we’ll create:

Good afternoon, MGK design. Hi. I’m looking to have plans drawn up for an addition in my

house. Okay, let me have one of our architects return your call. May I have your name,

please? My name is ####. ####. And your last name? My last name is #####. Would you

spell that for me, please? # # # # # #. Okay, and your telephone number? Area code?

###-###-#### that’s ###-###-#### yes, ma’am. Is there a good time to reach you? That’s

my cell, so he could catch me anytime on that. Okay, great. I’ll have him return your

call as soon as possible. Great. Thank you very much. You’re welcome. Bye.

Let’s get started!

Before you start

PII redaction automatically removes sensitive information like names, phone numbers, and addresses from audio files and their transcripts using AI models. You can redact both the text transcript and generate a new audio file with the sensitive content replaced.

To complete this tutorial, you’ll need:

- Python 3.7 or higher installed on your system

- An AssemblyAI account with an API key

- A publicly accessible audio or video file URL, or you can use our sample file

PII Redaction is one of our Guardrails features and is available on all tiers, including the free tier. You can find more information on our pricing page.

Step 1: Set up environment

First, create a project directory and navigate into it. Then, create a virtual environment:

# Mac/Linux:

python3 -m venv venv

. venv/bin/activate

# Windows:

python -m venv venv

.\venv\Scripts\activate.bat

Next, install the AssemblyAI Python SDK:

pip install assemblyai

Then set your AssemblyAI API key as an environment variable.

# Mac/Linux:

export ASSEMBLYAI_API_KEY=<your_key>

# Windows:

set ASSEMBLYAI_API_KEY=<your_key>

Step 2: Understanding PII policies and substitution methods

PII redaction requires configuring two parameters: policies (what to redact) and substitution methods (how to replace it).

PII policies

Choose from 20+ predefined categories:

- Personal identifiers: person_name, date_of_birth, phone_number, email_address

- Financial information: credit_card_number, credit_card_cvv, credit_card_expiration, bank_routing, account_number

- Government IDs: drivers_license, passport_number, us_social_security_number

- Medical information: medical_condition, medical_process, drug, injury, blood_type

- Location data: location, physical_address

- Other sensitive data: occupation, religion, language, nationality

Select policies based on your use case—medical for healthcare, financial for banking applications.
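As a rough illustration of choosing policies by use case, the sketch below groups policy names into presets. The policy names come from the list above; the grouping itself, the `POLICIES_BY_USE_CASE` dictionary, and the `policies_for` helper are assumptions made for this example and are not part of the SDK.

```python
from typing import Dict, List

# Hypothetical presets: the policy names are taken from the category list,
# but which ones belong to each use case is an assumption for illustration.
POLICIES_BY_USE_CASE: Dict[str, List[str]] = {
    "healthcare": [
        "person_name", "date_of_birth", "medical_condition",
        "medical_process", "drug", "injury", "blood_type",
    ],
    "banking": [
        "person_name", "credit_card_number", "credit_card_cvv",
        "credit_card_expiration", "bank_routing", "account_number",
    ],
}


def policies_for(use_case: str) -> List[str]:
    """Return a copy of the preset for a use case, or raise if none exists."""
    try:
        return list(POLICIES_BY_USE_CASE[use_case])
    except KeyError:
        raise ValueError(f"No policy preset defined for {use_case!r}") from None


print(policies_for("healthcare"))
```

Step 3 passes policies as attributes of `aai.PIIRedactionPolicy` (for example `aai.PIIRedactionPolicy.person_name`), so a preset like this would still need to be mapped onto those attributes before it reaches the config.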

Substitution methods

Control how redacted content appears:

- hash: Replaces with #### symbols for maximum anonymization

- entity_name: Shows [PERSON_NAME] to preserve context

- Custom text: Define your own replacement pattern

Use hash for maximum privacy or entity_name when you need to maintain document structure.
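To make the difference between the two built-in methods concrete, here is a small local sketch that mimics the output shapes seen in this tutorial's transcript. It is only an illustration: the real substitution happens inside the API, and the helper names `hash_substitute` and `entity_name_substitute` are invented for this example.

```python
import re


def hash_substitute(value: str) -> str:
    """Replace every letter and digit with '#', keeping punctuation and spaces."""
    return re.sub(r"[A-Za-z0-9]", "#", value)


def entity_name_substitute(entity_type: str) -> str:
    """Return a bracketed, upper-cased label for the entity type."""
    return f"[{entity_type.upper()}]"


print(hash_substitute("610-265-1714"))        # ###-###-####
print(entity_name_substitute("person_name"))  # [PERSON_NAME]
```

With hash, a reader learns only the rough length and shape of what was removed; with entity_name, the reader learns what kind of information was there, which is often more useful for downstream analysis.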

Audio redaction method

When redact_pii_audio=True, you can also control how PII is replaced in the redacted audio file. By default, PII is replaced with a beep tone. You can override this to use silence instead—often preferred for voice agent recordings or customer call archives where silence sounds more natural:

redact_pii_audio_options={"override_audio_redaction_method": "silence"}  # Default is "beep"

Step 3: Transcribe the file

Now that our environment is set up, we can submit an audio file for transcription. For this tutorial, we’ll use a short phone conversation between a man and an architecture firm. Create a file called main.py and add the following lines:

import assemblyai as aai

audio_url = "https://storage.googleapis.com/aai-web-samples/architecture-call.mp3"

Next, we need to configure our transcription to redact PII. Add the following lines to main.py:

config = aai.TranscriptionConfig(
    redact_pii=True,
    redact_pii_audio=True,
    redact_pii_policies=[
        aai.PIIRedactionPolicy.person_name,
        aai.PIIRedactionPolicy.phone_number,
    ],
    redact_pii_sub=aai.PIISubstitutionPolicy.hash,
    # Optional: replace PII in audio with silence instead of the default beep tone
    # redact_pii_audio_options={"override_audio_redaction_method": "silence"},
)

We enable PII Redaction through redact_pii, and specify that we also want a redacted version of the audio file itself through redact_pii_audio. We set the categories, or policies, of PII we want to redact through redact_pii_policies. Finally, we set how the PII is redacted through redact_pii_sub.

Now we can transcribe the audio file:

transcript = aai.Transcriber().transcribe(audio_url, config)

Step 4: Print the redacted transcript

Add a line to main.py to print out the redacted transcript:

print(transcript.text, '\n\n')

Step 5: Fetch the redacted audio files

Print the URL of the redacted audio file:

print(transcript.get_redacted_audio_url())

Step 6: Run the program

Execute python main.py or python3 main.py to run the program. After a few moments, the redacted transcript will be printed:

Good afternoon, MGK design. Hi. I'm looking to have plans drawn up for an addition

in my house. Okay, let me have one of our architects return your call. May I have

your name, please? My name is ####. ####. And your last name? My last name is

#####. Would you spell that for me, please? # # # # # #. Okay, and your telephone

number? Area code? ###-###-#### that's ###-###-#### yes, ma'am. Is there a good

time to reach you? That's my cell, so he could catch me anytime on that. Okay,

great. I'll have him return your call as soon as possible. Great. Thank you very

much. You're welcome. Bye.

You can see the unredacted transcript below for comparison:

Good afternoon, MGK design. Hi. I'm looking to have plans drawn up for an addition

in my house. Okay, let me have one of our architects return your call. May I have

your name, please? My name is John. John. And your last name? My last name is

Lowry. Would you spell that for me, please? L o w e r y. Okay, and your telephone

number? Area code? 610-265-1714 that's 610-265-1714 yes, ma'am. Is there a good

time to reach you? That's my cell, so he could catch me anytime on that. Okay,

great. I'll have him return your call as soon as possible. Great. Thank you very

much. You're welcome. Bye.

Note that a redacted audio file will be returned, even if you submitted a video file for transcription. In this case, you can use a tool like FFmpeg to replace the original audio in the file with the redacted version.

Optionally, you can check out the code in compare.py in the project repository to print the differences between the unredacted and redacted versions of the transcript.
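The repository's compare.py is not reproduced here. As a minimal sketch of the same idea, the standard library's `difflib` can list the word spans that differ between the two transcripts. The helper name `redacted_spans` and the shortened sample strings are assumptions for this example.

```python
import difflib
from typing import List, Tuple


def redacted_spans(original: str, redacted: str) -> List[Tuple[str, str]]:
    """Return (original_words, redacted_words) pairs where the texts differ."""
    a, b = original.split(), redacted.split()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


# Shortened excerpts of the two transcripts shown above
original = "My name is John. John. And your last name? My last name is Lowry."
redacted = "My name is ####. ####. And your last name? My last name is #####."

for before, after in redacted_spans(original, redacted):
    print(f"{before!r} -> {after!r}")
```

Comparing word by word rather than character by character keeps each redacted name or number together as one reported change.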

Production considerations and error handling

Production deployments require error handling, status validation, and retry logic.

Check transcription status

Validate completion before processing results:

import assemblyai as aai

transcript = aai.Transcriber().transcribe(audio_url, config)

if transcript.status == aai.TranscriptStatus.error:
    print(f"Transcription failed: {transcript.error}")
    # Log error, retry, or alert your team
elif transcript.status == aai.TranscriptStatus.completed:
    print(transcript.text)
    # Process the redacted transcript
else:
    print(f"Unexpected status: {transcript.status}")

Handle network and timeout errors

For production systems, wrap your API calls in try-except blocks:

import time
from typing import Optional

import assemblyai as aai

def transcribe_with_retry(
    audio_url: str,
    config: aai.TranscriptionConfig,
    max_retries: int = 3
) -> Optional[aai.Transcript]:
    """Transcribe with exponential backoff retry logic."""
    for attempt in range(max_retries):
        try:
            transcript = aai.Transcriber().transcribe(audio_url, config)
            if transcript.status == aai.TranscriptStatus.completed:
                return transcript
            elif transcript.status == aai.TranscriptStatus.error:
                # The API reported a failure; retrying the same input is unlikely to help
                print(f"Transcription error: {transcript.error}")
                return None
        except Exception as e:
            wait_time = 2 ** attempt  # Exponential backoff: 1s, 2s, 4s, ...
            print(f"Attempt {attempt + 1} failed: {e}")
            if attempt < max_retries - 1:
                print(f"Retrying in {wait_time} seconds...")
                time.sleep(wait_time)
            else:
                print("Max retries exceeded")
                return None
    # No attempt produced a completed transcript
    return None

Validate PII redaction completeness

For sensitive applications, verify that PII was actually detected and redacted:

# Check if PII was found and redacted
redacted_audio_url = transcript.get_redacted_audio_url()
if redacted_audio_url:
    print(f"Redacted audio available: {redacted_audio_url}")

# To access detailed PII entities, you must first enable entity_detection
# e.g., in your config: aai.TranscriptionConfig(..., entity_detection=True)

# Access detailed PII entities if needed for audit logs
if transcript.entities:
    for entity in transcript.entities:
        print(f"Redacted {entity.entity_type} at {entity.start}ms - {entity.end}ms")
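As an additional sanity check, you could scan the redacted text for strings that still look like PII. The sketch below is one possible approach, not a feature of the SDK: the two regular expressions and the `find_residual_pii` helper are assumptions for illustration and are far from an exhaustive detector.

```python
import re
from typing import Dict, List

# Illustrative patterns only (assumptions); extend them for your own data.
RESIDUAL_PATTERNS = {
    "phone_number": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def find_residual_pii(text: str) -> Dict[str, List[str]]:
    """Return substrings that still look like PII, keyed by pattern name."""
    hits: Dict[str, List[str]] = {}
    for name, pattern in RESIDUAL_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[name] = matches
    return hits


redacted_text = "Area code? ###-###-#### that's ###-###-#### yes, ma'am."
leaks = find_residual_pii(redacted_text)
print("No residual matches" if not leaks else f"Possible leaks: {leaks}")
```

An empty result does not prove that the transcript is clean; it only means that none of these specific patterns matched.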

Monitor processing performance

Track transcription times to ensure your application meets SLA requirements:

import time

start_time = time.time()

transcript = aai.Transcriber().transcribe(audio_url, config)

processing_time = time.time() - start_time

print(f"Processing completed in {processing_time:.2f} seconds")

# Log to your monitoring system

# metrics.record('pii_redaction.processing_time', processing_time)

Next steps

You’ve now built a complete PII redaction pipeline that automatically removes sensitive information from both audio files and their transcripts. This foundation enables you to build compliant applications that handle sensitive data safely.

The techniques covered here scale from processing single files to building enterprise-grade systems that handle millions of hours of audio. You can extend this implementation with additional features like:

- Real-time streaming PII redaction for voice agents and live call center scenarios, available via the Universal-Streaming API

- Batch processing multiple files with concurrent API calls

- Custom PII detection patterns for industry-specific terminology

- Integration with data pipelines for automated compliance workflows

- Audit logging for regulatory requirements
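For the batch-processing idea in the list above, one possible shape is a thread pool that fans out over many files. This is a sketch under assumptions: `process_batch` and `fake_transcribe` are hypothetical names, and the stand-in function replaces the real API call so that the example runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Iterable


def process_batch(
    urls: Iterable[str],
    transcribe_one: Callable[[str], str],
    max_workers: int = 4,
) -> Dict[str, str]:
    """Run transcribe_one over urls concurrently; map each URL to its result or error text."""
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(transcribe_one, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = future.result()
            except Exception as exc:  # keep one failure from aborting the batch
                results[url] = f"ERROR: {exc}"
    return results


# Stand-in for a real call such as
# aai.Transcriber().transcribe(url, config).text
def fake_transcribe(url: str) -> str:
    return f"redacted transcript for {url}"


print(process_batch(["a.mp3", "b.mp3"], fake_transcribe))
```

Threads suit this workload because each call spends most of its time waiting on the network. Choose `max_workers` with your account's concurrency limits in mind.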

To explore the full range of PII policies, advanced configuration options, and other Speech Understanding models that work alongside PII Redaction, you can try our API for free or dive deeper into our documentation.

Frequently asked questions about PII redaction

What is the difference between PII redaction and masking?

Redaction permanently removes sensitive information, while masking obscures data but keeps it partially visible (like showing ****1234 for credit cards). AssemblyAI performs true redaction—original data cannot be recovered.

How do I handle transcription errors during PII redaction?

Check transcript.status for aai.TranscriptStatus.error and log transcript.error for debugging. Implement retry logic for network timeouts and validate file formats (WAV, MP3, M4A). Note: audio redaction does not currently support .webm (Opus) files—convert to WAV or MP3 before submitting if needed.
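A pre-submission check for the .webm case might look like the sketch below. The `needs_conversion` helper and the `UNSUPPORTED_FOR_AUDIO_REDACTION` set are assumptions for this example; the set contains only the one extension named above and is not a complete list of what the API accepts or rejects.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Only the extension called out above; treat this set as an assumption to maintain.
UNSUPPORTED_FOR_AUDIO_REDACTION = {".webm"}


def needs_conversion(url_or_path: str) -> bool:
    """Return True if the file extension is known not to support audio redaction."""
    suffix = PurePosixPath(urlparse(url_or_path).path).suffix.lower()
    return suffix in UNSUPPORTED_FOR_AUDIO_REDACTION


for candidate in ("https://example.com/calls/visit.webm", "architecture-call.mp3"):
    action = "convert first" if needs_conversion(candidate) else "ok to submit"
    print(f"{candidate}: {action}")
```

The check looks only at the file name, so a mislabeled file would still slip through; inspecting the actual container format would need a tool such as FFmpeg's ffprobe.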

Can I customize PII detection for industry-specific terminology?

Combine multiple PII policies for broader coverage, or post-process transcripts with custom pattern matching. Use Keyterms Prompting to improve transcription accuracy of domain-specific terms before redaction.
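Post-processing with custom pattern matching could be sketched as follows. The identifier formats (`EMP-` plus five digits, `CLM` plus eight digits), the labels, and the `redact_custom` helper are all hypothetical; substitute the patterns that matter in your own domain.

```python
import re

# Hypothetical identifier formats that built-in policies may not cover.
CUSTOM_PATTERNS = {
    "EMPLOYEE_ID": re.compile(r"\bEMP-\d{5}\b"),
    "CLAIM_NUMBER": re.compile(r"\bCLM\d{8}\b"),
}


def redact_custom(text: str) -> str:
    """Replace each custom pattern match with a bracketed label."""
    for label, pattern in CUSTOM_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact_custom("Badge EMP-00421 filed CLM20240001."))
```

This only changes the transcript text. The redacted audio file returned by the API is unaffected, so anything caught here would still be audible there.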

How do I replace audio in my original video file with redacted audio?

When you submit a video file, AssemblyAI returns a redacted audio file. Use FFmpeg to replace the original audio track:

ffmpeg -i input_video.mp4 -i redacted_audio.wav -c:v copy -map 0:v:0 -map 1:a:0 output_video.mp4

This command copies the video stream from your original file and replaces only the audio track with the redacted version.
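If you would rather run this step from Python, the same FFmpeg invocation can be assembled as an argument list and passed to `subprocess`. This is a sketch that assumes FFmpeg is installed and on your PATH and that the redacted audio has already been downloaded; `build_ffmpeg_command` and `replace_audio` are hypothetical helper names.

```python
import subprocess
from typing import List


def build_ffmpeg_command(video_in: str, redacted_audio: str, video_out: str) -> List[str]:
    """Build the argument list for the FFmpeg command shown above."""
    return [
        "ffmpeg",
        "-i", video_in,        # input 0: original video
        "-i", redacted_audio,  # input 1: redacted audio
        "-c:v", "copy",        # copy the video stream without re-encoding
        "-map", "0:v:0",       # take video from input 0
        "-map", "1:a:0",       # take audio from input 1
        video_out,
    ]


def replace_audio(video_in: str, redacted_audio: str, video_out: str) -> None:
    """Run FFmpeg and raise CalledProcessError if it exits with a non-zero status."""
    subprocess.run(build_ffmpeg_command(video_in, redacted_audio, video_out), check=True)


print(" ".join(build_ffmpeg_command("input_video.mp4", "redacted_audio.wav", "output_video.mp4")))
```

Passing a list instead of a shell string avoids quoting problems when file names contain spaces.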

What are the performance implications of enabling multiple PII policies?

Multiple PII policies have minimal performance impact since all policies process in parallel. Processing time depends primarily on audio duration, not the number of enabled policies.
