In this tutorial, we'll learn how to perform Speech-to-Text in 5 minutes using Python and AssemblyAI's Speech-to-Text API. To interact with the API, we’ll use AssemblyAI’s Python SDK, which provides high level functions for creating and working with transcripts. Let's dive in!

Getting Started

To follow along with this tutorial, you’ll need to already have Python 3 installed on your system.

Install the SDK

To begin, we'll install the AssemblyAI Python SDK with the following terminal command:

pip install assemblyaiGet a Speech-to-Text API Key

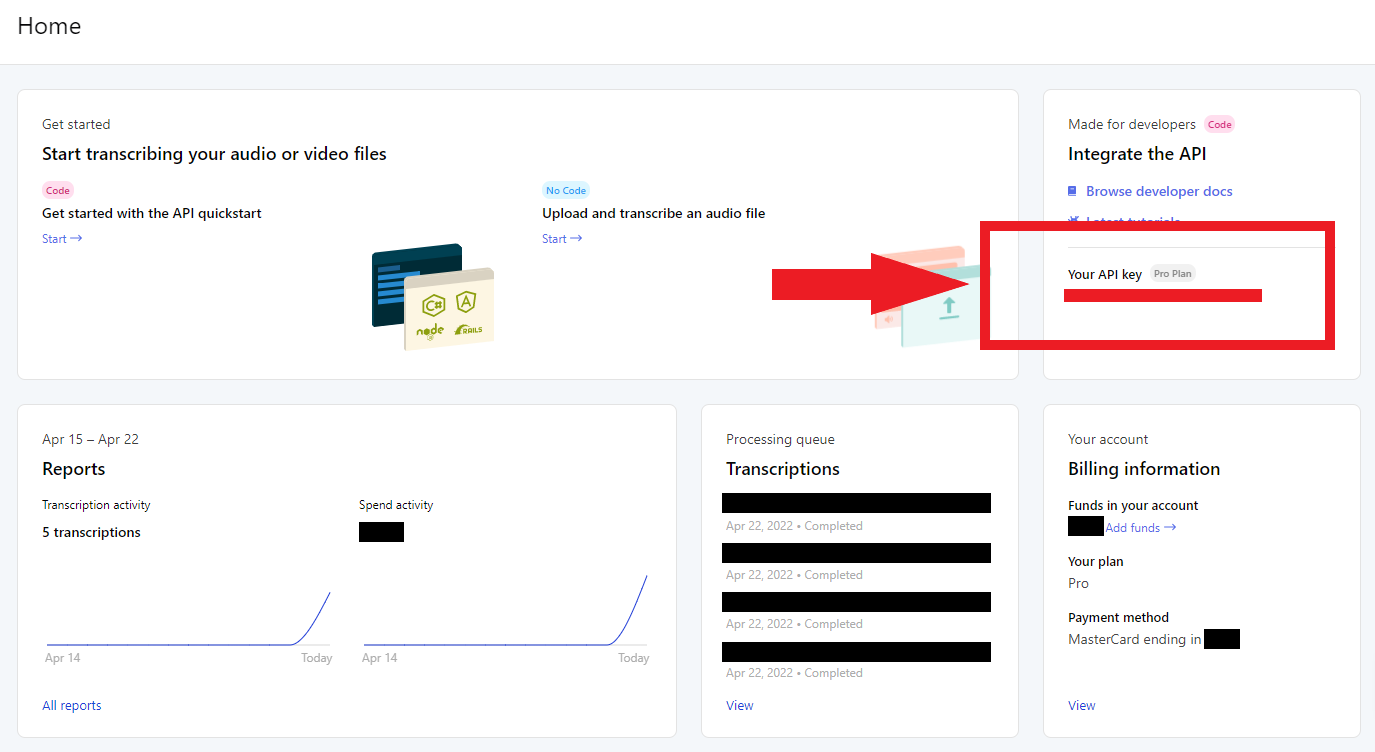

To perform the transcription, we will be using AssemblyAI's free Speech-to-Text API. If you don't yet have an account, create one here. Log in to your account to see the Dashboard, which provides a snapshot of your account. All we'll need right now is your API key. Click the key under the Your API key section on the Dashboard to copy its value.

This API key is like a fingerprint associated to your account and lets the API know that you have permission to use it.

Important Note

Never share your API key with anyone or upload it to GitHub. Your key is uniquely associated with your account and should be kept secret.

Store your API Key

We want to avoid hard coding the API key for both security and convenience reasons. Instead, we'll store the API key as an environment variable.

Back in the terminal, execute one of the following commands, depending on your operating system, replacing <YOUR_API_KEY> with the value copied previously from the AssemblyAI Dashboard:

Windows

set AAI_API_KEY=<YOUR_API_KEY>MacOS/Linux

export AAI_API_KEY=<YOUR_API_KEY>This variable only exists within the scope of the terminal process, so it will be lost upon closing the terminal. To persist this variable, set a permanent user environment variable.

Alternative: using dotenv

You can alternatively set your API key in the Python script itself using aai.settings.api_key = "YOUR_API_KEY". Note that you should not hard code this value if you use this method. Instead, store your API key in a .env file and use a package like python-dotenv to import it into the script. Do not check the .env file into source control.

How to Transcribe an Audio File with Python

Now we can get started transcribing an audio file, which can be stored either locally or remotely.

Transcribe the Audio File

Create a `main.py` file and paste the below lines of code in:

import assemblyai as aai

transcriber = aai.Transcriber()

transcript = transcriber.transcribe("https://storage.googleapis.com/aai-web-samples/gettysburg.wav")

print(transcript.text)First, we import the assemblyai package, and then instantiate a Transcriber object. Using this object’s transcribe method, we pass in the location of the file we would like to transcribe. The file can be either a local or a publicly accessible remote file - in this case, we use a remote file. Finally, we print the results of the transcript by accessing the text attribute of the resulting Transcript object.

Run the file in the terminal with python main.py (or python3) to see the result printed to the console after a few moments. Larger audio files will take longer to process.

Four score and seven years ago our fathers brought forth on this continent a new nation conceived in liberty and dedicated to the proposition that all men are created equal.HTTPS Note

HTTPS must be used when communicating with the AssemblyAI API. Using e.g. an HTTP proxy will result in errors.

That's all it takes to transcribe a file using AssemblyAI's Speech-to-Text API. To learn more about what you can do with the AssemblyAI API, like summarize files, analyze sentiment, or apply LLMs to transcripts, continue below. Otherwise, feel free to jump down to the Final Words section.

Analyzing transcripts with LLMs

The AssemblyAI Python SDK also makes it easy to apply LLMs to transcripts using LeMUR. For example, you can create a custom summary of an audio for video file. Here, we use the lemur.summarize method of the transcript object to output a summary formatted as a list of bullet points.

import assemblyai as aai

transcriber = aai.Transcriber()

transcript = transcriber.transcribe("https://storage.googleapis.com/aai-web-samples/meeting.mp4")

context = "A GitLab meeting to discuss logistics"

answer_format = "A list of bullet points"

result = transcript.lemur.summarize(

context=context,

answer_format=answer_format,

)

print(result.response)We can also generate action items from meetings or answer questions about the contents of a file using the lemur.action_items and lemur.question methods. In addition, you can define your own custom tasks for LeMUR to perform on the transcript through the lemur.task method.

To learn more about what LeMUR can do and how to use it, you can check out our website or quickstart colab. Alternatively, check out our LeMUR Playground to try it out in a no-code way.

Analyzing files with Audio Intelligence models

Beyond LeMUR, the AssemblyAI API also offers a suite of Audio Intelligence models that can extract useful information from your audio and video files.

For example, the Auto Chapters model will automatically segment the transcript into semantically-distinct chapters, returning the starting and stopping timestamps for each chapter along with a summary of each chapter. The Sentiment Analysis model will return the sentiment for each sentence in the audio as positive, negative, or neutral.

To use these models, all we have to do is “turn them on” in a TranscriptionConfig object which we can pass into our Transcriber:

import assemblyai as aai

config = aai.TranscriptionConfig(

sentiment_analysis=True,

auto_chapters=True

)

transcriber = aai.Transcriber(config=config)

transcript = transcriber.transcribe("https://storage.googleapis.com/aai-web-samples/gettysburg.wav")

print(transcript.chapters)

print(transcript.sentiment_analysis)To see the full suite of models you can use through the AssemblyAI API, check out our website or docs, or use our Playground to try them directly in a no-code way.

Final Words

That's all it takes to perform Speech-to-Text in Python. If you’re interested in learning more about Machine Learning, feel free to check out some of the other articles on our blog, like

- RLHF vs RLAIF for language model alignment

- Introduction to Generative AI

- What is Residual Vector Quantization?

Alternatively, check out our YouTube channel or follow us on Twitter to stay in the loop when we drop new tutorials and guides.