Open Sourcing our Drone CI/CD CloudWatch Auto Scaler


At AssemblyAI, we use Drone as our primary CI/CD tool. It's dead simple to set up and operate, which frees us up to build out our product.

Being an AI company, we have a fair number of workloads that require a GPU. GPU instances are expensive, so in the past our Drone workflow for GPU-based tests looked like this:

    aws ec2 start-instances --instance-ids some-hardcoded-instance-id-with-an-elastic-ip
    ssh user@elastic-ip
    run test suite
    aws ec2 stop-instances --instance-ids hardcoded-instance-id

Some of our services, like our API, don't need a GPU. We run a small fleet of T class spot instances to handle their CI/CD workload. We call these instances the "standard" workers.

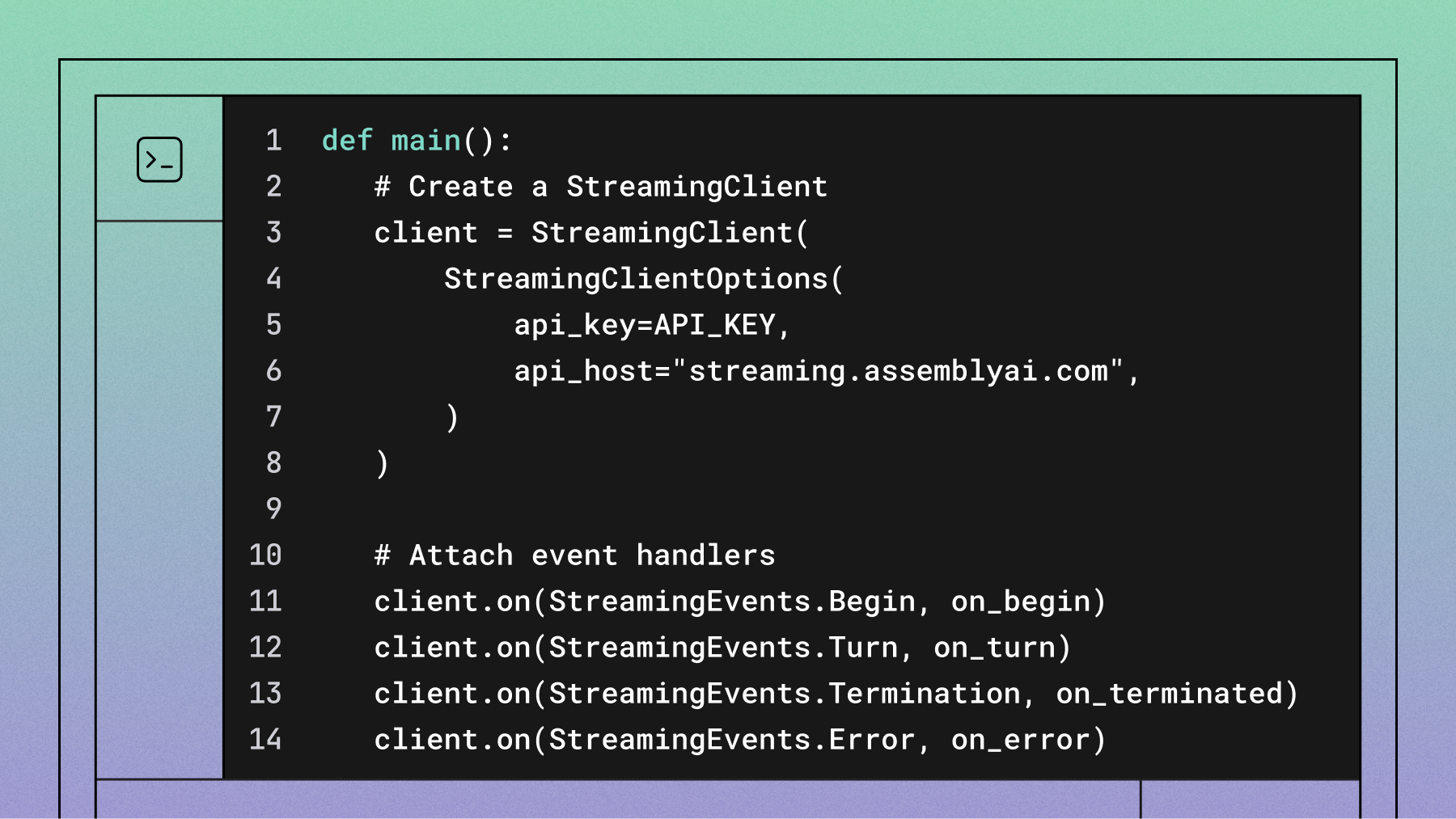

Recently we decided to figure out how to build a cost-effective, easily-scalable Drone worker fleet for our GPU instances. Our "standard" worker fleet is an EC2 autoscaling group that, prior to drone-queue-cloudwatch, didn't actually scale. The Launch Template's userdata for our "standard" workers tells new instances to retrieve a base64-encoded docker-compose file from Parameter Store, save it to the filesystem, then run docker-compose -f /path/to/file up -d. This has worked really well for us so far.
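The decode-and-start part of that userdata flow can be sketched in a few lines of Python. This is only an illustration under stated assumptions: our actual userdata is not shown here, the function names are hypothetical, and fetching the value from Parameter Store is left out so the example stays self-contained.

```python
import base64
from pathlib import Path
from typing import List


def write_compose_file(encoded: str, dest: Path) -> Path:
    """Decode a base64-encoded compose file and save it to dest.

    `encoded` stands in for the value read from Parameter Store
    (hypothetical: the fetch itself is not shown).
    """
    dest.parent.mkdir(parents=True, exist_ok=True)
    dest.write_bytes(base64.b64decode(encoded))
    return dest


def compose_up_command(compose_path: Path) -> List[str]:
    """Build the command that starts the worker containers detached.

    Note that -d is an option of the `up` subcommand, so it follows `up`.
    """
    return ["docker-compose", "-f", str(compose_path), "up", "-d"]
```

On a real instance, the returned command list could be handed to subprocess.run once the file is in place.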

First Steps

In order to move away from our SSH-based workflow, we needed to utilize Docker Runners.

We first looked at the Drone autoscaler. It's a very solid piece of software but didn't quite fit our needs. We didn't want to run another container if we could avoid it. In our case, we'd have to run an autoscaler container per worker "type" (GPU, non-GPU, etc). The Drone autoscaler also SSHs into instances it launches in order to configure them, which creates another layer of complexity.

Drone autoscaler runs in the background and polls the build API endpoint to see if it needs to add new instances. We decided that we could do something similar and tailor it to our use case.

Implementation

When deciding what metrics to scale on, we realized that scaling on CPU and/or memory wouldn't be effective. What if we had 20 builds queued up behind a few long-running, non-intense scripts? CPU and memory would stay low while the queue kept growing. The only effective metric is build queue depth. We also decided to publish metrics for running builds in order to control scaling, because we didn't want running builds to be interrupted by a scale-in event.
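To make the reasoning concrete, here is a minimal sketch of the decision those two metrics allow. It is one way to read the rule, not our production logic: in practice the decision is expressed as alarms and scaling policies rather than Python, and the function name and return values are hypothetical.

```python
def scaling_action(pending: int, running: int) -> str:
    """Pick a scaling direction for one worker group.

    pending: builds waiting in the queue for this group
    running: builds currently executing on this group
    """
    if pending > 0:
        # Work is waiting, so add capacity regardless of CPU or memory.
        return "scale_out"
    if running > 0:
        # Nothing is waiting, but removing a worker could kill a live build.
        return "hold"
    # The group is idle, so it is safe to remove capacity.
    return "scale_in"
```

The 20-queued-builds case above maps to `scaling_action(20, 3)`, which asks for more workers even though the existing ones look idle by CPU.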

One of our goals is to reduce our operational overhead. We already run our Drone workers in autoscaling groups, so we decided to send Drone build stats to CloudWatch and let CloudWatch handle scaling.

As for the polling process itself, Lambda was an easy choice. No managing instances or containers, no worrying about our instance becoming unhealthy, no OS patching, etc. All we had to do was set up an EventBridge cron to invoke our function every minute.

On the Drone side, we use Node Routing to define which worker group handles a given build. When drone-queue-cloudwatch polls the Drone API, it turns each build's node labels into CloudWatch metric dimensions. From there, it's pretty simple to set up scaling policies.

To reduce cost, we decided against publishing a metric for queues with no running or pending builds.
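A rough sketch of the label-to-dimension step follows. It is not the code from drone-queue-cloudwatch: the input shape (dicts with `status` and `labels` keys), the metric names, and the function name are assumptions made for illustration. The output dicts follow the MetricDatum layout that CloudWatch's PutMetricData accepts (MetricName, Dimensions, Value, Unit).

```python
from collections import Counter
from typing import Any, Dict, Iterable, List

# Hypothetical metric names, keyed by the build statuses we track.
METRIC_NAMES = {"pending": "PendingBuilds", "running": "RunningBuilds"}


def build_metric_data(stages: Iterable[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Count pending and running builds per unique set of node labels."""
    counts: Counter = Counter()
    for stage in stages:
        status = stage.get("status")
        if status not in METRIC_NAMES:
            continue  # finished or failed builds do not affect scaling
        # Sort the labels so the same label set always forms the same key.
        labels = tuple(sorted((stage.get("labels") or {}).items()))
        counts[(labels, status)] += 1

    # Only label sets seen above produce a datum, so a queue with no
    # pending or running builds publishes nothing.
    return [
        {
            "MetricName": METRIC_NAMES[status],
            "Dimensions": [{"Name": k, "Value": v} for k, v in labels],
            "Value": count,
            "Unit": "Count",
        }
        for (labels, status), count in sorted(counts.items())
    ]
```

Because each label set becomes its own set of dimensions, a GPU group and a standard group can each get their own alarm and scaling policy.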

Using the Tool

You can find the code open sourced here: https://github.com/AssemblyAI/drone-queue-cloudwatch

Once you have cloned the repo, you can run bash build/lambda.sh to build the binary and have it zipped up for you. Alternatively, you can access published artifacts in the aai-oss S3 bucket in us-west-2.

- To use a specific version of the code, use the drone-queue-cloudwatch/<commit sha>.zip object key
- To use the latest version, use the drone-queue-cloudwatch/latest.zip object key

There is example Terraform code in the terraform/ directory that you can reference for your own implementation.

Learnings

GPUs and docker-compose

In order for Docker Compose to access GPUs, your Docker daemon config must specify the default-runtime as nvidia. The following config works for us:

    {
      "default-runtime": "nvidia",
      "runtimes": {
        "nvidia": {
          "path": "nvidia-container-runtime",
          "runtimeArgs": []
        }
      }
    }
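If you bake this config into an image, a small pre-flight check can catch a missing default runtime before a GPU build fails. The sketch below is an assumption about how you might verify it, not something we ship, and the function name is hypothetical.

```python
import json


def uses_nvidia_default_runtime(daemon_config_text: str) -> bool:
    """Return True if the daemon config makes nvidia the default runtime.

    daemon_config_text: the raw JSON of the Docker daemon config
    (commonly /etc/docker/daemon.json on Linux).
    """
    config = json.loads(daemon_config_text)
    # The default must point at nvidia, and that runtime must be defined.
    return (
        config.get("default-runtime") == "nvidia"
        and "nvidia" in config.get("runtimes", {})
    )
```

Running this against the file contents at instance boot, and failing loudly when it returns False, keeps a misconfigured worker from picking up GPU builds.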

GitHub Actions

We like GitHub Actions for self-contained test suites. In fact, GitHub Actions performs the CI for drone-queue-cloudwatch. For tests that require authenticated access to resources, we prefer Drone. For example, any pipeline that must access AWS resources runs through Drone so that we can leverage IAM roles.

Shoutouts

Thank you to my friend Pablo Clemente Perez for evangelizing Drone and getting me excited to work with it!
