Not all diarization systems perform equally. Understanding key metrics helps you evaluate solutions and set realistic expectations for your application.

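Diarization Error Rate (DER) is the industry-standard metric for evaluating speaker diarization performance. It measures the percentage of time that's incorrectly attributed to speakers, combining three types of errors: false alarms (non-speech labeled as speech), missed speech (speech that goes undetected), and speaker confusion (speech attributed to the wrong speaker).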
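To make the metric concrete, here is a minimal sketch of the conventional DER calculation: the time lost to false alarms, missed speech, and speaker confusion, divided by the total reference speech time. The function and field names are illustrative assumptions, not taken from any specific evaluation toolkit, and the durations are made up.

```javascript
// Compute DER from error durations (all values in seconds).
// Field names are hypothetical; real toolkits report these under their own names.
function diarizationErrorRate({ falseAlarm, missedSpeech, speakerConfusion, totalSpeech }) {
  if (totalSpeech <= 0) {
    throw new Error("totalSpeech must be greater than zero");
  }
  return (falseAlarm + missedSpeech + speakerConfusion) / totalSpeech;
}

// Hypothetical call with 600 seconds of reference speech.
const der = diarizationErrorRate({
  falseAlarm: 12,
  missedSpeech: 18,
  speakerConfusion: 30,
  totalSpeech: 600,
});
console.log(`DER: ${(der * 100).toFixed(1)}%`); // DER: 10.0%
```

Because the denominator is reference speech time rather than total audio length, long silences do not dilute the score.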

Even systems with low DER can struggle to identify the total number of speakers in a conversation correctly. Speaker count accuracy becomes critical when processing thousands of calls daily, because small errors compound quickly at scale.
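As a rough illustration of how speaker count errors add up, the sketch below measures the share of recordings with the right speaker count and projects the number of miscounted recordings per day. The data shape, the 97% accuracy figure, and the daily volume are all hypothetical.

```javascript
// Fraction of recordings where the predicted speaker count matches the reference.
function speakerCountAccuracy(results) {
  if (results.length === 0) return 0;
  const correct = results.filter((r) => r.predicted === r.actual).length;
  return correct / results.length;
}

// Expected number of miscounted recordings per day at a given accuracy.
function expectedMiscounts(dailyVolume, accuracy) {
  return Math.round(dailyVolume * (1 - accuracy));
}

// Hypothetical evaluation set: one of four recordings is miscounted.
const sample = [
  { predicted: 2, actual: 2 },
  { predicted: 3, actual: 2 },
  { predicted: 2, actual: 2 },
  { predicted: 4, actual: 4 },
];
console.log(speakerCountAccuracy(sample)); // 0.75
console.log(expectedMiscounts(10000, 0.97)); // 300
```

Even at an assumed 97% accuracy, 10,000 calls a day would leave about 300 recordings with the wrong number of speakers.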

While modern diarization has made tremendous progress, several challenges remain that affect even advanced systems. Understanding these limitations helps set appropriate expectations and design robust applications.

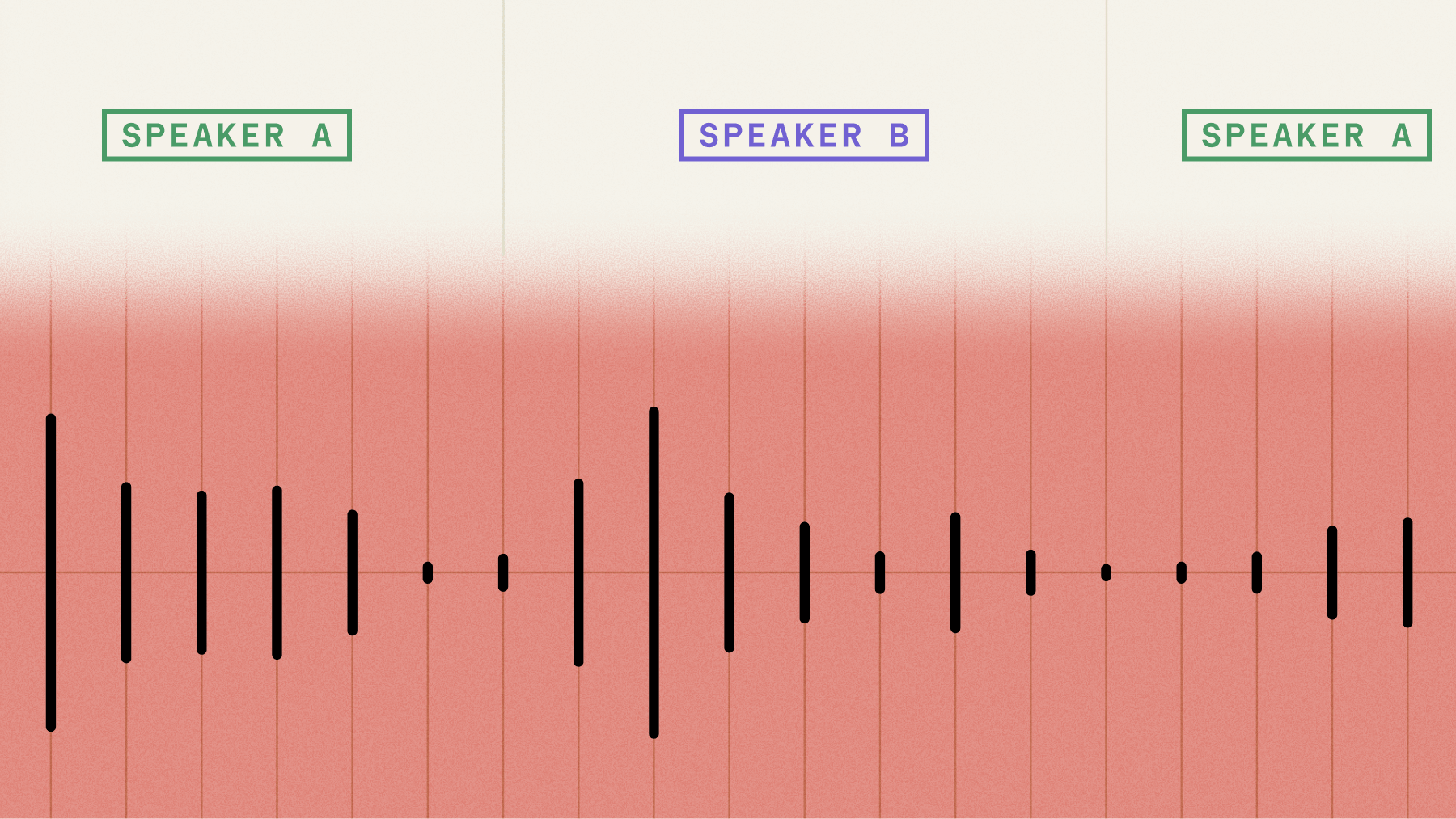

When multiple people speak simultaneously, diarization becomes significantly more complex. Natural conversations include interruptions that create overlapping segments. However, advanced models are getting better at detecting and labeling these overlaps; one recent study found that its model could still segment overlapping speech into distinct pieces.
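If your diarization output allows segments from different speakers to overlap in time, you can measure how much crosstalk a recording contains. The sketch below assumes a hypothetical list of segments with `speaker`, `start`, and `end` fields in milliseconds; it is not tied to any particular API response.

```javascript
// Find time ranges where segments from two different speakers overlap.
function findOverlaps(segments) {
  const sorted = [...segments].sort((a, b) => a.start - b.start);
  const overlaps = [];
  for (let i = 0; i < sorted.length; i++) {
    for (let j = i + 1; j < sorted.length; j++) {
      // Segments are sorted by start, so nothing later can overlap segment i.
      if (sorted[j].start >= sorted[i].end) break;
      if (sorted[j].speaker !== sorted[i].speaker) {
        overlaps.push({
          speakers: [sorted[i].speaker, sorted[j].speaker],
          start: sorted[j].start,
          end: Math.min(sorted[i].end, sorted[j].end),
        });
      }
    }
  }
  return overlaps;
}

// Hypothetical segments: speaker B interrupts speaker A one second early.
const segments = [
  { speaker: "A", start: 0, end: 5000 },
  { speaker: "B", start: 4000, end: 7000 },
  { speaker: "A", start: 7000, end: 9000 },
];
console.log(findOverlaps(segments));
```

Recordings with many or long overlaps are good candidates for manual spot checks during evaluation.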

Rapid back-and-forth conversation provides less audio data for the model to characterize each speaker. Brief interjections or single-word responses may be misattributed or missed entirely. Modern systems are improving: some report a 43% accuracy improvement on segments as short as 250 milliseconds, though very brief utterances remain difficult.
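One practical safeguard is to flag very short utterances for review rather than trusting their speaker labels blindly. The sketch below assumes each utterance carries `speaker`, `text`, and `start`/`end` timestamps in milliseconds; the 500 ms default threshold is an arbitrary assumption you should tune on your own audio.

```javascript
// Return utterances shorter than a minimum duration, with their durations attached.
function flagShortUtterances(utterances, minDurationMs = 500) {
  return utterances
    .filter((u) => u.end - u.start < minDurationMs)
    .map((u) => ({ speaker: u.speaker, text: u.text, durationMs: u.end - u.start }));
}

// Hypothetical utterances: the middle one is a 300 ms interjection.
const utterances = [
  { speaker: "A", text: "So we ship on Friday, right?", start: 0, end: 2100 },
  { speaker: "B", text: "Yep.", start: 2150, end: 2450 },
  { speaker: "A", text: "Great, I'll let the team know.", start: 2600, end: 4800 },
];
console.log(flagShortUtterances(utterances));
```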

Real-world audio rarely matches laboratory conditions. Background noise, echo, varying microphone quality, and distance from speakers all impact accuracy. While AI models train on diverse datasets to handle these conditions, extreme cases still pose challenges.

Similar Voices

Speakers with very similar voice characteristics—such as family members or people of similar age and background—can be difficult to distinguish. The system relies on subtle vocal patterns that may overlap significantly between similar speakers.

Active areas of research

The field of speaker diarization continues to advance rapidly, with researchers tackling fundamental challenges through innovative approaches.

- Neural network-based clustering techniques, for example UIS-RNN, which represent a shift toward learning-based clustering that adapts to specific conversation patterns

- Better handling of crosstalk when multiple speakers talk at the same time, for example through source separation

- Improving the ability to detect the number of speakers in the audio/video file when there are many speakers

- Improved handling of noisy audio files with high levels of background noise, music, or other channel disturbances

Recent advances focus on self-supervised learning approaches that require less labeled data, making it easier to improve performance for underrepresented languages and acoustic conditions. Researchers are also exploring multimodal diarization that combines audio with visual cues for video applications.

Speaker diarization use cases

Speaker diarization unlocks value across industries by adding structure and attribution to multi-speaker audio. Here's how different sectors apply this technology to solve real problems.

Telemedicine

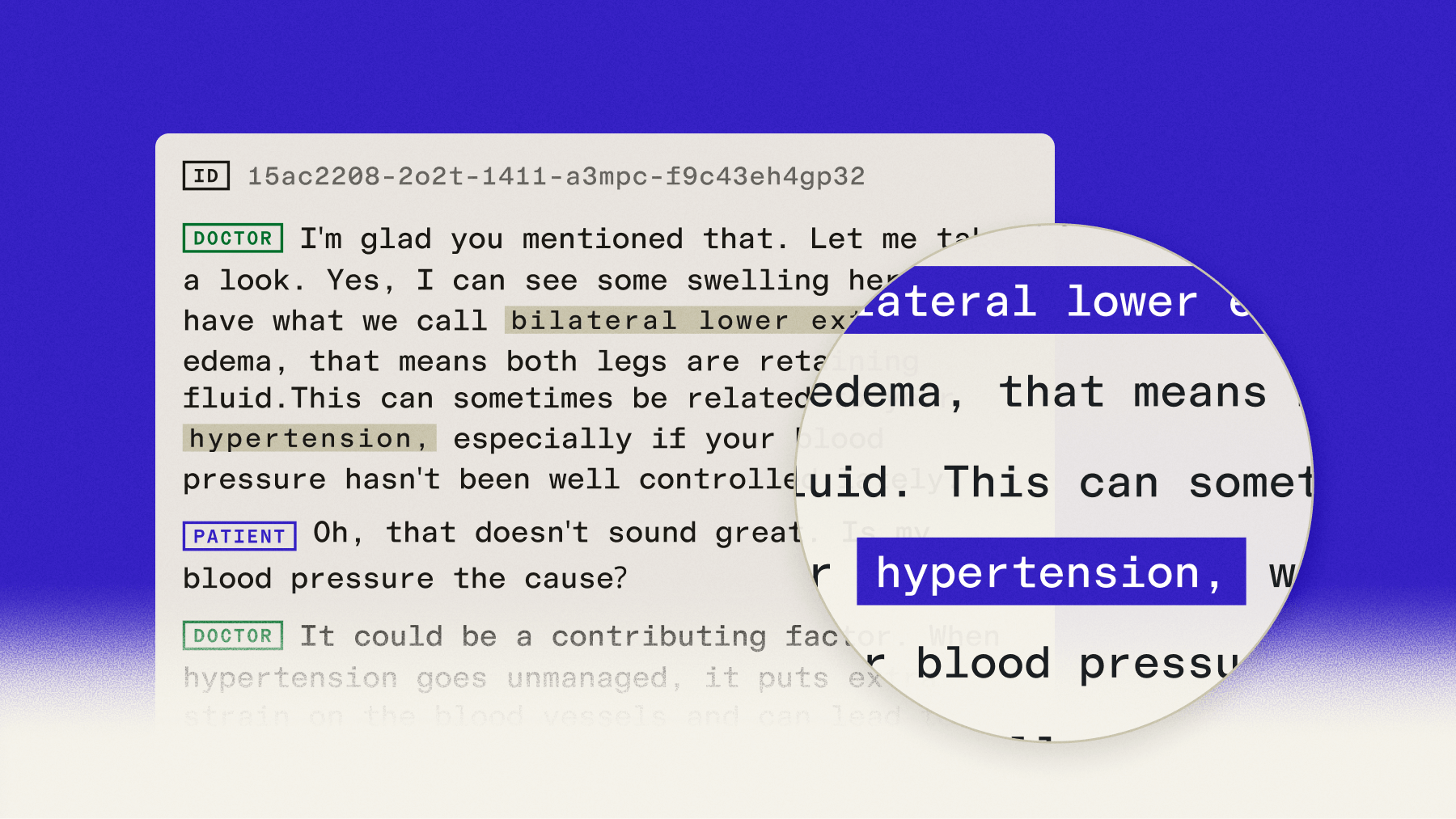

Automatically label both the <patient> and <doctor> on appointment recordings to make them more readable and useful. Importing into a healthcare ERP or database is simplified with better tagging/indexing and the ability to trigger actions like follow-up visits and prescription refills.

Conference Calls

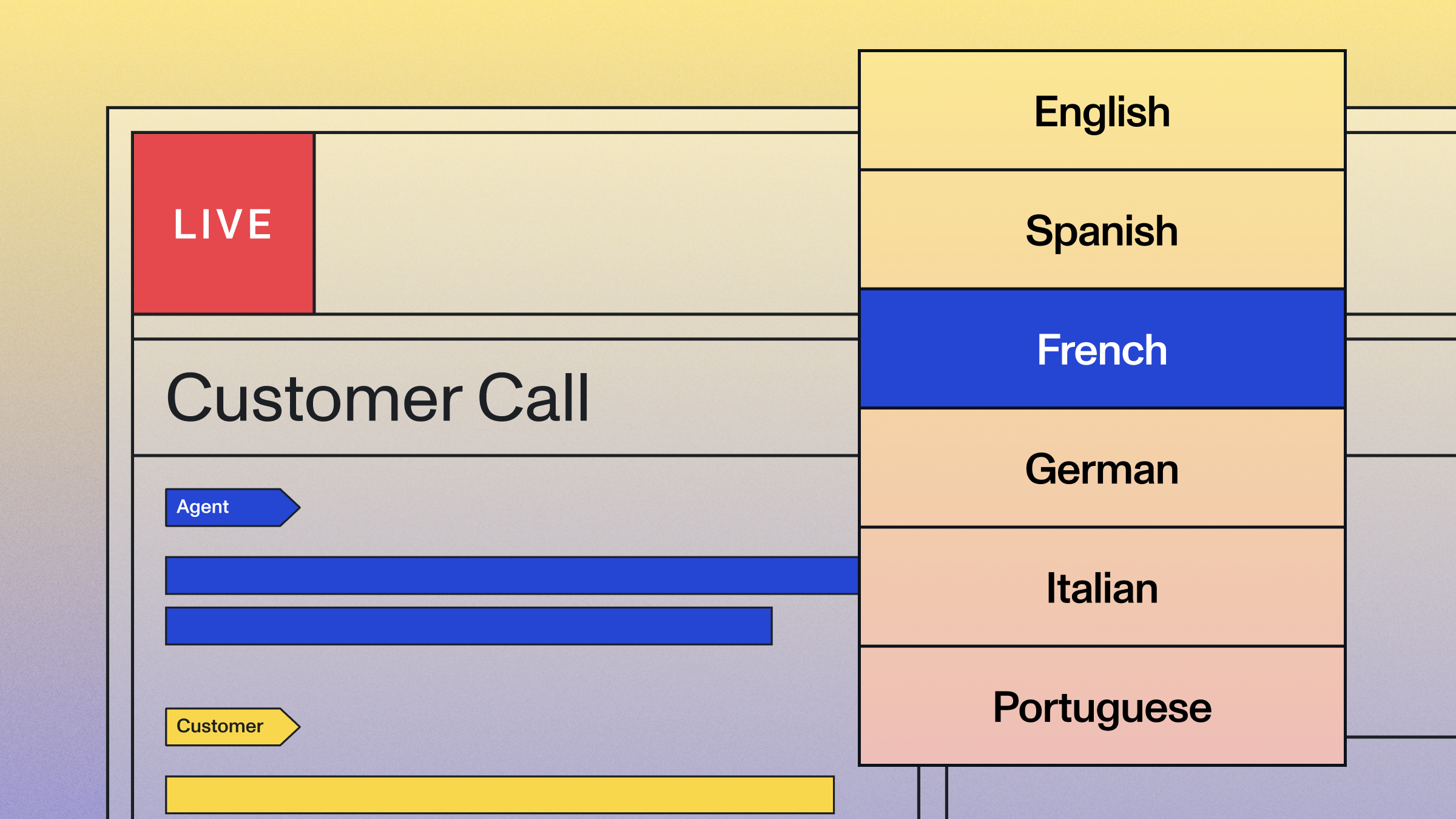

Automatically label multiple speakers on a conference call recording to make transcriptions more useful. This allows sales and support coaching platforms to analyze and display transcripts split by the <agent> and the <customer> in their interface, making search and navigation simpler. This can also help trigger actions like follow-ups, ticket status changes, and more.
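Diarization returns generic labels, so an application typically maps them to roles before display. The sketch below shows one way to do that, assuming you already know which label belongs to which role; the `roleMap` object and the sample utterances are hypothetical.

```javascript
// Attach a role to each utterance, falling back to the generic speaker label.
function labelByRole(utterances, roleMap) {
  return utterances.map((u) => ({
    ...u,
    role: roleMap[u.speaker] ?? `Speaker ${u.speaker}`,
  }));
}

// Hypothetical support call with two diarized speakers.
const utterances = [
  { speaker: "A", text: "Thanks for calling, how can I help?" },
  { speaker: "B", text: "My order hasn't arrived." },
];
const labeled = labelByRole(utterances, { A: "agent", B: "customer" });
for (const u of labeled) {
  console.log(`${u.role}: ${u.text}`);
}
```

Unmapped labels keep their generic name, so an unexpected third speaker still shows up in the transcript instead of being dropped.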

Podcast Hosting

Automatically label the podcast <host> and <guest> on a recording to bring transcripts to life without needing to listen to the audio or video. This is especially important to podcasters, as most files are recorded on a single mono channel and almost always include more than one speaker. Podcast hosting platforms can use transcripts to drive better SEO, improve search/navigation, and provide insights podcasters may not otherwise have access to.

Hiring Platforms

Automatically label the <recruiter> and <applicant> on a hiring interview recording. Customers of Applicant Tracking Systems rely heavily on phone and video calls to recruit applicants. Speaker diarization allows these platforms to separate recruiters' questions from applicants' responses without anyone having to listen to the audio or video. This can also help trigger actions like applicant follow-ups and moving applicants to the next stage in the hiring process.
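As a rough sketch of how a platform might split questions from responses, the function below groups diarized utterances into question and answer pairs. It assumes the recruiter's speaker label is already known and that every recruiter turn counts as a question, which is a simplification; the sample data is hypothetical.

```javascript
// Group utterances into { question, answer } pairs based on who is speaking.
function pairQuestionsWithAnswers(utterances, recruiterLabel) {
  const pairs = [];
  let current = null;
  for (const u of utterances) {
    if (u.speaker === recruiterLabel) {
      if (current && current.answer.length === 0) {
        // Consecutive recruiter turns extend the same question.
        current.question.push(u.text);
      } else {
        current = { question: [u.text], answer: [] };
        pairs.push(current);
      }
    } else if (current) {
      current.answer.push(u.text);
    }
  }
  return pairs.map((p) => ({
    question: p.question.join(" "),
    answer: p.answer.join(" "),
  }));
}

// Hypothetical interview excerpt where speaker A is the recruiter.
const interview = [
  { speaker: "A", text: "Tell me about yourself." },
  { speaker: "B", text: "I'm an audio engineer." },
  { speaker: "B", text: "I've worked on speech products for five years." },
  { speaker: "A", text: "Why this role?" },
  { speaker: "B", text: "I want to work on diarization." },
];
console.log(pairQuestionsWithAnswers(interview, "A"));
```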

Video Hosting

Automatically label multiple <speakers> on a video recording to make automated captions more useful. Video hosting platforms can now index files for better search, provide better accessibility and navigation for viewers, and create more useful content for SEO.

Broadcast Media

Automatically label multiple <guests> and <hosts> on broadcast radio or TV recordings for more precise search and analytics around keywords. Media monitoring platforms can now provide more insights to their customers by labeling which speaker mentioned their keyword (e.g. Coca-Cola). This also allows them to provide better indexing, search, and navigation for individual recording playback.
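A minimal sketch of keyword attribution, assuming diarized utterances with `speaker`, `text`, and a `start` timestamp in milliseconds: it returns every utterance that mentions a keyword, along with who said it and when. The matching is a simple case-insensitive substring search, and the sample data is hypothetical.

```javascript
// Find which speakers mentioned a keyword, and at what point in the recording.
function findKeywordMentions(utterances, keyword) {
  const needle = keyword.toLowerCase();
  return utterances
    .filter((u) => u.text.toLowerCase().includes(needle))
    .map((u) => ({ speaker: u.speaker, start: u.start, text: u.text }));
}

// Hypothetical broadcast excerpt.
const broadcast = [
  { speaker: "A", start: 0, text: "Welcome back to the show." },
  { speaker: "B", start: 3200, text: "I grew up drinking Coca-Cola every summer." },
  { speaker: "A", start: 7400, text: "Let's move on to the weather." },
];
console.log(findKeywordMentions(broadcast, "coca-cola"));
```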

How to enable speaker diarization with AssemblyAI

Enabling speaker diarization with AssemblyAI is straightforward: set speaker_labels to true in your transcription request. The JavaScript sample in this section calls the REST API directly with axios, handling the file upload, the transcription request, and polling for the result.

The code sample below shows how to transcribe an audio file with speaker diarization enabled. For more code samples and language options, including the Python SDK, see our documentation for Speaker Diarization.

    // Run as an ES module (for example, a .mjs file) so top-level await is available.
    import axios from "axios";
    import fs from "fs-extra";

    const baseUrl = "https://api.assemblyai.com";
    const headers = {
      authorization: "<YOUR_API_KEY>",
    };

    // Upload the local audio file and get back a URL the API can read.
    const path = "./audio/audio.mp3";
    const audioData = await fs.readFile(path);
    const uploadResponse = await axios.post(`${baseUrl}/v2/upload`, audioData, {
      headers,
    });
    const uploadUrl = uploadResponse.data.upload_url;

    // Request a transcript with speaker diarization enabled.
    const data = {
      audio_url: uploadUrl, // You can also use a URL to an audio or video file on the web
      speech_models: ["universal-3-pro", "universal-2"],
      language_detection: true,
      speaker_labels: true, // Turns on speaker diarization
    };
    const url = `${baseUrl}/v2/transcript`;
    const response = await axios.post(url, data, { headers });
    const transcriptId = response.data.id;
    const pollingEndpoint = `${baseUrl}/v2/transcript/${transcriptId}`;

    // Poll every 3 seconds until the transcript completes or fails.
    while (true) {
      const pollingResponse = await axios.get(pollingEndpoint, { headers });
      const transcriptionResult = pollingResponse.data;

      if (transcriptionResult.status === "completed") {
        // Each utterance is one uninterrupted turn by a single speaker.
        for (const utterance of transcriptionResult.utterances) {
          console.log(`Speaker ${utterance.speaker}: ${utterance.text}`);
        }
        break;
      } else if (transcriptionResult.status === "error") {
        throw new Error(`Transcription failed: ${transcriptionResult.error}`);
      } else {
        await new Promise((resolve) => setTimeout(resolve, 3000));
      }
    }
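Once you have the utterances, a common next step is to summarize them per speaker. The sketch below totals talk time for each speaker label, assuming each utterance includes `start` and `end` timestamps in milliseconds alongside `speaker`; the sample data is hypothetical and stands in for a completed transcript.

```javascript
// Sum the speaking time of each speaker, in milliseconds.
function talkTimeBySpeaker(utterances) {
  const totals = {};
  for (const u of utterances) {
    totals[u.speaker] = (totals[u.speaker] ?? 0) + (u.end - u.start);
  }
  return totals;
}

// Hypothetical utterances from a two-speaker recording.
const sampleUtterances = [
  { speaker: "A", start: 0, end: 4000, text: "Let's review the quarter." },
  { speaker: "B", start: 4200, end: 6200, text: "Sounds good." },
  { speaker: "A", start: 6500, end: 9500, text: "Revenue is up." },
];
console.log(talkTimeBySpeaker(sampleUtterances)); // { A: 7000, B: 2000 }
```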

Getting started with speaker diarization

Speaker diarization transforms raw audio into structured, analyzable conversations. Whether you're building the next AI meeting assistant, a call coaching platform, or a media analysis tool, accurate speaker labels are foundational for creating world-class products.

The technology has matured significantly. Modern AI models handle challenging acoustic conditions, multiple speakers, and natural conversation patterns that would have stumped earlier systems. Combined with advances in speech recognition, speaker diarization now enables fully automated conversation analysis at scale.

When evaluating solutions, test with your actual audio data. Benchmark performance isn't always indicative of real-world accuracy in your specific use case. Consider factors like audio quality, number of speakers, conversation style, and integration requirements.

For teams building Voice AI applications, the choice often comes down to build versus buy. Open-source tools offer flexibility but require significant expertise and infrastructure. Commercial APIs provide production-ready accuracy with minimal setup, letting you focus on your core application logic.

The best way to understand the impact of high-quality diarization is to see it in action on your own audio data. Try our API for free and experience how accurate speaker separation transforms conversation data into actionable insights.

Frequently asked questions about speaker diarization

What's the difference between speaker diarization and speaker recognition?

Speaker diarization labels voices generically (Speaker A, Speaker B) without identifying specific people. Speaker recognition matches voices to known individuals from a database of voiceprints.

Can speaker diarization identify speakers by name?

By default, speaker diarization provides generic labels like "Speaker A" and "Speaker B". However, you can use AssemblyAI's Speaker Identification feature to automatically replace these labels with actual names or roles (e.g., "John Smith" or "Agent"). This feature infers speaker identities from the conversation's context, eliminating the need for manual mapping or pre-enrolled voice samples.

How accurate is speaker diarization with poor audio quality?

Modern AI models handle background noise and compression well, but extreme conditions like heavy background music or very low bitrate recordings reduce accuracy. Test with your specific audio conditions for realistic expectations.

Does speaker diarization work in real-time?

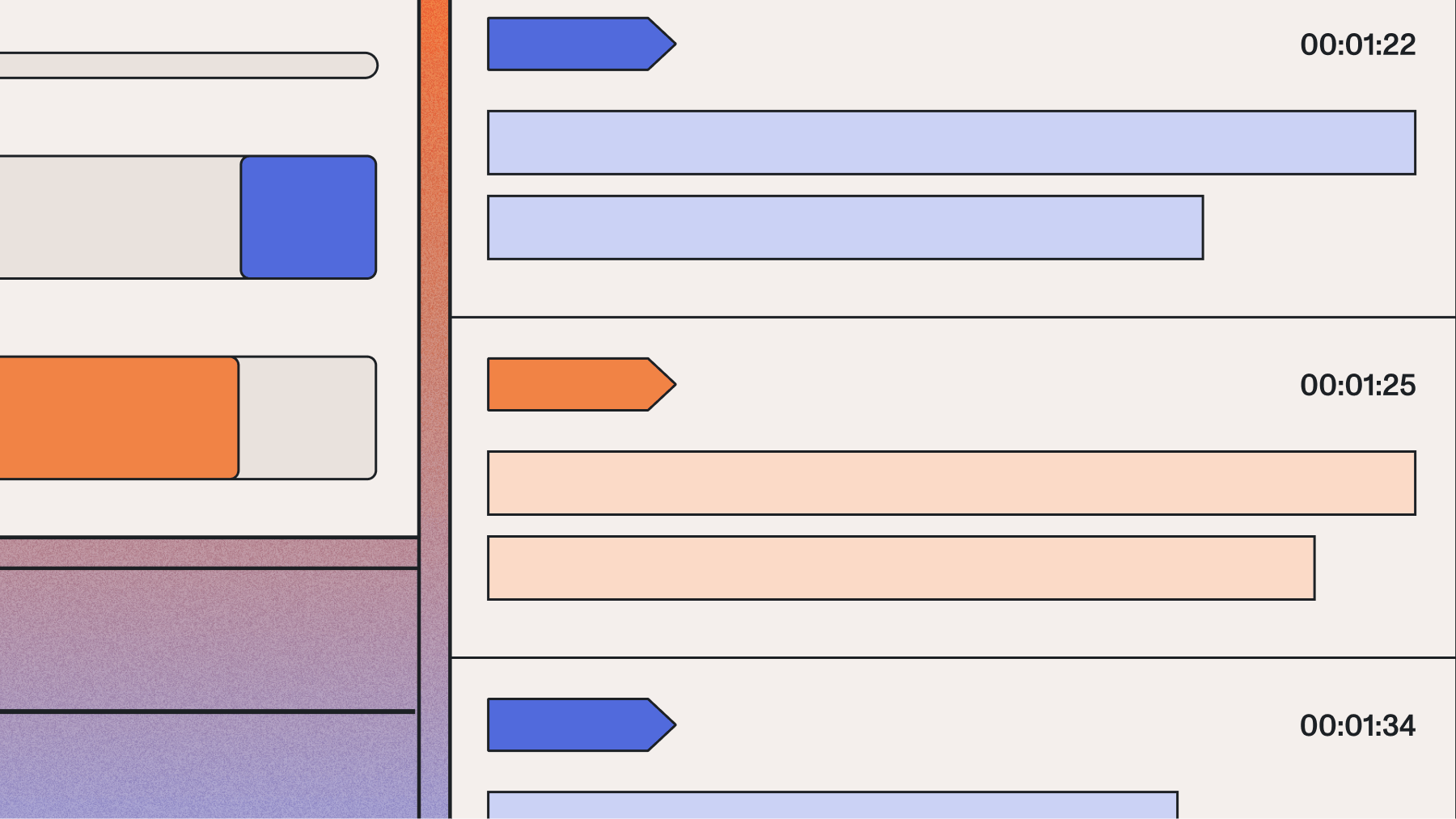

Yes, streaming diarization processes audio in real time as it's being recorded. This is essential for live applications like meeting transcription or call center coaching. Recent research on real-time systems found that processing time can be as low as 3% of the speech segment's duration, demonstrating high efficiency. However, offline diarization that processes complete recordings typically achieves higher accuracy because it has full context. Choose based on whether you need immediate results or maximum accuracy.
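The ratio of processing time to audio duration is often called the real-time factor. Here is a small sketch of the calculation, using made-up timings that match the 3% figure; anything below 1.0 means the system keeps up with live audio.

```javascript
// Real-time factor: processing time divided by audio duration (same units).
function realTimeFactor(processingSeconds, audioSeconds) {
  if (audioSeconds <= 0) {
    throw new Error("audioSeconds must be greater than zero");
  }
  return processingSeconds / audioSeconds;
}

// Hypothetical timing: 3 seconds of compute for 100 seconds of speech.
const rtf = realTimeFactor(3, 100);
console.log(rtf.toFixed(2)); // 0.03
console.log(rtf < 1 ? "keeps up with live audio" : "falls behind live audio");
```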

How many speakers can diarization systems handle?

Most systems perform well with 2-5 speakers and can handle up to 10-15 speakers with decreasing accuracy. Performance depends on voice distinctness, speaker change frequency, and audio quality.