Build an AI-powered video conferencing app with Next.js and Stream

Learn how to build a Next.js video conferencing app that supports video calls with live transcriptions and an LLM-powered meeting assistant.

In this tutorial, you’ll learn how to build a Next.js video conferencing app that supports video calls with live transcriptions and an LLM-powered meeting assistant.

By the end of this tutorial, you will have covered everything from creating the Next.js app to implementing video call functionality with the Stream Video SDK, adding real-time transcriptions, and integrating an LLM that can answer questions during the call.

Here’s a preview of the final app:


Project demo

Project requirements

To follow along with this tutorial, you will need the following:

- Node.js 18.17 or later to build with Next.js.

- Free Stream Account for the video calling functionality.

- AssemblyAI Account for the real-time transcription and LLM functionality.

Set up the project

To get started quickly, we provide a starter template with a ready-made Next.js project that already includes the setup process of the Stream React SDK. We'll explore the template in the next section after we're done with the initial setup.

If you’re also interested in following the video SDK setup process, you can check this tutorial.

Clone the starter project from GitHub:

git clone -b starter-project git@github.com:GetStream/assemblyai-transcription-llm-assistant.git

Then, go to the project folder and rename .env.template to .env and add your API keys:

    NEXT_PUBLIC_STREAM_API_KEY=
    STREAM_SECRET=
    ASSEMBLY_API_KEY=
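If one of these variables is missing, the failure only shows up later when an API route is called. As a small safeguard, the server-side code could check for the variables explicitly. The following is a minimal sketch under that assumption; `requireEnv` is a hypothetical helper and not part of the starter project:

```typescript
// Hypothetical helper (not part of the starter project): returns the value of
// an environment variable or throws a descriptive error if it is missing.
type Env = Record<string, string | undefined>;

function requireEnv(name: string, env: Env): string {
  const value = env[name];
  if (!value || value.trim() === '') {
    throw new Error(`Missing environment variable: ${name}`);
  }
  return value;
}

// Usage with a plain object standing in for process.env
const exampleEnv: Env = { ASSEMBLY_API_KEY: 'my-key' };
const assemblyKey = requireEnv('ASSEMBLY_API_KEY', exampleEnv);
```

In a Next.js route, you would pass `process.env` as the second argument.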

You can get free API keys for both Stream and AssemblyAI on their websites. You can find the AssemblyAI key in your dashboard. See the following video for how to get your Stream API key:


Get your Stream API key

Next, install all project dependencies with npm or yarn, and start the app:

    cd assemblyai-transcription-llm-assistant
    npm install # or yarn
    npm run dev # or yarn dev

Now, you can open and test the app on the given localhost port (usually 3000). You can already start a video call and talk to other people! Multiple people can now join your meeting by running the same app locally with the same callId (see next section). Of course, you could also host the app and send out the meeting link.

Let's briefly review the app architecture before implementing real-time transcription and LLM functionality.

The architecture of the app

Here’s a high-level overview of how the app works.

Folder structure and files

The application is a Next.js project bootstrapped with create-next-app. Refer to the Next.js docs for more details about the folder structure and files. The most relevant files are:

- app/page.tsx: Contains the entry point for the app page.

- app/api/token/route.tsx: Handles API requests to the Stream API, specifically the token generation for authentication.

- components/CallContainer.tsx: Handles UI components for the video call.

- components/CallLayout.tsx: Handles the layout and state changes in the app to adapt the UI.

- hooks/useVideoClient.ts: Sets up the Stream video client.

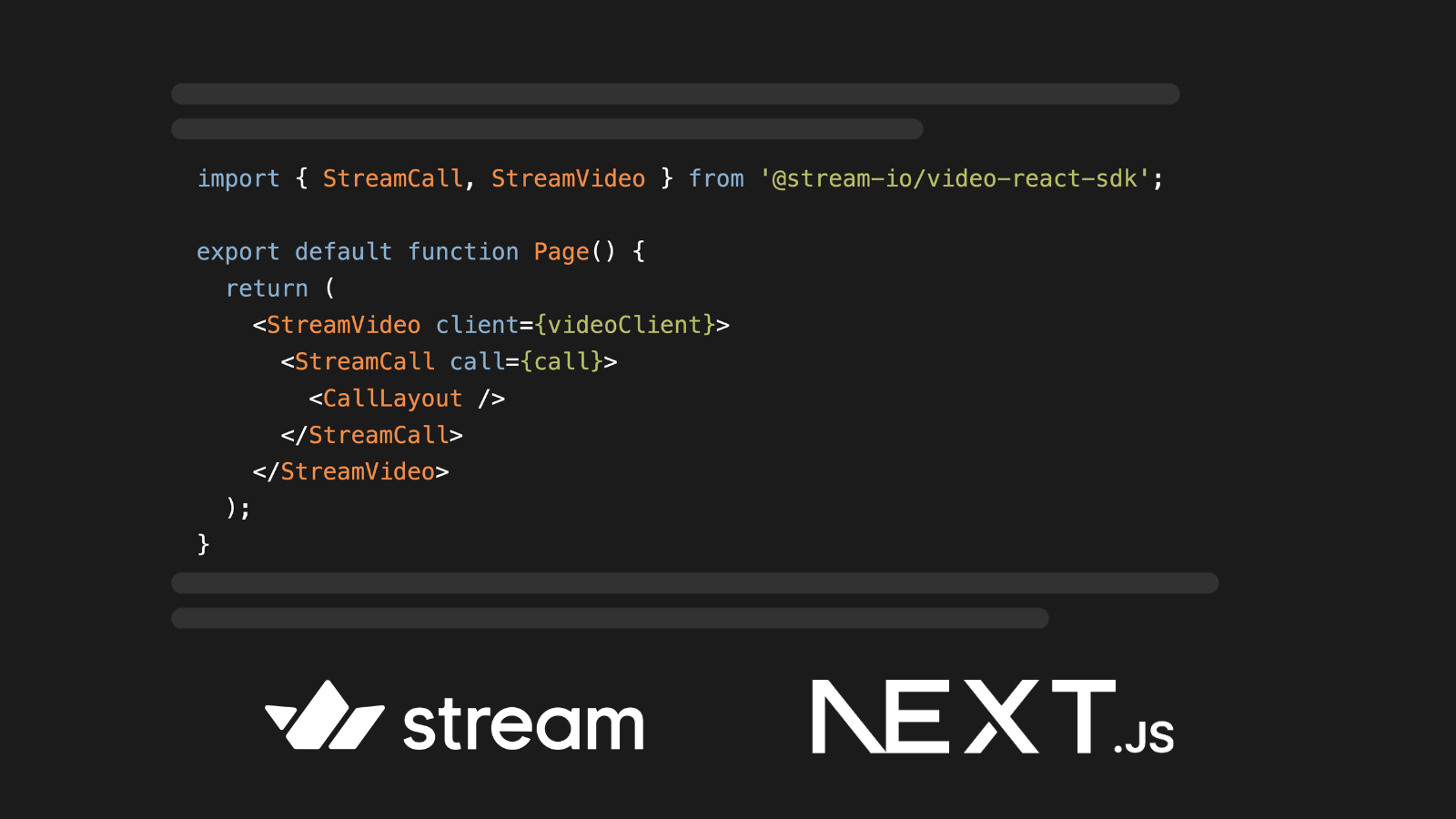

Video call functionality using Stream

The video conferencing functionality is implemented using the Stream React Video SDK, a comprehensive toolkit consisting of UI components, React hooks, and context providers that allow you to easily add video and audio calling, audio rooms, and live streaming to your app.

All calls are routed through Stream's global edge network, which ensures lower latency and higher reliability due to its proximity to end users.

The video call is started in components/CallContainer.tsx. You can edit the callId to change the meeting room. We recommend changing the callId below to your own unique ID.

    const callId = '123412341234';
    const callToCreate = videoClient?.call('default', callId);
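If you prefer not to hard-code the ID, you could generate one. The sketch below is an illustration and not part of the starter project: `generateCallId` is a hypothetical helper that builds a random ID from lowercase letters and digits. Keep in mind that all participants need the same callId, so a generated ID has to be shared, for example through the meeting link.

```typescript
// Hypothetical helper: creates a random call ID from lowercase letters and digits.
// Math.random() is not cryptographically secure, so treat the result as a
// convenience ID, not as a secret.
const CALL_ID_ALPHABET = 'abcdefghijklmnopqrstuvwxyz0123456789';

function generateCallId(length: number = 12): string {
  let id = '';
  for (let i = 0; i < length; i++) {
    const index = Math.floor(Math.random() * CALL_ID_ALPHABET.length);
    id += CALL_ID_ALPHABET[index];
  }
  return id;
}

const exampleCallId = generateCallId();
```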

Live transcription and LLM calls using AssemblyAI

For the real-time transcription and LLM calls, we’ll use the AssemblyAI Node SDK.

This functionality isn’t included in the starter project. So let’s write some code to add this!

Set up a microphone recorder

We need access to the raw microphone feed to transcribe what people are saying. This data is then fed into AssemblyAI’s Streaming Speech-to-Text service, and the returned text is displayed to the users in real-time.

There are multiple ways to do this using Stream’s SDK, e.g., using audio filters. In our case, we use a few utility functions and hooks from Stream. Specifically, we utilize Stream’s useMicrophoneState from their useCallStateHooks, which then gives us access to a MediaStream object.

First, create a helper function helpers/createMicrophone.ts that takes a MediaStream object as a parameter.

Inside createMicrophone, we create two inner functions startRecording and stopRecording. startRecording uses the AudioContext Interface to handle the recording. Here, we listen to new incoming audio data and append it to a buffer. If we have enough data, we can call the onAudioCallback and pass the data to the outside, where we can then forward it to the speech-to-text service.

stopRecording simply closes the connection and resets the buffer. Add the following code:

    import { mergeBuffers } from './mergeBuffers';

    export function createMicrophone(stream: MediaStream) {
      let audioWorkletNode: AudioWorkletNode;
      let audioContext: AudioContext;
      let source: MediaStreamAudioSourceNode;
      let audioBufferQueue = new Int16Array(0);

      return {
        async startRecording(onAudioCallback: any) {
          audioContext = new AudioContext({
            sampleRate: 16_000,
            latencyHint: 'balanced',
          });
          source = audioContext.createMediaStreamSource(stream);

          // Load the audio worklet that posts raw audio data to the main thread
          await audioContext.audioWorklet.addModule('audio-processor.js');
          audioWorkletNode = new AudioWorkletNode(audioContext, 'audio-processor');

          source.connect(audioWorkletNode);
          audioWorkletNode.connect(audioContext.destination);

          audioWorkletNode.port.onmessage = (event) => {
            const currentBuffer = new Int16Array(event.data.audio_data);
            audioBufferQueue = mergeBuffers(audioBufferQueue, currentBuffer);

            const bufferDuration =
              (audioBufferQueue.length / audioContext.sampleRate) * 1000;

            // wait until we have 100ms of audio data
            if (bufferDuration >= 100) {
              const totalSamples = Math.floor(audioContext.sampleRate * 0.1);

              // slice() copies the samples; subarray().buffer would expose the
              // whole queue, so the leftover samples would be sent twice
              const finalBuffer = new Uint8Array(
                audioBufferQueue.slice(0, totalSamples).buffer
              );

              audioBufferQueue = audioBufferQueue.subarray(totalSamples);
              if (onAudioCallback) onAudioCallback(finalBuffer);
            }
          };
        },
        stopRecording() {
          stream?.getTracks().forEach((track) => track.stop());
          audioContext?.close();
          audioBufferQueue = new Int16Array(0);
        },
      };
    }
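The code above loads an audio worklet from audio-processor.js and expects every message to carry 16-bit PCM samples in event.data.audio_data. The worklet file itself isn't shown in this tutorial. As an illustration only, the core of such a processor is typically a conversion from the 32-bit float samples of the Web Audio API (range -1 to 1) to 16-bit integers. The function name `floatTo16BitPCM` is hypothetical:

```typescript
// Sketch of the conversion an audio worklet would typically perform before
// posting audio data: Float32 samples in [-1, 1] become signed 16-bit integers.
function floatTo16BitPCM(input: Float32Array): Int16Array {
  const output = new Int16Array(input.length);
  for (let i = 0; i < input.length; i++) {
    // Clamp to [-1, 1] so clipped audio cannot overflow the int16 range
    const sample = Math.max(-1, Math.min(1, input[i]));
    // Scale the negative and positive halves to the full int16 range
    output[i] = sample < 0 ? sample * 0x8000 : sample * 0x7fff;
  }
  return output;
}

const pcmExample = floatTo16BitPCM(new Float32Array([0, 1, -1, 2]));
```

Inside a worklet, the resulting `buffer` would be sent to the main thread with `this.port.postMessage`.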

We also need a small helper function to merge buffers. With this function, we concatenate incoming audio data until we have 100ms of audio data. Create a file helpers/mergeBuffers.ts with the following code:

    export function mergeBuffers(lhs: Int16Array, rhs: Int16Array) {
      const mergedBuffer = new Int16Array(lhs.length + rhs.length);
      mergedBuffer.set(lhs, 0);
      mergedBuffer.set(rhs, lhs.length);
      return mergedBuffer;
    }
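To make the buffering arithmetic concrete: at a sample rate of 16,000 Hz, 100 ms of audio corresponds to 1,600 samples, or 3,200 bytes of 16-bit PCM. The following self-contained sketch shows the same idea as a pure function. `samplesForDuration` and `takeChunk` are hypothetical names used for illustration only:

```typescript
// Number of samples that make up `durationMs` of audio at `sampleRate`
function samplesForDuration(sampleRate: number, durationMs: number): number {
  return Math.floor((sampleRate * durationMs) / 1000);
}

// Splits a queue of samples into one chunk of `durationMs` and the remainder.
// The chunk is null if the queue does not hold enough audio yet.
function takeChunk(
  queue: Int16Array,
  sampleRate: number,
  durationMs: number
): { chunk: Uint8Array | null; rest: Int16Array } {
  const needed = samplesForDuration(sampleRate, durationMs);
  if (queue.length < needed) {
    return { chunk: null, rest: queue };
  }
  // slice() copies, so the chunk's buffer holds exactly `needed` samples
  const chunk = new Uint8Array(queue.slice(0, needed).buffer);
  return { chunk, rest: queue.slice(needed) };
}

// 13 worklet messages of 128 samples each give 1,664 queued samples
const chunkResult = takeChunk(new Int16Array(1664), 16_000, 100);
```

Here the chunk covers 1,600 samples and the remaining 64 samples stay in the queue for the next round.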

Now we can integrate the helper functions into our app. Add the following lines of code to components/CallLayout.tsx. Here, we access the media stream through useCallStateHooks and can later forward it to the createMicrophone function:

    export default function CallLayout(): JSX.Element {
      const { useCallCallingState, useParticipantCount, useMicrophoneState } =
        useCallStateHooks();
      const { mediaStream } = useMicrophoneState();

Implement real-time transcription

Now that we have access to the microphone feed, we can forward the data to AssemblyAI’s Streaming Speech-to-Text service and receive transcriptions in real time.

Add the assemblyai Node SDK to the project:

    npm install assemblyai
    # or
    yarn add assemblyai

Create an authentication token

To authenticate the client, we’ll use a temporary authentication token. Create a Next.js route in app/api/assemblyToken/route.ts that handles the API call.

Here, we create a token with the createTemporaryToken function. You can also determine how long the token should be valid by specifying the expires_in parameter in seconds.

    import { AssemblyAI } from 'assemblyai';

    export async function POST() {
      const apiKey = process.env.ASSEMBLY_API_KEY;
      if (!apiKey) {
        return Response.error();
      }

      const assemblyClient = new AssemblyAI({ apiKey: apiKey });

      // expires_in is given in seconds: 3_600 keeps the token valid for one hour
      const token = await assemblyClient.realtime.createTemporaryToken({
        expires_in: 3_600,
      });

      const response = {
        token: token,
      };

      return Response.json(response);
    }

Then, add a new file helpers/getAssemblyToken.ts and add a function that fetches a token:

    export async function getAssemblyToken(): Promise<string | undefined> {
      const response = await fetch('/api/assemblyToken', {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        cache: 'no-store',
      });

      const responseBody = await response.json();
      const token = responseBody.token;
      return token;
    }
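The function above trusts that the response body contains a token. If you want to be more defensive, you could validate the shape of the parsed JSON before using it. This is a minimal sketch under that assumption; `extractToken` is a hypothetical helper:

```typescript
// Hypothetical helper: returns the token only if the parsed JSON body
// has a non-empty string in its `token` field.
function extractToken(body: unknown): string | undefined {
  if (typeof body !== 'object' || body === null) {
    return undefined;
  }
  const token = (body as { token?: unknown }).token;
  return typeof token === 'string' && token.length > 0 ? token : undefined;
}

const validToken = extractToken({ token: 'abc' });
const missingToken = extractToken({ error: 'unauthorized' });
```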

Add a Real-Time transcriber

Now, we can set up an AssemblyAI RealtimeTranscriber object that authenticates with a token. Make sure that the sampleRate matches the sample rate of the microphone stream.

Additionally, create the transcriber.on('transcript') function, which is called when real-time transcripts are received. AssemblyAI sends two types of transcripts: Partial transcripts, which are returned immediately while the audio is being streamed, and Final transcripts, which are returned after a moment of silence and can be considered finished sentences. You can access the type through the transcript.message_type parameter.

For now, we simply pass both partial and final transcripts to the UI through the setTranscribedText dispatch, but later, we will handle the two cases differently.

Create a new file helpers/createTranscriber.ts and add the following code:

    import { Dispatch, SetStateAction } from 'react';
    import { RealtimeTranscriber, RealtimeTranscript } from 'assemblyai';
    import { getAssemblyToken } from './getAssemblyToken';

    export async function createTranscriber(
      setTranscribedText: Dispatch<SetStateAction<string>>
    ): Promise<RealtimeTranscriber | undefined> {
      const token = await getAssemblyToken();
      if (!token) {
        console.error('No token found');
        return;
      }

      const transcriber = new RealtimeTranscriber({
        sampleRate: 16_000,
        token: token,
        endUtteranceSilenceThreshold: 1000,
      });

      transcriber.on('transcript', (transcript: RealtimeTranscript) => {
        if (!transcript.text) {
          return;
        }

        if (transcript.message_type === 'PartialTranscript') {
          setTranscribedText(transcript.text);
        } else {
          // Final transcript
          setTranscribedText(transcript.text);
        }
      });

      return transcriber;
    }
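To illustrate how the two transcript types could be treated differently, here is a small sketch that keeps partial transcripts as the live caption and appends final transcripts to a history. The `TranscriptMessage` and `TranscriptState` types and the `applyTranscript` function are hypothetical and simplified. They only mirror the text and message_type fields used above, and the 'FinalTranscript' value is our assumption for the non-partial case:

```typescript
type TranscriptMessage = {
  message_type: 'PartialTranscript' | 'FinalTranscript';
  text: string;
};

type TranscriptState = {
  current: string; // text currently shown as the live caption
  history: string[]; // finished sentences received so far
};

// Pure update function: partial transcripts overwrite the caption,
// final transcripts are additionally stored in the history.
function applyTranscript(
  state: TranscriptState,
  message: TranscriptMessage
): TranscriptState {
  if (!message.text) {
    return state;
  }
  if (message.message_type === 'PartialTranscript') {
    return { ...state, current: message.text };
  }
  return { current: message.text, history: [...state.history, message.text] };
}

let transcriptState: TranscriptState = { current: '', history: [] };
transcriptState = applyTranscript(transcriptState, {
  message_type: 'PartialTranscript',
  text: 'hello every',
});
transcriptState = applyTranscript(transcriptState, {
  message_type: 'FinalTranscript',
  text: 'Hello everyone.',
});
```

A history like this could later serve as context for the LLM.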

Implement the LLM-powered AI assistant

To implement an LLM-powered assistant, we use AssemblyAI’s LeMUR. LeMUR is a framework that allows you to apply LLMs to spoken data and can be easily integrated through the assemblyai Node SDK.

Add a new route app/api/lemurRequest/route.ts that handles the LeMUR API call. Here, we initialize an AssemblyAI client with our API key, and can then call client.lemur.task and pass any prompt we want to the LLM.

We prepare a final prompt consisting of some instructions and the prompt from the user. As input_text we just leave some context to keep it simple, but here we could add the whole meeting transcript to provide more information for the model.

    import { AssemblyAI } from 'assemblyai';

    export async function POST(request: Request) {
      const apiKey = process.env.ASSEMBLY_API_KEY;
      if (!apiKey) {
        return Response.error();
      }

      const client = new AssemblyAI({ apiKey: apiKey });

      const body = await request.json();
      const prompt = body?.prompt;

      const finalPrompt = `You act as an assistant during a video call.
    You get a question and I want you to answer it directly without repeating it.
    If you do not know the answer, clearly state that.
    Here is the user question:
    ${prompt}`;

      const lemurResponse = await client.lemur.task({
        prompt: finalPrompt,
        // TODO: For now we just give some context, but here we could add the actual meeting text.
        input_text: 'This is a conversation during a video call.',
      });

      const response = {
        prompt: prompt,
        response: lemurResponse.response,
      };

      return Response.json(response);
    }
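As mentioned above, the input_text could carry the actual meeting transcript. One possible way to prepare it is to join the most recent finished sentences and cap the total length. The sketch below is only an illustration: `buildInputText` is a hypothetical helper, and the default limit of 4,000 characters is an arbitrary value, not a LeMUR requirement:

```typescript
// Hypothetical helper: joins the most recent sentences into one string
// without exceeding `maxChars` characters.
function buildInputText(sentences: string[], maxChars: number = 4000): string {
  const kept: string[] = [];
  let total = 0;
  // Walk backwards so the most recent sentences are kept first
  for (let i = sentences.length - 1; i >= 0; i--) {
    const sentence = sentences[i];
    // Account for the space that joins this sentence with the kept ones
    const cost = sentence.length + (kept.length > 0 ? 1 : 0);
    if (total + cost > maxChars) {
      break;
    }
    kept.unshift(sentence);
    total += cost;
  }
  return kept.join(' ');
}

const fullText = buildInputText(['Welcome.', 'Let us start.']);
const cappedText = buildInputText(['aaaa', 'bb', 'ccc'], 6);
```

With a limit of 6 characters, only the two most recent entries fit, so the oldest one is dropped.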

We also need to set up a trigger word for the assistant. In our example, we check the transcript for the word "llama," but this can be configured to be any other word.

Inside createTranscriber.ts we can react to transcriptions we receive from the AssemblyAI SDK. The transcriber.on('transcript') callback is the perfect place for this.

Here, we first check if the word is detected by simply testing if there is any occurrence of our trigger word inside the transcript.

If we receive a final transcript, we consider it a finished sentence with the user question, in which case we want to call our processPrompt function if the transcript contains the trigger word.

Here’s the code:

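    transcriber.on('transcript', (transcript: RealtimeTranscript) => {
      // setLlamaActive and processPrompt are passed to createTranscriber as additional parameters.
      // includes() also matches when the trigger word is the very first word.
      const containsTriggerWord = transcript.text.toLowerCase().includes('llama');

      // Detect if we're asking something for the LLM
      setLlamaActive(containsTriggerWord);

      if (transcript.message_type === 'PartialTranscript') {
        setTranscribedText(transcript.text);
      } else {
        // Final transcript: a finished sentence that may contain the question
        setTranscribedText(transcript.text);
        if (containsTriggerWord) {
          processPrompt(transcript.text);
        }
      }
    });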
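A plain substring check also fires on words that merely contain the trigger word, such as "llamas". If that matters for your use case, a word-boundary match is one alternative. The following sketch is a variation on the approach above, not the tutorial's implementation. `containsTrigger` and `extractQuestion` are hypothetical helpers, and they assume the trigger word contains no special regex characters:

```typescript
const TRIGGER_WORD = 'llama';

// True if the trigger word appears as a whole word, regardless of case
function containsTrigger(text: string, trigger: string = TRIGGER_WORD): boolean {
  const pattern = new RegExp(`\\b${trigger}\\b`, 'i');
  return pattern.test(text);
}

// Returns the text that follows the trigger word, or undefined if the
// trigger word does not occur.
function extractQuestion(
  text: string,
  trigger: string = TRIGGER_WORD
): string | undefined {
  // Also skip whitespace and punctuation directly after the trigger word
  const pattern = new RegExp(`\\b${trigger}\\b[\\s,.:!?]*`, 'i');
  const match = pattern.exec(text);
  if (!match) {
    return undefined;
  }
  return text.slice(match.index + match[0].length).trim();
}

const question = extractQuestion('Hey Llama, what is Next.js?');
```

Passing only the extracted question to the LLM keeps the trigger phrase out of the prompt.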

Show the live transcription and the meeting assistant in the UI

We have implemented all the necessary logic for both the transcription and the calls to LeMUR, including receiving answers. Now, we need to show this to the users. In this part, we will connect our functionality to the UI.

Starting with the transcriptions themselves, we first need to initialize our transcriber. We do this inside CallLayout.tsx which holds all the UI for the call using Stream’s built-in UI components.

Our transcriber serves different purposes, for which we will define helper functions. These purposes are:

- Tracking the transcribed text

- Checking whether a prompt for the LLM is currently being said

- Signaling when to process a prompt once the user has finished formulating the request

For the first, we need a state property for the transcribed text. The same is true for the second purpose, but here we need a boolean that indicates whether a prompt is currently being said.

We add two state properties at the top of the CallLayout element:

    const [transcribedText, setTranscribedText] = useState<string>('');
    const [llmActive, setLllmActive] = useState<boolean>(false);

When processing the prompt we have to be able to also display an answer somehow, so we create another state property for that:

const [llmResponse, setLlmResponse] = useState<string>('');

With that, we can define a function to process the prompt. We’ll wrap it inside a useCallback hook to prevent unnecessary re-renders. Inside, we first call the lemurRequest route we defined earlier. We then store the response using the setLlmResponse function so that we can display it to the user in a second.

However, we don’t want this to be shown on screen forever, so we add a timeout function (we’ve configured it to be called after 7 seconds, but that’s just an arbitrary number). After this time, we reset the response and the transcribed text and set the LLM to inactive.

Here’s the code:

    const processPrompt = useCallback(async (prompt: string) => {
      const response = await fetch('/api/lemurRequest', {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify({ prompt: prompt }),
      });

      const responseBody = await response.json();
      const lemurResponse = responseBody.response;
      setLlmResponse(lemurResponse);

      setTimeout(() => {
        setLlmResponse('');
        setLllmActive(false);
        setTranscribedText('');
      }, 7000);
    }, []);
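One detail to be aware of: if two prompts are processed within 7 seconds of each other, the timeout of the first prompt also clears the response of the second one. A possible way to handle this is to cancel the previous timer before starting a new one. The sketch below shows that idea in isolation. `createResettableTimeout` is a hypothetical helper, and in a React component you would likely keep the returned object in a ref:

```typescript
type TimerHandle = ReturnType<typeof setTimeout>;

// Hypothetical helper: a timeout that restarts from zero every time
// start() is called, so only the most recent call triggers the callback.
function createResettableTimeout(callback: () => void, delayMs: number) {
  let handle: TimerHandle | undefined;

  return {
    start(): void {
      // Cancel a timer that is still pending before scheduling a new one
      if (handle !== undefined) {
        clearTimeout(handle);
      }
      handle = setTimeout(() => {
        handle = undefined;
        callback();
      }, delayMs);
    },
    cancel(): void {
      if (handle !== undefined) {
        clearTimeout(handle);
      }
      handle = undefined;
    },
    isPending(): boolean {
      return handle !== undefined;
    },
  };
}

let resetCount = 0;
const hideResponseTimer = createResettableTimeout(() => {
  resetCount += 1;
}, 7000);
```

Calling `hideResponseTimer.start()` after every LLM response would then keep the latest answer visible for the full 7 seconds.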

With that, we have prepared everything we need to initialize our transcriber. We don’t want to initialize it right away. Instead, we add a button to the UI and call the initialization function only when it is clicked.

For this to work we need to have a reference to hold onto for the transcriber. But also, we need references for the microphone and whether transcription and LLM detection are active. So, let’s add these first at the top of our CallLayout component:

    const [robotActive, setRobotActive] = useState<boolean>(false);
    const [transcriber, setTranscriber] = useState<
      RealtimeTranscriber | undefined
    >(undefined);
    const [mic, setMic] = useState<
      | {
          startRecording(onAudioCallback: any): Promise<void>;
          stopRecording(): void;
        }
      | undefined
    >(undefined);

Now, we can define an initializeAssemblyAI function, again wrapped in a useCallback hook. Here, we first create a transcriber, and then connect it to make it active.

Then, we create a microphone and forward its recorded data to the transcriber using the transcriber's sendAudio function. Finally, we update the properties we just defined. Here’s the code:

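    const initializeAssemblyAI = useCallback(async () => {
      const transcriber = await createTranscriber(
        setTranscribedText,
        setLllmActive,
        processPrompt
      );

      // createTranscriber returns undefined if no token could be fetched
      if (!transcriber) {
        console.error('Transcriber is not created');
        return;
      }
      await transcriber.connect();

      // The media stream is undefined until the microphone is available
      if (!mediaStream) {
        console.error('No media stream found');
        return;
      }
      const mic = createMicrophone(mediaStream);
      mic.startRecording((audioData: any) => {
        transcriber.sendAudio(audioData);
      });
      setMic(mic);
      setTranscriber(transcriber);
    }, [mediaStream, processPrompt]);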

Next, we add the first piece of UI to the component. We start it off with a button to activate the transcription and LLM service (calling the initializeAssemblyAI function on tap). For fun, we added a robot image to be tapped (see here in the repo).

Add the following code after the <CallControls /> element inside the CallLayout component:

    <button className='ml-8' onClick={() => switchRobot(robotActive)}>
      <Image
        src={robotImage}
        width={50}
        height={50}
        alt='robot'
        className={`border-2 border-black dark:bg-white rounded-full transition-colors ease-in-out duration-200 ${
          robotActive ? 'bg-black animate-pulse' : ''
        }`}
      />
    </button>

We show a button with the image inside, and if the robotActive property is true, we add a pulsing animation to it.

For this to work, we need to add the switchRobot function. If the button is clicked while the transcriber is active, we clean it up and close the connection to the microphone. If the transcriber is not active, we initialize it.

The code for this is straightforward:

    async function switchRobot(isActive: boolean) {
      if (isActive) {
        mic?.stopRecording();
        await transcriber?.close(false);
        setMic(undefined);
        setTranscriber(undefined);
        setRobotActive(false);
      } else {
        await initializeAssemblyAI();
        console.log('Initialized Assembly AI');
        setRobotActive(true);
      }
    }

All that remains for us to do is add UI for both the transcription and the response we’re getting from our LLM. We’ve already embedded the <SpeakerLayout /> UI element in a container <div> that has the relative TailwindCSS property (equal to position: relative in native CSS).

We position all of the elements absolutely to overlay the video feed. We start with the LLM response. If we have a value for the llmResponse property, we show the text in a div with some styling attached, positioning it at the top right. Add this code next to the <SpeakerLayout> element inside the containing <div>:

    {llmResponse && (
      <div className='absolute mx-8 top-8 right-8 bg-white text-black p-4 rounded-lg shadow-md'>
        {llmResponse}
      </div>
    )}

We do a similar thing for the transcribedText but position it relative to the bottom:

    <div className='absolute flex items-center justify-center w-full bottom-2'>
      <h3 className='text-white text-center bg-black rounded-xl px-6 py-1'>
        {transcribedText}
      </h3>
    </div>

The final piece of UI indicates that we are currently listening to a query from the user. This is the case when the llmActive property is true, which happens when the user mentions our trigger word. We add a fun animation of a llama (see image here) that enters the field of view while we listen to a prompt and animates away once the prompt has finished processing.

Here’s the code for it, adding it next to the previous two elements:

    <div
      className={`absolute transition ease-in-out duration-300 bottom-1 right-4 ${
        llmActive
          ? 'translate-x-0 translate-y-0 opacity-100'
          : 'translate-x-60 translate-y-60 opacity-0'
      }`}
    >
      <Image
        src={llamaImage}
        width={200}
        height={200}
        alt='llama'
        className='relative'
      />
    </div>

With this, we have finished building up the UI for our application. We can now go and run the app to see the functionality for ourselves.

Final app

Now, you can run the app again and test all features:

npm run dev # or yarn dev

Click the robot icon in the bottom right corner to activate live transcriptions.

To activate the AI assistant, use the trigger word "Hey Llama" and then ask your question.

The app is now ready for deployment so other people can join your meetings. For example, you could easily deploy it to Vercel by following their documentation.

Learn more

We hope you enjoyed building this app! Now, you can build Next.js apps with video calling functionality and live transcriptions.

If you want to learn more about building with Stream’s SDKs to integrate video, audio, or chat messaging into your apps, check out the following resources:

If you want to learn more about how to analyze audio and video files with AI, check out more of our AssemblyAI blog, like this article on summarizing audio with LLMs in Node.js. Alternatively, feel free to check out our YouTube channel for educational videos on AI and AI-adjacent projects.
