Is Word Error Rate Useful?

What is Word Error Rate, and is it a useful measurement of accuracy for speech recognition systems? In this article, we answer both questions and explore alternatives to Word Error Rate.

What is Word Error Rate?

Word Error Rate is a measure of how accurately an Automatic Speech Recognition (ASR) system performs. Quite literally, it calculates how many “errors” are in the transcription text produced by an ASR system, when compared to a human transcription.

Broadly speaking, it’s important to measure the accuracy of any machine learning system. Whether it’s a self-driving car, an AI system like Amazon Alexa, or an Automatic Speech Recognition system like the ones we develop at AssemblyAI, if you don’t know how accurate your AI system is, you’re flying blind.

In the field of Automatic Speech Recognition, the Word Error Rate has become the de facto standard for measuring how accurate a speech recognition model is. A common question we get from customers is “What’s your WER?” In fact, when our company was accepted into Y Combinator back in 2017, one of the first questions the YC partners asked us was “What’s your WER?”

However, WER is far from the only metric to use when analyzing the accuracy and utility of a speech recognition system.

In this blog post, we’ll go over how WER is calculated and how useful it is for comparing speech recognition systems. We’ll then examine several other metrics and techniques to use alongside WER, and discuss how they fit together into a more holistic evaluation process.

How To Calculate Word Error Rate (WER)

The actual math behind calculating a Word Error Rate is the following:

WER = (S + D + I) / N

What we are doing here is adding up the number of Substitutions (S), Deletions (D), and Insertions (I), and dividing the total by the Number of Words (N) in the human transcription.

So for example, let’s say the following sentence is spoken:

"Hello there"

If our Automatic Speech Recognition (ASR) is not very good, and predicts the following transcription:

"Hello bear"

Then our Word Error Rate (WER) would be 50%. That’s because there was 1 Substitution (“there” was replaced with “bear”) out of 2 spoken words. Let’s say our ASR system instead only predicted:

"Hello"

And for some reason didn’t even predict a second word. In this case, our Word Error Rate (WER) would also be 50%. That’s because there is a single Deletion: only 1 word was predicted by our ASR system when 2 words were actually spoken.

The lower the Word Error Rate the better (all other things equal). You can think of word accuracy as 1 minus the Word Error Rate. So if your Word Error Rate is 20%, then your word accuracy, i.e. how accurate your transcription is, is 80%.
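To make the calculation concrete, here is a minimal Python sketch. It assumes the common approach of aligning the two transcriptions with a word-level edit distance (Levenshtein) and dividing the number of edits by the number of words in the human transcription. The function names `edit_counts` and `wer` are illustrative and not taken from any particular library, and no text normalization is applied.

```python
def edit_counts(reference, hypothesis):
    """Return (substitutions, deletions, insertions) for one minimal word alignment."""
    ref = reference.split()
    hyp = hypothesis.split()
    # dp[i][j] = (total_edits, subs, dels, ins) needed to turn ref[:i] into hyp[:j]
    dp = [[None] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    dp[0][0] = (0, 0, 0, 0)
    for i in range(1, len(ref) + 1):
        dp[i][0] = (i, 0, i, 0)  # every reference word deleted
    for j in range(1, len(hyp) + 1):
        dp[0][j] = (j, 0, 0, j)  # every hypothesis word inserted
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            if ref[i - 1] == hyp[j - 1]:
                dp[i][j] = dp[i - 1][j - 1]  # words match, no new edit
                continue
            t, s, d, n = dp[i - 1][j - 1]
            substitution = (t + 1, s + 1, d, n)
            t, s, d, n = dp[i - 1][j]
            deletion = (t + 1, s, d + 1, n)
            t, s, d, n = dp[i][j - 1]
            insertion = (t + 1, s, d, n + 1)
            # Tuples compare by total edit count first, so this keeps a cheapest path.
            dp[i][j] = min(substitution, deletion, insertion)
    _, subs, dels, ins = dp[-1][-1]
    return subs, dels, ins


def wer(reference, hypothesis):
    """Word Error Rate: (S + D + I) / N, with N counted on the reference."""
    n_words = len(reference.split())
    if n_words == 0:
        raise ValueError("reference must contain at least one word")
    return sum(edit_counts(reference, hypothesis)) / n_words


print(wer("Hello there", "Hello bear"))  # 0.5 (one substitution)
print(wer("Hello there", "Hello"))       # 0.5 (one deletion)
```

When several alignments have the same total number of edits, the split between substitutions, deletions, and insertions can differ, but the WER itself does not.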

Is Word Error Rate useful?

Is Word Error Rate actually a good measurement of how well a speech recognition system performs?

As with everything, it is not black and white. Overall, Word Error Rate can tell you how “different” the automatic transcription was compared to the human transcription, and generally, this is a reliable metric to determine how “good” an automatic transcription is.

For example, take the following:

Spoken text:

“Hi my name is Bob and I like cheese. Cheese is very good.”

Predicted text by model 1:

"Hi my frame is knob and I bike leafs. Cheese is berry wood"

WER: 46%

Predicted text by model 2:

"Hi my name is Bob and I bike cheese. Cheese is good."

WER: 15%

As we can see, model 2 has a lower WER of 15%, and is obviously way more accurate to us as humans than the predicted text from model 1. This is why, in general, WER is a good metric for determining the accuracy of an Automatic Speech Recognition system.

However, take the following example:

Spoken:

"I like to bike around"

Model 1 prediction:

"I liked to bike around"

WER: 20%

Model 2 prediction:

"I like to bike pound"

WER: 20%

In the above example, both Model 1 and Model 2 have a WER of 20%. But Model 1 clearly produces the more understandable transcription. Its error (“liked” instead of “like”) changes the tense but leaves the meaning intact, whereas Model 2’s error (“pound” instead of “around”) makes the sentence unintelligible (i.e., “word soup”).

To further illustrate the shortcomings of Word Error Rate, take the following example:

Spoken:

"My name is Paul and I am an engineer"

Model 1 prediction:

"My name is ball and I am an engineer"

WER: 11.11%

Model 2 prediction:

"My name is Paul and I'm an engineer"

WER: 22.22%

In this example, Model 2 does a much better job producing an understandable transcription, but it has double the WER compared to Model 1. This difference in WER is especially pronounced in this example because the text contains so few words, but still, this illustrates some “gotchas” to be aware of when reviewing WER.

What the above examples illustrate is that the Word Error Rate calculation is not “smart”. It is literally just counting the substitutions, deletions, and insertions that appear in the automatic transcription compared to the human transcription. This is why WER in the “real world” can be so problematic.

Take for example the simple mistake of not normalizing the casing in the human transcription and automatic transcription.

Human transcription:

"I live in New York"

Automatic transcription:

"i live in new york"

WER: 60%

In this example, the automatic transcription has a WER of 60% even though it perfectly transcribed what was spoken. Because the human transcription capitalizes “I”, “New”, and “York” while the automatic transcription is entirely lowercase, the WER algorithm sees these as three completely different words: 3 substitutions out of 5 words.

This is a major “gotcha” we see in the wild, and it’s why we internally always normalize our human transcriptions and automatic transcriptions when computing a WER, through things like:

- Lowercasing all text

- Removing all punctuation

- Changing all numbers to their written form ("7" -> "seven")

- Etc.

We discuss normalization in greater detail later in this article.
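The normalization steps listed above can be sketched in a few lines of Python. This is a simplified illustration rather than a production normalizer: `normalize` is an illustrative name, `NUMBER_WORDS` is a hypothetical lookup that only covers single digits, and `string.punctuation` only covers ASCII punctuation.

```python
import string

# Hypothetical, deliberately tiny lookup; a real normalizer handles far more number formats.
NUMBER_WORDS = {
    "0": "zero", "1": "one", "2": "two", "3": "three", "4": "four",
    "5": "five", "6": "six", "7": "seven", "8": "eight", "9": "nine",
}


def normalize(text):
    """Lowercase, strip ASCII punctuation, and spell out single digits."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return [NUMBER_WORDS.get(token, token) for token in text.split()]


reference = "I live in New York"
hypothesis = "i live in new york"

# Both transcriptions have the same number of words here, so a
# position-by-position comparison is enough to count the mismatches.
raw_errors = sum(r != h for r, h in zip(reference.split(), hypothesis.split()))
normalized_errors = sum(r != h for r, h in zip(normalize(reference), normalize(hypothesis)))

print(raw_errors, normalized_errors)  # 3 0
```

The important part is that the same function is applied to both the human transcription and the automatic transcription before they are compared.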

Are there any alternatives to Word Error Rate?

Word Error Rate is one of the best metrics we have today to determine the accuracy of an Automatic Speech Recognition system, considering the tradeoff between simplicity and how well it tracks human judgment. Some alternatives have been proposed, but none have stuck in the research or commercial communities. A common technique is to weight Substitutions, Insertions, and Deletions differently. For example, each Deletion might add 0.5 to the error count instead of 1.0.

However, unless the weights are standardized, it’s not really a “fair” way to compute WER. System 1 could be reporting a much lower WER because it used lower “weights” for Substitutions, for example, compared to System 2.
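A small sketch shows how much the reported number can move when only the weights change. The function `weighted_wer` and its parameter names are illustrative assumptions, not a standardized formula; with all weights left at 1.0 it reduces to the regular WER.

```python
def weighted_wer(substitutions, deletions, insertions, num_reference_words,
                 w_sub=1.0, w_del=1.0, w_ins=1.0):
    """Weighted variant of WER; every weight equal to 1.0 gives the standard WER."""
    if num_reference_words <= 0:
        raise ValueError("num_reference_words must be positive")
    weighted_errors = (w_sub * substitutions
                       + w_del * deletions
                       + w_ins * insertions)
    return weighted_errors / num_reference_words


# Same transcription errors: 1 substitution and 1 deletion over 9 reference words.
standard = weighted_wer(1, 1, 0, 9)
discounted = weighted_wer(1, 1, 0, 9, w_del=0.5)

print(f"{standard:.2%}")    # 22.22%
print(f"{discounted:.2%}")  # 16.67%
```

The transcription is identical in both cases. Only the scoring changed, which is why weighted scores from different systems are not comparable unless the weights are published and identical.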

While, for the time being, Word Error Rate is here to stay, there are some other important metrics and techniques to consider when comparing speech recognition systems that will provide a more holistic view.

Read also: Why evals in voice AI are so hard (and how to fix them)

Other metrics and techniques for comparing speech recognition systems

If you’re looking to truly compare and evaluate speech recognition systems, there are several other metrics you should consider in addition to WER. These metrics/techniques include:

- Proper noun evaluation

- Choosing the right dataset

- Normalizing

Proper noun evaluation helps evaluators better understand the model's performance on proper nouns specifically, which are disproportionately important to the meaning of language.

One measure used for this is the Jaro-Winkler distance, a string similarity measure that allows a model to get 'partial credit' for transcribing proper nouns as different but similar words. For example, if the name 'Sarah' is transcribed as 'Sara', then Jaro-Winkler will count this as partially correct, whereas WER will count this as completely incorrect.

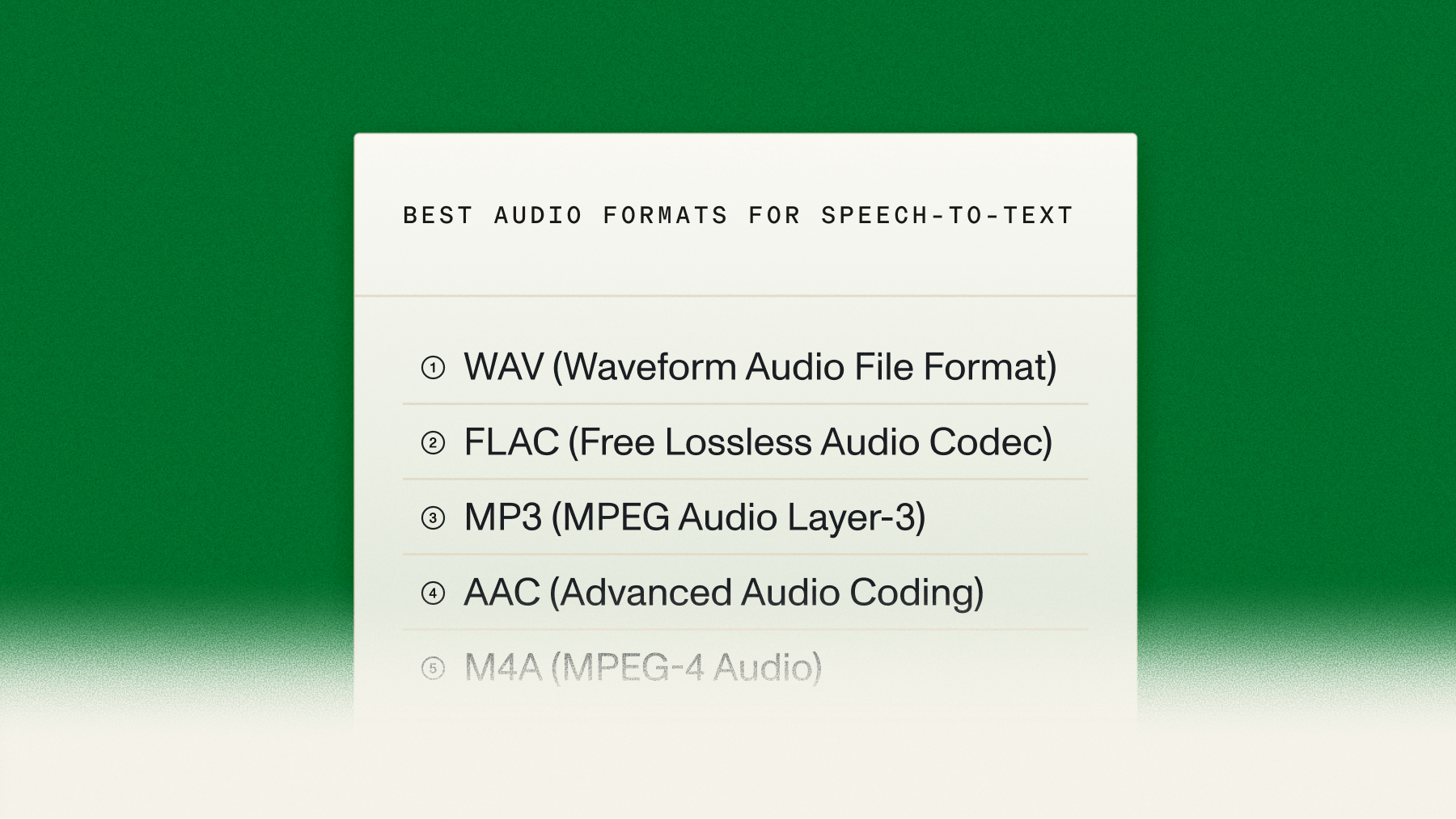
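For readers who want to see the 'partial credit' idea in code, below is a minimal from-scratch sketch of the Jaro-Winkler similarity (1.0 means identical, 0.0 means no similarity; the corresponding distance is often defined as 1 minus this value). It uses the conventional prefix scale of 0.1 and a maximum prefix length of 4. The function names are illustrative, and this is not necessarily how any given evaluation pipeline computes it.

```python
def jaro(s1, s2):
    """Jaro similarity between two strings, in the range [0, 1]."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters only count as matching if they are within this many positions.
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        start = max(0, i - window)
        end = min(len2, i + window + 1)
        for j in range(start, end):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Matched characters that appear in a different order count as half-transpositions.
    seq1 = [c for c, m in zip(s1, matched1) if m]
    seq2 = [c for c, m in zip(s2, matched2) if m]
    transpositions = sum(a != b for a, b in zip(seq1, seq2)) // 2
    return (matches / len1 + matches / len2
            + (matches - transpositions) / matches) / 3


def jaro_winkler(s1, s2, prefix_scale=0.1, max_prefix=4):
    """Jaro similarity with a bonus for a shared prefix of up to max_prefix characters."""
    base = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1, s2):
        if a != b or prefix == max_prefix:
            break
        prefix += 1
    return base + prefix * prefix_scale * (1 - base)


print(round(jaro_winkler("Sarah", "Sara"), 2))  # 0.96
```

A WER-style exact comparison would score 'Sarah' versus 'Sara' as a full error, while this similarity of about 0.96 reflects that the transcription is almost right.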

Choosing the right evaluation datasets is critically important to be confident in the evaluation results. It’s important to choose datasets that:

- Are relevant to the intended use case of the ASR model

- Simulate real-world conditions, either because the audio files already contain noise or because noise is added to them

Together, these considerations will ensure a more accurate comparison across models.
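As a rough illustration of adding noise, the sketch below mixes white Gaussian noise into a list of audio samples at a chosen signal-to-noise ratio (SNR). It is a simplified assumption of how this might be done: the function name `add_white_noise` is hypothetical, the audio is represented as a plain list of floats, and real evaluations often mix in recorded background noise rather than synthetic white noise.

```python
import math
import random


def add_white_noise(samples, snr_db, seed=None):
    """Return a copy of samples with Gaussian noise added at the given SNR in dB."""
    if not samples:
        raise ValueError("samples must not be empty")
    rng = random.Random(seed)
    signal_power = sum(x * x for x in samples) / len(samples)
    # SNR(dB) = 10 * log10(signal_power / noise_power), solved for noise_power.
    noise_power = signal_power / (10 ** (snr_db / 10))
    sigma = math.sqrt(noise_power)
    return [x + rng.gauss(0.0, sigma) for x in samples]


# One second of a 440 Hz tone sampled at 8 kHz stands in for clean speech audio.
sample_rate = 8000
clean = [math.sin(2 * math.pi * 440 * t / sample_rate) for t in range(sample_rate)]
noisy = add_white_noise(clean, snr_db=20, seed=0)
```

Lower `snr_db` values produce noisier audio, so running the same evaluation at several SNR levels gives a sense of how quickly each model degrades.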

Finally, we should also consider the process of normalization when comparing ASR systems. A normalizer takes in a model’s output and then “normalizes” it to allow for a better, more fair comparison. For example, the normalizer may standardize spellings, remove disfluencies, or even ignore irrelevant discrepancies. A consistent normalizer helps evaluators be more confident in the results of their comparison.
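A normalizer along these lines might look like the following sketch. The word lists are hypothetical placeholders chosen for illustration, and `normalize_tokens` is an illustrative name; a real normalizer would use much larger, carefully reviewed lists and rules.

```python
# Hypothetical filler words to drop before comparison.
DISFLUENCIES = {"um", "uh", "er", "hmm"}

# Hypothetical spelling variants mapped to a single standard form.
SPELLING_VARIANTS = {"colour": "color", "okay": "ok"}


def normalize_tokens(text):
    """Lowercase, trim surrounding punctuation, drop disfluencies, unify spellings."""
    tokens = [token.strip(".,!?;:") for token in text.lower().split()]
    return [
        SPELLING_VARIANTS.get(token, token)
        for token in tokens
        if token and token not in DISFLUENCIES
    ]


human = "I like the color blue."
model = "Um, I like the colour, uh, blue"

print(normalize_tokens(human) == normalize_tokens(model))  # True
```

Whatever rules are chosen, applying the same normalizer to every system's output and to the human transcription is what keeps the comparison consistent.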

This blog post goes into greater detail for each of the above metrics and techniques for those interested in using them during their comparison of speech recognition systems.

While WER is still an excellent starting point for comparison, the other metrics and techniques discussed in this article should always be considered in order to make a proper, fair evaluation of Automatic Speech Recognition systems.
