What is real-time speech to text?

Real-time speech to text converts spoken words into accurate text instantly for live captions, meeting transcription, and voice commands as you speak, with ongoing accessibility research exploring real-time devices to help people with hearing loss communicate.

Real-time speech-to-text converts spoken words into written text as you speak, delivering transcriptions within milliseconds rather than waiting for complete recordings to process. Part of a voice tech market projected to reach nearly $50 billion by 2029 according to market data, this streaming approach processes audio continuously in small chunks, enabling live captions on video calls, instant voice commands, and meeting notes that appear as conversations unfold.

Understanding how real-time transcription works becomes essential as voice interfaces and live collaboration tools reshape how we interact with technology. This article explains the core concepts behind streaming speech recognition, from audio capture and processing to speaker identification, while covering practical implementation options and performance considerations that determine success in real-world applications.

What is real-time speech-to-text

Real-time speech-to-text converts spoken words into written text as you speak, returning transcriptions within milliseconds instead of waiting for a complete recording to process. Unlike batch processing, which analyzes an entire recording at once, a real-time system processes audio continuously in small chunks.

- Real-time processing: Transcribes audio as it streams, delivering immediate results.

- Batch processing: Waits for complete recordings before starting transcription.

This technology powers live captions on Zoom calls, voice commands on smartphones, and meeting notes that appear as conversations unfold.

Key terminology:

- Streaming transcription: Processing audio in continuous small chunks rather than waiting for complete recordings

- Partial results: Initial transcription guesses that appear instantly and get refined as more audio arrives

- Latency: The delay between speaking and seeing text appear on screen

- Speaker diarization: Technology that identifies who is speaking when in multi-speaker conversations

- Word Error Rate (WER): Industry standard metric measuring the percentage of incorrectly transcribed words

How streaming speech recognition works

Streaming speech recognition transforms your voice into text through three connected steps that happen simultaneously. Each step builds on the previous one while processing continues in real-time.

Audio capture and streaming

Your microphone captures sound waves and immediately converts them into digital data. This happens continuously—not in a single burst like traditional recording. The system encodes this audio into small chunks, typically lasting 20–100 milliseconds each.

These audio chunks stream directly to the speech recognition service without waiting for silence or sentence breaks. It's like a constant flow of data rather than discrete files. The connection stays open throughout your conversation, creating a pipeline where audio flows up and text results flow back down.
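
The chunking step described above can be sketched in a few lines. This is a minimal illustration, assuming 16 kHz, 16-bit mono PCM and a 50 ms chunk; those values, and the helper names `chunk_size_bytes` and `iter_chunks`, are assumptions chosen for the example rather than anything a particular service requires.

```python
from typing import Iterator

# Assumed audio format for this sketch (not mandated by any provider).
SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2  # 16-bit PCM
CHANNELS = 1          # mono


def chunk_size_bytes(chunk_ms: int) -> int:
    """Number of bytes in one chunk of the given duration."""
    samples = SAMPLE_RATE_HZ * chunk_ms // 1000
    return samples * BYTES_PER_SAMPLE * CHANNELS


def iter_chunks(pcm: bytes, chunk_ms: int = 50) -> Iterator[bytes]:
    """Yield consecutive fixed-duration chunks; the last one may be shorter."""
    size = chunk_size_bytes(chunk_ms)
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]


if __name__ == "__main__":
    one_second = bytes(chunk_size_bytes(1000))  # one second of silence
    chunks = list(iter_chunks(one_second, chunk_ms=50))
    print(len(chunks), "chunks of", len(chunks[0]), "bytes")  # 20 chunks of 1600 bytes
```

In a live application the bytes would come from a microphone callback rather than a buffer in memory, and each chunk would be sent over the open connection as soon as it is produced.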

The quality of this initial capture affects everything downstream. As an industry report confirms, accuracy remains a challenge with poor audio quality and different accents. For this reason, clear audio from a good headset microphone produces much better results than a laptop's built-in mic picking up room noise.

Real-time processing and partial results

As each audio chunk arrives, the AI model starts working immediately. It doesn't wait to hear your complete sentence before making predictions about what you're saying. You'll see partial results appear almost instantly—maybe "The weather" followed by "The weather today" as you continue speaking.

These early guesses aren't final. The system refines them as more audio arrives and it understands more context. A partial result like "to" might change to "two" or "too" once the system hears the surrounding words.

The AI model balances speed with accuracy by making intelligent predictions based on:

- Sound patterns: What phonemes (basic speech sounds) it detects

- Language modeling: Which word sequences make sense in English

- Context awareness: What's been said earlier in the conversation

- Confidence scoring: How certain it is about each word
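
On the client side, the usual pattern is to keep finalized text separate from the current partial guess, so that each new partial replaces the previous one instead of being appended. The sketch below assumes each incoming message carries a text string and an `is_final` flag; that message shape and the `LiveTranscript` class are hypothetical and will differ between providers.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LiveTranscript:
    """Keeps finalized text separate from the still-changing partial."""
    finalized: List[str] = field(default_factory=list)
    partial: str = ""

    def update(self, text: str, is_final: bool) -> None:
        if is_final:
            # The segment is settled: store it and clear the working guess.
            self.finalized.append(text)
            self.partial = ""
        else:
            # A newer partial overwrites the previous guess.
            self.partial = text

    def render(self) -> str:
        parts = self.finalized + ([self.partial] if self.partial else [])
        return " ".join(parts)


if __name__ == "__main__":
    transcript = LiveTranscript()
    events = [
        ("The weather", False),
        ("The weather today", False),
        ("The weather today is sunny.", True),
        ("I need to", False),
        ("I need two tickets.", True),  # "to" refined to "two"
    ]
    for text, is_final in events:
        transcript.update(text, is_final)
        print(transcript.render())
```

Because the partial is replaced wholesale, a correction such as "to" becoming "two" shows up on screen without leaving the earlier guess behind.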

Speaker identification and timestamps

To identify who said what in real time, you can use the speaker_labels: true parameter with any of AssemblyAI's main streaming models, including u3-rt-pro, universal-streaming-english, and universal-streaming-multilingual. This enables speaker diarization directly on a single audio stream, allowing the model to distinguish between different speakers as they talk.

This means your meeting transcript shows who said what, not just a wall of mixed-up text from everyone talking.

For use cases where each speaker is already on a separate audio channel (multichannel audio), you can stream each channel independently. The system tracks each speaker through their dedicated audio channel, which provides highly reliable speaker separation.

Word-level timestamps accompany each piece of text, marking exactly when each word was spoken. This precision enables synchronized captions that match lip movement in videos or lets you jump to specific moments in long recordings.
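
To show how speaker labels and word-level timestamps combine into a readable transcript, here is a small sketch that groups consecutive words by speaker and stamps each turn with its start time. The word records (dicts with `text`, `start` in milliseconds, and `speaker`) are an assumed shape for illustration only, not the actual response format of any API.

```python
from itertools import groupby
from typing import Dict, List


def format_ms(ms: int) -> str:
    """Render a millisecond offset as MM:SS.mmm."""
    minutes, rem = divmod(ms, 60_000)
    seconds, millis = divmod(rem, 1000)
    return f"{minutes:02d}:{seconds:02d}.{millis:03d}"


def speaker_turns(words: List[Dict]) -> List[str]:
    """Merge consecutive words from the same speaker into one line per turn."""
    lines = []
    for speaker, group in groupby(words, key=lambda w: w["speaker"]):
        turn = list(group)
        text = " ".join(w["text"] for w in turn)
        lines.append(f"[{format_ms(turn[0]['start'])}] Speaker {speaker}: {text}")
    return lines


if __name__ == "__main__":
    words = [
        {"text": "Hello", "start": 0, "speaker": "A"},
        {"text": "there", "start": 450, "speaker": "A"},
        {"text": "Hi", "start": 61_250, "speaker": "B"},
    ]
    for line in speaker_turns(words):
        print(line)
    # [00:00.000] Speaker A: Hello there
    # [01:01.250] Speaker B: Hi
```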

Real-time vs batch processing trade-offs

Choosing between real-time and batch speech-to-text depends entirely on what you need to do. The core difference is simple: real-time processes audio as it's spoken, while batch processing waits for a complete audio file. This distinction creates important trade-offs in speed, accuracy, and implementation complexity.

Key architectural differences:

- Audio processing: Continuous chunks vs. complete files

- Context window: Limited future context vs. full recording context

- Latency priority: Speed over throughput vs. throughput over speed

Speed and latency

Real-time transcription prioritizes speed over throughput. It processes audio in continuous chunks, and according to industry benchmarks, typically returns partial results with latencies between 200-500 milliseconds.

- Low latency: Essential for interactive applications like voice commands and live captions.

- High throughput: Batch processing excels at transcribing hours of finished recordings efficiently.

Accuracy and context

Batch processing typically achieves higher accuracy because models analyze complete recordings with full context. Real-time models make predictions with limited future context but continuously refine results as more audio arrives.

- Context advantage: Batch systems distinguish "four" vs "for" by analyzing future words.

- Refinement advantage: Real-time systems update predictions as conversations progress.

For most applications, modern streaming accuracy is sufficient and the interactivity gain outweighs small accuracy trade-offs.

Use case alignment

Your application's needs will make the choice clear:

- Choose real-time for: Live captioning, meeting transcription for platforms like Circleback AI, voice-controlled devices, and real-time agent assist in call centers. If the user needs to see or interact with the text as it's being generated, you need real-time. Over 80% of professionals in a recent survey predict that this capability will be the most transformative in the coming year.

- Choose batch for: Transcribing recorded interviews, podcasts, video files, and call recordings for post-call analysis. If you have a finished audio file and just need the transcript, batch processing is more straightforward.

Common use cases and applications

Real-time speech-to-text serves different needs across various scenarios, each with specific requirements that shape how the technology gets implemented.

Live captions and accessibility

A systematic review found that adding text to video improves speech intelligibility, providing essential access not only for people who are deaf or hard of hearing but also for younger and older adults regardless of their hearing status. Instead of missing out on spoken content, viewers see synchronized text appearing as people speak.

This application demands high accuracy and proper formatting since, as research demonstrates, errors from automated speech recognition can be significant enough to interfere with meaning.

Key accessibility requirements:

- Universal benefit: Helps all users, not just those with hearing impairments

- Synchronization: Text must appear in real-time as speech occurs

- Accuracy threshold: Errors can interfere with meaning and comprehension

Major broadcasters use real-time transcription for live news, sports commentary, and unscripted events where traditional closed captions can't be prepared in advance. Video conferencing platforms like Zoom and Google Meet now integrate this natively, making meetings more inclusive.

The technology handles challenges like multiple speakers, background music, and varying audio quality. Professional captioning services often combine AI transcription with human oversight to ensure accuracy for critical applications.

Key requirements for live captions:

- Low latency: Text appears in under a second, with modern models delivering final, formatted text in under 300ms.

- Speaker identification: Clear labels for who's talking

- Proper formatting: Punctuation, capitalization, and line breaks

- Error correction: Ability to fix mistakes in real-time

Meeting transcription and collaboration

Real-time meeting transcription transforms how teams capture and use conversation data. Instead of assigning someone to take notes, participants focus on discussion while the system creates searchable, timestamped records automatically. This shift to automation is impactful; McKinsey research found that automated AI transcription can accelerate analysis time by nearly 400 percent.

These transcripts become living documents where teams can search for specific topics, extract action items, or review decisions made weeks ago. Integration with collaboration platforms means transcripts appear directly in Slack channels or Microsoft Teams, making information instantly accessible.

The real power comes from making spoken information searchable and shareable, a stark contrast to traditional methods where, as McKinsey analysis suggests, manual sampling may capture less than 2% of total interactions. With complete transcripts, you can find that product spec discussion from three meetings ago or share exact quotes from a client call without rewatching hours of recordings.

Voice assistants and commands

Voice assistants rely on real-time speech-to-text as their foundation technology. When you say "Hey Siri, set a timer for 10 minutes," the system must transcribe your words instantly to trigger the appropriate action.

This application demands the lowest latency possible—users expect near-instant responses to voice commands. Even delays of half a second feel sluggish and break the natural interaction flow.

Beyond consumer assistants, enterprise applications use voice commands for hands-free operation:

- Warehouse workers updating inventory while handling packages

- Surgeons accessing patient records during operations

- Factory technicians controlling machinery while their hands stay busy

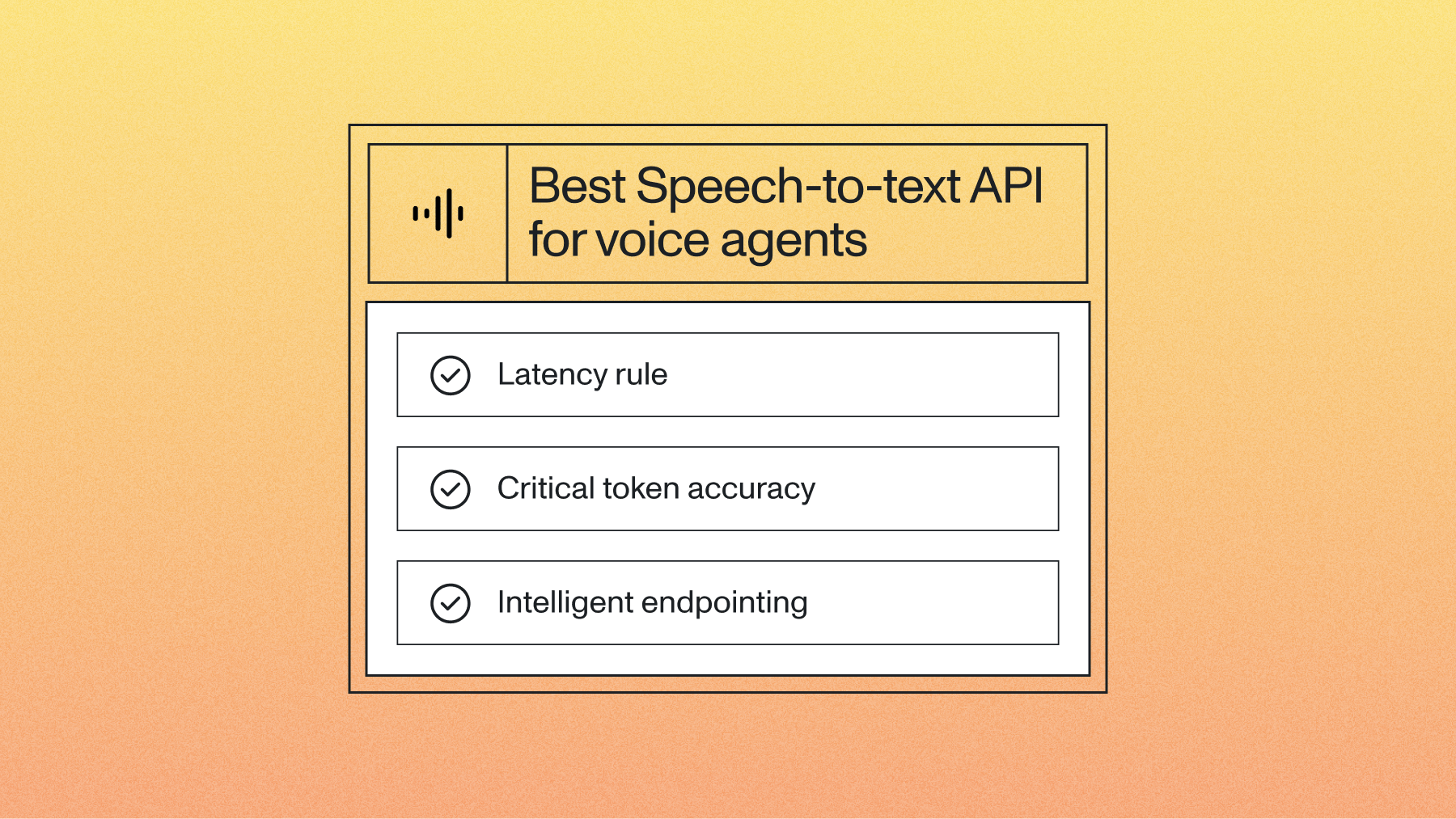

How to evaluate real-time speech-to-text accuracy and performance

Evaluating real-time speech-to-text requires measuring both accuracy and speed since live applications depend on immediate results.

Key evaluation criteria: transcription accuracy, response latency, real-world performance, and user experience quality.

Evaluation scenarios (simple to complex):

Basic scenario: Single speaker, quiet room, clear microphone

- Performance: Highest accuracy and lowest latency (under 300ms).

- Use case: Voice commands, dictation

Moderate scenario: Conference call, 3-4 speakers, some background noise

- Performance: High accuracy with slightly increased latency (300-500ms).

- Use case: Meeting transcription

Complex scenario: Noisy environment, overlapping speech, poor audio quality

- Performance: Accuracy is impacted by noise and crosstalk, with latency potentially exceeding 500ms.

- Use case: Contact center analysis

Word Error Rate (WER) varies significantly based on audio quality. For example, AssemblyAI's Universal-3 Pro Streaming model achieves a mean WER of 6.3% across a diverse set of academic and real-world datasets. For detailed performance metrics, see our Benchmarks page.

Word Error Rate (WER)

WER is the industry standard for measuring transcription accuracy. It counts the words that were substituted, inserted, or deleted in the transcript and divides that total by the number of words in the reference text.

Lower WER indicates better accuracy, but WER only measures final results, not real-time user experience during live transcription.
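
For reference, WER is conventionally computed as (substitutions + deletions + insertions) divided by the number of words in the reference transcript, using a word-level edit distance. The function below is a minimal sketch of that calculation; it only lowercases and splits on whitespace, whereas real evaluations usually also normalize punctuation and number formatting, so treat its output as illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # "today" -> "to" (substitution) plus "day" (insertion): 2 errors / 5 words
    print(word_error_rate("the weather today is sunny",
                          "the weather to day is sunny"))  # 0.4
```

Because insertions count as errors, WER can exceed 100% when the hypothesis contains many extra words.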

Latency metrics

For real-time systems, you need to measure speed. Two key metrics are:

- Time to First Token (TTFT): This measures the delay between when a word is spoken and when the first partial result for that word appears on screen. For a voice command interface, a low TTFT (typically under 300ms) is critical to make the system feel responsive. That target sits at the low end of the 200-500 millisecond range that industry benchmarks report for real-time transcription.

- Real-Time Factor (RTF): This measures processing speed. An RTF of 0.5 means the system can process 10 seconds of audio in just 5 seconds. A lower RTF indicates a faster, more efficient system.
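
Both metrics reduce to simple arithmetic once you have timestamps. The sketch below uses hypothetical helper names and assumes you record, in seconds, when a word was spoken and when its first partial result arrived; how you obtain those timestamps depends on your own instrumentation.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Processing time divided by audio duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def time_to_first_token_ms(spoken_at: float, first_partial_at: float) -> float:
    """Delay in milliseconds between speech and its first partial result."""
    return (first_partial_at - spoken_at) * 1000.0


if __name__ == "__main__":
    print(real_time_factor(5.0, 10.0))           # 0.5
    print(time_to_first_token_ms(12.0, 12.25))   # 250.0
```

A streaming system needs an RTF below 1.0 to keep up with live audio at all; otherwise the backlog, and therefore the latency, grows for as long as the speaker keeps talking.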

Testing in real-world conditions

AI models perform best on clean, studio-quality audio, but real users aren't in studios. In fact, an industry report identifies transcription quality, especially in noisy environments, as a primary pain point for teams implementing the technology.

Critical evaluation step: Test with audio reflecting your actual use case—noisy environments, overlapping speakers, or varied accents. This reveals true customer performance.

Implementation options and provider selection

You have two main paths for implementing real-time speech-to-text, each suited to different needs and technical capabilities.

Cloud service APIs

Cloud APIs offer the most flexibility for developers building custom applications. Services from Google Cloud, Microsoft Azure, AWS, and specialized providers like AssemblyAI provide robust APIs that handle the complexity of speech recognition infrastructure.

These services offer multiple model options optimized for different scenarios, such as conversation models for meetings, command models for voice interfaces, and phone call models for customer service applications. Customer service is a sector where one analysis found Voice AI can unlock 20-30% cost savings and improve customer service by over 10%.

Most support dozens of languages and provide additional features like speaker diarization.

Cloud API advantages:

- Specialized models: Optimized for specific use cases and audio conditions

- Multilingual support: Dozens of languages without additional setup

- Advanced features: Speaker identification, sentiment analysis, and more

Implementation requires handling API authentication, managing streaming connections, and processing results in your application. Most providers offer software development kits (SDKs) in popular programming languages that simplify integration.

Benefits of cloud APIs:

- Scalability: Handle thousands of simultaneous users

- Reliability: Enterprise-grade uptime and redundancy

- Features: Advanced capabilities like speaker identification

- Updates: Continuous model improvements without code changes

Dedicated transcription applications

Ready-to-use applications provide immediate access to real-time transcription without any development work. Apps like Otter.ai or browser-based tools like Speechnotes work out of the box for personal or small team use.

These applications excel at their specific use cases but offer limited customization. They're perfect for individual users needing quick transcription access or teams wanting to test transcription quality before committing to custom development.

Popular dedicated applications include:

- Live Transcribe on Android for accessibility

- Otter.ai for meeting transcription

- Speechnotes for browser-based dictation

Performance requirements and technical considerations

Latency and response time

Latency measures the delay between speaking and seeing text appear. For real-time applications, this delay needs to feel instantaneous—typically under 500 milliseconds for the first partial results.

Voice assistants need the fastest response times since users expect immediate feedback to commands. Meeting transcription can tolerate slightly longer delays if it means higher accuracy. Live captioning falls somewhere in between, prioritizing readability while maintaining reasonable speed.

Network conditions affect latency significantly. A strong internet connection keeps delays minimal, while poor connectivity can add several seconds of lag. Some systems buffer audio locally to maintain smooth operation during network hiccups.
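
One simple way to buffer audio locally is a bounded queue that holds unsent chunks while the connection is down and releases them once it recovers. The `AudioBuffer` class below is a hypothetical sketch of that idea, not a description of how any particular SDK does it. It drops the oldest chunk when full, which caps how far behind the transcript can fall at the cost of losing some speech.

```python
from collections import deque
from typing import List


class AudioBuffer:
    """Holds unsent audio chunks during a network interruption, up to a limit."""

    def __init__(self, max_chunks: int) -> None:
        # A deque with maxlen silently discards the oldest item when full.
        self._chunks = deque(maxlen=max_chunks)

    def push(self, chunk: bytes) -> None:
        self._chunks.append(chunk)

    def drain(self) -> List[bytes]:
        """Return buffered chunks oldest first and empty the buffer."""
        pending = list(self._chunks)
        self._chunks.clear()
        return pending


if __name__ == "__main__":
    buffer = AudioBuffer(max_chunks=3)
    for chunk in (b"1", b"2", b"3", b"4"):
        buffer.push(chunk)       # connection is down, so chunks accumulate
    print(buffer.drain())        # [b'2', b'3', b'4']
```

With 50 ms chunks, a limit of 100 chunks would hold about five seconds of audio. An application that cannot afford to lose speech might instead let the buffer grow and accept the extra lag.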

The processing happens in stages:

- Partial results: First guesses appear in 200–300 milliseconds

- Refined results: Updates arrive as more context becomes available

- Final results: With models like Universal-3 Pro Streaming, final, formatted results can appear in under 300 milliseconds.

Accuracy in real-world conditions

Real conversations rarely match the clean audio used in lab tests. Background noise, multiple people talking, strong accents, and technical jargon all challenge transcription accuracy. For instance, research demonstrates that off-the-shelf voice assistants achieved only 50% to 60% accuracy when transcribing sentences from speakers with dysarthria.

Modern systems achieve very high accuracy in good conditions but performance drops with challenging audio. The key is understanding these limitations and choosing systems that handle your specific environment well.

Common accuracy challenges include:

- Background noise: Coffee shops, busy offices, or outdoor environments

- Overlapping speech: Multiple people talking simultaneously

- Accent variation: Different regional or international pronunciations

- Technical vocabulary: Industry-specific terms or proper nouns

- Audio quality: Phone calls, poor microphones, or compressed audio

Systems handle these challenges through specialized training, noise suppression, and context-aware processing. Some providers offer custom models trained on specific industries or audio conditions.

Getting started with real-time speech-to-text

Real-time speech-to-text transforms how we interact with technology, turning spoken words into usable data the moment they're said. It's the engine behind the next generation of responsive, voice-aware applications.

The best way to understand the trade-offs between latency and accuracy is to see them for yourself. With a modern Voice AI API, you can build a simple application and start transcribing live audio in just a few minutes. For the highest accuracy and advanced features like prompting, our Universal-3 Pro Streaming model (u3-rt-pro) is the state-of-the-art choice. For other real-time use cases, our Universal-Streaming English and Universal-Streaming Multilingual models offer a powerful balance of speed and accuracy across a wide range of audio conditions. Try our API for free to see how quickly you can get text back from a live audio stream.

Frequently asked questions about real-time speech-to-text

How accurate is real-time speech-to-text with background noise?

Modern systems maintain good accuracy in moderate background noise through specialized noise suppression, though very noisy environments like construction sites still present challenges.

What happens when multiple people talk at the same time?

Advanced systems detect overlapping speech and identify speakers, though accuracy decreases when people speak simultaneously.

Can real-time speech-to-text work with phone call audio quality?

Yes, many providers offer models specifically optimized for phone audio that handle the compressed, limited-bandwidth conditions typical of phone calls. These models expect lower audio quality and compensate accordingly.

Which programming languages support real-time speech-to-text integration?

Most cloud providers offer SDKs for Python, JavaScript, Java, C#, and Go with WebSocket streaming support.

How much internet bandwidth does streaming transcription require?

Real-time transcription typically uses 50-100 Kbps for audio upload plus minimal bandwidth for text results. This is comparable to a voice call and works well on most broadband connections.
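
The exact figure depends on how the audio is encoded. As a rough check, the bitrate of uncompressed audio is the sample rate times the bits per sample times the number of channels. The helper name below is illustrative.

```python
def bitrate_kbps(sample_rate_hz: int, bits_per_sample: int, channels: int = 1) -> float:
    """Bitrate of uncompressed audio in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


if __name__ == "__main__":
    print(bitrate_kbps(8_000, 8))    # 64.0  (telephone-style 8 kHz, 8-bit audio)
    print(bitrate_kbps(16_000, 16))  # 256.0 (16 kHz, 16-bit mono PCM)
```

Telephone-style 8 kHz, 8-bit audio lands at 64 Kbps, inside the range quoted above. Uncompressed 16 kHz, 16-bit mono audio needs about 256 Kbps, so staying in that range at the higher quality implies a compressed format. Check which encodings your provider accepts before budgeting bandwidth.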
