What is speech to text? The complete guide

This complete guide to speech-to-text will walk you through everything you need to know about this technology, including: what it is, how it works, and why we need it.

Speech-to-text is an AI-powered technology that converts spoken language into written text, enabling applications from meeting transcription and live captioning to voice agents and automated documentation. As the field has grown exponentially, market projections show it's expected to reach a market volume of US$73 billion by 2031.

This complete guide covers everything you need to understand speech-to-text: how it works, key technologies, business applications, and implementation strategies. You'll learn to evaluate solutions and choose the right approach for your specific needs.

Whether you're a curious individual looking to boost productivity or a business leader seeking to innovate, speech-to-text can change the way you get things done in today's voice-first world.

What is speech-to-text technology?

Speech-to-text technology is an AI-powered system that automatically converts spoken words into written text with high accuracy. It processes audio input through speech recognition models, handles multiple speakers and languages, and delivers formatted transcriptions for business applications like meeting notes, customer service analysis, and content creation.

This technology combines linguistics, computer science, and AI to function effectively. Here's how modern speech-to-text models work:

- Audio Input: The system receives an audio signal, typically from a microphone or an audio file.

- Signal Processing: The audio is preprocessed for transcoding and audio gain normalization.

- Deep Learning Speech Recognition Model: The audio signal is fed into a speech recognition deep learning model trained on a large corpus of audio-transcription pairs, which generates the transcription of the input audio.

- Text formatting: The raw transcription generated by the speech recognition model is formatted for better readability. This includes adding punctuation, converting phrases like "one hundred dollars" to "$100," capitalizing proper nouns, and other enhancements.

Modern speech-to-text systems often use AI models (particularly deep learning neural networks) to improve their accuracy and adapt to different accents, languages, and speech patterns.

Types of speech-to-text engines

There are several types of speech-to-text engines to consider, each with its own advantages, disadvantages, and ideal use cases.

The right choice for you will depend on your needs for accuracy requirements, language support, integration capabilities, and data privacy concerns.

Cloud-based vs. on-premise

- Cloud-based: These systems process audio on remote servers, offering scalability and no infrastructure maintenance. They're ideal for businesses handling large volumes of data or requiring real-time transcription.

- On-premise / self-hosted: These systems run locally on the user's hardware and can function without internet connectivity. The cost is sometimes less than cloud-based, however, initial costs for hardware and ongoing costs of maintenance and support staff can negate these savings. Some providers, like AssemblyAI, now offer self-hosted deployment options that bring cloud-grade accuracy to on-premise environments without the infrastructure burden of building from scratch.

Open-source vs. proprietary

- Open-source: These engines allow users to view and sometimes modify and distribute the source code, though with specified limitations. They offer flexibility and customization options but may require more technical expertise to implement and maintain.

- Proprietary: Developed and maintained by specific companies, these systems can be tailor-made for specific use-cases, such as industry-relevant audio as we do. Look for proprietary engines that are also continuously updated.

Learn more about cloud-based security measures

Speech-to-text vs. related technologies

While people often use these terms interchangeably, speech-to-text, voice recognition, and speaker recognition are distinct technologies:

Think of speech-to-text as the foundational layer that provides the raw transcript for other technologies to analyze. When you tell Alexa to "play music," it first uses speech-to-text to convert your voice to text, then voice recognition to understand the command. Modern AI systems often combine these technologies for sophisticated Voice AI applications.

How does speech-to-text work?

Speech-to-text works by converting audio into a digital signal, running it through a deep learning model trained on millions of audio-text pairs, and then formatting the raw output into readable text. Modern speech-to-text systems process audio through three core stages, each affecting final accuracy:

1. Audio preprocessing

Before analysis begins, audio input must be converted into a format usable by speech recognition models. This preprocessing stage is critical for accuracy.

- Transcoding: Change the audio format to a standard form (See best audio file formats for speech-to-text).

- Normalization: Adjusting the volume to a standard level.

- Segmentation: Breaking the audio into manageable chunks.

2. Deep Learning speech recognition model

This stage maps audio signals to word sequences using end-to-end deep learning models. Modern systems use architectures like the Transformer and Conformer. AssemblyAI's production models, like Universal-2 and Universal-3 Pro, are highly optimized systems trained on massive datasets.

Universal-3 Pro recently shipped five major improvements: ~19% relative WER improvement on multilingual code-switching, ~5.9% WER improvement on verbatim/disfluency datasets, P50 turnaround time up to 30% faster (P99 up to 34% faster), 19% relative diarization improvement, and better timestamp accuracy. These gains compound—the model gets meaningfully better with each update cycle.

The model learns from large datasets of audio-text pairs, acquiring implicit knowledge of pronunciation and sentence structure. It generates likelihood scores for each word in short time frames, then a decoder produces the most probable word sequence.

3. Text formatting

Raw model output lacks punctuation, capitalization, and proper formatting for entities like emails and URLs. The final step applies inverse text normalization, capitalization, and true-casing using rule-based algorithms or text processing neural networks. This transforms raw transcription into readable, professional text.

Factors affecting speech-to-text accuracy

Speech-to-text accuracy depends on multiple factors across three main categories:

Technical factors

- Audio quality: Clear input with minimal background noise

- File format: Uncompressed formats perform better than heavily compressed audio

- Recording setup: Proximity to microphone and acoustic environment

Speaker factors

- Speaking style: Clear enunciation versus rapid or mumbled speech

- Accents and dialects: Variations from training data patterns

- Vocabulary: Common words versus technical jargon or proper nouns

Contextual factors

- Multiple speakers: Overlapping voices or quick speaker changes

- Language mixing: Code-switching between languages mid-conversation

- Background context: Meeting versus phone call versus presentation

Independent benchmarks help quantify these factors. Hamming.ai's analysis across 4M+ production calls shows Universal-3 Pro Streaming at 307ms P50 latency and 8.14% word error rate—compared to Deepgram Nova-3's 516ms P50 and 9.87% WER. These real-world numbers matter more than lab benchmarks because production audio includes all the noise, accents, and overlapping speech that controlled tests filter out.

Speech-to-text for voice agents

Voice agents—AI systems that hold real-time conversations with humans over phone or web—depend on speech-to-text as the first and most critical link in their processing pipeline. If the transcription is wrong, everything downstream falls apart: the LLM misinterprets the request, and the response makes no sense.

That's why voice agents can't use standard batch transcription. They need streaming speech-to-text—models that transcribe audio in real time as the user speaks, word by word, with latency measured in milliseconds rather than seconds. Batch processing, where you upload a file and wait for results, simply doesn't work when a caller expects an immediate reply.

The multi-provider challenge

Building a voice agent typically means stitching together three separate services: a speech-to-text provider for transcription, an LLM provider for understanding and generating responses, and a text-to-speech provider for speaking the answer back. That's three SDKs to integrate, three billing dashboards to manage, three sets of logs to debug when something goes wrong, and three points of failure that can introduce latency.

This complexity is a real barrier. Developers spend weeks on integration plumbing instead of building the actual agent experience.

What voice agents require from speech-to-text

Not every speech-to-text model is built for conversational AI. Voice agents have specific requirements that go beyond raw transcription accuracy:

- Low latency (~1 second end-to-end): Users expect near-instant responses. Every extra 100ms of delay makes the conversation feel unnatural.

- Turn detection: The model needs to recognize when a speaker has finished their thought—not just when they pause to breathe.

- Interruption handling: Real conversations involve interruptions. The system has to detect when a user cuts in and immediately stop the current response.

- Entity accuracy: Voice agents in healthcare, finance, and customer service handle names, account numbers, medical terms, and other high-stakes vocabulary. Getting "Lipitor" wrong isn't a minor transcription error—it's a safety issue. In head-to-head comparisons, Universal-3 Pro delivers 92.7% mixed-entity accuracy on these critical tokens—confirmation codes, phone numbers, proper nouns—that voice agents need to act on correctly.

The unified API approach

AssemblyAI's Voice Agent API takes a different approach to the multi-provider problem. Instead of requiring developers to wire together separate services, it provides a single WebSocket API that handles the full voice agent pipeline—speech-to-text, LLM reasoning, and text-to-speech—through one connection.

The speech-to-text foundation is Universal-3 Pro Streaming, which ranks #1 on the Hugging Face Open ASR Leaderboard. A flat rate of $4.50/hr covers the entire pipeline, replacing three separate invoices with one. For context, OpenAI's Real-Time API costs roughly $18/hr—making AssemblyAI's approach about 4x cheaper for comparable (and often better) speech accuracy.

The idea is invisible infrastructure: developers build their voice agent on top, and from the end user's perspective, it feels like the developer built the whole thing themselves. It works with existing frameworks like LiveKit and Pipecat, so teams don't have to rearchitect their stack to get started.

Common challenges and limitations

While modern speech-to-text is powerful, understanding limitations helps set realistic expectations and design better products.

Accuracy limitations

Even advanced models struggle with specialized terminology and strong accents. Industry-specific jargon often requires custom vocabulary configuration. The "final mile" of accuracy remains challenging for edge cases.

Environmental challenges

Research shows word error rates jump from near-zero to over 50% in noisy, multi-speaker environments. Multiple speakers, poor microphone quality, and ambient noise are the biggest accuracy enemies.

Contextual understanding

AI models don't truly understand context like humans do, leading to errors with homophones or ambiguous phrases. For example, one systematic review found speech recognition had higher error rates than manual keyboarding in clinical settings. Context clues that humans naturally understand remain challenging for AI.

Bias and fairness

Models trained on non-diverse data may perform poorly for certain demographics. Research found a 16-percentage-point accuracy gap between the voices of Black and white participants in one study. Responsible providers actively work to mitigate bias through diverse training data.

Benefits of speech-to-text technology

Speech-to-text provides major advantages for individuals and businesses across industries. As adoption grows, we're discovering new applications and benefits continuously.

- Increased productivity: Speech-to-text can reduce time spent on manual transcription and note-taking.

- Improved accessibility: This technology provides support for individuals with hearing impairments, mobility issues, or learning disabilities, building on a history of federal accessibility requirements like the mandate for captioning capabilities in television sets made after 1993.

- Better customer experiences: Businesses using speech-to-text in customer service operations can reduce average handling time and improve first-call resolution rates.

- Cost reduction: Automated transcription can be cheaper than human transcription services and allows businesses to reallocate resources to more complex, high-value tasks.

- Better data analysis: Speech-to-text enables more efficient analysis of large volumes of data, leading to more informed decision-making. For example, a 2024 study in healthcare found that AI-powered documentation improved quality and reduced consultation length by over 26%.

- Improved compliance and record-keeping: Speech-to-text provides accurate documentation of conversations and meetings. Features like AssemblyAI's Guardrails add an additional layer of safety by helping ensure transcript outputs meet compliance requirements before they reach downstream systems.

- Flexibility and convenience: This technology can be used across various devices and integrated with existing software to offer users flexibility in how and where they work.

Applications of speech-to-text technology

Speech-to-text technology powers applications across industries and personal use cases. You might already use it daily through Siri or Alexa. Here are the most prominent applications and real-world examples:

Personal use cases

- Dictation and note-taking: Students and professionals use speech-to-text to quickly capture ideas, create documents, or take notes during lectures and meetings. For example, a journalist might use speech-to-text to transcribe interviews in real time, saving hours of manual transcription work.

- Accessibility: Speech-to-text provides support for individuals with hearing impairments. It enables real-time captioning of live events, phone calls, and video content to make information more accessible.

- Voice commands and virtual assistants: Speech-to-text powers virtual assistants like Siri, Alexa, and Google Assistant. According to U.S. market analysis, these common assistants collectively hold an estimated 92.4% of the smartphone assistant market share, allowing users to set reminders, send messages, or control smart home devices using their voice.

Business applications

- Customer service and call centers: Many companies use speech-to-text to transcribe customer calls automatically. This allows for easier analysis of customer interactions, identification of common issues, and improvement of service quality.

- Meeting transcription: Businesses use speech-to-text to create searchable archives of meetings and conferences. This helps with record-keeping, allows absent team members to catch up, and makes it easier to reference important discussions later.

- Voice agents: Companies are building AI-powered voice agents that handle customer calls, schedule appointments, and answer questions in real time. These agents rely on streaming speech-to-text as the foundation of their conversational pipeline—transcribing caller speech instantly so an LLM can generate a response.

- Applying LLMs to spoken data: With frameworks like AssemblyAI's LLM Gateway, developers can apply large language models directly to transcripts to perform advanced tasks like summarization, question answering, and automated note-taking.

- Content creation: Podcasters and video creators use speech-to-text to generate accurate transcripts and subtitles for their content to improve accessibility and SEO.

- Legal and medical transcription: Law firms and healthcare providers use specialized speech-to-text systems to transcribe depositions, court proceedings, and medical notes. For healthcare specifically, AssemblyAI's Medical Mode delivers significantly better accuracy on medical terminology—medication names, procedures, anatomical terms—that general-purpose models frequently get wrong. It's available as an add-on for both Universal-3 Pro and Universal-2.

Real-world examples of speech-to-text technology

Jiminny in sales and customer success

Jiminny uses AssemblyAI's speech-to-text to power sales coaching and call recording features. This integration helps customers achieve improved win rates through AI insights for data-driven coaching. The result is better forecasting accuracy and deeper customer knowledge.

Marvin in user research

Marvin integrated AssemblyAI's speech transcription and PII redaction models into their user research tools. This helps users spend significantly less time analyzing data. Teams can focus on extracting meaningful insights from customer interviews rather than manual transcription.

Screenloop in hiring intelligence

Screenloop embedded AssemblyAI's transcription model into their interview process tools. Customers see reduced time on manual hiring tasks, faster time-to-hire, and lower candidate drop-off. The automation also reduces rejected offers for open roles.

Siro in field sales coaching

Siro uses AssemblyAI to power AI-driven coaching for field sales teams. By transcribing and analyzing in-person sales conversations, Siro achieved a 90% reduction in customer complaints and support tickets—demonstrating how accurate transcription feeds directly into better business outcomes when paired with conversation intelligence.

How speech-to-text evolved

Speech-to-text began in the 1950s with Bell Labs' "Audrey," which could only recognize digits from a single speaker. Progress was slow for decades, limited by computing power and data availability. The first major leap came with Hidden Markov Models (HMMs), which predicted word sequence probabilities.

The real revolution started with deep learning and neural networks. By training AI models on massive datasets, modern systems learn complex speech patterns with remarkable accuracy. This shift transformed speech-to-text from a niche tool to a foundational component of modern applications.

Free vs. paid speech-to-text solutions

When exploring speech-to-text, you'll find both free and paid options. The right choice depends entirely on your goal.

Paid solutions, typically offered as APIs, are designed for reliable, scalable products. Companies like AssemblyAI handle the complexity of training and improving AI models. You focus on core features while benefiting from high accuracy, security, support, and advanced capabilities like speaker diarization.

How to choose the right speech-to-text tool

Not every speech-to-text solution fits every business use case. Here are key factors to consider when selecting the best tool:

- Accuracy: Look for tools with high transcription accuracy rates. In fact, a recent survey of over 200 tech leaders found that accuracy, quality, and performance were among the top three most important factors when evaluating an AI vendor. Models like AssemblyAI's Universal-3 Pro achieve near-human-level performance across a wide range of data.

- Language support: Consider whether the tool supports the languages you need. Some solutions offer multilingual capabilities, while others specialize in specific languages or dialects.

- Pricing: Compare pricing models (pay-as-you-go, subscription-based, etc.) and guarantee they align with your usage patterns and budget.

- Integration options: Check if the tool easily integrates with your existing systems and workflows. APIs and SDKs can facilitate seamless integration.

- Customization capabilities: Look for advanced customization options. For example, AssemblyAI's Universal-3 Pro model supports natural language prompting (up to 1,500 words) to guide transcription style and keyterms prompting (up to 1,000 words) to improve recognition of domain-specific terms. These features offer far more control than traditional custom vocabulary.

- Processing speed: Consider both real-time transcription capabilities and batch processing speeds for pre-recorded audio.

- Additional features: Evaluate extra functionalities like speaker diarization, punctuation, sentiment analysis, or content summarization.

- Security and compliance: Double-check that the tool meets your data security requirements and complies with relevant regulations (like GDPR and HIPAA), as an industry survey revealed that over 30% of product leaders see security as a significant challenge for integration. Look for features like Guardrails that add safety layers between transcript output and downstream systems.

- Scalability: Choose a solution that can handle your current needs and scale as your requirements grow.

- Support and documentation: Consider the level of technical support and the quality of documentation provided by the vendor.

Popular speech-to-text tools

1. AssemblyAI

AssemblyAI is a Voice AI infrastructure platform offering a suite of AI models through a simple API. It provides both real-time and asynchronous transcription, a comprehensive framework for applying Large Language Models (LLMs) to spoken data, and a Voice Agent API for building conversational AI.

Features:

- Industry-leading accuracy with Universal-2 and Universal-3 Pro models — Universal-3 Pro recently shipped ~19% WER improvement on multilingual benchmarks, up to 30% faster P50 turnaround time, and 19% relative diarization improvement

- LLM Gateway: A unified API to apply over 15 models from providers like Anthropic, OpenAI, and Google directly to your transcripts for tasks like summarization, Q&A, and content generation.

- Advanced customization with natural language prompting and keyterms prompting

- Speech Understanding: A suite of models including Speaker Diarization, Sentiment Analysis, Summarization, Topic Detection, Key Phrases, and Auto Chapters.

- Medical Mode for significantly improved accuracy on healthcare terminology

- Multilingual support across 99+ languages

- Voice Agent API for building real-time conversational AI with a single WebSocket connection

- Guardrails for adding safety and compliance layers to transcript outputs

- Self-hosted deployment option for organizations requiring on-premise infrastructure

Pros:

- Highly accurate transcriptions, especially with Universal-3 Pro

- Comprehensive platform combining transcription, speech understanding, and LLM access

- Excellent documentation and developer support

- Flexible pricing for various usage levels

Cons:

- Primarily focused on API integration—may not be ideal for non-technical users

Pricing:

- Free tier: $50 in free transcription credits

- Pre-recorded: Universal-3 Pro at $0.21/hr, Universal-2 at $0.15/hr — with add-ons for keyterms prompting ($0.05/hr), speaker diarization ($0.02/hr), and Medical Mode ($0.15/hr)

- Streaming: Universal-3 Pro Streaming at $0.45/hr, Universal-Streaming English/Multilingual at $0.15/hr

- Voice Agent API: $4.50/hr flat rate covering STT, LLM, and TTS

- Custom: Personalize your plan with volume discounts

2. Google Cloud Speech-to-Text

Google Cloud Speech-to-Text is a cloud-based speech recognition service using Google's machine learning technology. It offers wide language support and integrates seamlessly with other Google Cloud services.

Features:

- Real-time and asynchronous transcription

- Support for 125+ languages and variants

- Noise cancellation and speaker diarization

- Integration with other Google Cloud services

Pros:

- Wide language support

- Good integration with Google ecosystem

- Reliable and scalable

Cons:

- Can be complex for beginners

- Less competitive pricing for high-volume users

- Lower accuracy compared to specialized providers

Pricing:

- Google offers a free tier with a monthly usage limit.

- Paid usage is billed per minute, with different rates for standard, medical, and other specialized models.

- For the most current information, please refer to the official Google Cloud Speech-to-Text pricing page. (Pricing is subject to change.)

3. Amazon Transcribe

Amazon Transcribe is a cloud-based ASR service for adding speech-to-text to applications. As part of AWS, it offers seamless integration with Amazon services and both real-time and batch transcription.

Features:

- Real-time and batch transcription

- Custom vocabulary and language models

- Automatic language identification

- Speaker diarization and channel separation

- Integration with AWS ecosystem

Pros:

- Seamless integration with AWS services

- Good accuracy for common use cases

- Scalable for large-volume transcription needs

Cons:

- Learning curve for AWS environment

- Limited advanced AI features compared to specialized providers

- Higher cost for some use cases

- Limited accuracy for more specialized use cases

Pricing:

- Amazon offers a free tier with a limited number of transcription minutes for new customers.

- Paid usage is typically billed per second, with different rates for standard and real-time transcription.

- For the most current information, please refer to the official Amazon Transcribe pricing page. (Pricing is subject to change.)

Getting started with speech-to-text

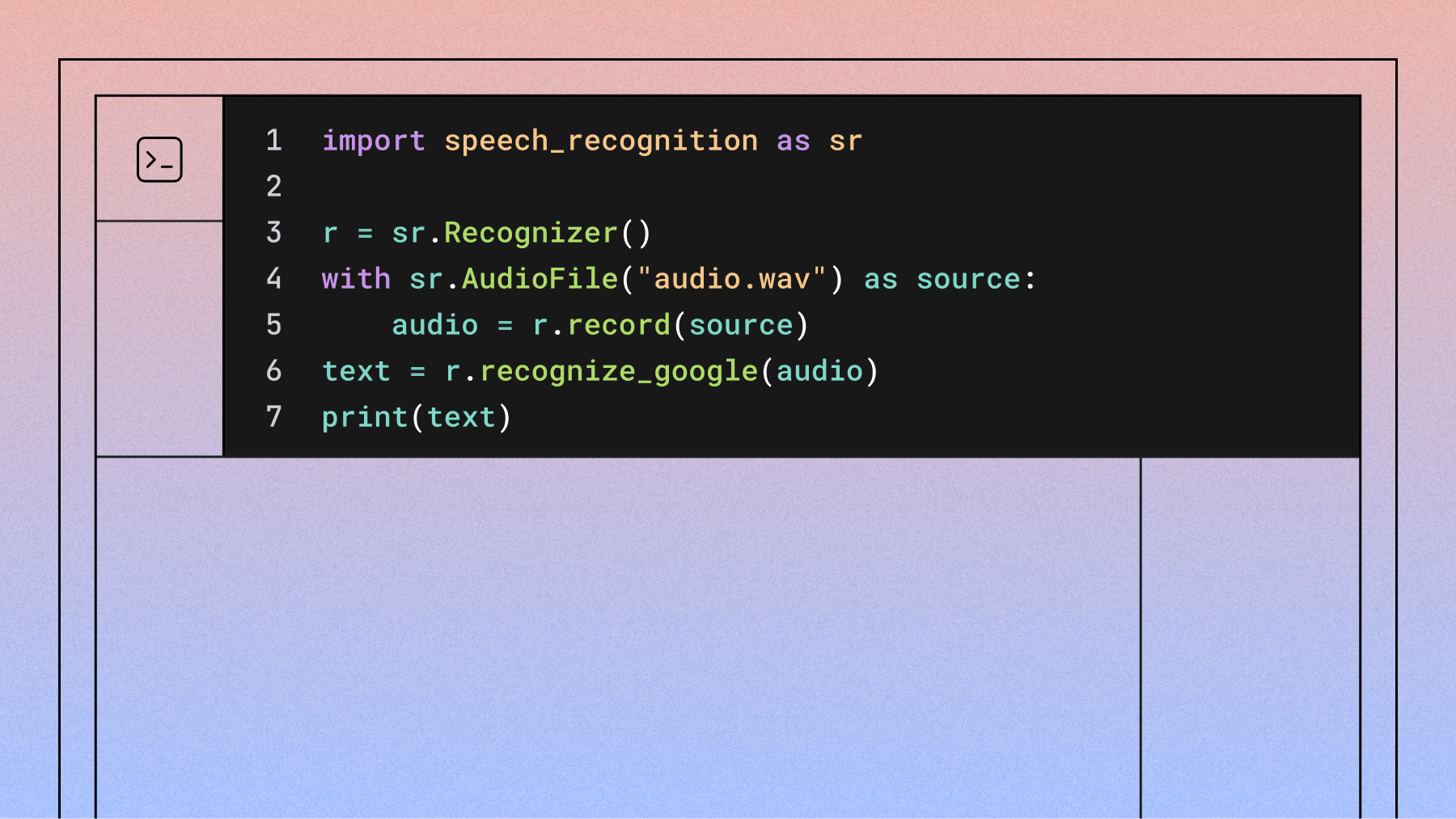

Integrating speech-to-text is more accessible than you might think. Here's a simple path to get started:

- Define your goal. What do you want to achieve? Are you transcribing meetings for searchable records, analyzing customer calls for sentiment, or adding captions to video content? A clear goal will guide your technical choices.

- Choose your approach. For personal use, a simple transcription app might be enough. If you're building a product, a speech-to-text API is the way to go. An API allows you to integrate transcription directly into your application's workflow.

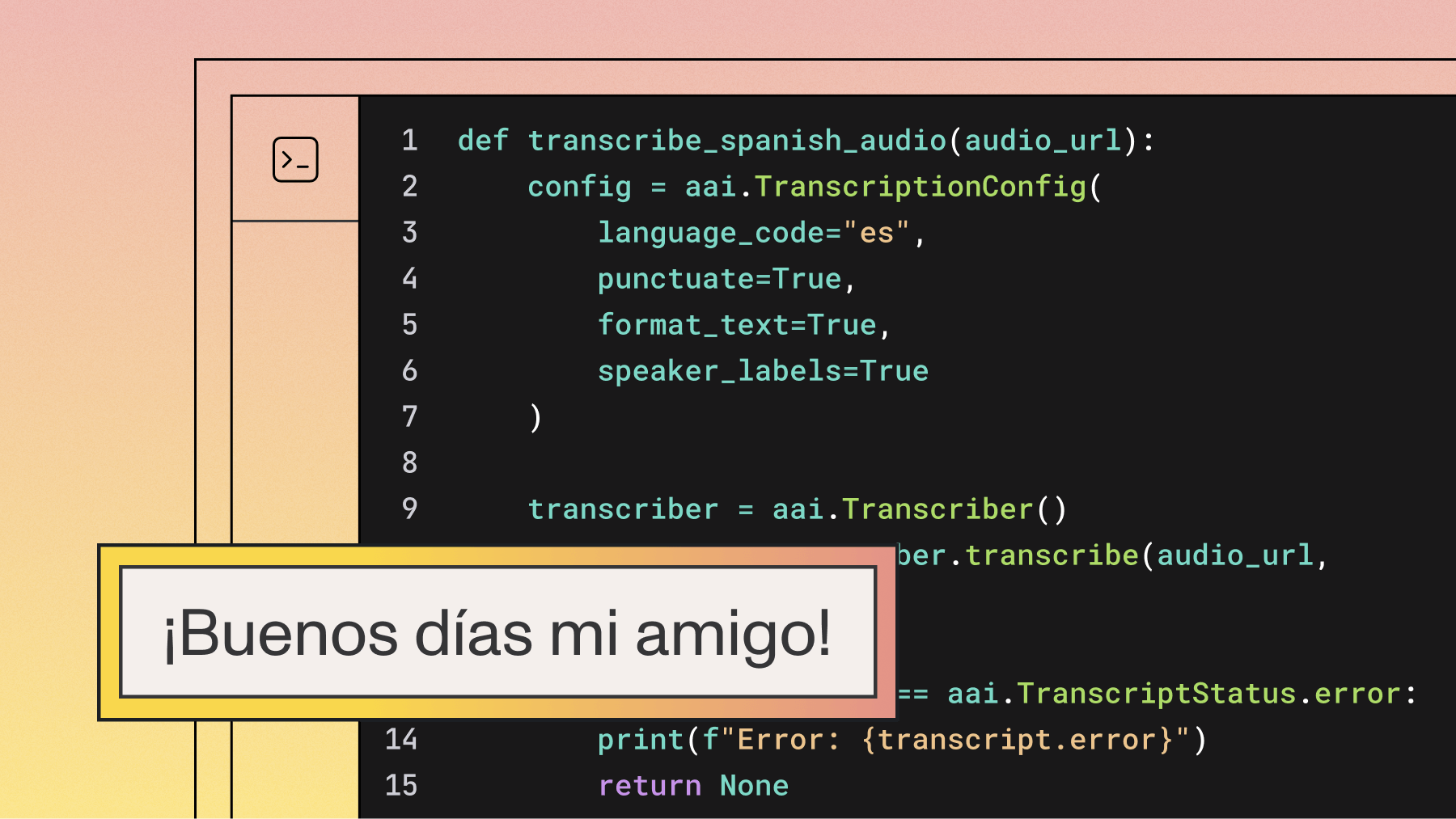

- Start building (for developers). Find a developer-friendly API, sign up for a free API key, and read the documentation. Most providers offer quickstart guides that let you make your first transcription request in just a few minutes with a few lines of code.

The future of speech-to-text technology

Speech-to-text technology is poised for exciting advancements with current AI research breakthroughs. Expected improvements include:

- Enhanced accuracy: Better performance in noisy environments with multiple speakers

- Advanced features: Emotion detection and intent recognition capabilities

- Context understanding: More sophisticated meaning extraction beyond basic transcription

- Voice agents and real-time conversational AI: As voice agents become more prevalent in customer service, healthcare, and enterprise workflows, speech-to-text models will need to deliver even lower latency, more accurate turn detection, and better handling of domain-specific vocabulary—pushing the entire field toward truly natural human-AI conversation

New applications will emerge across industries. Healthcare could see more accurate medical transcription improving patient care. Education might develop personalized learning experiences based on real-time speech analysis.

However, challenges remain. Privacy concerns and data security will be ongoing issues as systems process increasingly sensitive information. There's also the risk of bias in AI models, which could lead to unequal performance across demographics.

Unlock the power of speech-to-text with AssemblyAI

Speech-to-text technology has revolutionized how we interact with devices, create content, and process information. You're not just a user of this technology—you can be a builder.

AssemblyAI provides a powerful, developer-friendly speech-to-text API built on AI models like Universal-3 Pro. It provides both streaming (real-time) and asynchronous transcription capabilities, plus a Voice Agent API for building conversational AI with a single integration. You also get access to features like:

- Custom vocabulary and keyterms prompting for improved accuracy in specific domains

- Advanced AI models like speaker diarization, sentiment analysis, and content summarization

- Medical Mode for healthcare-specific transcription accuracy

- Guardrails for compliance and safety

- Multilingual support for global applications

- Excellent documentation and customer support for smooth integration

Try AssemblyAI today to experience the future of speech recognition technology

Frequently asked questions about speech-to-text

What's the difference between speech-to-text and voice recognition?

Speech-to-text converts spoken words to written text, while voice recognition is broader and includes speaker identification and command understanding.

How accurate is speech-to-text technology?

Modern commercial APIs can achieve over 95% accuracy on clean audio, but performance drops with background noise or multiple speakers. For instance, academic research shows that in a noisy environment, the word error rate can increase by nearly 30% compared to a clean one. Independent benchmarks from Hamming.ai across 4M+ production calls show AssemblyAI's Universal-3 Pro Streaming achieving an 8.14% word error rate on real-world audio.

Do I need an internet connection for speech-to-text?

Most high-accuracy systems require internet connectivity for cloud processing, while on-device models work offline but with reduced accuracy. Some providers offer self-hosted deployment options that run on your own infrastructure without requiring internet access.

Can speech-to-text handle multiple speakers?

Yes, through speaker diarization or speaker labels. Advanced systems identify and label who is speaking when, creating conversation-style transcripts.

What's the best free speech-to-text tool for beginners?

For basic transcription, try Google Docs Voice Typing or Otter.ai; for building applications, start with AssemblyAI or Google Cloud Speech-to-Text free tiers.

What is the best speech-to-text API for voice agents?

Voice agents need streaming speech-to-text with sub-second latency, accurate entity recognition, and natural turn detection—batch APIs can't keep up with real-time conversation. AssemblyAI's Voice Agent API handles the full pipeline (speech-to-text, LLM, and text-to-speech) through a single WebSocket connection built on Universal-3 Pro Streaming, at $4.50/hr — roughly 4x cheaper than OpenAI's Real-Time API.

Which speech-to-text API has the best accuracy for technical terminology?

Accuracy on specialized terms depends on how well the model handles out-of-vocabulary words—look for APIs supporting custom vocabulary or keyterms prompting. AssemblyAI's Universal-3 Pro supports keyterms prompting (up to 1,000 words) and natural language prompting (up to 1,500 words) for fine-grained control over domain-specific recognition. For healthcare specifically, Medical Mode provides significantly better accuracy on medical terminology out of the box.

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

.png)