How to create a phone-based voice agent

Build a phone-based voice agent with roughly 300ms speech-to-text, SIP or Twilio telephony, an LLM, and TTS. Architecture, latency targets, and code patterns.

A phone-based voice agent is an AI system that answers or places phone calls, understands what the caller is saying, and responds in natural speech — with no human in the loop. The best ones pick up in under a ring, handle interruptions the way a person does, and hang up before anyone realizes they weren't talking to a human.

Getting there takes four things: a telephony provider to carry the call, a streaming speech-to-text model to hear, an LLM to think, and a text-to-speech model to respond. This guide walks through each layer, the latency budget they share, and the architecture decisions that decide whether your agent feels natural or obviously robotic.

We'll use the AssemblyAI Universal-3 Pro Streaming model for transcription throughout — it's built specifically for voice agents, with 307ms P50 latency and native 8kHz mulaw support so phone audio doesn't need resampling.

What is a phone-based voice agent?

A phone-based voice agent is a Voice AI application that conducts full conversations over the public telephone network (PSTN) or a SIP trunk. Unlike an IVR ("press 1 for billing"), it listens to free-form speech, understands intent with a Large Language Model, and replies in synthesized voice — all inside a single phone call.

Common jobs for phone-based voice agents:

- Inbound support: answer questions, triage tickets, hand off to a human

- Appointment scheduling and confirmations

- Insurance verification and benefits lookup

- Outbound reminders, surveys, and follow-ups

- Lead qualification and sales discovery

- After-hours coverage for tier-1 calls

The technology stack is the same regardless of direction. The only thing that changes is who initiates the call.

The four components of a phone-based voice agent

Every phone-based voice agent has the same four pieces. Get any one of them wrong and the whole conversation falls apart.

1. Telephony (the phone network layer)

This is what connects your agent to a real phone number. You have three practical options:

- Twilio Voice + Media Streams — the most common choice. Twilio gives you a number, handles the PSTN, and streams 8kHz mulaw audio to your server over WebSocket.

- SIP trunk (Telnyx, Plivo, direct carrier) — more control and lower per-minute cost once you're at scale. More setup work.

- Managed voice agent platforms (Vapi, LiveKit, Pipecat, Daily, Retell) — they abstract telephony, STT, LLM, and TTS orchestration into one SDK. Fastest path to a working prototype.

The audio format matters here. Traditional telephony runs at 8kHz mulaw (μ-law), not the 16kHz PCM most audio tools assume. A speech-to-text model that accepts mulaw natively saves you a resampling step and the latency that comes with it.
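To make the format concrete, here is a minimal sketch of the decoding step you would need if a downstream tool insisted on 16-bit linear PCM. It expands G.711 μ-law bytes in pure Python. The function names are illustrative and not part of any library, and the sketch only decodes; it does not resample.

```python
def mulaw_byte_to_pcm16(byte: int) -> int:
    """Expand one G.711 mu-law byte to a signed 16-bit linear sample."""
    u = ~byte & 0xFF                 # mu-law bytes are stored bit-inverted
    sign = u & 0x80
    exponent = (u >> 4) & 0x07
    mantissa = u & 0x0F
    magnitude = (((mantissa << 3) + 0x84) << exponent) - 0x84
    return -magnitude if sign else magnitude


def decode_mulaw(frame: bytes) -> list[int]:
    """Decode a whole frame of mu-law bytes into linear samples."""
    return [mulaw_byte_to_pcm16(b) for b in frame]


def frame_duration_ms(frame: bytes, sample_rate: int = 8000) -> float:
    """One mu-law byte is one sample, so duration follows from the length."""
    return len(frame) * 1000 / sample_rate
```

A 160-byte frame at 8kHz is 20ms of audio, which is why every extra conversion pass on the hot path is worth avoiding.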

2. Streaming speech-to-text (the ears)

This is the most time-sensitive component. The speech-to-text model has to produce transcripts fast enough that your LLM can start generating before the caller finishes talking — otherwise there's an awkward silence every turn.

What to look for:

- P50 latency around 300ms on real phone audio, not studio recordings

- Immutable transcripts — finalized words don't change, so downstream systems can act on partials with confidence

- Intelligent endpointing that combines acoustic pauses with semantic cues (knowing "my number is five five five" isn't a complete thought)

- Native telephony format support — 8kHz mulaw input without resampling

- Accuracy on alphanumerics — phone numbers, confirmation codes, email addresses

The Universal-3 Pro Streaming model hits 307ms median latency with 21% fewer alphanumeric errors than the previous generation of streaming speech-to-text models, which matters when your agent is writing down a credit card number.

3. The LLM (the brain)

The LLM takes the transcript, decides what the agent should say, and optionally calls tools — a calendar API, your CRM, a payment processor. Any modern model works: GPT-4o, Claude Sonnet, Gemini Flash. Pick for latency first, capability second — a smarter response that arrives 800ms late feels worse than a slightly simpler one that lands instantly.

The LLM Gateway lets you route transcripts to multiple LLM providers through a single API, which is useful when you want to switch models without touching the rest of your stack.

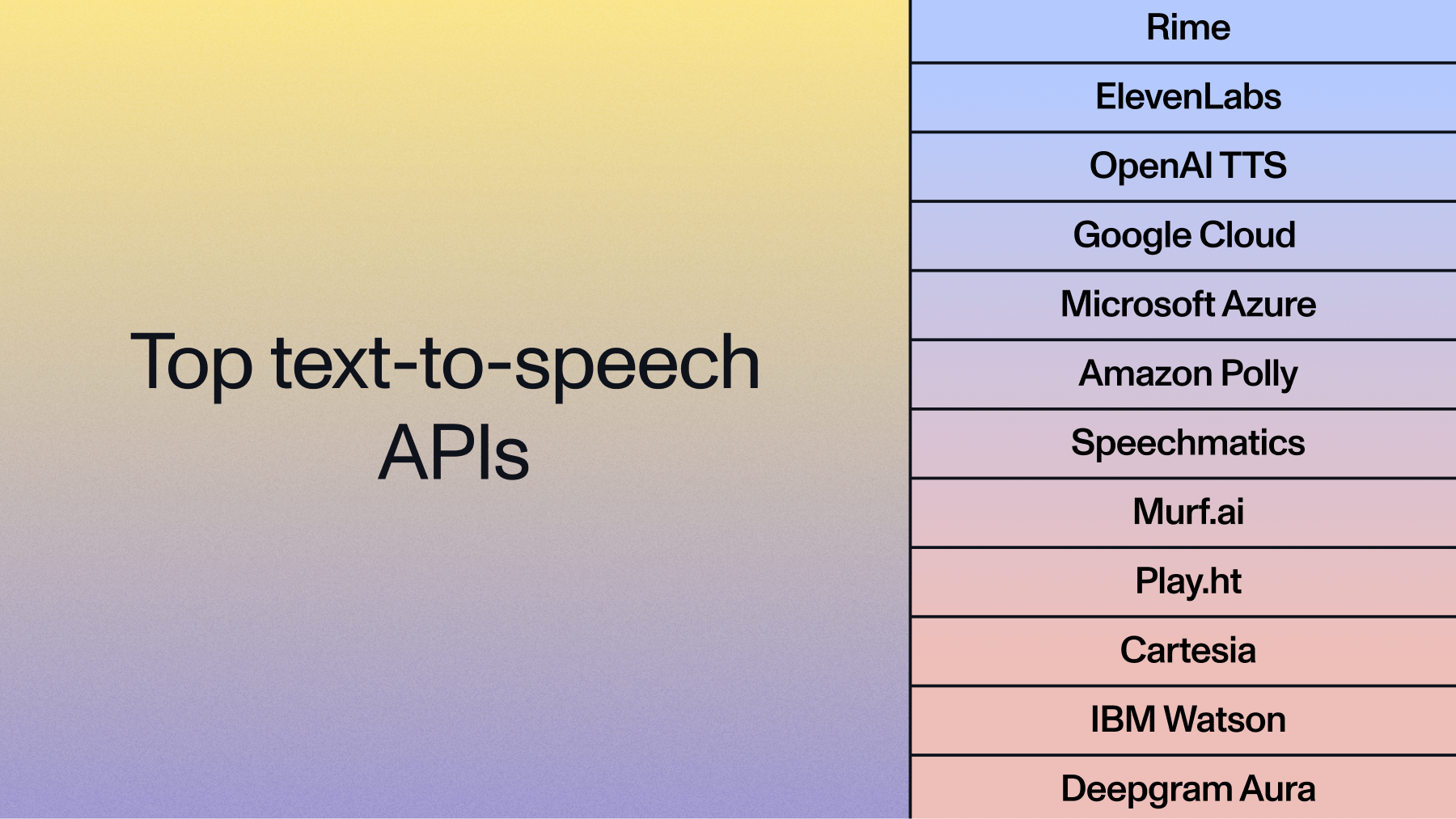

4. Text-to-speech (the mouth)

TTS converts the LLM's reply back into audio. Three things matter:

- Time to first audio byte (under 400ms is good)

- Voice naturalness and prosody

- Ability to stream audio in chunks so the caller hears speech while the rest is still generating

Stream the TTS output back to Twilio as mulaw frames and you're done.
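As a rough sketch of that last step, the helper below wraps 8kHz μ-law bytes in the JSON "media" messages that Twilio Media Streams accepts on a bidirectional stream. It assumes your TTS already emits 8kHz μ-law audio and that `stream_sid` was captured from Twilio's `start` event. The helper name and the 160-byte (20ms) frame size are choices made for this example, not requirements.

```python
import base64
import json

FRAME_BYTES = 160  # 20 ms of 8 kHz, 8-bit mu-law audio (a choice for this sketch)


def twilio_media_messages(mulaw_audio: bytes, stream_sid: str):
    """Yield JSON strings, one per audio frame, ready to send over the WebSocket."""
    for start in range(0, len(mulaw_audio), FRAME_BYTES):
        chunk = mulaw_audio[start:start + FRAME_BYTES]
        yield json.dumps({
            "event": "media",
            "streamSid": stream_sid,
            "media": {"payload": base64.b64encode(chunk).decode("ascii")},
        })
```

Each yielded string can be passed to the WebSocket's send method as soon as the TTS produces the corresponding audio, so playback starts before synthesis finishes.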

Architecture: how the pieces fit together

Here's the reference architecture for a phone-based voice agent using Twilio for telephony. If you use a managed platform like Vapi or LiveKit, most of the middle is handled for you.

<pre>
Caller's phone
      │
      │ PSTN
      ▼
Twilio Voice
      │ TwiML → open WebSocket
      ▼
Your server (WebSocket bridge)
 ┌────┴─────────────┐
 ▼                  ▼
Universal-3        TTS
Pro Streaming      (ElevenLabs /
(STT)              Cartesia / etc.)
 │                  ▲
 │ transcript       │ synthesized audio
 ▼                  │
LLM (GPT-4o / Claude / etc.)
 │ text response
 └───────────►
</pre>

Audio flows in two directions continuously. Inbound audio (caller → agent) goes to the speech-to-text model. Outbound audio (agent → caller) is generated by the TTS model. Your server's job is to bridge them and hand transcripts to the LLM at the right moment.

The latency budget

The single biggest quality driver in a phone-based voice agent is end-to-end latency from "caller stops talking" to "agent starts talking." Under 800ms feels natural. Over 1,500ms feels like a bad satellite connection.

Here's where the time goes in a well-tuned pipeline:

Two rules fall out of this budget:

- Every component has to stream. Batch anything and you're over budget.

- Don't wait for a complete transcript before calling the LLM. Start generating on partials that your STT model flagged as stable.

The Universal-3 Pro Streaming model produces immutable partials — once a word is finalized, it won't change — which lets the LLM start generating while the caller is still finishing their sentence.
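One way to keep the budget honest is to write it down and check it against the 800ms target. The sketch below does only that arithmetic. Every per-stage number in it is a hypothetical placeholder chosen for illustration, not a measurement or a vendor figure; replace them with latencies from your own traces.

```python
TARGET_MS = 800  # "feels natural" threshold from the text above

# Hypothetical placeholder values; substitute your own measurements.
example_budget_ms = {
    "speech_to_text": 300,
    "llm_first_token": 250,
    "tts_first_audio": 200,
    "network_and_telephony": 50,
}


def headroom_ms(stages: dict[str, int], target_ms: int = TARGET_MS) -> int:
    """Positive means time to spare; negative means the turn is over budget."""
    return target_ms - sum(stages.values())


def slowest_stage(stages: dict[str, int]) -> str:
    """The stage to optimize first."""
    return max(stages, key=stages.get)
```

With these placeholder numbers the headroom is exactly zero, which illustrates why a single batch step anywhere in the pipeline pushes the turn over budget.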

Building a phone-based voice agent with Twilio and AssemblyAI

Here's a minimal server that bridges Twilio Media Streams into AssemblyAI Universal-3 Pro Streaming. This handles the inbound audio path; wire it up to your LLM and TTS of choice.

<pre><code class="language-python">import asyncio
import base64
import json
import os

import websockets
from fastapi import FastAPI, WebSocket
from fastapi.responses import Response

app = FastAPI()

ASSEMBLYAI_KEY = os.environ["ASSEMBLYAI_API_KEY"]

AAI_WS = (
    "wss://streaming.assemblyai.com/v3/ws"
    "?sample_rate=8000&encoding=pcm_mulaw&format_turns=true"
)


@app.post("/incoming-call")
async def incoming_call():
    # TwiML tells Twilio to open a Media Stream to our /ws endpoint
    twiml = """<Response>
  <Connect>
    <Stream url="wss://your-server.ngrok.app/ws" />
  </Connect>
</Response>"""
    return Response(content=twiml, media_type="application/xml")


@app.websocket("/ws")
async def media_stream(twilio_ws: WebSocket):
    await twilio_ws.accept()

    # Recent websockets releases take additional_headers;
    # older ones named this argument extra_headers.
    async with websockets.connect(
        AAI_WS,
        additional_headers={"Authorization": ASSEMBLYAI_KEY},
    ) as aai:

        async def twilio_to_aai():
            # Forward caller audio from Twilio to the speech-to-text socket
            async for msg in twilio_ws.iter_text():
                data = json.loads(msg)
                if data["event"] == "media":
                    # Twilio sends base64 mulaw at 8kHz
                    audio = base64.b64decode(data["media"]["payload"])
                    await aai.send(audio)

        async def aai_to_logic():
            # React to completed turns coming back from the speech-to-text socket
            async for raw in aai:
                evt = json.loads(raw)
                if evt.get("type") == "Turn" and evt.get("end_of_turn"):
                    transcript = evt["transcript"]
                    # Hand off to your LLM + TTS here
                    print("Final turn:", transcript)

        await asyncio.gather(twilio_to_aai(), aai_to_logic())
</code></pre>

Two things to note:

- encoding=pcm_mulaw and sample_rate=8000 match Twilio's native format. No resampling.

- format_turns=true gives you clean turn boundaries with punctuation — easier to pass to an LLM than raw partials.
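The server above only looks at `media` events. A fuller bridge usually also wants the stream identifier from Twilio's `start` event (needed to send audio back) and a clean exit on `stop`. The parser below is a small sketch of that dispatch; the function name and the tuple-shaped return value are conventions invented for this example.

```python
import base64
import json


def parse_twilio_message(raw: str):
    """Return (kind, value) for one Media Streams WebSocket message."""
    data = json.loads(raw)
    event = data.get("event")
    if event == "start":
        # The stream SID is required later when sending audio back to the caller
        return "start", data["start"]["streamSid"]
    if event == "media":
        # Raw 8 kHz mu-law bytes, ready to forward to speech-to-text
        return "media", base64.b64decode(data["media"]["payload"])
    if event == "stop":
        return "stop", None
    return "other", None
```

Keeping this logic in a pure function makes it easy to unit test without a live call.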

For a full runnable example — FastAPI server, ElevenLabs TTS, GPT-4o with function calling for order lookup and appointment booking — clone the companion repo:

git clone https://github.com/kelsey-aai/phone-voice-agent-assemblyai

Design decisions that make or break a phone-based voice agent

Most failed phone-based voice agent projects fail the same way: the tech works in a demo, then breaks on real calls. Here are the decisions that matter in production.

Turn detection strategy

Fixed silence timers (wait 800ms, then respond) feel broken. Real callers pause mid-sentence to think, and the agent keeps cutting them off. Modern streaming models combine acoustic silence with semantic signals — the model knows that "the number is five five five…" is mid-thought even if there's a pause. Use intelligent endpointing with tunable thresholds, not a fixed timer.
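To illustrate the idea of combining silence with a semantic cue, here is a deliberately simple toy heuristic. It is not how any production endpointing model works; those rely on learned signals. The word list, the threshold values, and the function name are all assumptions made for this sketch.

```python
import re

# Words that often signal the caller is mid-thought (toy list, not exhaustive).
UNFINISHED_ENDINGS = {
    "and", "but", "or", "so", "um", "uh", "is", "the", "my", "to",
    "zero", "oh", "one", "two", "three", "four", "five",
    "six", "seven", "eight", "nine",
}


def should_end_turn(transcript: str, silence_ms: int,
                    base_ms: int = 400, extended_ms: int = 1200) -> bool:
    """Wait longer before ending the turn when the last word looks unfinished."""
    words = re.findall(r"[a-z']+", transcript.lower())
    looks_unfinished = bool(words) and words[-1] in UNFINISHED_ENDINGS
    threshold = extended_ms if looks_unfinished else base_ms
    return silence_ms >= threshold
```

With these defaults, a 600ms pause after "my number is five five five" keeps the turn open, while the same pause after a complete request ends it.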

Barge-in handling

Callers interrupt. When they do, the agent has to stop talking immediately, not finish its sentence. That means you need to pipe STT transcripts back even while TTS is playing, and cut the TTS audio the moment you detect the caller started a new turn.
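A minimal sketch of that cut-off, assuming a Twilio bidirectional Media Stream: playback runs as a cancellable asyncio task, and on interruption the server cancels it and sends Twilio's `clear` message to flush audio already buffered on Twilio's side. The class name, the `send_json` callable, and the 20ms pacing are assumptions of this example.

```python
import asyncio
import base64


class AgentSpeech:
    """Plays TTS frames to the caller and can be interrupted mid-utterance."""

    def __init__(self, send_json, stream_sid: str):
        self._send = send_json        # async callable that sends one dict as JSON
        self._sid = stream_sid
        self._task = None

    def start(self, frames):
        self._task = asyncio.create_task(self._play(frames))

    async def _play(self, frames):
        for frame in frames:
            payload = base64.b64encode(frame).decode("ascii")
            await self._send({"event": "media", "streamSid": self._sid,
                              "media": {"payload": payload}})
            await asyncio.sleep(0.02)  # pace roughly one 20 ms frame at a time

    async def interrupt(self):
        # Stop producing new audio, then flush what Twilio has already buffered
        if self._task and not self._task.done():
            self._task.cancel()
            try:
                await self._task
            except asyncio.CancelledError:
                pass
        await self._send({"event": "clear", "streamSid": self._sid})
```

Call `interrupt()` from the speech-to-text handler as soon as a new caller turn begins while the agent is still speaking.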

Alphanumeric accuracy

The hardest thing a phone-based voice agent does is get a confirmation code right on the first try. "B as in boy, eight, zero, zero, five" — that's what your STT model has to capture. Telephony audio is 8kHz, which already hurts accuracy; noisy environments hurt it more. Use a model measured on alphanumeric benchmarks, not just overall word error rate.
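Even with an accurate transcript, the agent still has to turn spoken spelling into a usable code. The function below is a rough sketch of that post-processing step. It handles digit words and the "B as in boy" pattern only, and it will misread stray single letters such as the article "a", so treat it as a starting point rather than a complete normalizer.

```python
import re

DIGIT_WORDS = {
    "zero": "0", "oh": "0", "one": "1", "two": "2", "three": "3", "four": "4",
    "five": "5", "six": "6", "seven": "7", "eight": "8", "nine": "9",
}


def normalize_spoken_code(text: str) -> str:
    """Collapse a spoken code like 'B as in boy, eight, zero' into 'B80'."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    out = []
    i = 0
    while i < len(tokens):
        tok = tokens[i]
        is_letter = len(tok) == 1 and tok.isalpha()
        if is_letter and tokens[i + 1:i + 3] == ["as", "in"] and i + 3 < len(tokens):
            out.append(tok.upper())   # keep the letter, skip "as in <word>"
            i += 4
            continue
        if tok in DIGIT_WORDS:
            out.append(DIGIT_WORDS[tok])
        elif tok.isdigit():
            out.append(tok)
        elif is_letter:
            out.append(tok.upper())
        i += 1                        # any other word is ignored
    return "".join(out)
```

Reading the normalized code back to the caller for confirmation is a cheap safeguard on top of this.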

Function calling and tool use

Real phone agents don't just talk — they book the appointment, push the update to the CRM, charge the card. That means the LLM needs to call tools during the conversation. Keep tool calls short (under 500ms) or the silence becomes obvious. Preload what you can before the call if you know the caller ID.
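When a tool cannot reliably finish inside that window, one common pattern is to cover the gap with a short spoken filler rather than dead air. The sketch below waits up to a budget for the tool, speaks a filler if the budget is exceeded, and then returns the tool result. `tool` and `say` are hypothetical async callables you would supply; the 0.5s default mirrors the figure above.

```python
import asyncio


async def call_tool_with_filler(tool, say, budget_s: float = 0.5,
                                filler: str = "One moment while I check that."):
    """Run tool(); if it exceeds budget_s, speak a filler while it finishes."""
    task = asyncio.ensure_future(tool())
    done, _pending = await asyncio.wait({task}, timeout=budget_s)
    if not done:
        await say(filler)             # fill the silence without cancelling the tool
    return await task
```

Using `asyncio.wait` with a timeout, rather than `asyncio.wait_for`, leaves the slow tool call running instead of cancelling it.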

PII redaction and compliance

Phone calls pick up credit cards, medical information, and account numbers. If you're in healthcare, financial services, or Europe, you need PII redaction and often a BAA or DPA. AssemblyAI enables covered entities and their business associates subject to HIPAA to use AssemblyAI services to process protected health information (PHI); a Business Associate Addendum (BAA) is available for healthcare deployments.
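As a small illustration of the redaction idea, the snippet below masks card-like digit runs in a transcript before it reaches your logs. This is a toy regular expression, not a compliance control: it will miss numbers spoken as words and may mask other long digit strings. The pattern and function name are assumptions for this sketch.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens
CARD_LIKE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def mask_card_numbers(text: str) -> str:
    """Replace card-like digit runs with a placeholder before logging."""
    return CARD_LIKE.sub("[REDACTED]", text)
```

For regulated workloads, rely on your provider's redaction features and agreements rather than a pattern like this.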

Concurrency and scale

A single agent is easy. 500 simultaneous calls during a support spike is not. Pick a speech-to-text provider with unlimited concurrency and session-based pricing so you're not renegotiating contracts when traffic grows. Universal-Streaming supports unlimited concurrent streams at a flat $0.15/hr.

Common use cases for phone-based voice agents

The pattern is consistent: phone-based voice agents work best on high-volume, well-scoped calls where the caller wants a specific outcome and the range of conversation is predictable.

- Healthcare: appointment scheduling, insurance verification, prescription refills, post-visit follow-ups. See our guide to voice agents in healthcare.

- Contact centers: tier-1 deflection, after-hours coverage, outbound reminders. Often paired with real-time agent assist for calls that escalate to humans.

- Financial services: balance inquiries, payment reminders, fraud verification.

- E-commerce and logistics: order status, delivery coordination, returns initiation.

- Field service: dispatch, status updates, technician check-ins.

In every one of these, the bottleneck is the same: how accurately the agent hears the caller, and how fast it can respond.

How to evaluate a phone-based voice agent before shipping

Demos lie. Here's the evaluation loop we recommend before putting an agent on a real phone number:

- Record 50–100 real calls (with consent) or synthesize them from your top call drivers

- Measure turn-by-turn latency from caller-stops to agent-starts, not just STT latency in isolation

- Audit transcription errors on critical entities: names, addresses, confirmation codes, dollar amounts

- Score completion rate: did the agent actually do the task, or hand off?

- Read the transcripts: you'll find prompt failures and tool-call bugs you'd never catch by listening alone

Most teams skip the entity audit and ship a model that scores 90% word accuracy overall but silently fumbles phone numbers. Don't.
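Two of these checks are easy to automate once the calls are logged. The sketch below computes a nearest-rank percentile over per-turn latencies and an exact-match rate over critical entities. The data layout (a list of latencies in milliseconds and a list of expected/heard pairs) is an assumption of this example.

```python
import math


def percentile(values, pct: float):
    """Nearest-rank percentile, e.g. pct=50 for P50 or pct=95 for P95."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def _normalize(entity: str) -> str:
    # Compare only letters and digits so "555-0100" matches "5550100"
    return "".join(ch for ch in entity.upper() if ch.isalnum())


def entity_accuracy(pairs) -> float:
    """Share of (expected, heard) pairs that match after normalization."""
    hits = sum(1 for expected, heard in pairs
               if _normalize(expected) == _normalize(heard))
    return hits / len(pairs)
```

Tracking P95 alongside P50 matters because callers remember the slow turns, and entity accuracy exposes failures that overall word error rate hides.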

Phone-based voice agent vs. IVR vs. chatbot

An AI voice agent isn't a smarter IVR — it's a replacement for the human on the other end of the phone for calls that don't need a human.

Putting it together

Building a phone-based voice agent in 2026 is a matter of wiring four well-understood components together: telephony, streaming speech-to-text, an LLM, and TTS. The difference between an agent that feels natural and one that doesn't isn't the LLM — it's the latency budget and the speech-to-text accuracy on phone audio.

Start with a managed platform if you need to ship this quarter. Drop to a Twilio + custom stack when you need to control the latency and accuracy yourself. Either way, the streaming speech-to-text layer is the part that will make or break the caller experience, so pick it carefully.

If you're building phone-based voice agents that need to sound human, Universal-3 Pro Streaming offers streaming speech-to-text at 307ms P50 latency with native telephony support. The companion GitHub repo (github.com/kelsey-aai/phone-voice-agent-assemblyai) is a ~200-line FastAPI server you can fork and ship today.

Frequently asked questions

What is a phone-based voice agent?

A phone-based voice agent is an AI system that answers or places phone calls, transcribes the caller's speech in real time, decides what to say with a Large Language Model, and responds with synthesized voice — all within a single live phone call. It replaces IVR menus and tier-1 human agents for well-scoped tasks like scheduling, verification, and support.

How does a phone-based voice agent work?

A phone-based voice agent combines four components: a telephony provider (Twilio, SIP, or a managed platform) to carry the call, a streaming speech-to-text model to transcribe audio in real time, an LLM to understand intent and generate replies, and a text-to-speech model to speak the response. Everything streams, so the caller hears a reply roughly 800ms after they stop talking.

What is the best speech-to-text for a phone-based voice agent?

The best speech-to-text for a phone-based voice agent is a streaming model with P50 latency around 300ms, native 8kHz mulaw support, immutable transcripts, intelligent endpointing, and strong accuracy on alphanumerics. AssemblyAI's Universal-3 Pro Streaming model hits 307ms P50 latency and 21% fewer alphanumeric errors than the previous generation, which matters when the agent is capturing phone numbers or confirmation codes.

How do I build a phone-based voice agent with Twilio?

To build a phone-based voice agent with Twilio, create a Twilio phone number, configure TwiML to open a Media Stream to your server's WebSocket endpoint, and bridge the 8kHz mulaw audio into a streaming speech-to-text model like Universal-3 Pro Streaming. Pipe final transcripts into an LLM, then stream the response back through a text-to-speech model as mulaw frames for Twilio to play.

What is the difference between a phone-based voice agent and an IVR?

An IVR uses touch-tone input and rigid menus ("press 1 for billing"), while a phone-based voice agent understands free-form natural speech, maintains conversation context with an LLM, handles interruptions, and can complete tasks end-to-end without handing off to a human. The caller experience is closer to talking to a person than navigating a menu.

How much does it cost to run a phone-based voice agent?

The main cost components are telephony (Twilio per-minute voice rates), streaming speech-to-text (Universal-3 Pro Streaming is $0.15/hour of session time), the LLM (varies by provider and tokens used), and TTS (per-character or per-minute). A typical phone-based voice agent call costs a few cents per minute end-to-end at scale, with the exact number driven by which LLM and TTS you pick.
