Real-time entity extraction from speech: Capturing emails, phone numbers, and addresses in live audio

Real-time entity extraction from audio captures emails, phone numbers, and addresses during live calls. Learn the streaming STT plus NER pipeline, step by step.

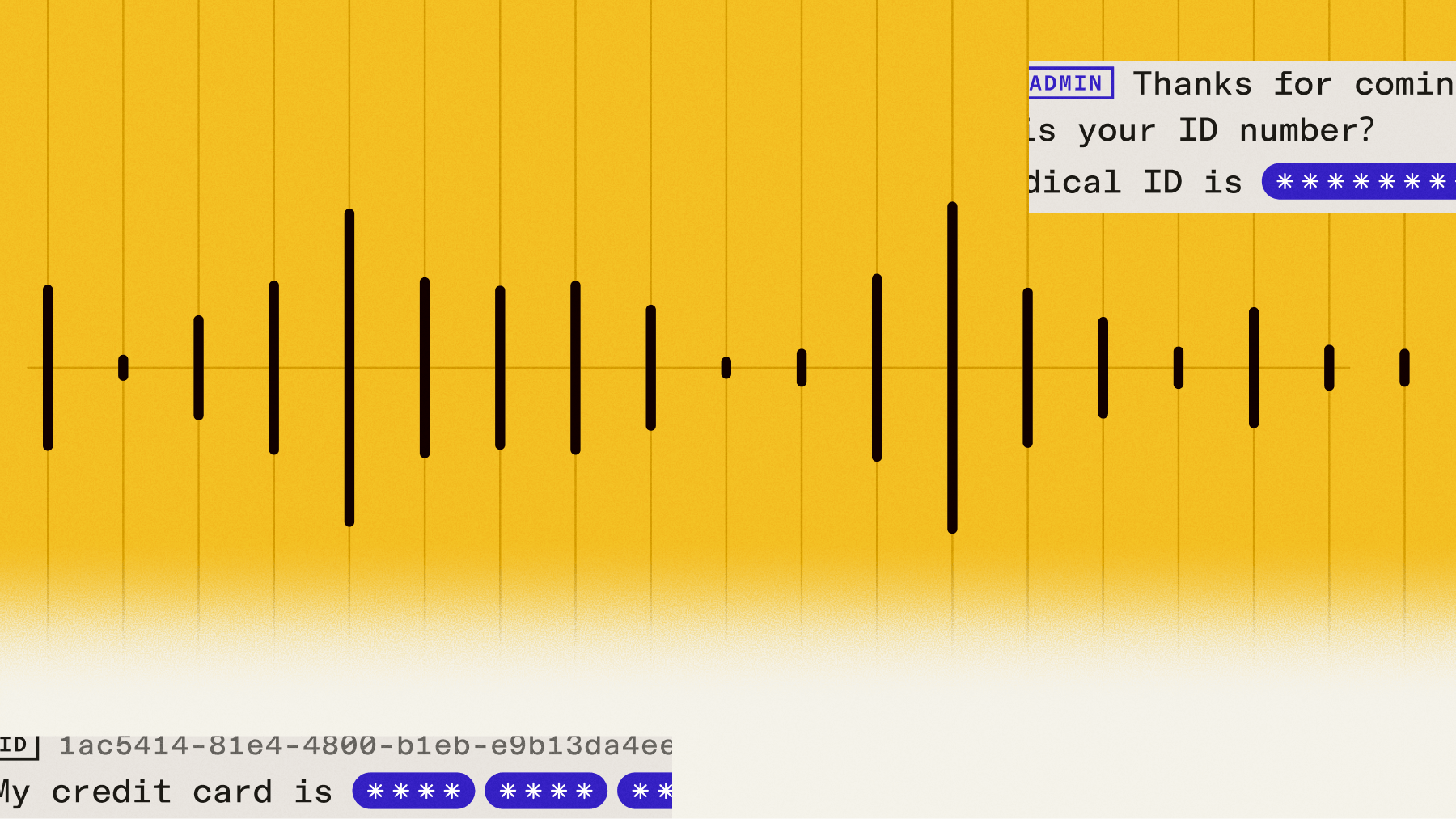

Real-time entity extraction from speech automatically identifies and captures specific information—like emails, phone numbers, and addresses—from live conversations as they happen. Instead of waiting for calls to end and manually transcribing contact details, modern Voice AI systems extract structured data instantly while people are still speaking. This eliminates the delays and errors that come with traditional post-call data entry.

This capability transforms applications like call centers, meeting transcription, and voice assistants by providing immediate access to actionable information. When a customer mentions their email address during a support call, the system captures and formats it correctly in real time, updating your CRM without any manual intervention. This article explains how real-time entity extraction works, covers the technical challenges of processing live audio, and shows you how to implement it effectively in production applications.

What is real-time entity extraction from audio?

Real-time entity extraction from audio is the automatic identification of specific information—like emails, phone numbers, and addresses—from live speech as it happens. This means while someone is speaking, the system immediately recognizes and pulls out structured data without waiting for the conversation to end.

Think of it like having a smart assistant listening to calls who instantly highlights important details. When a customer says "my email is john dot smith at company dot com," the system recognizes this as an email address and formats it as john.smith@company.com in your database.

Unlike batch processing that analyzes recordings after they're complete, real-time extraction works during active conversations. You get the structured data instantly, which lets you populate forms, update records, or trigger actions while still talking to the person.

How the streaming STT and NER pipeline works

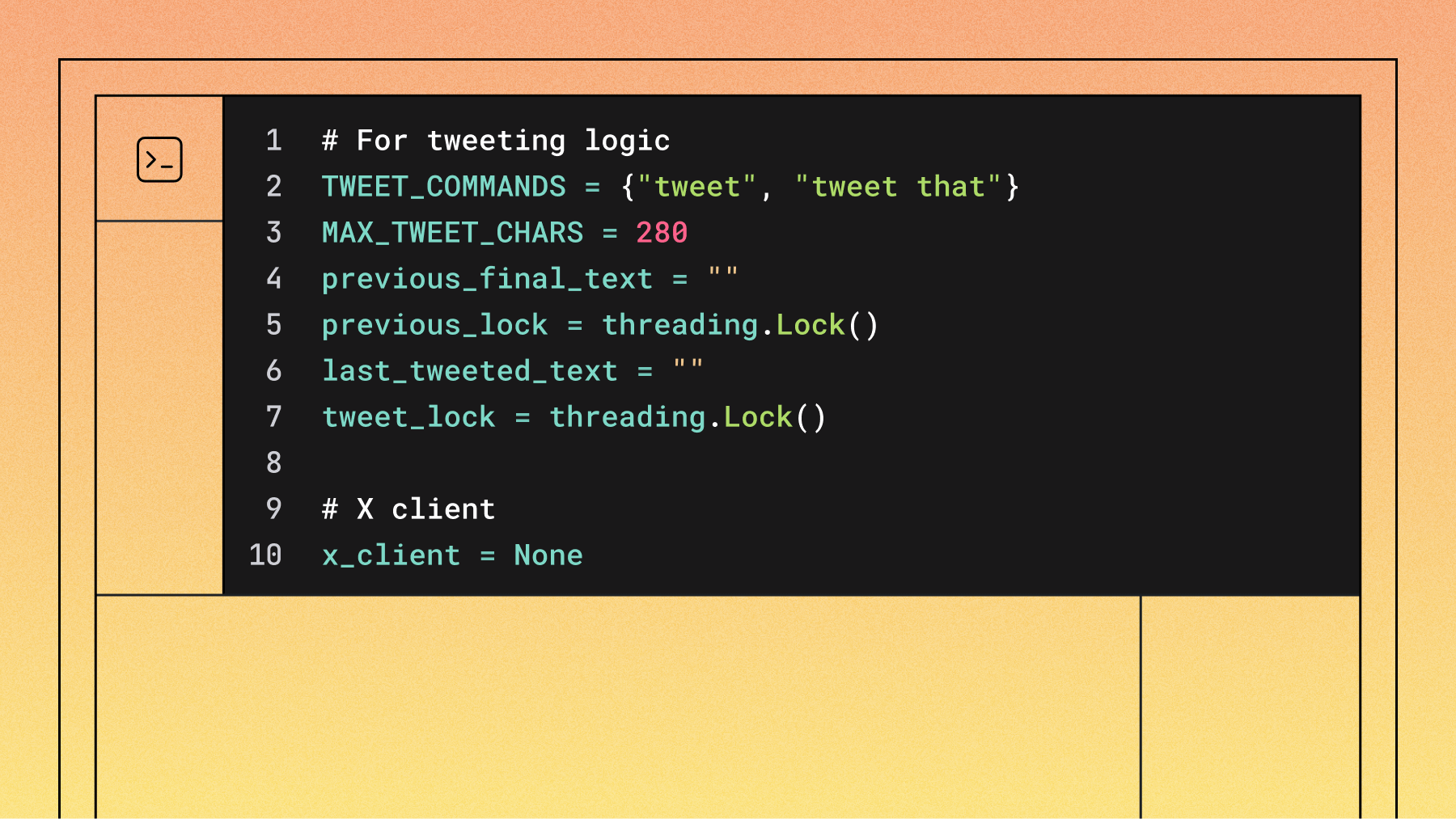

Real-time entity extraction is built into the Universal-3 Pro Streaming model, which provides high accuracy for entities like emails and phone numbers out of the box. The streaming model automatically identifies and extracts entities during transcription when you enable format_turns=true. This differs from asynchronous transcription, where entity extraction is controlled by a separate entity_detection=true parameter.

Here's why the unified approach works better:

- Single processing pass: The AI model transcribes speech and identifies entities simultaneously

- Less error multiplication: When transcription and entity detection are separate, mistakes in the first step create mistakes in the second step

- Faster results: Processing everything at once eliminates the delay between steps

The old way would transcribe "john dot smith at company dot net" and then try to find entities in that text. If the transcription was wrong, the entity extraction would be wrong too. The unified approach reduces these cascading errors because it's designed to understand both speech patterns and entity structures together.

You'll need to set format_turns to true in your configuration to receive structured data, but this doesn't slow down processing when using modern streaming models.
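As a rough illustration of where that setting lives, here is a minimal sketch that builds a streaming connection URL with format_turns enabled. The endpoint, the sample_rate parameter, and the idea of passing options as query parameters are assumptions made for the example; check your provider's streaming documentation for the actual URL and option names.

```python
from urllib.parse import urlencode

# Hypothetical endpoint used only for illustration; substitute the real
# streaming URL from your provider's documentation.
BASE_URL = "wss://streaming.example.com/ws"


def build_stream_url(sample_rate: int = 16000, format_turns: bool = True) -> str:
    """Return a WebSocket URL with the streaming options encoded as query parameters."""
    params = {
        "sample_rate": sample_rate,                 # assumed parameter name
        "format_turns": str(format_turns).lower(),  # parameter named in this article
    }
    return f"{BASE_URL}?{urlencode(params)}"


print(build_stream_url())
```

Keeping the URL construction in one small function makes it easy to toggle formatting on and off while you compare latency in your own environment.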

Real-time vs batch entity extraction

Choosing between real-time and batch processing depends on when you need the extracted information.

Real-time works when you need to act on information during the conversation—like updating a customer record while they're still on the phone. Batch processing is better when accuracy matters more than speed, and you can afford to wait and verify results.

The key difference: real-time gives you one shot to get it right. You can't go back and fix a missed email address after the call ends.

Extracting contact information and other entities from live audio

Contact information requires perfect accuracy because even one wrong character makes an email or phone number useless. Here's how systems handle the unique challenges of capturing this critical data from speech.

Capturing emails and phone numbers

Email addresses and phone numbers create specific problems in speech recognition. When someone says "john dot smith at company dot com," the system must correctly understand that "dot" means a period and "at" means the @ symbol.
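If your application ever receives the spoken form instead of the formatted one, a small fallback normalizer can help. The sketch below is an illustration, not part of any speech API: the function name and the token table are made up for this example, and the table is deliberately incomplete.

```python
import re
from typing import Optional

# Spoken tokens mapped to the characters they stand for (illustrative, not exhaustive).
SPOKEN_SYMBOLS = {"dot": ".", "at": "@", "underscore": "_", "dash": "-", "hyphen": "-"}

# A deliberately simple shape check: local part, "@", and a domain with at least one dot.
EMAIL_SHAPE = re.compile(r"[a-z0-9._+-]+@[a-z0-9-]+(\.[a-z0-9-]+)+")


def normalize_spoken_email(phrase: str) -> Optional[str]:
    """Join the spoken tokens into an address, or return None if the result is not email-shaped."""
    tokens = phrase.lower().split()
    candidate = "".join(SPOKEN_SYMBOLS.get(token, token) for token in tokens)
    return candidate if EMAIL_SHAPE.fullmatch(candidate) else None


print(normalize_spoken_email("john dot smith at company dot com"))
```

This only handles phrases that contain nothing but the address itself. A name that is literally "Dot" or "At" would be converted too, which is one reason to read the result back to the caller.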

Phone numbers get tricky because people say them differently. Some say "five five five, one two three four" while others say "triple five, twelve thirty-four."
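To make the variation concrete, here is a minimal sketch that collapses both speaking styles into the same digit string. It is a hypothetical helper for post-processing text, and it assumes English number words only. Pairs such as "thirty four" are read as 34, which is a guess that will not suit every caller.

```python
import re

DIGITS = {"zero": "0", "oh": "0", "one": "1", "two": "2", "three": "3", "four": "4",
          "five": "5", "six": "6", "seven": "7", "eight": "8", "nine": "9"}
TEENS = {"ten": "10", "eleven": "11", "twelve": "12", "thirteen": "13", "fourteen": "14",
         "fifteen": "15", "sixteen": "16", "seventeen": "17", "eighteen": "18", "nineteen": "19"}
TENS = {"twenty": 20, "thirty": 30, "forty": 40, "fifty": 50,
        "sixty": 60, "seventy": 70, "eighty": 80, "ninety": 90}
REPEATS = {"double": 2, "triple": 3}


def spoken_to_digits(phrase: str) -> str:
    """Convert spoken number words into a digit string, ignoring unknown words."""
    words = re.findall(r"[a-z]+", phrase.lower())  # also splits "thirty-four" into two words
    out = []
    i = 0
    while i < len(words):
        word = words[i]
        nxt = words[i + 1] if i + 1 < len(words) else None
        if word in REPEATS and nxt in DIGITS:
            out.append(DIGITS[nxt] * REPEATS[word])        # "triple five" -> "555"
            i += 2
        elif word in TENS and nxt in DIGITS and DIGITS[nxt] != "0":
            out.append(str(TENS[word] + int(DIGITS[nxt])))  # "thirty four" -> "34"
            i += 2
        elif word in TENS:
            out.append(str(TENS[word]))
            i += 1
        elif word in TEENS:
            out.append(TEENS[word])
            i += 1
        elif word in DIGITS:
            out.append(DIGITS[word])
            i += 1
        else:
            i += 1  # skip filler words such as "my" or "number"
    return "".join(out)


print(spoken_to_digits("five five five, one two three four"))
print(spoken_to_digits("triple five, twelve thirty-four"))
```

Both calls produce the same seven digits, which is the property you want before running any length or format check.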

The keyterms_prompt parameter helps improve accuracy (note that this costs an additional $0.04/hour):

- What it does: For streaming, you provide up to 100 expected terms, such as common email domains or phone number patterns. The async Universal-3 Pro model supports up to 1,000 words.

- Limitation: You can't combine it with regular prompts, so choose one approach

- Dynamic updates: You can update these terms during a live conversation using WebSocket messages
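Because the streaming limit is easy to exceed once you start merging term lists, it can help to clean and cap the list before sending it. The following is a minimal sketch with a hypothetical helper name; it assumes your candidate terms are already ordered from most to least important.

```python
from typing import Iterable, List

MAX_STREAMING_KEYTERMS = 100  # streaming limit stated in this article


def prepare_keyterms(candidates: Iterable[str], limit: int = MAX_STREAMING_KEYTERMS) -> List[str]:
    """Drop blanks and case-insensitive duplicates, then keep the first `limit` terms."""
    seen = set()
    result: List[str] = []
    for term in candidates:
        cleaned = term.strip()
        key = cleaned.lower()
        if cleaned and key not in seen:
            seen.add(key)
            result.append(cleaned)
        if len(result) == limit:
            break
    return result


print(prepare_keyterms(["gmail.com", "Gmail.com ", "", "outlook.com"]))
```

Truncating silently is a design choice; in production you may prefer to log the dropped terms so you can see what the model never received.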

Extracting physical addresses

Physical addresses are more complex because they contain multiple parts that must work together. A complete address needs street number, street name, city, state, and zip code—and people often leave parts out or use abbreviations.

Common address challenges you'll encounter:

- Abbreviations: "St" could mean "Street" or "Saint"

- Missing pieces: People often skip zip codes when speaking

- Informal descriptions: "The building next to Starbucks" isn't a real address

- Regional variations: Local street names are easily misheard

For best results, seed your keyterms_prompt with street names, neighborhoods, and zip codes common in your service area (remembering the 100 keyterms limit for streaming). Always validate extracted addresses against a service like USPS or Google Maps before saving them.

When someone gives an incomplete address, ask specifically for the missing piece rather than requesting the whole address again.
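One way to drive that targeted follow-up is to track which address fields are still empty and ask only about the first gap. This is a minimal sketch: the Address fields and the prompt wording are illustrative choices, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class Address:
    street_number: Optional[str] = None
    street_name: Optional[str] = None
    city: Optional[str] = None
    state: Optional[str] = None
    zip_code: Optional[str] = None


# One focused question per missing component (wording is illustrative).
PROMPTS = {
    "street_number": "What is the street number?",
    "street_name": "What street is that on?",
    "city": "Which city is that in?",
    "state": "Which state is that in?",
    "zip_code": "What is the zip code?",
}


def missing_parts(address: Address) -> List[str]:
    """Return the names of the fields that are still empty, in declaration order."""
    return [f.name for f in fields(address) if not getattr(address, f.name)]


def next_question(address: Address) -> Optional[str]:
    """Return a question about the first missing field, or None if the address is complete."""
    missing = missing_parts(address)
    return PROMPTS[missing[0]] if missing else None


partial = Address(street_number="12", street_name="Main Street", city="Springfield", state="IL")
print(next_question(partial))
```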

Names, dates, and organizations

Beyond contact information, you'll encounter other important entities in conversations.

Person names face challenges from different cultural naming patterns and pronunciation variations. If you know which customers might call, include their names in your keyterms_prompt.

Dates and times often come as relative references like "next Tuesday" or "end of the month." Extract these phrases and resolve them to actual dates in your application code rather than expecting the AI model to do the conversion.
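Resolving those phrases in application code might look like the sketch below. It covers only a handful of phrases, and it assumes "next Tuesday" means the first Tuesday strictly after today. Some speakers mean the Tuesday of the following week, so confirm the resolved date with the caller.

```python
import calendar
from datetime import date, timedelta
from typing import Optional

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]


def resolve_relative_date(phrase: str, today: date) -> Optional[date]:
    """Turn a small set of relative phrases into a concrete date; return None if unrecognized."""
    text = phrase.lower().strip()
    if text == "today":
        return today
    if text == "tomorrow":
        return today + timedelta(days=1)
    if text == "end of the month":
        last_day = calendar.monthrange(today.year, today.month)[1]
        return today.replace(day=last_day)
    if text.startswith("next "):
        name = text[len("next "):]
        if name in WEEKDAYS:
            target = WEEKDAYS.index(name)
            # Days until the next occurrence strictly after today (1 to 7).
            days_ahead = (target - today.weekday() - 1) % 7 + 1
            return today + timedelta(days=days_ahead)
    return None


reference_day = date(2024, 1, 10)  # a Wednesday
print(resolve_relative_date("next Tuesday", reference_day))
print(resolve_relative_date("end of the month", reference_day))
```

Passing today in as an argument, rather than calling date.today() inside the function, keeps the logic testable and lets you apply the caller's time zone upstream.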

Company and organization names with unusual spellings benefit most from keyterms preparation. Names like "Pfizer," "LVMH," or "Raytheon" are frequently misheard without context.

Real-time entity extraction use cases

Real-time entity extraction shines in applications where you need structured data immediately during conversations. Here are the most common scenarios where this technology makes a real difference.

Call center and CRM automation

Call centers use real-time entity extraction to eliminate manual data entry during customer calls. Instead of agents typing while trying to listen, the system automatically captures and saves contact information, order numbers, and issue descriptions.

The most effective approach uses dynamic prompting:

- Caller ID lookup: When a call comes in, look up the customer in your system

- Context injection: Add their known information (email domain, address patterns) to keyterms_prompt

- Real-time capture: Extract updated contact details with improved accuracy

- Direct CRM updates: Save entities straight to customer records without agent intervention

This approach removes the post-call data entry step entirely. Order numbers, product names, and support ticket details flow directly into your systems as customers speak.
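The lookup and context-injection steps above can be sketched as follows. Everything here is hypothetical: the CustomerRecord shape, the in-memory dictionary standing in for a CRM, and the function names exist only to show the flow from caller ID to a keyterms list.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class CustomerRecord:
    name: str
    email: str
    city: str


# In-memory stand-in for a real CRM lookup.
FAKE_CRM: Dict[str, CustomerRecord] = {
    "+15550100": CustomerRecord("Jane Doe", "jane.doe@example.com", "Portland"),
}


def lookup_customer(caller_id: str) -> Optional[CustomerRecord]:
    """Return the known customer for this caller ID, if any."""
    return FAKE_CRM.get(caller_id)


def keyterms_for_caller(caller_id: str) -> List[str]:
    """Build a short keyterms list from what is already known about the caller."""
    record = lookup_customer(caller_id)
    if record is None:
        return []  # unknown caller: fall back to your default keyterms
    domain = record.email.split("@", 1)[1]
    return [record.name, domain, record.city]


print(keyterms_for_caller("+15550100"))
```

The resulting list would then be passed as keyterms_prompt when the session starts, subject to the streaming limit mentioned earlier.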

Meeting transcription and action items

Virtual meetings present different challenges than customer calls. Multiple people speak, entities might reference people not currently talking, and action items often span several conversation turns.

Key patterns for meeting entity extraction:

- Speaker identification: Combine entity detection with speaker diarization to know who said what

- Action item tracking: Look for person name + task + deadline combinations

- Email capture: Grab email addresses mentioned in passing like "send that to sarah at marketing dot com"

- Incomplete information handling: Flag action items missing dates or owners for follow-up
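The last pattern, flagging incomplete action items, is simple to express once an action item has an explicit shape. This minimal sketch assumes a hypothetical ActionItem structure with an owner and a deadline; how you populate it from the transcript is up to your application.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ActionItem:
    task: str
    owner: Optional[str] = None
    deadline: Optional[str] = None


def missing_fields(item: ActionItem) -> List[str]:
    """List which of the required fields were never mentioned."""
    return [name for name in ("owner", "deadline") if getattr(item, name) is None]


def needs_follow_up(items: List[ActionItem]) -> List[Tuple[str, List[str]]]:
    """Return (task, missing fields) pairs for every incomplete action item."""
    return [(item.task, missing_fields(item)) for item in items if missing_fields(item)]


items = [
    ActionItem("Send the Q3 report", owner="Sarah", deadline="next Tuesday"),
    ActionItem("Update the pricing page", owner="Miguel"),
    ActionItem("Book the venue"),
]
for task, gaps in needs_follow_up(items):
    print(task, "is missing", ", ".join(gaps))
```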

The system must remember entities mentioned earlier in the meeting. When someone says "send that report to her," it needs to link back to an email address mentioned previously.

Voice AI and conversational applications

Voice assistants and chatbots need the fastest possible entity extraction because they must respond immediately. The system extracts entities and uses them in the next response without noticeable delay.

Critical considerations for voice applications:

- Confirmation patterns: Always verify important entities by reading them back—"I have your email as john.smith@company.com, is that correct?"

- Interruption handling: When users correct information mid-response, update your entity data immediately

- Memory management: Store extracted entities in your application, not in the AI model

- Error recovery: If extraction fails, ask clarifying questions rather than guessing

The key is balancing speed with accuracy. You need results fast enough for natural conversation flow, but reliable enough that users trust the system with their information.
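The memory-management and interruption-handling points above can be illustrated with a small application-side store. The class and function names are hypothetical. The idea is simply that a correction replaces the current value instead of sitting alongside it, while the old value is kept for auditing.

```python
from typing import Dict, List, Optional, Tuple


class EntityStore:
    """Keeps the latest value per entity type and remembers what was replaced."""

    def __init__(self) -> None:
        self._current: Dict[str, str] = {}
        self._replaced: List[Tuple[str, str]] = []

    def update(self, entity_type: str, value: str) -> None:
        """Store a new value; if it differs from the old one, treat it as a correction."""
        previous = self._current.get(entity_type)
        if previous is not None and previous != value:
            self._replaced.append((entity_type, previous))
        self._current[entity_type] = value

    def get(self, entity_type: str) -> Optional[str]:
        return self._current.get(entity_type)

    def replaced(self) -> List[Tuple[str, str]]:
        return list(self._replaced)


def confirmation_prompt(entity_type: str, value: str) -> str:
    """Build the read-back sentence used to verify an entity with the user."""
    return f"I have your {entity_type} as {value}, is that correct?"


store = EntityStore()
store.update("email", "john.smith@company.com")
store.update("email", "john.smith@company.net")  # user corrected the domain
print(confirmation_prompt("email", store.get("email")))
```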

Challenges specific to real-time entity extraction

Real-time processing creates unique problems that don't exist when analyzing recorded audio. You get one chance to extract entities correctly, with no opportunity for human review or multiple attempts.

Managing latency in production systems

Speed matters in real-time applications because delays break conversation flow. People notice awkward pauses, and slow systems feel broken even when they work correctly.

Here's what affects processing speed:

- Model architecture: Unified models that do transcription and entity extraction together are faster than separate steps

- Audio buffering: Systems need to balance waiting for complete utterances against response speed

- Network latency: API calls add delay, so choose providers with fast response times

The min_turn_silence parameter controls when the system decides someone has finished speaking. Set it too low and you'll cut people off mid-sentence. Set it too high and you'll add unnecessary delays. Most voice applications work best with a setting of about 100 ms.

Remember that users expect immediate responses. If your total processing time exceeds about half a second, people will notice the delay.

Maintaining accuracy with background noise and accents

Audio quality directly affects extraction success. A noisy phone line that makes transcription difficult will also make entity extraction unreliable.

Background noise challenges:

- Multiple speakers: Other people talking can create false entity detections

- Environmental sounds: Traffic, music, or machinery can interfere with speech recognition

- Poor connections: Bad phone lines or low-quality microphones reduce accuracy

Accented speech requires special attention because entity extraction depends on precise pronunciation. Someone with a strong accent saying "john at company dot com" might have their email address missed or misheard.

Use speaker diarization to focus on the main speaker and filter out background voices. For extremely noisy environments, consider audio preprocessing to clean the signal before extraction.

Ensuring accuracy for emails, phones, and addresses

Contact information has zero tolerance for errors. One wrong digit in a phone number or a single typo in an email address makes the entire entity useless.

Building a reliable extraction system requires multiple layers:

- Smart prompting: Use keyterms_prompt with known email domains, phone patterns, and street names from your area (staying within the 100 keyterms limit for streaming)

- Confidence checking: Don't accept low-confidence extractions—ask for confirmation instead

- Format validation: Check that emails follow proper format and phone numbers have the right number of digits

- Confirmation loops: Always read back critical information for user verification

Watch for edge cases like repeated digits ("my PIN is 1111"), which sometimes get only partially captured. Short utterances with low-information content are the riskiest for extraction errors.

Your validation should happen in layers: first the AI model extracts entities, then your code validates formats, and finally users confirm the information before you save it permanently.
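Here is a minimal sketch of the middle layers: a confidence gate followed by a format check, ending with a decision about what to do next. The ExtractedEntity shape, the 0.85 threshold, and the 10 or 11 digit phone rule (which assumes North American numbers) are illustrative assumptions, not values from any API.

```python
import re
from dataclasses import dataclass

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(\.[A-Za-z0-9-]+)+")
CONFIDENCE_THRESHOLD = 0.85  # arbitrary starting point; tune on your own data


@dataclass
class ExtractedEntity:
    kind: str          # "email" or "phone" in this sketch
    text: str
    confidence: float  # assumed to be a score between 0 and 1


def is_well_formed(entity: ExtractedEntity) -> bool:
    """Format validation: check shape only, not whether the address or number exists."""
    if entity.kind == "email":
        return EMAIL_RE.fullmatch(entity.text) is not None
    if entity.kind == "phone":
        digits = re.sub(r"\D", "", entity.text)
        return len(digits) in (10, 11)  # assumes North American numbers
    return True  # no format rule defined for other kinds


def next_step(entity: ExtractedEntity) -> str:
    """Decide whether to ask again or move on to the read-back confirmation."""
    if entity.confidence < CONFIDENCE_THRESHOLD or not is_well_formed(entity):
        return "ask_to_repeat"
    return "read_back_for_confirmation"


print(next_step(ExtractedEntity("phone", "555-123-4567", 0.95)))
print(next_step(ExtractedEntity("email", "john.smith@company", 0.99)))
```

Note that even a well-formed, high-confidence entity still goes to a read-back step rather than straight to storage, which matches the layered order described above.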

Final words

Real-time entity extraction transforms spoken conversations into structured data instantly, eliminating the delay and errors of manual transcription. The process works by combining speech recognition with entity detection in a single step, capturing emails, phone numbers, and addresses as people speak them naturally.

AssemblyAI's Universal-3 Pro Streaming model handles this unified processing approach, delivering both transcripts and extracted entities together with the speed needed for live applications. The keyterms_prompt feature lets you improve accuracy by providing domain-specific vocabulary, while the streaming API enables real-time updates and confirmations during active conversations.

Frequently asked questions

How fast is real-time entity extraction compared to typing the information manually?

Real-time entity extraction captures and formats contact information in under a quarter-second, while manual typing typically takes 10-15 seconds per email or phone number. The extraction happens automatically as people speak, so there's no additional time cost compared to just having a conversation.

Can real-time entity extraction work accurately with phone calls that have poor audio quality?

Poor audio quality affects accuracy, but modern systems like Universal-3 Pro are designed to handle typical phone line noise and compression. For very poor connections or noisy environments, you'll want to implement confirmation prompts where the system reads back extracted entities for verification.

What happens when someone speaks too quickly or mumbles their email address?

When speech is unclear, the confidence score for extracted entities drops. Instead of guessing, well-designed systems should ask for clarification—"I didn't catch your email clearly, could you repeat it?" This prevents incorrect information from being saved.

How do I handle customers who give partial information or correct themselves mid-conversation?

Real-time systems should update entity information immediately when users make corrections. If someone says "my email is john at company dot com—actually, that's dot net," the system needs to replace the extracted entity with the corrected version rather than storing both.

Which entity types work best with real-time extraction from phone conversations?

Email addresses, phone numbers, and names work most reliably because they follow predictable patterns. Physical addresses are more challenging due to abbreviations and missing components, while dates often require clarification because people use relative terms like "next week."

What's the difference between entity detection and PII redaction for voice applications?

Entity detection identifies and captures specific information like emails and phone numbers in real time so you can use them immediately. PII redaction removes or masks sensitive information from completed transcripts for privacy compliance, but it doesn't work on live streams and isn't meant for capturing usable data.
