Daily.co voice agent with AssemblyAI Universal-3 Pro Streaming

Build a WebRTC voice agent using Daily.co for real-time audio transport and the AssemblyAI Universal-3 Pro Streaming model for speech-to-text — without Pipecat.

This is the bare-metal Daily.co integration. It shows exactly how Daily's audio tracks connect to the AssemblyAI WebSocket, which is useful when you want to embed a voice agent into a custom Daily.co application without pulling in a full pipeline framework.

Architecture

    Browser / Phone (Daily.co room participant)
        │ WebRTC audio
        ▼
    Daily.co room
        │ PCM audio via daily-python SDK
        ▼
    This bot (daily-python)
        │ raw PCM bytes
        ▼
    AssemblyAI Universal-3 Pro WebSocket
        │ transcript + neural turn signal
        ▼
    OpenAI GPT-4o → Cartesia TTS → PCM audio
        ▼
    Bot sends audio back into Daily room
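
The last two hops of the diagram (transcript to GPT-4o, reply to Cartesia, audio back into the room) can be read as one per-turn handoff. The sketch below illustrates that shape only. `handle_turn` and its three callables are hypothetical names, not functions from the repository, and the fake stages stand in for the real OpenAI, Cartesia, and daily-python calls.

```python
import asyncio
from typing import Awaitable, Callable


async def handle_turn(
    transcript: str,
    generate_reply: Callable[[str], Awaitable[str]],
    synthesize: Callable[[str], Awaitable[bytes]],
    send_audio: Callable[[bytes], Awaitable[None]],
) -> str:
    """Run one completed user turn through LLM -> TTS -> room playback."""
    reply = await generate_reply(transcript)  # assumed: wraps a GPT-4o call
    pcm = await synthesize(reply)             # assumed: wraps a Cartesia TTS call
    await send_audio(pcm)                     # assumed: writes audio into the Daily room
    return reply


async def demo() -> list:
    """Exercise handle_turn with in-memory stand-ins for each stage."""
    sent = []

    async def fake_llm(text: str) -> str:
        return f"You said: {text}"

    async def fake_tts(text: str) -> bytes:
        return text.encode("utf-8")  # stand-in for real PCM audio

    async def fake_send(pcm: bytes) -> None:
        sent.append(pcm)

    await handle_turn("hello", fake_llm, fake_tts, fake_send)
    return sent


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Passing the stages in as callables keeps the turn logic testable without network access; a real bot would bind them to its own clients.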

Prerequisites

- Python 3.11+

- AssemblyAI API key

- Daily.co API key

- OpenAI API key

- Cartesia API key

Quick start

    git clone https://github.com/kelsey-aai/voice-agent-dailyco-universal-3-pro
    cd voice-agent-dailyco-universal-3-pro
    python -m venv .venv && source .venv/bin/activate
    pip install -r requirements.txt
    cp .env.example .env
    # Edit .env with your API keys

    # Create a room and bot token
    python create_room.py

    # Start the bot
    python bot.py --room-url https://yourname.daily.co/room --token <bot-token>

Open the room URL in your browser and start speaking.

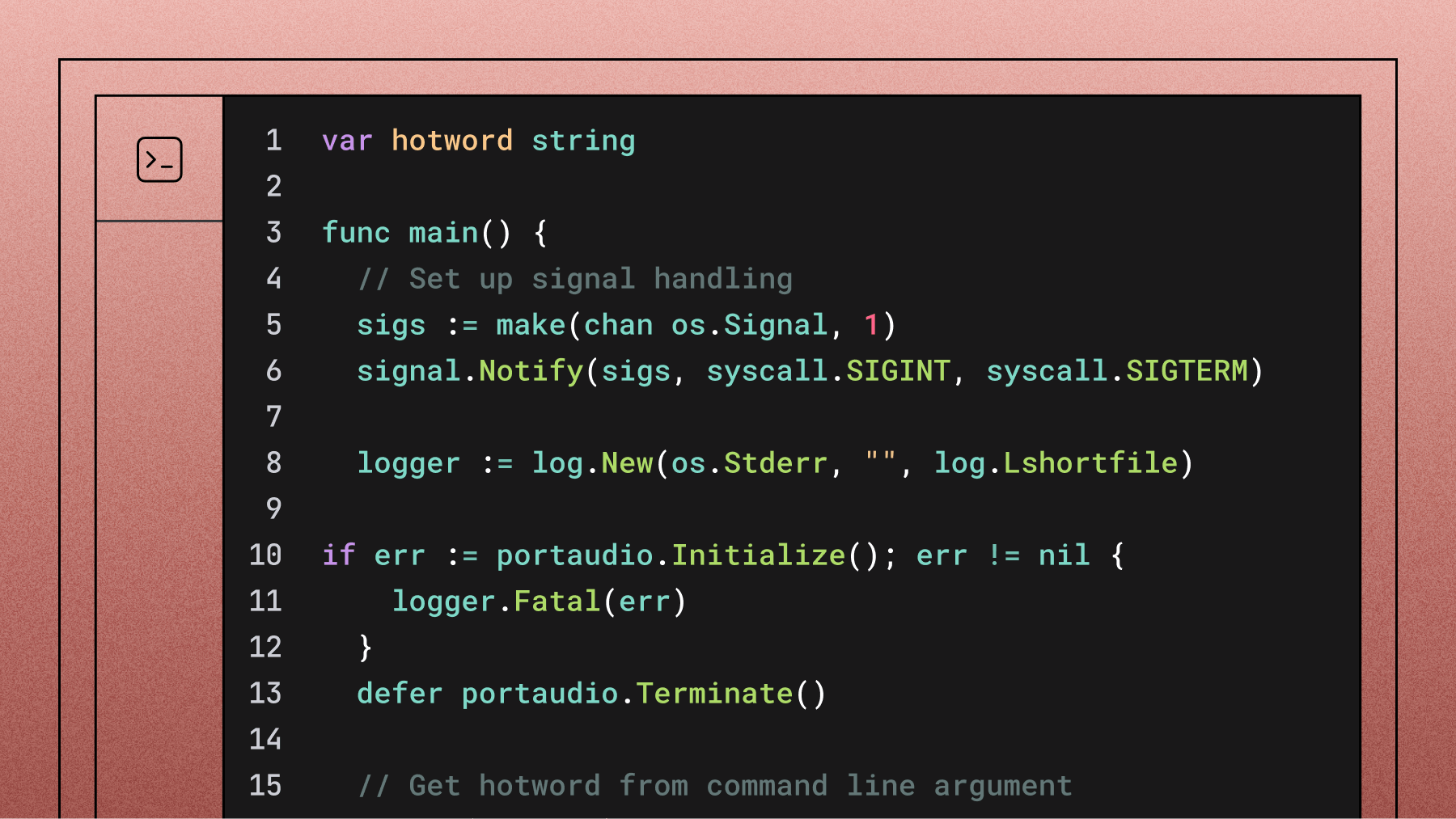

How audio flows

Daily.co calls on_audio_data on your event handler whenever a remote participant speaks. The bot forwards those raw PCM bytes directly to the AssemblyAI WebSocket; no conversion is needed at 16 kHz:

    def on_audio_data(self, participant_id, audio_data, sample_rate, num_channels):
        # Forward each PCM chunk to AssemblyAI while the WebSocket is open.
        if self.aai_ws and not self.aai_ws.closed:
            asyncio.create_task(self.aai_ws.send(audio_data))

When Universal-3 Pro detects an end of turn, the bot generates a response with GPT-4o, synthesizes audio with Cartesia, and injects it back into the room via client.send_audio().
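
The end-of-turn check described above might look like the following sketch. It assumes the WebSocket delivers JSON messages carrying `type`, `end_of_turn`, and `transcript` fields. Those field names are assumptions here and should be verified against the AssemblyAI streaming API reference. `completed_turn_text` is a hypothetical helper, not part of the repository.

```python
import json
from typing import Optional


def completed_turn_text(raw_message: str) -> Optional[str]:
    """Return the transcript if the message marks a finished turn, else None.

    Assumed message shape (not confirmed by this tutorial):
    {"type": "Turn", "end_of_turn": true, "transcript": "..."}
    """
    try:
        message = json.loads(raw_message)
    except json.JSONDecodeError:
        return None  # ignore anything that is not valid JSON
    if not isinstance(message, dict):
        return None
    if message.get("type") != "Turn" or not message.get("end_of_turn"):
        return None  # partial transcript or a non-turn message
    text = (message.get("transcript") or "").strip()
    return text or None  # treat an empty transcript as "nothing to answer"


if __name__ == "__main__":
    partial = '{"type": "Turn", "end_of_turn": false, "transcript": "book a"}'
    final = '{"type": "Turn", "end_of_turn": true, "transcript": "book a table"}'
    print(completed_turn_text(partial))
    print(completed_turn_text(final))
```

Only a non-None result would trigger the GPT-4o and Cartesia steps; partial transcripts are dropped so the bot does not answer mid-sentence.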

AssemblyAI connection parameters
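
The connection URL is the streaming endpoint plus a query string of model, audio format, and turn-detection settings. As a sketch, the same URL can be assembled with `urllib.parse.urlencode`, which percent-encodes values instead of relying on manual concatenation. `build_aai_ws_url` is a hypothetical helper; the parameter names and values mirror the constant used in this section, and the 16000 default is an assumption based on the 16 kHz audio mentioned earlier.

```python
from urllib.parse import urlencode

AAI_WS_ENDPOINT = "wss://streaming.assemblyai.com/v3/ws"


def build_aai_ws_url(api_key: str, sample_rate: int = 16000) -> str:
    """Build the streaming URL from a dict of query parameters."""
    params = {
        "speech_model": "u3-rt-pro",
        "encoding": "pcm_s16le",
        "sample_rate": sample_rate,
        "end_of_turn_confidence_threshold": 0.4,
        "min_turn_silence": 300,
        "token": api_key,
    }
    # urlencode stringifies and percent-encodes each value.
    return f"{AAI_WS_ENDPOINT}?{urlencode(params)}"


if __name__ == "__main__":
    # Placeholder key for illustration only.
    print(build_aai_ws_url("YOUR_KEY"))
```

The repository's own constant follows.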

    AAI_WS_URL = (
        "wss://streaming.assemblyai.com/v3/ws"
        "?speech_model=u3-rt-pro"
        "&encoding=pcm_s16le"
        f"&sample_rate={SAMPLE_RATE}"
        "&end_of_turn_confidence_threshold=0.4"
        "&min_turn_silence=300"
        f"&token={ASSEMBLYAI_API_KEY}"
    )

Why direct Daily.co instead of Pipecat?

Pipecat is excellent for production voice agents with complex pipelines. This tutorial is for when you want the primitives — useful if you're embedding a voice agent into a custom Daily.co app and don't want the full framework overhead.

If you do want Pipecat's pipeline abstractions (VAD, context management, interruption handling), see Tutorial 02: Pipecat + Universal-3 Pro Streaming.
