Retell AI + AssemblyAI: custom LLM and post-call analytics

Integrate AssemblyAI with Retell AI for post-call intelligence. This tutorial covers two integration patterns:

- Custom LLM WebSocket — bring your own LLM (OpenAI, Claude, Groq) to Retell's voice platform

- Post-call speech understanding — run every Retell call recording through AssemblyAI's batch API for speaker labels, sentiment analysis, LeMUR action items, and more
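To make the first pattern concrete, here is a minimal sketch of the message handling a custom LLM WebSocket endpoint needs. It is not the repository's code. The field names (`interaction_type`, `response_id`, `transcript`, `content_complete`, `end_call`, `response_type`) and the `agent`/`user` roles are assumptions modeled on Retell's custom LLM protocol, and the helper names are hypothetical. The LLM call itself is left out.

```python
import json

# Assumed mapping from Retell transcript roles to chat-completion roles.
ROLE_MAP = {"agent": "assistant", "user": "user"}


def transcript_to_messages(transcript, system_prompt):
    """Convert Retell-style utterances into chat-completion messages."""
    messages = [{"role": "system", "content": system_prompt}]
    for utterance in transcript:
        role = ROLE_MAP.get(utterance.get("role"), "user")
        messages.append({"role": role, "content": utterance.get("content", "")})
    return messages


def build_response(event, reply_text):
    """Build the JSON frame to send back, or None when no reply is due."""
    if event.get("interaction_type") not in ("response_required", "reminder_required"):
        return None  # e.g. transcript-only updates need no spoken reply
    return json.dumps({
        "response_type": "response",           # assumed field
        "response_id": event["response_id"],   # echo the id Retell sent
        "content": reply_text,
        "content_complete": True,
        "end_call": False,
    })


# Usage: feed `messages` to your LLM, then send `frame` over the socket.
event = {
    "interaction_type": "response_required",
    "response_id": 1,
    "transcript": [
        {"role": "agent", "content": "Hi, how can I help?"},
        {"role": "user", "content": "I need to reset my password."},
    ],
}
messages = transcript_to_messages(event["transcript"], "You are a helpful phone agent.")
frame = build_response(event, "Sure, I can help with that.")
```

Keeping these two steps as pure functions makes them easy to test without a live call. The WebSocket route only has to parse each incoming frame, call the LLM with `messages`, and send `frame` if it is not `None`.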

Why this pattern?

Retell's native speech-to-text supports Azure and Deepgram for real-time transcription. AssemblyAI's strength is post-call speech understanding: the batch transcription API and LeMUR add speaker labels, sentiment analysis, chapters, entity detection, and action items on top of each call recording.

Architecture

    Caller ──► Retell AI
                  │  real-time audio (Deepgram STT by default)
                  ▼
          Custom LLM WebSocket (/llm-websocket)
                  │  OpenAI GPT-4o response
                  ◄── Retell handles TTS + audio playback
                  │
              Call ends
                  │
          AssemblyAI Audio Intelligence
                  │  recording_url from Retell API
                  ▼
          Speaker-labeled transcript, sentiment, chapters,
          entity detection, LeMUR action items

Quick start

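    git clone https://github.com/kelsey-aai/voice-agent-retell-assemblyai
    cd voice-agent-retell-assemblyai
    pip install -r requirements.txt
    cp .env.example .env
    # Edit .env with your API keys

    # Terminal 1: start the server (keeps running)
    uvicorn server:app --host 0.0.0.0 --port 8000

    # Terminal 2: expose the server with a public URL (keeps running)
    ngrok http 8000

    # Terminal 3: create the Retell agent
    curl -X POST http://localhost:8000/create-agent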

Configure your Retell phone number to use the new agent, then call it.
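Once a call finishes, the post-call step needs the recording's URL. One way to get it is a webhook. The sketch below assumes Retell posts a JSON body with an `event` field and a `call` object containing `call_id` and `recording_url`. These field names and the `call_ended` event name are assumptions, the function name is hypothetical, and the repository may fetch the call from the Retell API instead.

```python
import json


def recording_url_from_webhook(raw_body):
    """Return (call_id, recording_url) for a finished call, else None."""
    payload = json.loads(raw_body)
    if payload.get("event") != "call_ended":  # assumed event name
        return None
    call = payload.get("call") or {}
    url = call.get("recording_url")
    if not url:
        return None  # recording not available (yet)
    return call.get("call_id"), url


# Usage with a hypothetical payload:
body = json.dumps({
    "event": "call_ended",
    "call": {"call_id": "abc123", "recording_url": "https://example.com/rec.wav"},
})
result = recording_url_from_webhook(body)
```

Returning `None` for other events or missing recordings lets the caller skip transcription without raising an error.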

What AssemblyAI's LeMUR extracts

After each call, LeMUR automatically answers four questions about the conversation:

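    import assemblyai as aai

    # `transcript` is the completed aai.Transcript for the call recording
    lemur_result = transcript.lemur.task(
        prompt=(
            "Based on this call transcript, list:\n"
            "1. The main customer issue or request\n"
            "2. Whether the issue was resolved (yes/no/partial)\n"
            "3. Any follow-up action items\n"
            "4. Customer sentiment overall (positive/neutral/negative)"
        ),
        final_model=aai.LemurModel.claude3_5_sonnet,
    )
    print(lemur_result.response)  # plain-text answer to the four questions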

This runs over the full transcript with speaker labels — not just a slice. You get accurate attribution, not a summary of summaries.
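LeMUR returns free text, so storing the four answers as separate fields needs a parsing step. The sketch below is one way to do that, assuming the model follows the numbered `1.` to `4.` format the prompt asks for. The field names and the function name are hypothetical, and a model that deviates from the format would need a stricter prompt or a fallback.

```python
import re

FIELDS = ("issue", "resolved", "action_items", "sentiment")

# Each numbered item runs until the next line starting with 1-4 or the end of text.
ITEM = re.compile(r"^\s*([1-4])[.)]\s*(.*?)(?=^\s*[1-4][.)]\s|\Z)", re.S | re.M)


def parse_numbered_answer(text):
    """Split a '1. ... 2. ...' style answer into a dict keyed by FIELDS."""
    result = {}
    for number, body in ITEM.findall(text):
        result[FIELDS[int(number) - 1]] = body.strip()
    return result


# Usage with a hypothetical LeMUR response:
sample = (
    "1. Password reset failed\n"
    "2. yes\n"
    "3. Send confirmation email\n"
    "   Follow up on Friday\n"
    "4. positive"
)
answers = parse_numbered_answer(sample)
```

Items missing from the response are simply absent from the returned dict, so callers can detect an incomplete answer by checking which keys are present. A numbered sub-list inside an answer would confuse this pattern.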

Customizing the analytics

Swap the LeMUR prompt for your use case:

    # Sales calls
    prompt = "Did the prospect agree to a demo? What objections were raised? Next steps?"

    # Healthcare
    prompt = "What symptoms did the patient report? What instructions were given? Any red flags?"

    # Support
    prompt = "What product issue was reported? What troubleshooting steps were taken? Resolved?"
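If one server handles several kinds of calls, the prompts can live in a lookup table instead of being edited in place. This is a small sketch of that idea. The `PROMPTS` table and `prompt_for` helper are hypothetical names, not part of the repository.

```python
PROMPTS = {
    "sales": "Did the prospect agree to a demo? What objections were raised? Next steps?",
    "healthcare": "What symptoms did the patient report? What instructions were given? Any red flags?",
    "support": "What product issue was reported? What troubleshooting steps were taken? Resolved?",
}


def prompt_for(use_case, default="support"):
    """Return the LeMUR prompt for a use case, falling back to the default."""
    return PROMPTS.get(use_case.strip().lower(), PROMPTS[default])


# Usage: pick the prompt per call, e.g. from agent metadata.
prompt = prompt_for("Sales")
```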

Post-call intelligence features

The full AssemblyAI Audio Intelligence config runs on every recording:

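    config = aai.TranscriptionConfig(
        speaker_labels=True,      # who said what
        sentiment_analysis=True,  # per-sentence sentiment
        auto_chapters=True,       # chaptered summary of the call
        entity_detection=True,    # names, organizations, dates, and similar
        content_safety=True,      # flag sensitive content
        iab_categories=True,      # topic detection
        # summarization=True cannot be combined with auto_chapters in the
        # same request; enable one or the other.
    )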
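With speaker labels and sentiment analysis both enabled, a useful first aggregate is sentiment counts per speaker. The sketch below works on plain dicts shaped like the sentiment results in the transcript JSON, assuming each item carries a `speaker` label and a `sentiment` value such as `POSITIVE`, `NEUTRAL`, or `NEGATIVE`. The function name is hypothetical.

```python
from collections import Counter, defaultdict


def sentiment_by_speaker(results):
    """Count sentiment labels per speaker from sentiment-analysis results."""
    counts = defaultdict(Counter)
    for item in results:
        speaker = item.get("speaker") or "unknown"
        counts[speaker][item["sentiment"]] += 1
    return {speaker: dict(counter) for speaker, counter in counts.items()}


# Usage with hypothetical results:
sample_results = [
    {"speaker": "A", "sentiment": "POSITIVE", "text": "Thanks for calling."},
    {"speaker": "B", "sentiment": "NEUTRAL", "text": "I have a billing question."},
    {"speaker": "A", "sentiment": "NEGATIVE", "text": "Sorry, that failed."},
]
summary = sentiment_by_speaker(sample_results)
```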

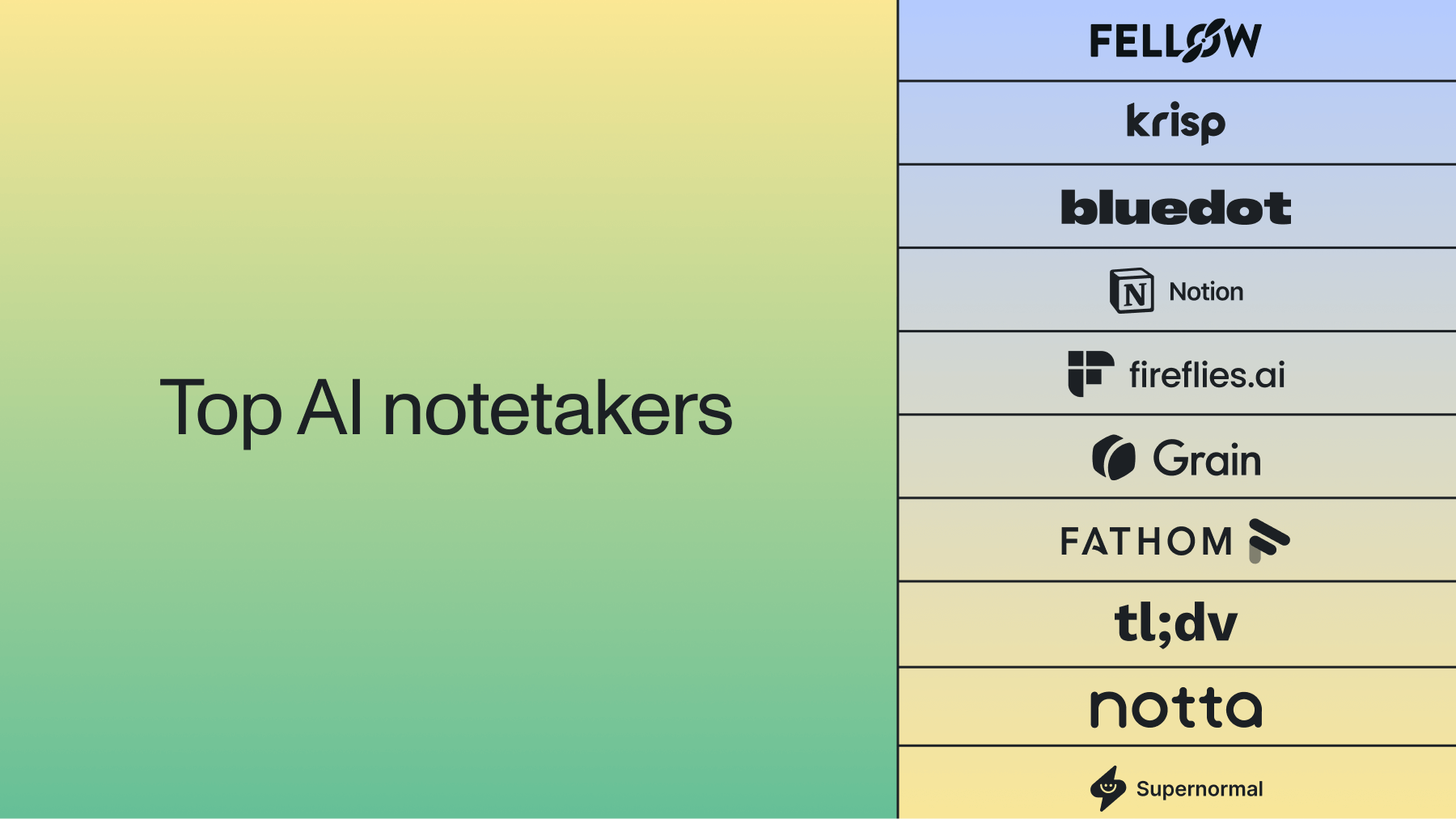

Related tutorials

- Tutorial 04: Twilio + Universal-3 Pro Streaming — build a custom phone agent with full control over the real-time audio pipeline

- Tutorial 03: Vapi + AssemblyAI — another managed voice platform with a similar integration pattern
