How do I build an AI medical scribe using speech-to-text?

Building an AI medical scribe requires solving complex technical challenges that go far beyond basic speech recognition. You need specialized systems that handle medical terminology, process multiple speakers accurately, and maintain the precision required for clinical documentation where errors can directly impact patient care.

This guide walks you through the complete technical architecture—from capturing audio in noisy clinical environments to generating structured SOAP notes that integrate seamlessly with your EHR system. You'll understand the specific requirements for medical-grade speech-to-text, the role of Large Language Models in clinical note generation, and the critical compliance considerations that separate healthcare applications from general transcription systems.

What is an AI medical scribe?

An AI medical scribe is software that listens to your patient conversations and automatically creates medical notes. This means it captures everything said during the appointment and turns it into structured documentation like SOAP notes without you having to type anything.

The system works in the background while you focus on your patient. Instead of looking at a computer screen or writing notes, you can maintain eye contact and have natural conversations while the AI handles all the documentation.

These systems connect directly to your Electronic Health Record (EHR) so draft notes land in the patient's chart without manual entry. You review and approve each note before it becomes part of the official medical record.

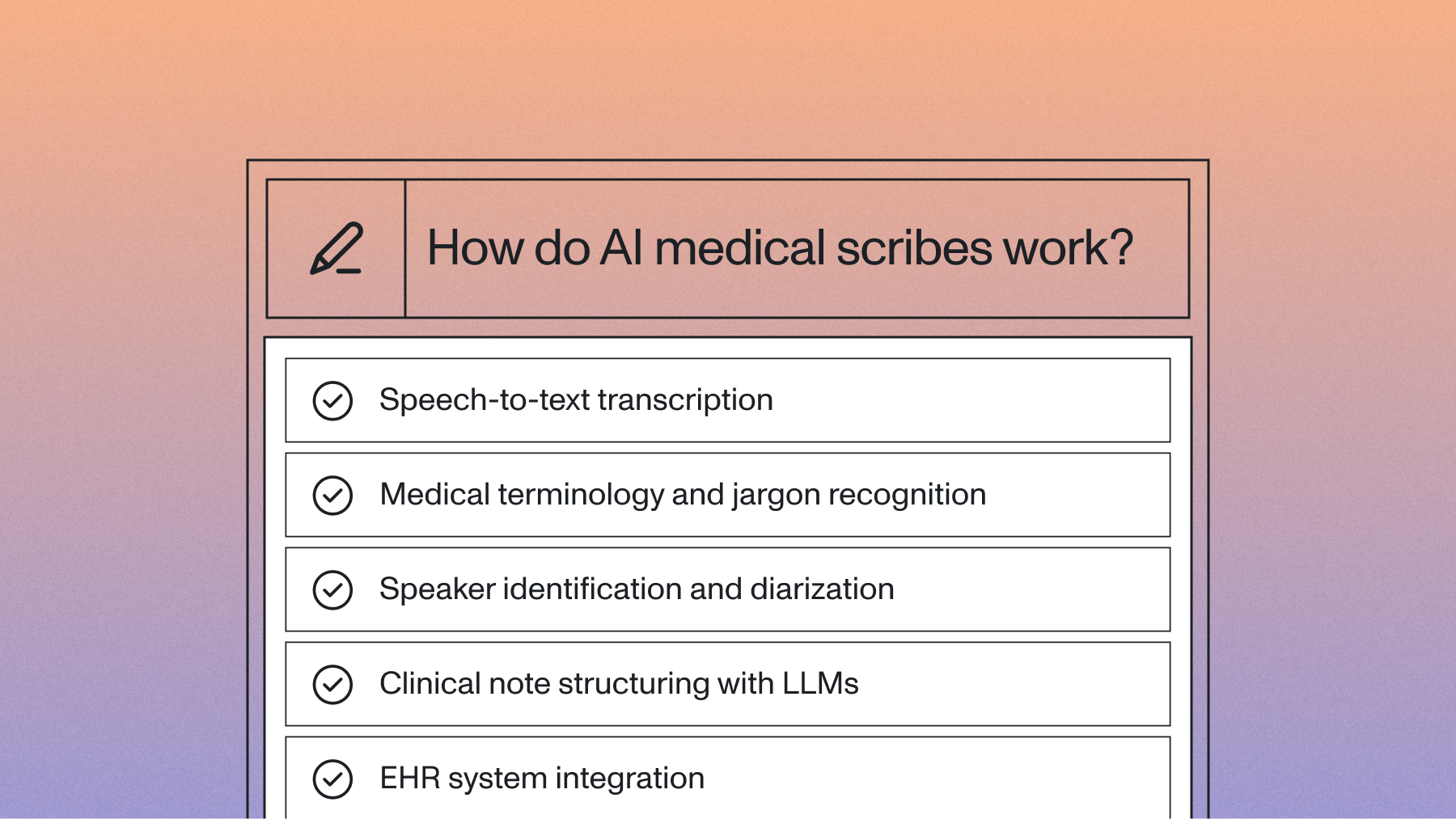

How do AI medical scribes work?

An AI medical scribe chains together several connected stages: transcription, medical term recognition, speaker diarization, note structuring, and EHR integration. If any stage fails, your entire system breaks down.

Let's break down each step so you understand what's actually happening.

Speech-to-text transcription

Speech-to-text systems convert your conversations into written words as you're speaking. This means the text appears in real time, not after the appointment ends.

Your medical environment creates unique challenges that regular speech recognition can't handle:

- Medical terms: Words like "hydroxychloroquine" or "esophagogastroduodenoscopy" that normal systems completely butcher

- Multiple voices: The system needs to tell the difference between you, your patient, nurses, and family members

- Background noise: Medical equipment, hallway chatter, and air conditioning all interfere with audio

Regular speech-to-text models trained on everyday conversations often fail badly on medical content. When you say "prescribe metformin 500mg," a general model might write "met for men 500 message," which puts a dangerous error into your documentation.

Medical terminology and jargon recognition

Medical speech recognition needs special training because healthcare uses thousands of terms that rarely appear in normal conversation. When you say "patient presents with intermittent claudication," a general model has seldom, if ever, seen "claudication" and tends to substitute a more common word that sounds similar.

Drug names create the biggest problems. Here's what goes wrong with regular systems:

- "Celebrex" becomes "Celexa": one is an anti-inflammatory pain reliever, the other an antidepressant

- "Zantac" turns into "Xanax": one treats heartburn, the other anxiety

- "Clonidine" gets confused with "Klonopin": one is a blood pressure medication, the other treats seizures and panic disorder
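One way to guard against these mix-ups is to flag any transcribed drug name that has a close neighbor in your formulary, so the reviewer double-checks it. The sketch below is a minimal illustration, not a production safety check. The `FORMULARY` list, the `confusable_with` helper, and the 0.5 threshold are all assumptions made for this example, and spelling similarity from Python's `difflib` is only a crude stand-in for how names actually sound.

```python
from difflib import SequenceMatcher

# Hypothetical mini-formulary for illustration; a real system would load a
# maintained drug list rather than hard-code names.
FORMULARY = ["Celebrex", "Celexa", "Zantac", "Xanax", "Clonidine", "Klonopin", "Metformin"]


def similarity(a: str, b: str) -> float:
    """Spelling similarity between 0 and 1 (a rough proxy for sound-alike risk)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def confusable_with(drug: str, threshold: float = 0.5) -> list[str]:
    """Return other formulary entries whose spelling is close to `drug`.

    The threshold is an assumed value tuned to this tiny list, not a standard.
    """
    return [
        other
        for other in FORMULARY
        if other.lower() != drug.lower() and similarity(drug, other) >= threshold
    ]


if __name__ == "__main__":
    for name in ("Celebrex", "Zantac", "Clonidine", "Metformin"):
        print(name, "->", confusable_with(name))
```

Any name that comes back with a non-empty list can be highlighted in the draft note so the clinician confirms the medication before signing.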

Your AI medical scribe must also handle medical abbreviations correctly. When you say "BP one-forty over ninety," it needs to write "blood pressure 140/90" in proper medical format.
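The blood pressure example can be sketched as a small post-processing step on the transcript. This is a minimal sketch that assumes the transcript arrives as plain text with numbers spelled out. The helper names `spoken_to_int` and `normalize_bp` are made up for this example, and the parser only covers colloquial three-digit readings such as "one-forty" or "one oh five". Anything it cannot parse is left unchanged rather than guessed.

```python
import re

UNITS = {"zero": 0, "oh": 0, "one": 1, "two": 2, "three": 3, "four": 4,
         "five": 5, "six": 6, "seven": 7, "eight": 8, "nine": 9}
TEENS = {"ten": 10, "eleven": 11, "twelve": 12, "thirteen": 13, "fourteen": 14,
         "fifteen": 15, "sixteen": 16, "seventeen": 17, "eighteen": 18, "nineteen": 19}
TENS = {"twenty": 20, "thirty": 30, "forty": 40, "fifty": 50,
        "sixty": 60, "seventy": 70, "eighty": 80, "ninety": 90}


def spoken_to_int(phrase: str) -> int:
    """Convert readings like 'one-forty', 'eighty five', or 'one hundred' to an int."""
    tokens = re.split(r"[\s-]+", phrase.strip().lower())
    has_hundred = "hundred" in tokens
    words = [t for t in tokens if t not in ("hundred", "and")]
    hundreds = 0
    # A leading single digit followed by more words is read as the hundreds digit.
    if words and words[0] in UNITS and (has_hundred or len(words) > 1):
        hundreds, words = UNITS[words[0]], words[1:]
    value = 0
    for word in words:
        if word in TENS:
            value += TENS[word]
        elif word in TEENS:
            value += TEENS[word]
        elif word in UNITS:
            value += UNITS[word]
        else:
            raise ValueError(f"unrecognized number word: {word!r}")
    return hundreds * 100 + value


BP_PATTERN = re.compile(
    r"\b(?:bp|blood pressure)\s+(?:(?:is|of)\s+)?([a-z\s-]+?)\s+over\s+([a-z\s-]+?)(?=[.,;]|$)",
    re.IGNORECASE,
)


def normalize_bp(text: str) -> str:
    """Rewrite spoken blood pressure readings as 'blood pressure SYS/DIA'."""
    def replace(match: re.Match) -> str:
        try:
            systolic = spoken_to_int(match.group(1))
            diastolic = spoken_to_int(match.group(2))
        except ValueError:
            return match.group(0)  # leave text untouched when parsing fails
        return f"blood pressure {systolic}/{diastolic}"

    return BP_PATTERN.sub(replace, text)


if __name__ == "__main__":
    print(normalize_bp("BP one-forty over ninety"))
```

Falling back to the original text on a parse failure is a deliberate choice here: in clinical notes, an unnormalized phrase is safer than a wrong number.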

Speaker identification and diarization

Speaker diarization is technology that figures out who's talking at each moment. This matters because you need to know whether symptoms came from the patient or observations came from you.

The system learns voice patterns to keep track of different speakers even when people interrupt each other. When your patient says "my chest hurts when I breathe" and you respond "I hear crackling in your left lung," the AI must correctly label who said what.

This gets complicated when nurses pop in to report vitals or family members provide medical history. Without accurate speaker separation, critical information gets misattributed in your final notes.
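To make the attribution step concrete, here is a minimal sketch of turning diarized words into labeled speaker turns. It assumes the transcription step returns word-level results that carry a speaker label and timestamps. The `Word` and `Turn` shapes and the role mapping are hypothetical and do not reflect any particular vendor's response format.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass
class Word:
    text: str
    speaker: str   # diarization label such as "A" or "B" (assumed format)
    start_ms: int
    end_ms: int


@dataclass
class Turn:
    speaker: str
    text: str
    start_ms: int
    end_ms: int


def words_to_turns(words: list[Word]) -> list[Turn]:
    """Merge consecutive words from the same speaker into one turn."""
    turns = []
    for speaker, group in groupby(words, key=lambda w: w.speaker):
        chunk = list(group)
        turns.append(Turn(speaker, " ".join(w.text for w in chunk),
                          chunk[0].start_ms, chunk[-1].end_ms))
    return turns


def label_roles(turns: list[Turn], roles: dict[str, str]) -> list[str]:
    """Attach human-readable roles; unmapped speakers are flagged, not guessed."""
    return [f"{roles.get(t.speaker, 'Unknown speaker')}: {t.text}" for t in turns]


if __name__ == "__main__":
    words = [
        Word("my", "A", 0, 200), Word("chest", "A", 200, 500), Word("hurts", "A", 500, 900),
        Word("I", "B", 1200, 1300), Word("hear", "B", 1300, 1600), Word("crackling", "B", 1600, 2200),
    ]
    for line in label_roles(words_to_turns(words), {"A": "Patient", "B": "Doctor"}):
        print(line)
```

Labeling unmapped speakers as "Unknown speaker" keeps a nurse's or family member's remarks visible in the transcript without crediting them to the patient or the clinician.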

Clinical note structuring with LLMs

Large Language Models (LLMs) take your conversation transcript and organize it into proper SOAP note format. The LLM pulls out symptoms, physical exam findings, diagnoses, and treatment plans from natural conversation flow.

Here's how it works. This conversation:

- Patient: "I've had this headache for three days. Mostly on the right side."

- Doctor: "Any nausea or vision changes?"

- Patient: "Some nausea, yes. No vision problems."

- Doctor: "Your blood pressure is 150/95. This looks like a tension headache related to your hypertension."

Becomes this organized note:

- Subjective: Patient reports 3-day right-sided headache with nausea, denies vision changes

- Objective: BP 150/95, elevated

- Assessment: Tension headache, likely hypertension-related

- Plan: [Based on your treatment discussion]

You still need to review and edit these AI-generated notes. The technology assists but doesn't replace your clinical judgment.
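The structuring step can be sketched without committing to a specific model provider. In the example below, `complete` is a hypothetical callable that sends a prompt to whichever LLM you use and returns its raw text reply. `SoapNote`, `build_prompt`, `parse_soap`, and `draft_note` are also names invented for this sketch. Asking for JSON and validating it before use is one reasonable design, not the only one.

```python
import json
from dataclasses import dataclass
from typing import Callable

FIELDS = ("subjective", "objective", "assessment", "plan")

PROMPT_HEADER = (
    "Summarize the clinical conversation below as a SOAP note. "
    "Reply with a JSON object containing exactly these string fields: "
    "subjective, objective, assessment, plan. "
    "Use only information stated in the transcript."
)


@dataclass
class SoapNote:
    subjective: str
    objective: str
    assessment: str
    plan: str


def build_prompt(transcript: str) -> str:
    """Combine fixed instructions with the speaker-labeled transcript."""
    return PROMPT_HEADER + "\n\nTranscript:\n" + transcript


def parse_soap(raw: str) -> SoapNote:
    """Validate the model's reply instead of trusting its shape."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [k for k in FIELDS if not isinstance(data.get(k), str)]
    if missing:
        raise ValueError(f"missing or non-string fields: {missing}")
    return SoapNote(**{k: data[k] for k in FIELDS})


def draft_note(transcript: str, complete: Callable[[str], str]) -> SoapNote:
    """Produce a draft note; `complete` wraps your LLM call (assumed interface)."""
    return parse_soap(complete(build_prompt(transcript)))
```

Because `draft_note` raises on a malformed reply, a bad generation surfaces as an error you can retry or escalate instead of a silently incomplete note. The result is still a draft for clinician review.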

EHR system integration

The final step pushes your structured notes directly into your Electronic Health Record through API connections. This eliminates copying and pasting between different systems.

Each EHR expects information formatted differently. Your AI needs to format notes for Epic differently than for Cerner (now Oracle Health) or other systems. Successful integration means you click one button to transfer the AI's documentation into your patient's official record.
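As one illustration of what "formatted for the EHR" can mean, the sketch below wraps a note in an HL7 FHIR R4 `DocumentReference` resource. The original text does not name FHIR, so treat this as an assumption: it is a widely used interoperability standard, but each EHR vendor decides which resources and fields it accepts, and you would need to check that vendor's API documentation. The function name is hypothetical, and the actual upload (authentication, endpoint) is deliberately left out.

```python
import base64
import json


def build_document_reference(patient_id: str, note_text: str) -> dict:
    """Build a FHIR R4 DocumentReference payload carrying a plain-text note."""
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        # "preliminary" signals the note still awaits clinician sign-off.
        "docStatus": "preliminary",
        "type": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "11506-3",
                "display": "Progress note",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "content": [{
            "attachment": {"contentType": "text/plain", "data": encoded}
        }],
    }


if __name__ == "__main__":
    payload = build_document_reference("example-123", "S: 3-day headache\nO: BP 150/95")
    print(json.dumps(payload, indent=2))
```

Keeping the payload builder separate from the network call lets you swap in a vendor-specific format later without touching the rest of the pipeline.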

What are the technical requirements for medical speech-to-text?

Medical transcription needs much higher standards than regular speech recognition because mistakes can harm patients. A wrong medication name or incorrect dosage could be dangerous.

Medical transcription accuracy standards

Your medical documentation needs near-perfect accuracy on clinical terms because errors directly affect patient care. A transcription that changes "15mg" to "50mg" could cause a dangerous overdose. Confusing "hypertension" with "hypotension" completely reverses the diagnosis.

You need speech-to-text systems that demonstrate accuracy on medical benchmark datasets before you use them. These tests check recognition of drug names, body parts, procedures, and medical abbreviations for your specific specialty.
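If you want to run such a check on your own recordings, two simple measures are word error rate (WER) and recall on a list of critical terms. The sketch below assumes you have a human-verified reference transcript for each recording. The function names and the example term list are made up for illustration, and the tokenizer is deliberately simple.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref, hyp = tokenize(reference), tokenize(hypothesis)
    if not ref:
        raise ValueError("reference transcript is empty")
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,                           # deletion
                curr[j - 1] + 1,                       # insertion
                prev[j - 1] + (ref_word != hyp_word),  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


def term_recall(reference: str, hypothesis: str, terms: set[str]) -> float:
    """Share of critical terms in the reference that survive transcription."""
    ref_terms = {t for t in tokenize(reference) if t in terms}
    if not ref_terms:
        raise ValueError("no critical terms found in the reference")
    hyp_tokens = set(tokenize(hypothesis))
    return len(ref_terms & hyp_tokens) / len(ref_terms)


if __name__ == "__main__":
    ref = "start metformin for hypertension"
    hyp = "start met for men for hypertension"
    print(word_error_rate(ref, hyp), term_recall(ref, hyp, {"metformin", "hypertension"}))
```

Tracking term recall alongside WER matters because a transcript can score well overall while still dropping the one drug name that counts.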

Real-time streaming processing

You need streaming transcription that processes speech as it happens, not batch processing that waits until conversations end. Real-time feedback lets you see if the AI is capturing information correctly so you can repeat important details if needed.

Streaming latency should stay under about 300 milliseconds from speech to displayed text. Any longer and you'll notice the delay disrupting your workflow. The technical challenge involves processing audio chunks while remembering context from earlier in the conversation.

Batch processing won't work for medical workflows. You can't wait until after appointments to discover the AI missed critical information.
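A minimal sketch of the streaming side follows: cut raw audio into small fixed-duration chunks and time each round trip against the 300 millisecond budget. It assumes 16 kHz, 16-bit mono PCM audio and a hypothetical `send` callable that submits one chunk to your speech-to-text service and returns whatever text is available. Neither assumption comes from a specific API, and the timing covers only the `send` call, not microphone buffering or screen rendering.

```python
import time
from typing import Callable, Iterable, Iterator


def pcm_chunks(audio: bytes, sample_rate: int = 16000, chunk_ms: int = 50,
               bytes_per_sample: int = 2) -> Iterator[bytes]:
    """Yield fixed-duration slices of raw PCM audio (last slice may be shorter)."""
    size = sample_rate * chunk_ms // 1000 * bytes_per_sample
    for offset in range(0, len(audio), size):
        yield audio[offset:offset + size]


def stream_with_latency(chunks: Iterable[bytes], send: Callable[[bytes], str],
                        budget_ms: float = 300.0) -> list[tuple[str, float, bool]]:
    """Send each chunk and record (text, elapsed_ms, within_budget)."""
    results = []
    for chunk in chunks:
        started = time.perf_counter()
        text = send(chunk)
        elapsed_ms = (time.perf_counter() - started) * 1000
        results.append((text, elapsed_ms, elapsed_ms <= budget_ms))
    return results


if __name__ == "__main__":
    one_second_of_silence = bytes(32000)  # 16000 samples * 2 bytes each
    report = stream_with_latency(pcm_chunks(one_second_of_silence), lambda chunk: "")
    slow = [r for r in report if not r[2]]
    print(f"{len(report)} chunks sent, {len(slow)} over budget")
```

Logging per-chunk latency like this in a test harness makes it easier to spot whether delays come from the recognition service or from elsewhere in your pipeline.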