What is the best speech-to-text API for building AI medical ambient scribes?

Speech-to-text APIs for medical ambient scribes: compare real-time, HIPAA-compliant options by medical vocabulary, speaker diarization, and latency.

Medical ambient scribes represent one of healthcare's most promising AI applications—systems that automatically document doctor-patient conversations in real-time, creating clinical notes as physicians speak. Building these systems requires speech-to-text APIs that understand medical terminology, deliver instant results during patient visits, and meet strict healthcare security requirements. The API you choose determines whether your ambient scribe produces accurate documentation that saves physicians time or creates more problems with incorrect medical terms and missing context.

Most general-purpose speech-to-text APIs fall short in medical environments because they can't handle specialized vocabulary, lack real-time performance, or don't meet healthcare compliance requirements. This guide examines the capabilities medical ambient scribes need, compares leading APIs for healthcare applications, and provides practical implementation strategies for building clinical documentation systems that physicians trust.

What is a speech-to-text API?

A speech-to-text API is a cloud service that converts spoken words into written text using AI models. This means you send audio files or live speech to the API, and it returns typed transcripts without you needing to build speech recognition technology yourself.

Most APIs expose simple REST endpoints for recorded audio: you upload a file and receive text back, while live audio usually travels over a streaming connection. But not all speech-to-text APIs handle medical conversations well.
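A batch REST workflow usually means submitting a job and then polling until it finishes. The sketch below shows only the polling loop, with the HTTP call injected as a function so it can be tested offline. The status strings `"completed"` and `"error"` are assumptions modeled on common provider responses; check your provider's API reference for the exact values.

```python
import time
from typing import Any, Callable, Dict

# Assumed status values; providers document their own.
DONE_STATUSES = {"completed"}
FAILED_STATUSES = {"error"}


def wait_for_transcript(
    fetch_job: Callable[[], Dict[str, Any]],
    poll_interval_s: float = 3.0,
    timeout_s: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Poll a batch transcription job until it completes, fails, or times out.

    fetch_job performs one GET on the job resource and returns the decoded
    JSON body as a dict; injecting it keeps this loop provider-agnostic.
    """
    waited = 0.0
    while True:
        job = fetch_job()
        status = job.get("status")
        if status in DONE_STATUSES:
            return job
        if status in FAILED_STATUSES:
            raise RuntimeError(f"transcription failed: {job.get('error')}")
        if waited >= timeout_s:
            raise TimeoutError(f"job still {status!r} after {waited:.0f}s")
        sleep(poll_interval_s)
        waited += poll_interval_s
```

Passing `sleep` as a parameter lets tests run instantly; in production you would leave the default.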

Real-time streaming vs batch transcription

You have two options for processing audio: streaming or batch.

Streaming processes audio as someone speaks, returning text within a few hundred milliseconds. Your application sends small audio chunks continuously, and the API returns partial transcripts that build into complete sentences. Medical ambient scribes need streaming because physicians want to see their notes appear live during patient visits.
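The "small audio chunks" are typically fixed-duration slices of raw PCM. This sketch assumes 16-bit mono PCM at 16 kHz and a 50 ms chunk size; both are illustrative defaults, so use whatever your provider's streaming documentation specifies.

```python
from typing import Iterator


def pcm_chunks(
    audio: bytes,
    sample_rate: int = 16_000,
    chunk_ms: int = 50,
    bytes_per_sample: int = 2,  # 16-bit PCM
    channels: int = 1,          # mono
) -> Iterator[bytes]:
    """Yield fixed-duration chunks of raw PCM audio for a streaming connection.

    The final chunk may be shorter if the audio doesn't divide evenly.
    """
    frame_bytes = bytes_per_sample * channels
    chunk_bytes = sample_rate * chunk_ms // 1000 * frame_bytes
    for start in range(0, len(audio), chunk_bytes):
        yield audio[start:start + chunk_bytes]
```

At these settings each chunk is 1,600 bytes (800 samples of 2 bytes). In a live client you would send each chunk over the open connection as soon as the microphone produces it rather than slicing a finished buffer.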

Batch transcription waits until the recording finishes, then processes the entire file. Batch often achieves slightly better accuracy because the model sees the full context, but the delay makes it unsuitable for live documentation (it remains useful for post-visit processing). The difference between 200-millisecond streaming and waiting 30 seconds after each conversation determines whether physicians trust your ambient scribe.

What makes a speech-to-text API suitable for medical ambient scribes?

Medical conversations aren't like regular phone calls or meetings. You need specific capabilities that most general APIs can't handle:

- Medical vocabulary recognition: Correctly transcribing drug names, diagnoses, and other specialized terms, many of which sound alike but mean different things.

- Real-time performance: Sub-second response times that don't disrupt patient care.

- Speaker separation: Knowing who said what in doctor-patient conversations.

- Healthcare compliance: Legal requirements for handling patient information.

Medical terminology and clinical jargon recognition

General speech-to-text APIs turn medical terms into nonsense. "Metoprolol" becomes "metal patrol." "Dyspnea" transforms into "this near." These aren't occasional errors—they happen constantly because standard APIs train on everyday speech, not clinical conversations.

Medical AI models train specifically on healthcare datasets containing pharmaceutical names, anatomical terms, procedure codes, and disease classifications. They understand that "CHF" means congestive heart failure, not random letters. When a physician says "start Lisinopril 10mg daily," these models recognize each component: the drug name, dosage, and frequency.
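Once a model transcribes an order like "start Lisinopril 10mg daily" correctly, downstream code can check that the drug, dose, and frequency survived intact. The regular expression below is a hypothetical, deliberately narrow post-processing sketch, not how medical speech models work internally; real clinical text needs a much richer grammar or an NLP model.

```python
import re
from typing import Dict, Optional

# Hypothetical pattern for simple orders such as "start Lisinopril 10mg daily".
MED_ORDER = re.compile(
    r"(?P<drug>[A-Za-z][A-Za-z-]+)\s+"
    r"(?P<dose>\d+(?:\.\d+)?)\s*(?P<unit>mg|mcg|g|ml|units?)\b\s*"
    r"(?P<frequency>once daily|twice daily|daily|bid|tid|qid|at bedtime)?",
    re.IGNORECASE,
)


def parse_med_order(text: str) -> Optional[Dict[str, Optional[str]]]:
    """Extract drug name, dose, unit, and frequency from one transcript sentence."""
    m = MED_ORDER.search(text)
    if not m:
        return None
    fields = m.groupdict()
    # Normalize case for unit and frequency; keep the drug name as transcribed.
    for key in ("unit", "frequency"):
        if fields[key]:
            fields[key] = fields[key].lower()
    return fields
```

A transcript that yields `None` for a sentence the physician clearly dictated as an order is a good candidate for human review.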

The difference impacts every medical specialty:

- Cardiology: Drug names like "atenolol" that general models confuse with everyday words.

- Surgery: Procedure terminology that sounds like common phrases.

- Pediatrics: Childhood conditions with complex names.

- Psychiatry: Medication names that general models consistently miss.

Real-time streaming and latency requirements

Physicians need text to appear within 500 milliseconds of speaking; longer delays break their concentration and disrupt patient interaction. Transcription speed is only part of the picture, since several components contribute to total delay.

Your audio travels to the API server, gets processed through AI models, receives formatting, then returns as text. Each step adds milliseconds. APIs optimized for medical use minimize every component through edge servers, optimized model architectures, and efficient response formatting.
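Because every stage adds delay, it helps to track an explicit end-to-end budget against the 500 ms target. The stage names and millisecond values below are hypothetical placeholders; replace them with measurements from your own pipeline.

```python
from typing import Dict, Tuple

TARGET_MS = 500.0  # display target from the text above


def check_latency_budget(
    stages_ms: Dict[str, float], target_ms: float = TARGET_MS
) -> Tuple[float, bool, str]:
    """Sum per-stage latencies; return the total, whether it fits, and the slowest stage."""
    total = sum(stages_ms.values())
    slowest = max(stages_ms, key=stages_ms.get)
    return total, total <= target_ms, slowest


# Hypothetical measurements for one round trip.
example = {
    "network_uplink": 40,
    "model_inference": 250,
    "formatting": 30,
    "network_downlink": 40,
    "ui_render": 20,
}
total, within_budget, slowest = check_latency_budget(example)
```

Reporting the slowest stage points optimization effort where it matters most, which is usually inference or network distance to the API region.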

If your physician pauses to check the screen and doesn't see their recent words, they'll lose confidence in the system.

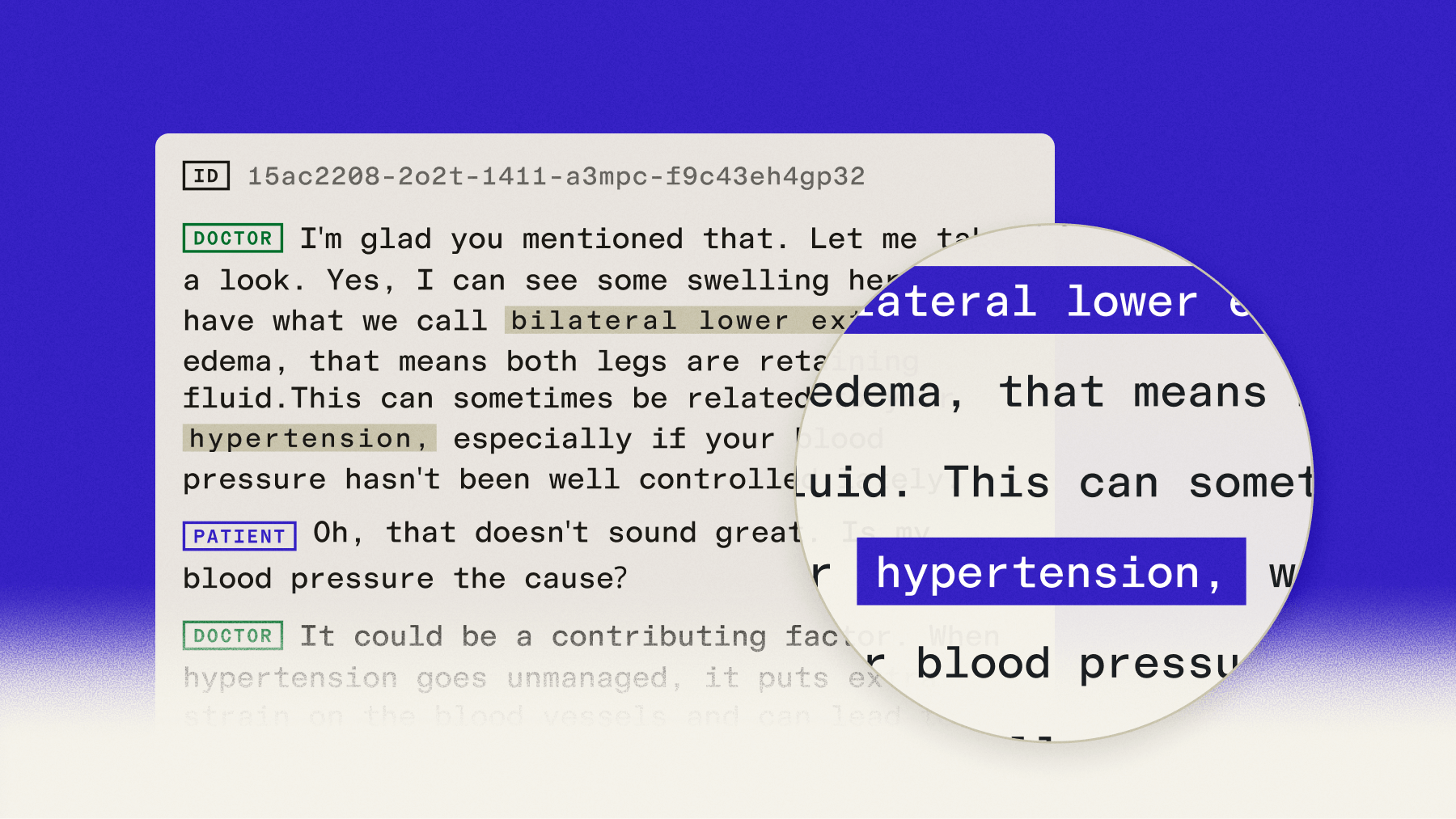

Speaker diarization for doctor-patient conversations

Medical documentation requires separating physician observations from patient statements. Speaker diarization labels each part of the transcript with the speaker who said it; most APIs return generic labels (such as "Speaker A" and "Speaker B") that your application maps to "Doctor" or "Patient", so clinical notes can distinguish subjective complaints from objective assessments.
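Diarization output typically uses generic speaker labels, so something has to decide which speaker is the clinician. The sketch below uses a simple, hypothetical heuristic for a two-person visit: the speaker whose words match the most clinician cue words is labeled "Doctor". The cue list and the utterance shape (`{"speaker": ..., "text": ...}`) are assumptions; production systems often rely on stronger signals such as separate microphone channels.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical cue words; tune or replace with a stronger signal in practice.
CLINICIAN_CUES = {"prescribe", "exam", "blood", "pressure", "dose", "mg", "follow-up", "diagnosis"}


def assign_roles(utterances: List[Dict[str, str]]) -> Dict[str, str]:
    """Map generic diarization labels to "Doctor"/"Patient" for a two-speaker visit.

    The speaker with the most clinician cue words becomes "Doctor";
    on a tie, the first speaker to appear wins.
    """
    scores: Counter = Counter()
    for u in utterances:
        words = u["text"].lower().split()
        scores[u["speaker"]] += sum(w.strip(".,?!") in CLINICIAN_CUES for w in words)
    speakers = list(dict.fromkeys(u["speaker"] for u in utterances))
    if not speakers:
        return {}
    doctor = max(speakers, key=lambda s: scores[s])
    return {s: ("Doctor" if s == doctor else "Patient") for s in speakers}
```

A heuristic like this should only propose a mapping; surfacing it for the physician to confirm avoids silently misattributing statements.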

Quality diarization handles tricky situations:

- Overlapping speech: When doctors and patients talk simultaneously.

- Similar voices: Maintaining accuracy when speakers sound alike.

- Brief interjections: Correctly attributing "yes," "mm-hmm," or short questions.

Without accurate speaker separation, your ambient scribe creates confusing notes that mix physician assessments with patient responses.

HIPAA compliance and data security

Healthcare organizations can't legally send protected health information (PHI) to an API provider that hasn't signed a Business Associate Agreement (BAA). The BAA obligates the provider to safeguard PHI according to HIPAA requirements.

Beyond paperwork, medical APIs implement specific security measures:

- Encryption in transit and at rest: Audio and text stay encrypted during transmission and storage.

- Access controls: Only authorized users can access transcription data.

- Audit logging: Complete records of data access for compliance reporting.

- Data residency: Options to keep patient information within specific regions.
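Audit logging is the item on this list most likely to land in your own code rather than the provider's. A minimal sketch, assuming one JSON line per access event with hypothetical field names, might look like this:

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(user_id: str, action: str, resource_id: str,
                 now: Optional[datetime] = None) -> str:
    """Return one JSON line recording who accessed which transcript, and when.

    This sketch logs identifiers only, never transcript text, so the
    audit trail itself doesn't become another copy of PHI.
    """
    ts = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps(
        {"ts": ts, "user": user_id, "action": action, "resource": resource_id},
        sort_keys=True,
    )
```

Appending these lines to write-once storage keeps the trail tamper-evident for compliance reporting.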

Top speech-to-text APIs for medical ambient scribes

Several providers offer speech-to-text, but only some provide the medical-specific features you need.

AssemblyAI

AssemblyAI's Medical Mode is a $0.15/hr add-on enabled via domain="medical-v1" that enhances accuracy on clinical terminology through specialized training on medical conversations. For ambient scribes, the Universal-3 Pro Streaming model delivers sub-300ms latency while maintaining high accuracy on drug names and medical procedures. Medical Mode is also available on all AssemblyAI pre-recorded models for post-visit batch processing workflows. Speaker diarization is included, accurately separating doctor and patient speech without additional cost.
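For a post-visit batch job, enabling Medical Mode and diarization comes down to a few request fields. The sketch below assumes the `domain="medical-v1"` value from the text is sent as a top-level JSON field next to `audio_url` and `speaker_labels`; confirm the exact field names and any model-selection parameter in AssemblyAI's current API reference before relying on it.

```python
import json
import urllib.request

TRANSCRIPT_ENDPOINT = "https://api.assemblyai.com/v2/transcript"


def build_medical_request(audio_url: str) -> dict:
    """JSON body for a pre-recorded transcription with Medical Mode and diarization."""
    return {
        "audio_url": audio_url,
        "speaker_labels": True,   # speaker diarization
        "domain": "medical-v1",   # Medical Mode (assumed top-level field)
    }


def submit(audio_url: str, api_key: str) -> dict:
    """POST the job and return the provider's JSON response (includes the job id)."""
    req = urllib.request.Request(
        TRANSCRIPT_ENDPOINT,
        data=json.dumps(build_medical_request(audio_url)).encode("utf-8"),
        headers={"authorization": api_key, "content-type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```

Separating the payload builder from the network call makes the request shape easy to unit-test without sending any audio.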

Internal benchmarks across multiple clinical datasets show Universal-3 Pro with Medical Mode achieving a 4.97% Missed Entity Rate on medical terminology — meaningfully lower than alternatives on specialized clinical vocabulary.

For healthcare compliance, AssemblyAI allows HIPAA covered entities and their business associates to process protected health information (PHI) with its services. As a business associate under HIPAA, AssemblyAI offers the Business Associate Addendum (BAA) that HIPAA requires to ensure PHI is appropriately safeguarded.

The platform's forward-deployed engineers work directly with healthcare teams to optimize performance for specific clinical environments and specialties.

OpenAI Whisper

Whisper provides solid general transcription but lacks medical-specific training. The open-source model can be self-hosted for data control, but it doesn't include native real-time streaming, so you need workarounds that add complexity and latency. Medical terminology accuracy falls behind specialized alternatives, especially for pharmaceutical names. Organizations that self-host Whisper handle HIPAA compliance, scaling, and performance optimization themselves.

Google Cloud Speech-to-Text (Medical Models)

Google Cloud Speech-to-Text offers two dedicated medical models: medical_conversation for multi-speaker clinical consultations and medical_dictation for single-physician dictation. Both provide real-time streaming and automatic punctuation. Google Cloud's compliance certifications make it viable for medical applications, though accuracy on specialized pharmaceutical terminology varies by specialty, and you'll typically need more post-processing to handle edge cases. The medical models are priced at $0.0474 per minute.

Amazon Transcribe Medical and AWS HealthScribe

Amazon offers two relevant products for ambient scribes. Amazon Transcribe Medical is the API-level service that recognizes clinical terms across specialties including cardiology, neurology, and radiology — good for developers who want to build custom pipelines on AWS infrastructure. AWS HealthScribe is Amazon's higher-level ambient scribe service, which combines medical transcription with structured note generation and is worth evaluating if your organization is already deeply on AWS. Both are HIPAA-eligible with BAA coverage. Pricing includes per-minute transcription plus AWS infrastructure charges, which can complicate total cost calculations.

How to evaluate speech-to-text APIs for medical ambient scribes

Testing beats marketing claims every time. Here's how to evaluate APIs systematically.

Accuracy testing with medical audio samples

Word Error Rate (WER) measures overall transcription accuracy. For medical ambient scribes, however, Missed Entity Rate (MER) on clinical terminology matters more: it measures how often drug names, diagnoses, procedures, and dosages are missed or transcribed incorrectly. General APIs that reach 95% accuracy (roughly 5% WER) on clear audio often perform significantly worse on medical entities.
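Both metrics are straightforward to compute on your own test set. The sketch below implements standard word-level WER via edit distance, plus a simplified MER that counts a reference entity as missed when it doesn't appear verbatim in the hypothesis. That MER definition is an assumption for illustration; vendors may score entities differently, and real evaluations normalize punctuation and number formats first.

```python
from typing import Iterable, List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    # prev[j]: edit distance between the ref words processed so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion
                curr[j - 1] + 1,         # insertion
                prev[j - 1] + (r != h),  # substitution or match
            )
        prev = curr
    return prev[-1] / max(len(ref), 1)


def missed_entity_rate(entities: Iterable[str], hypothesis: str) -> float:
    """Share of reference clinical entities not found verbatim in the hypothesis."""
    ents: List[str] = [e.lower() for e in entities]
    hyp = f" {' '.join(hypothesis.lower().split())} "
    missed = sum(f" {e} " not in hyp for e in ents)
    return missed / max(len(ents), 1)


ref = "start metoprolol 25 mg daily"
hyp = "start metal patrol 25 mg daily"
```

For this pair, WER is 0.4 (one substitution and one insertion over five reference words), while MER on the entities "metoprolol" and "25 mg" is 0.5. The single clinically dangerous error shows up far more clearly in the entity metric.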

Test with real clinical recordings including:

- Medication discussions: Drug names, dosages, administration instructions.

- Diagnostic conversations: Disease names, symptoms, test results.

- Procedure descriptions: Surgical procedures, treatment protocols, equipment names.

Record samples from several medical specialties, since cardiology terminology differs significantly from that of psychiatry or pediatrics.

Essential features for medical documentation

Beyond basic transcription, check for these capabilities:

- Automatic punctuation: Proper sentence structure without manual editing.

- Number formatting: Medication dosages and vital signs formatted correctly.

- Timestamp precision: Exact timing for medical-legal requirements.

- Confidence scores: Indicators when the API is uncertain about accuracy.

- Custom vocabulary: Ability to add institution-specific terms.
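Timestamps and confidence scores combine naturally into a review queue that points physicians at exactly the words worth double-checking. This sketch assumes word objects shaped like `{"text", "confidence", "start", "end"}` with times in milliseconds, which is a common but provider-specific format, and uses an arbitrary 0.6 threshold.

```python
from typing import Dict, List


def fmt_ms(ms: int) -> str:
    """Format a millisecond offset as mm:ss.mmm."""
    minutes, rem = divmod(ms, 60_000)
    seconds, millis = divmod(rem, 1000)
    return f"{minutes:02d}:{seconds:02d}.{millis:03d}"


def flag_low_confidence(words: List[Dict], threshold: float = 0.6) -> List[str]:
    """List words below the confidence threshold with their start times for review."""
    return [
        f"{fmt_ms(w['start'])} {w['text']!r} ({w['confidence']:.2f})"
        for w in words
        if w["confidence"] < threshold
    ]


words = [
    {"text": "start", "confidence": 0.98, "start": 1200, "end": 1500},
    {"text": "metoprolol", "confidence": 0.41, "start": 61500, "end": 62300},
]
```

Tune the threshold on your own recordings: too low and real errors slip through, too high and reviewers learn to ignore the flags.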

Pricing models and total cost of ownership

APIs typically charge per minute, but structures vary:

- Streaming surcharges: Real-time typically costs more than batch transcription.

- Medical model premiums: Specialized models carry additional fees — AssemblyAI's Medical Mode is $0.15/hr on top of base pricing.

- Volume discounts: Significant breaks at higher usage tiers.

- Feature add-ons: Enhanced security features may cost extra.

Calculate total costs including API charges, infrastructure, integration development, and maintenance.
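A simple model helps compare providers on the same footing. In this sketch only the $0.15/hr Medical Mode add-on comes from the text; the base hourly rate and fixed monthly costs are hypothetical inputs, not quoted prices.

```python
def monthly_cost(
    minutes_per_month: float,
    base_rate_per_hour: float,             # hypothetical input, not a quoted price
    medical_addon_per_hour: float = 0.15,  # Medical Mode add-on from the text
    fixed_monthly: float = 0.0,            # infrastructure, maintenance, etc.
) -> float:
    """Estimate monthly spend: usage charges plus fixed operating costs."""
    hours = minutes_per_month / 60
    return hours * (base_rate_per_hour + medical_addon_per_hour) + fixed_monthly


# Hypothetical: 60,000 visit minutes, a $0.45/hr base rate, $2,000/month fixed costs.
estimate = monthly_cost(60_000, 0.45, fixed_monthly=2_000)
```

With these made-up inputs the estimate comes to about $2,600 per month, and the fixed costs dominate, which is typical until volume grows.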

How to implement speech-to-text for medical ambient scribes

Technical implementation affects whether your ambient scribe works reliably in clinical environments.

Technical integration requirements

Start with secure API authentication: keep API keys out of your source code, for example by loading them from environment variables or a secrets manager. Most providers offer SDKs for popular programming languages, which simplify integration.
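A minimal pattern for keeping credentials out of code is to read them from the environment at startup and fail fast if they're missing. The variable name `STT_API_KEY` is a hypothetical placeholder.

```python
import os


def load_api_key(var: str = "STT_API_KEY") -> str:
    """Read the provider key from the environment instead of hard-coding it."""
    key = os.environ.get(var, "").strip()
    if not key:
        raise RuntimeError(f"set {var} (e.g. from your secrets manager) before starting")
    return key
```

Failing at startup is preferable to discovering a missing key mid-visit.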

Your audio must meet a few requirements:

- Format: WAV, MP3, or FLAC work with most APIs for recorded audio; streaming endpoints typically expect raw PCM.

- Sample rate: 16kHz is the recommended sample rate for voice agent and medical scribe use cases.

- Channels: Mono for a single microphone, stereo for separate doctor and patient mics.
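Checking files against these requirements before upload catches configuration mistakes early. This sketch uses Python's standard `wave` module and assumes 16-bit samples are what your provider expects; adjust the checks to your provider's documented formats.

```python
import io
import wave
from typing import List


def check_wav(data: bytes, expected_rate: int = 16_000) -> List[str]:
    """Return a list of format problems in a WAV file (an empty list means OK)."""
    problems = []
    with wave.open(io.BytesIO(data), "rb") as wav:
        if wav.getframerate() != expected_rate:
            problems.append(f"sample rate {wav.getframerate()} Hz, expected {expected_rate}")
        if wav.getsampwidth() != 2:
            problems.append(f"sample width {wav.getsampwidth() * 8}-bit, expected 16-bit")
        if wav.getnchannels() not in (1, 2):
            problems.append(f"{wav.getnchannels()} channels, expected mono or stereo")
    return problems
```

Stereo passes the check because a two-microphone setup (one channel per speaker) is a valid configuration.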

Real-time streaming requires persistent connections using WebSockets. Your application sends audio chunks continuously while receiving partial transcripts that update as context becomes available.
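On the receiving side, the key detail is that each partial transcript replaces the previous partial rather than appending to it, and only finalized segments are committed. This sketch assumes messages shaped like `{"text": ..., "is_final": ...}`; those field names are hypothetical, since providers use different message formats.

```python
from typing import Dict, List


class TranscriptAssembler:
    """Keep finalized segments plus the current partial for live display.

    Assumes each message carries the full text of the current segment and an
    is_final flag (hypothetical field names; providers differ).
    """

    def __init__(self) -> None:
        self.final_segments: List[str] = []
        self.partial = ""

    def handle(self, message: Dict) -> str:
        if message["is_final"]:
            self.final_segments.append(message["text"])
            self.partial = ""
        else:
            self.partial = message["text"]  # replace the old partial, don't append
        return self.display()

    def display(self) -> str:
        parts = self.final_segments + ([self.partial] if self.partial else [])
        return " ".join(parts)
```

Only the finalized segments should feed note generation; partials are for the live display.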

Testing and optimizing for clinical environments

Exam rooms create acoustic challenges that hurt transcription accuracy: medical equipment, HVAC systems, and hallway noise all interfere with speech capture. For a better signal-to-noise ratio, place microphones close to the physician rather than relying on wall-mounted units.
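To compare microphone placements objectively, you can estimate signal-to-noise ratio from a short noise-only recording (for example, the room before the visit starts) and a speech segment. This is a rough sketch: the speech segment also contains noise, so the result is approximate, and both segments must be non-empty with samples as floats.

```python
import math
from typing import Sequence


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square amplitude of a non-empty sample sequence."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def snr_db(speech: Sequence[float], noise: Sequence[float]) -> float:
    """Estimate SNR in decibels as 20 * log10(rms(speech) / rms(noise))."""
    return 20 * math.log10(rms(speech) / rms(noise))
```

A speech RMS ten times the noise RMS corresponds to 20 dB; comparing this number across placements shows whether moving the microphone closer to the physician actually helped.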

Test across different clinical scenarios:

- Routine consultations: Clear speech with standard medical terminology.

- Pediatric visits: Children's voices and background noise.

- Emergency situations: Rapid speech and multiple speakers talking over each other.

- Telehealth sessions: Compressed audio and varying connection quality.

Monitor accuracy continuously and adjust microphone placement, audio settings, or API parameters based on real-world performance.

Final words

Building reliable medical ambient scribes requires speech-to-text APIs designed specifically for healthcare's unique challenges — medical terminology recognition, real-time performance, speaker separation, and healthcare compliance aren't optional features. The gap between general transcription and medical-grade speech-to-text becomes obvious when "prescribe metformin twice daily" becomes "describe metal forming twice daily" in patient records.

AssemblyAI's Medical Mode addresses these challenges through Voice AI models trained specifically on clinical conversations, delivering the accuracy healthcare providers need while maintaining sub-second latency for natural documentation flow during patient visits. Success depends on choosing APIs built for medical applications rather than adapting general-purpose solutions.

Frequently asked questions

How accurate should speech-to-text APIs be for medical ambient scribes?

Medical documentation typically requires 95% accuracy or higher for clinical usability. For medical terminology specifically, Missed Entity Rate (MER) is the more meaningful benchmark — AssemblyAI's Universal-3 Pro with Medical Mode achieves 4.97% MER on clinical terms, compared to higher rates for general-purpose APIs.

Can speech-to-text APIs understand pharmaceutical names and medical procedures?

Specialized medical AI models recognize drug names, anatomical terms, and procedures that general APIs consistently misinterpret. These models train on clinical datasets containing vocabulary physicians use daily, though accuracy varies significantly between providers.

What latency do medical ambient scribes need for real-time documentation?

Physicians need transcription appearing within 500 milliseconds of speaking to maintain natural workflow. Higher latency disrupts concentration and patient interaction, making doctors lose confidence in the ambient scribe system.

Which speech-to-text APIs offer Business Associate Agreements for HIPAA compliance?

Providers including AssemblyAI, Google Cloud, and Amazon offer BAAs and security features that enable HIPAA-compliant implementations. Organizations must ensure their chosen provider signs a BAA and implements the required safeguards for patient information.

How does speaker diarization work in doctor-patient conversations?

Speaker diarization analyzes voice characteristics to distinguish speakers and labels each transcript segment by speaker; your application then maps those labels to "Doctor" or "Patient." Quality varies between providers, with medical-specialized APIs typically offering better separation accuracy for clinical conversations where attribution affects documentation integrity.
