Best scalable voice AI solutions for customer service

Voice AI for customer service: Best use cases, architecture, and accuracy benchmarks to automate calls 24/7, reduce hold time, and escalate to humans.

Voice AI transforms customer service by enabling natural conversations between customers and automated systems. Instead of navigating rigid phone menus, customers speak naturally while the system understands their intent, accesses account information, and provides immediate assistance. This technology combines speech recognition, natural language understanding, and response generation to handle everything from account inquiries to appointment scheduling.

The challenge lies in implementation. Voice AI only delivers value when speech recognition accurately captures customer details like account numbers and order IDs. Poor transcription creates cascading failures that frustrate customers and eliminate cost savings. This guide covers the core technologies, proven use cases, and implementation requirements that determine whether Voice AI succeeds or fails in production customer service environments.

What is Voice AI for customer service?

Voice AI for customer service refers to automated systems that handle customer calls through natural conversation. Customers can speak normally, use different phrases for the same request, and provide context mid-sentence without breaking the system.

Unlike traditional phone menus, Voice AI adapts to customer speech patterns. You don't need to memorize specific commands or navigate through numbered options. The system understands intent without requiring scripted responses.

Four core technologies work together to make this possible:

- Speech recognition: Converts your spoken words into text

- Natural language understanding: Figures out what you actually want

- Response generation: Creates appropriate answers to your questions

- Text-to-speech synthesis: Converts text responses back into spoken words

Orchestration platforms like LiveKit, Pipecat, and Vapi coordinate these components. They manage the entire conversation flow from hearing your question to speaking the response.

How Voice AI agents work

Voice AI processes your conversation in three stages that together must complete in well under a second to feel natural.

Stage 1 converts your speech to text through speech recognition. This foundation stage is critical—when it fails, everything else breaks down. In real customer service calls, the system deals with phone line compression, background noise, and short sentences, which is much harder than processing clean audio recordings.

Stage 2 interprets what you mean using natural language understanding. The system reasons through conversation history to determine your intent and decide what action to take. If the speech recognition heard "cancel my order" instead of "cancel my water delivery," the entire interaction goes wrong.

Stage 3 generates and speaks a response. The system selects the right response strategy, retrieves necessary information from your account, and converts the text answer into natural-sounding speech.

The entire process needs to complete in under 800 milliseconds for conversations to feel natural. Speech recognition typically takes around 300 milliseconds, followed by language processing and response generation.
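The three stages can be sketched as a single turn handler that also records how long each stage took. This is a minimal illustration, not any platform's actual API: `handle_turn` and the three `fake_*` stand-in stages are hypothetical names, and a real deployment would call a speech recognition service, a language model, and a synthesis service in their place.

```python
import time
from typing import Callable, Dict, Tuple


def handle_turn(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    decide: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> Tuple[bytes, Dict[str, float]]:
    """Run one conversational turn and record per-stage latency in milliseconds."""
    timings: Dict[str, float] = {}

    def timed(name, fn, arg):
        start = time.perf_counter()
        result = fn(arg)
        timings[name] = (time.perf_counter() - start) * 1000.0
        return result

    text = timed("stt_ms", transcribe, audio)              # Stage 1: speech to text
    reply_text = timed("nlu_ms", decide, text)             # Stage 2: intent and response text
    reply_audio = timed("tts_ms", synthesize, reply_text)  # Stage 3: text to speech
    timings["total_ms"] = sum(timings.values())
    return reply_audio, timings


# Stand-in stages (hypothetical) so the sketch runs without external services.
def fake_transcribe(audio: bytes) -> str:
    return "check my balance"


def fake_decide(text: str) -> str:
    return "Let me look up your balance." if "balance" in text else "Could you repeat that?"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


reply, timings = handle_turn(b"\x00\x01", fake_transcribe, fake_decide, fake_synthesize)
print(reply.decode("utf-8"), timings)
```

Logging the per-stage timings on every turn makes it easier to see which stage is consuming the 800 millisecond budget when conversations start to feel slow.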

Speech-to-text: The foundation of Voice AI

Speech-to-text makes or breaks your Voice AI system. When this layer fails, customers have to repeat themselves, calls escalate unnecessarily, and the automation provides no value.

Poor transcription creates cascading problems. When "policy number B4K7X9" becomes "policy number before K7X9," the system can't find your account. You end up repeating information you already provided clearly.

Real customer service calls present specific challenges that clean audio benchmarks don't capture:

- Names and account details: Email addresses, policy numbers, and order IDs get mangled without context

- Phone audio quality: Compressed phone lines and headset noise reduce accuracy significantly

- Short responses: Brief answers like "yes" or account numbers often get transcribed incorrectly

- Turn-taking: Detecting when you're done speaking versus just pausing mid-thought

Production Voice AI systems operate over actual phone connections with background noise and short bursts of speech. The real test isn't a clean studio recording—it's your headset during a busy day with office noise in the background.

Natural language understanding and intent detection

Natural language understanding operates on two levels: explicit requests and contextual interpretation. When you say "check my balance," that's explicit. When you follow up with "what about my savings account?" the system needs to understand you're still talking about account balances.

The system maintains conversation history and tracks what was discussed earlier. This context allows it to interpret follow-up questions correctly without making you repeat background information.

Intent classification includes confidence scoring. When the system isn't sure what you want, it should ask for clarification rather than guessing. A customer saying "I want to cancel" could mean an order, subscription, or appointment—the system needs to clarify before taking action.
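The clarification behavior described above can be sketched as a small routing function. The threshold values, the `IntentResult` type, and the action names are assumptions for illustration; suitable thresholds depend on your classifier and should be tuned against real calls.

```python
from dataclasses import dataclass


@dataclass
class IntentResult:
    intent: str        # e.g. "cancel_order"
    confidence: float  # classifier score between 0.0 and 1.0


# Assumed thresholds, not recommended values.
CLARIFY_BELOW = 0.75
ESCALATE_BELOW = 0.40


def next_action(result: IntentResult) -> str:
    """Decide whether to act, ask the caller to clarify, or hand off to a human."""
    if result.confidence < ESCALATE_BELOW:
        return "escalate_to_human"
    if result.confidence < CLARIFY_BELOW:
        return "ask_clarification"
    return f"execute:{result.intent}"


print(next_action(IntentResult("cancel_order", 0.92)))
print(next_action(IntentResult("cancel_order", 0.60)))
```

An ambiguous "I want to cancel" would typically score in the middle band, so the system asks which order, subscription, or appointment the caller means before it takes any action.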

Context injection through prompting helps here. Some modern speech recognition models accept up to 1,500 words of context through prompt parameters, allowing you to provide product names, policy language, common customer intents, and relevant vocabulary. This context can substantially improve the system's understanding of domain-specific terms and customer requests.
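One way to prepare that context is to assemble a prompt string from your domain vocabulary while staying within the word limit. The helper below is a hypothetical sketch: `build_context_prompt` is not part of any vendor SDK, the example terms are invented, and how the resulting string is passed to a model depends on the provider's own parameters.

```python
from typing import List


def build_context_prompt(terms: List[str], max_words: int = 1500) -> str:
    """Join vocabulary terms into one prompt string, stopping before the word limit."""
    kept: List[str] = []
    used = 0
    for term in terms:
        words = len(term.split())
        if used + words > max_words:
            break  # terms are assumed to be ordered by priority
        kept.append(term)
        used += words
    return ", ".join(kept)


# Invented example vocabulary, most important terms first.
vocabulary = ["AquaPure 5-gallon", "policy rider", "autopay"]
print(build_context_prompt(vocabulary, max_words=4))
```

Ordering the terms by importance means that when the limit is reached, the least critical vocabulary is what gets dropped.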

Response generation and text-to-speech synthesis

Response generation chooses between simple lookups and complex processes. Checking your account balance requires one database query. Rescheduling an appointment with specific time constraints involves multiple API calls and decision points.

The system needs integration with your company's backend systems:

- Customer databases: For account information and history

- Scheduling platforms: For appointment management

- Order systems: For transaction processing

- Knowledge bases: For answering common questions

Natural-sounding speech synthesis improves user acceptance, but accuracy matters more than voice quality. A slightly robotic voice that gets the customer's information right serves them better than a beautiful voice that makes mistakes.

Higher-quality speech synthesis adds response delay. You might choose faster, less natural synthesis to keep conversations flowing smoothly rather than waiting for perfect pronunciation.

Key benefits of Voice AI for customer service

Voice AI delivers measurable improvements when implemented correctly. The key is treating accuracy and response time as requirements, not nice-to-have features.

Resolution rate has become the primary success metric. Instead of measuring whether customers felt satisfied with the interaction, companies now track whether the problem got solved. This shift reflects a more mature approach to evaluating Voice AI effectiveness.

24/7 availability and operational scalability

Voice AI provides continuous service without staffing constraints. Your customers get help at 2am just as effectively as at 2pm. The system doesn't take breaks, get tired, or vary in knowledge based on which shift is working.

Traditional contact centers require proportional staffing for volume spikes. Double the calls means hiring double the agents. Voice AI handles demand fluctuations with incremental computing costs rather than expensive human resources.

Global coverage happens automatically. Time zone differences and seasonal demand peaks don't require months of recruitment and training. The same system serves customers in New York and Tokyo with identical capability.

Most successful deployments use a hybrid model. Voice AI handles routine, high-volume interactions while complex or sensitive issues route to human agents. This division of labor maximizes both efficiency and customer satisfaction.

Faster resolution and improved customer satisfaction

Eliminating hold times only improves satisfaction when the Voice AI accurately understands what customers need. Speed without accuracy just creates frustrated customers faster.

Voice AI delivers consistent service quality regardless of timing. Unlike human agents who might have knowledge gaps or fatigue-related errors, the system provides the same level of service for every interaction.

First-call resolution improves dramatically for routine inquiries. When customers get immediate answers about account balances, order status, or appointment scheduling, both satisfaction and operational efficiency increase.

The consistency advantage extends beyond individual calls. Every customer receives identical treatment based on the same knowledge base and decision logic, eliminating variability between different agents.

Cost reduction and ROI impact

Voice AI enables cost redistribution rather than simple reduction. Automated systems handle repetitive tasks while human agents focus on complex problems requiring judgment and empathy.

Direct savings come from comparing automation costs against fully loaded agent costs. However, poor accuracy can eliminate these savings completely. Failed automation that forces customers to wait for human agents anyway can cost more than no automation at all.

Indirect benefits include better resource allocation and improved job satisfaction. When agents spend time on engaging, complex work instead of repetitive information gathering, both performance and retention improve.

Scalability economics favor Voice AI for growing businesses. Expanding service capacity requires additional computing power rather than hiring and training new employees.

Common use cases for Voice AI in customer service

Start with use cases that have predictable patterns and clear success criteria. The most reliable implementations handle interactions where you can easily measure whether the customer got what they needed.

Automated inbound support and query resolution

FAQ handling works well because questions follow predictable patterns with consistent answers. The system draws responses from your knowledge base without needing perfect recognition of specific customer details.

Account information retrieval requires higher accuracy because customer identifiers must be transcribed correctly. Account numbers, order IDs, and reference codes need precise recognition for database lookups to work.

Basic troubleshooting follows guided flows with clear escalation points. The system walks customers through standard resolution steps and identifies when problems exceed its capability.

Key challenge: Alphanumeric codes like order numbers frequently get transcribed incorrectly. Test specifically with your actual account numbers and confirmation codes rather than relying on general accuracy metrics.

Appointment scheduling and booking management

New appointment booking involves checking availability, handling scheduling constraints, and confirming details with calendar integration. The predictable structure makes this ideal for Voice AI implementation.

Appointment changes and cancellations follow similar patterns. Customers can modify existing bookings without agent assistance, and the system updates all relevant systems automatically.

Technical considerations include:

- Natural date recognition: Understanding "next Thursday afternoon" requires language processing beyond basic transcription

- Identity verification: Confirming customer identity before account access

- Constraint handling: Managing preferences for specific times, providers, or services
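To illustrate why natural date recognition goes beyond transcription, here is a minimal sketch that turns a phrase like "next Thursday afternoon" into a concrete time window. It makes several assumptions: "next Thursday" is read as the first Thursday strictly after today, the day-part hours are arbitrary, and `resolve_window` is a hypothetical helper. Production systems usually rely on a language model or a dedicated date parser instead.

```python
from datetime import date, datetime, time, timedelta
from typing import Optional, Tuple

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]

# Assumed business definitions of each part of the day.
DAY_PARTS = {
    "morning": (time(8, 0), time(12, 0)),
    "afternoon": (time(12, 0), time(17, 0)),
    "evening": (time(17, 0), time(20, 0)),
}
ALL_DAY = (time(8, 0), time(20, 0))


def resolve_window(phrase: str, today: date) -> Optional[Tuple[datetime, datetime]]:
    """Map a phrase containing a weekday (and optional day part) to a start/end window."""
    words = phrase.lower().split()
    weekday = next((w for w in words if w in WEEKDAYS), None)
    if weekday is None:
        return None  # nothing recognizable: the agent should ask a follow-up question
    part = next((w for w in words if w in DAY_PARTS), None)

    # Days until the first matching weekday strictly after today (1 to 7).
    days_ahead = (WEEKDAYS.index(weekday) - today.weekday() - 1) % 7 + 1
    target = today + timedelta(days=days_ahead)
    start, end = DAY_PARTS[part] if part else ALL_DAY
    return datetime.combine(target, start), datetime.combine(target, end)


# 1 January 2024 was a Monday, so this resolves to Thursday 4 January, 12:00 to 17:00.
print(resolve_window("next Thursday afternoon", date(2024, 1, 1)))
```

Because phrases like "next Thursday" are read differently by different people, the agent should still read the resolved date back to the caller for confirmation.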

Scheduling conversations have natural confirmation steps that catch misunderstandings before they become problems. This built-in error recovery makes appointment management one of the most reliable Voice AI applications.

Order tracking and transaction processing

Order status inquiries provide high value with low complexity. Customers get immediate updates about shipping, delivery dates, and order details without waiting for agent availability.

Transaction processing covers routine modifications like address changes, cancellations, and return initiation. The system handles these updates while maintaining proper security verification.

Authentication typically uses knowledge-based verification. Customers provide account numbers plus additional identifiers like zip codes or dates of birth for access verification.
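A minimal sketch of knowledge-based verification follows, assuming the customer record is a dictionary with `account_number` and `zip_code` fields (hypothetical field names). It normalizes the transcribed values before comparing, since speech recognition output often contains spaces or different casing, and uses a constant-time comparison from Python's standard library.

```python
import hmac
from typing import Dict


def _normalize(value: str) -> bytes:
    """Keep only letters and digits, uppercase them, and encode for comparison."""
    return "".join(ch for ch in value if ch.isalnum()).upper().encode("utf-8")


def verify_caller(record: Dict[str, str], spoken_account: str, spoken_zip: str) -> bool:
    """Return True only when both identifiers match the stored record."""
    account_ok = hmac.compare_digest(
        _normalize(record["account_number"]), _normalize(spoken_account)
    )
    zip_ok = hmac.compare_digest(_normalize(record["zip_code"]), _normalize(spoken_zip))
    return account_ok and zip_ok


# Invented example record.
record = {"account_number": "AC-1029", "zip_code": "94107"}
print(verify_caller(record, "ac 1029", "94107"))
print(verify_caller(record, "ac 1029", "94108"))
```

Both checks are always evaluated, so the response does not reveal which identifier was wrong.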

Challenge: Order confirmation codes and tracking numbers often contain mixed letters and numbers that speech recognition struggles with. Consider providing keypad input alternatives for these specific data types.
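The keypad fallback can be sketched as a format check on the transcribed code. The order ID format (two letters followed by six digits), the retry limit, and the `capture_order_id` helper are all assumptions for illustration; substitute the patterns your own systems use.

```python
import re
from typing import Optional, Tuple

# Hypothetical order ID format: two letters followed by six digits.
ORDER_ID = re.compile(r"[A-Z]{2}\d{6}")


def capture_order_id(
    transcript: str, attempts: int, max_voice_attempts: int = 2
) -> Tuple[str, Optional[str]]:
    """Accept a well-formed code, retry by voice, or switch to keypad entry."""
    candidate = re.sub(r"[^A-Za-z0-9]", "", transcript).upper()
    if ORDER_ID.fullmatch(candidate):
        return "accepted", candidate
    if attempts >= max_voice_attempts:
        return "switch_to_keypad", None
    return "ask_again", None


print(capture_order_id("A B 1 2 3 4 5 6", attempts=1))
print(capture_order_id("before 123456", attempts=1))
print(capture_order_id("before 123456", attempts=2))
```

A format check only catches malformed codes. A well-formed but wrong code still needs a read-back confirmation or a database lookup to detect.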

Implementation requirements and best practices

Technical infrastructure and performance requirements determine whether Voice AI succeeds or fails in production. Plan these as design constraints rather than post-deployment optimizations.

Architecture and integration patterns

API-first integration combines specialized components for optimal performance. You choose best-in-class speech recognition, language models, and synthesis while orchestration platforms coordinate the workflow. This approach gives you control over accuracy-critical components.

Platform-based solutions handle all components within integrated systems. These work well when you prioritize deployment speed over component optimization. The tradeoff is less control over individual performance characteristics.

Hybrid approaches use platforms for coordination but specialized APIs where accuracy matters most. This balances implementation speed with performance optimization for critical functions.

Integration requirements span multiple business systems. Voice AI needs real-time access to customer databases, scheduling platforms, order management, and knowledge bases. Plan for integration latency as part of your overall response time budget.

Performance and accuracy requirements

Test accuracy against your specific vocabulary rather than general benchmarks. Account numbers, product names, and customer identifiers in your business require validation with actual customer data.

Use appropriate models for different interaction types. Cost-effective models work for basic FAQ responses while critical transactions need higher-accuracy recognition. Match model capability to use case requirements rather than using one model everywhere.

Response time targets should stay under 800 milliseconds for natural conversation flow. Break this into components: speech recognition around 300 milliseconds, language processing, database queries, and speech synthesis.
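The budget arithmetic can be made explicit with a small check. Only the 800 millisecond total and the roughly 300 millisecond speech recognition figure come from this guide; the other component values below are placeholders that you would replace with measurements from your own stack.

```python
from typing import Dict

BUDGET_MS = 800


def budget_report(components: Dict[str, int], budget_ms: int = BUDGET_MS) -> Dict[str, object]:
    """Sum per-component latency and report the headroom against the budget."""
    total = sum(components.values())
    return {
        "total_ms": total,
        "headroom_ms": budget_ms - total,
        "within_budget": total <= budget_ms,
    }


# Placeholder measurements, except speech recognition at about 300 ms.
example = {
    "speech_recognition": 300,
    "language_processing": 250,
    "database_queries": 100,
    "speech_synthesis": 120,
}
print(budget_report(example))
```

With these placeholder numbers the total is 770 milliseconds, which leaves only 30 milliseconds of headroom. A single slow database query would push the turn over budget.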

Test with realistic audio conditions. Use actual phone connections with background noise rather than clean studio recordings. Your production performance will differ from published benchmarks based on real-world audio quality.

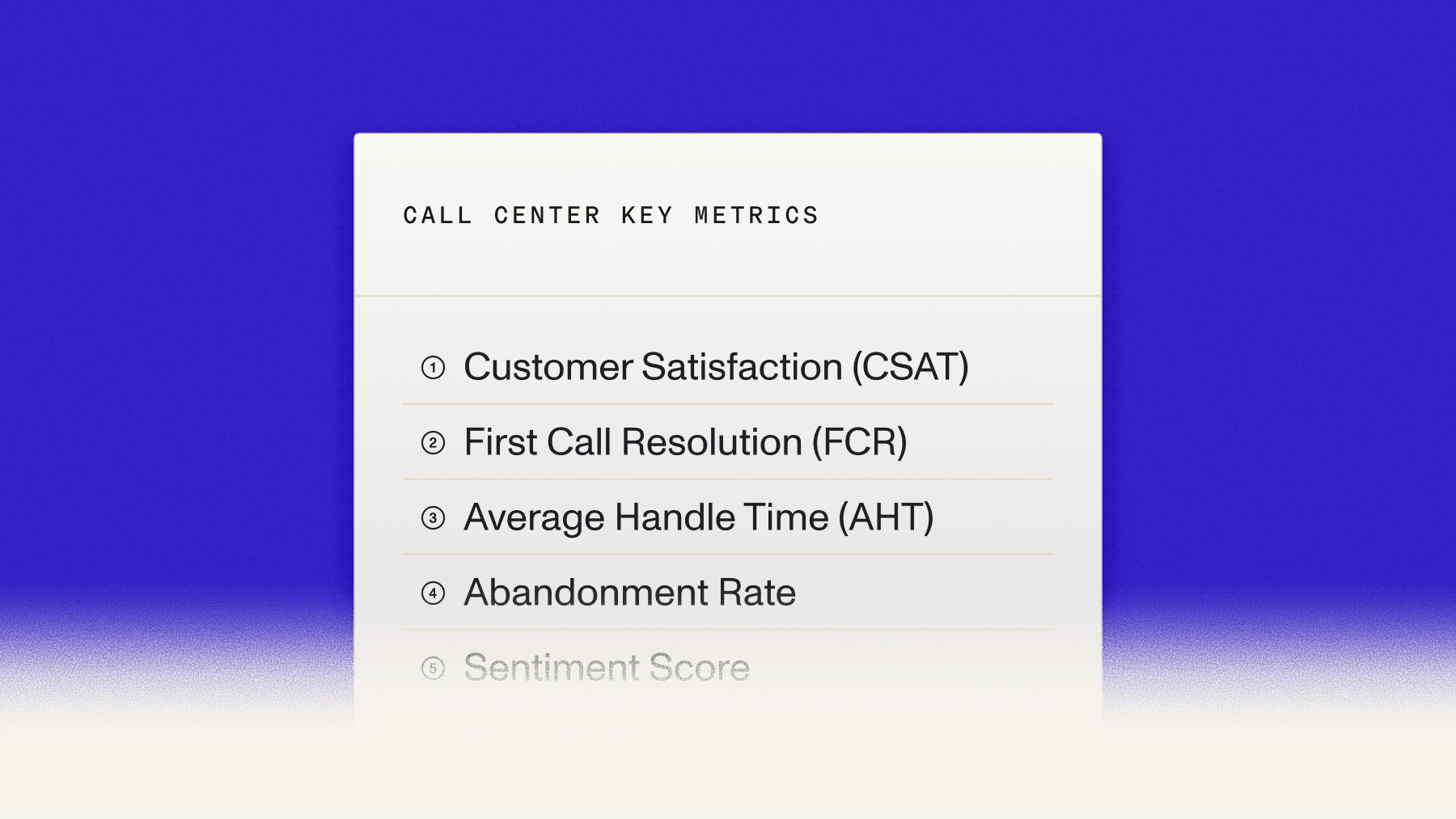

Monitor operational metrics that matter to your business:

- Resolution rate: Percentage of problems actually solved

- Escalation frequency: How often customers demand human agents

- Entity accuracy: Correct recognition of critical customer data
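These three metrics can be computed from per-call records along the following lines. The `CallRecord` fields are assumptions about what your logging captures. In particular, entity accuracy requires knowing the correct value for each identifier, which usually means comparing against human-reviewed transcripts.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    resolved: bool          # was the customer's problem actually solved?
    escalated: bool         # did the call transfer to a human agent?
    entities_expected: int  # identifiers the caller provided (per reviewed transcript)
    entities_correct: int   # identifiers the system captured correctly


def summarize(calls: List[CallRecord]) -> Dict[str, float]:
    """Compute resolution rate, escalation rate, and entity accuracy as fractions."""
    total = len(calls)
    if total == 0:
        return {"resolution_rate": 0.0, "escalation_rate": 0.0, "entity_accuracy": 0.0}
    expected = sum(c.entities_expected for c in calls)
    correct = sum(c.entities_correct for c in calls)
    return {
        "resolution_rate": sum(c.resolved for c in calls) / total,
        "escalation_rate": sum(c.escalated for c in calls) / total,
        "entity_accuracy": correct / expected if expected else 0.0,
    }


# Invented sample of four calls.
calls = [
    CallRecord(True, False, 2, 2),
    CallRecord(False, True, 2, 1),
    CallRecord(True, False, 0, 0),
    CallRecord(True, False, 1, 1),
]
print(summarize(calls))
```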

Common challenges and how to address them

Phone audio degrades speech recognition accuracy compared to clean, full-bandwidth recordings. Choose models trained on telephony data and validate performance with actual phone call recordings.

Turn detection problems create customer frustration. Cutting off customers mid-sentence or waiting too long for responses both harm the experience. Tune silence thresholds based on your conversation patterns rather than using default settings.
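The effect of the silence threshold can be seen in a simplified endpointing sketch. It assumes a voice activity detector has already labeled each fixed-length audio frame as voiced or silent. The frame length, the threshold values, and `end_of_turn_frame` itself are illustrative, and production turn detection often uses additional signals beyond silence.

```python
import math
from typing import List, Optional


def end_of_turn_frame(
    voiced: List[bool], frame_ms: int = 20, silence_ms: int = 600
) -> Optional[int]:
    """Return the index of the frame where the turn is judged complete, else None."""
    needed = math.ceil(silence_ms / frame_ms)  # consecutive silent frames required
    heard_speech = False
    silent_run = 0
    for i, is_voiced in enumerate(voiced):
        if is_voiced:
            heard_speech = True
            silent_run = 0
        elif heard_speech:  # silence before any speech is ignored
            silent_run += 1
            if silent_run >= needed:
                return i
    return None


# Caller speaks, pauses for 200 ms mid-thought, speaks again, then stops.
frames = [False] * 5 + [True] * 10 + [False] * 10 + [True] * 5 + [False] * 40
print(end_of_turn_frame(frames, silence_ms=600))
print(end_of_turn_frame(frames, silence_ms=200))
```

With the 600 millisecond threshold the turn ends only after the caller has finished. With the 200 millisecond threshold the same audio is cut off during the mid-thought pause, which is the failure mode that tuning is meant to avoid.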

Short utterance problems occur with brief responses like "yes," "no," or single numbers. Modern speech recognition models handle these more robustly, especially when you use prompting to indicate expected response types. For example, instructing the model to expect "yes/no" style responses improves accuracy. Add validation rules only as a fallback for edge cases where business risk requires additional verification.

Entity recognition of mixed alphanumeric codes improves significantly with built-in model features. Use keyterms_prompt and prompt parameters to provide examples of your order IDs, policy numbers, and account codes. This approach delivers better accuracy than building validation layers from scratch. Keep custom validation as a secondary option for compliance-critical flows where regulations require additional verification steps.

Legacy system integration often lacks real-time API access. Older contact center platforms weren't designed for millisecond response requirements. Plan integration delays into your latency budget and build fallback handling for system failures.
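Fallback handling for a slow backend can be sketched with a timeout wrapper. The sketch assumes the legacy lookup is exposed as an async function. `lookup_with_fallback`, the timeout value, and the fallback wording are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable


async def lookup_with_fallback(
    lookup: Callable[[], Awaitable[str]], timeout_s: float, fallback: str
) -> str:
    """Return the backend answer, or a fallback message if it is slow or unreachable."""
    try:
        return await asyncio.wait_for(lookup(), timeout=timeout_s)
    except (asyncio.TimeoutError, ConnectionError):
        return fallback


# Stand-in backends (hypothetical) that simulate a fast and a slow legacy system.
async def fast_backend() -> str:
    return "Your order shipped yesterday."


async def slow_backend() -> str:
    await asyncio.sleep(0.5)
    return "Your order shipped yesterday."


FALLBACK = "I'm having trouble reaching that system. Let me connect you to an agent."
print(asyncio.run(lookup_with_fallback(fast_backend, 0.2, FALLBACK)))
print(asyncio.run(lookup_with_fallback(slow_backend, 0.05, FALLBACK)))
```

Setting the timeout from your latency budget, rather than from the backend's typical response time, keeps the conversation moving even when the legacy system stalls.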

Final words

Voice AI transforms customer service by providing immediate, consistent support that scales with your business needs. The technology works when you respect its requirements and limitations rather than expecting perfect performance without proper implementation.

Successful deployments start with accurate speech recognition, especially for the specific terms and identifiers your customers use. AssemblyAI's Universal-3 Pro Streaming delivers consistent low latency for real-time customer conversations, while Universal-3 Pro provides the highest accuracy for asynchronous transcription of recorded calls. Universal-Streaming is optimized for streaming applications where millisecond response times matter most in support conversations.

Frequently asked questions

How does Voice AI handle customers with strong accents or speaking different languages?

Modern Voice AI systems trained on diverse speaker data handle common accent variations effectively, but accuracy decreases with heavily accented speech over compressed phone connections. For multilingual support, test performance with the specific languages and dialects your customer base uses rather than relying on general language support claims.

What happens when Voice AI can't understand what a customer is saying?

Well-designed systems use confidence scoring to identify uncertain interpretations and ask for clarification instead of guessing. When confidence drops below threshold levels, the system should acknowledge the difficulty and either request rephrasing or transfer to a human agent rather than proceeding with potentially incorrect actions.

Can Voice AI access customer account information securely during calls?

Voice AI systems authenticate customers using knowledge-based verification like account numbers combined with additional identifiers before accessing sensitive information. The systems integrate with existing customer databases through secure APIs while maintaining audit trails of all account access for compliance requirements.

How accurate does speech recognition need to be for customer service applications?

Focus on entity accuracy for your specific business data rather than overall transcription accuracy. Account numbers, order identifiers, and customer names must be recognized correctly for the system to function, while minor errors in conversational words usually don't impact successful problem resolution.

What customer service tasks should you avoid automating with Voice AI?

Avoid automating emotionally charged situations, complex problem-solving that requires human judgment, or interactions involving sensitive topics like medical information or financial hardship. These situations benefit from human empathy and decision-making that current Voice AI cannot replicate effectively.
