How accurate is speech-to-text in 2026?

Discover speech-to-text accuracy rates in 2026, measurement methods, real-world benchmarks, and optimization strategies for developers building voice-enabled applications.

Speech-to-text accuracy determines whether AI applications succeed or fail in production. As early research demonstrated, there is a direct correlation between lower error rates and a user's ability to complete tasks. Whether you're building meeting transcription, contact center analytics, or voice assistants, accuracy directly shapes how users experience your product—and whether they stick with it.

This guide covers current speech-to-text accuracy benchmarks, measurement methods, and optimization strategies for developers. You'll learn how accuracy is measured, what factors affect it, how to set realistic expectations for your use case, and how to optimize transcription quality in production environments.

Modern speech recognition systems achieve over 90% accuracy in optimal conditions. However, the real story is more nuanced—accuracy varies dramatically based on audio quality, accents, domain-specific terminology, and real-world conditions that benchmarks don't always capture.

What is speech-to-text accuracy?

Speech-to-text accuracy is the measure of how precisely an AI model converts spoken words into written text, expressed as a percentage where 100% means a perfect, error-free transcript. It's the foundational metric for evaluating any speech recognition system, and it directly determines whether your application produces output that's useful or frustrating. A gap of just 5-10 percentage points can separate a transcript users trust from one they have to correct by hand.

But here's where it gets interesting—accuracy isn't just about getting words right. Modern speech recognition systems must handle punctuation, capitalization, speaker changes, background noise, and context-dependent phrases. A system might correctly transcribe "there," "their," and "they're" phonetically but still fail if it chooses the wrong spelling for the context.

An 85% accurate system produces about 15 errors per 100 words, making transcripts difficult to read and requiring significant manual cleanup. A 95% accurate system produces only 5 errors per 100 words—often just minor punctuation or formatting issues that don't impede understanding.

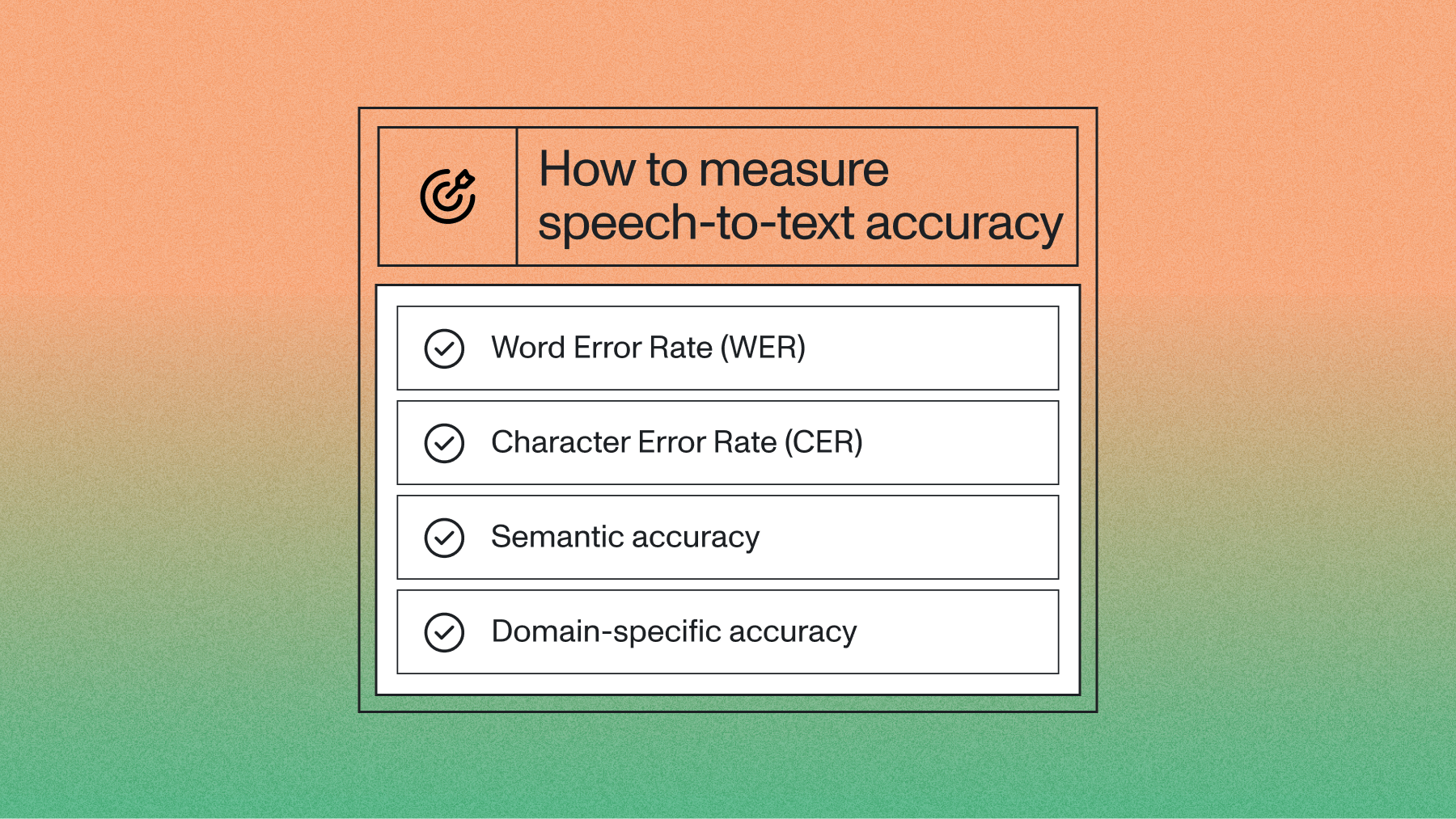

How is speech-to-text accuracy measured?

Understanding how accuracy is measured helps you evaluate providers, set realistic expectations, and choose the right metric for your use case.

Word Error Rate (WER)

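The industry standard for measuring speech recognition accuracy is Word Error Rate (WER). WER counts the word-level substitutions, insertions, and deletions needed to turn the model's output into the reference transcript, then expresses that count as a percentage of the words in the reference. Because insertions count as errors, WER can exceed 100% on very poor transcripts.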

Here's how WER calculation works:

WER Formula: (Substitutions + Insertions + Deletions) / Total Words in Reference × 100

Example calculation:

- Reference transcript: "The quick brown fox jumps over the lazy dog" (9 words)

- AI transcript: "The quick brown fox jumped over a lazy dog" (9 words)

- Errors: 2 substitutions ("jumps" → "jumped" and "the" → "a"), 0 insertions, 0 deletions

- WER: (2 errors ÷ 9 total words) × 100 = 22.2%

- Accuracy: 100% - 22.2% = 77.8%
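The calculation above can be reproduced with a short script. This is a minimal sketch that uses a word-level edit distance (Levenshtein) and only lowercases the text before comparing; the function name `word_error_rate` is illustrative, and production scoring tools usually apply more normalization than this.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER as a percentage, using word-level edit distance."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref) * 100


reference = "The quick brown fox jumps over the lazy dog"
hypothesis = "The quick brown fox jumped over a lazy dog"
wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.1f}%  Accuracy: {100 - wer:.1f}%")
# prints "WER: 22.2%  Accuracy: 77.8%"
```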

Beyond WER: Real-world accuracy metrics

WER provides a standardized comparison, but it doesn't tell the complete story. Other important metrics include:

Character Error Rate (CER)

Measures accuracy at the character level rather than word level. Useful for languages without clear word boundaries.
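As a rough illustration, CER can be computed with the same edit-distance idea applied to characters instead of words. The sketch below is an assumption about one reasonable implementation, not a standard library API: it lowercases the text and counts spaces as characters.

```python
def char_error_rate(reference: str, hypothesis: str) -> float:
    """Return CER as a percentage, using character-level edit distance."""
    ref = list(reference.lower())
    hyp = list(hypothesis.lower())
    if not ref:
        raise ValueError("reference must contain at least one character")

    prev = list(range(len(hyp) + 1))
    for i, ref_char in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_char in enumerate(hyp, start=1):
            cost = 0 if ref_char == hyp_char else 1
            curr[j] = min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost)
        prev = curr
    return prev[-1] / len(ref) * 100


# "jumps" -> "jumped" needs one substitution (s -> e) and one insertion (d):
# 2 edits over 5 reference characters.
print(f"{char_error_rate('jumps', 'jumped'):.1f}%")  # prints "40.0%"
```

Note how the same mistake that costs a full word in WER costs only two characters here, which is why CER tends to be more forgiving of near-miss spellings.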

Semantic accuracy

Evaluates whether the meaning is preserved, even if specific words differ. "Cannot" vs "can't" might register as a WER error but convey identical meaning.

Semantic Word Error Rate (Semantic WER)

An emerging metric that uses an LLM as a judge to evaluate whether meaning is preserved, rather than checking word-for-word accuracy. Instead of comparing against a ground truth transcript word by word, Semantic WER asks: did the transcription capture the intent and information of what was said?

Domain-specific accuracy

How well the system handles specialized terminology in fields like medical, legal, or technical domains.

The gap between word-level and meaning-level accuracy matters enormously for modern AI-native applications. When a voice agent receives a transcript and passes it to an LLM, a substitution like "yep" for "yes" or "cannot" for "can't" has zero impact on what the LLM understands, yet both register as errors in traditional WER.

Frameworks like Pipecat's open-source STT benchmark are standardizing Semantic WER as an evaluation tool, using reasoning models as judges to reduce scoring bias.

One important practical insight: in voice agent contexts, a substitution (a plausible guess) is often preferable to a deletion (a missed word). A deletion can cause a "hanging" turn where the agent receives nothing and the conversation stalls. Traditional WER treats both error types identically (S=1, D=1), but Semantic WER and use-case-specific weighting can reflect that distinction.
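One way to express use-case-specific weighting is to count substitutions, deletions, and insertions separately and then apply different weights. The sketch below is illustrative only: the function names and the example weight of 2.0 for deletions are assumptions, and the counts come from a single minimum-edit alignment (other equally short alignments may split the error types differently).

```python
def count_errors(ref_words, hyp_words):
    """Return (substitutions, deletions, insertions) from one minimum-edit alignment."""
    rows, cols = len(ref_words) + 1, len(hyp_words) + 1
    # Each cell stores (total_edits, substitutions, deletions, insertions).
    table = [[(0, 0, 0, 0)] * cols for _ in range(rows)]
    for i in range(1, rows):
        table[i][0] = (i, 0, i, 0)  # only deletions
    for j in range(1, cols):
        table[0][j] = (j, 0, 0, j)  # only insertions

    for i in range(1, rows):
        for j in range(1, cols):
            total, subs, dels, ins = table[i - 1][j - 1]
            if ref_words[i - 1] == hyp_words[j - 1]:
                diagonal = (total, subs, dels, ins)          # match
            else:
                diagonal = (total + 1, subs + 1, dels, ins)  # substitution
            total, subs, dels, ins = table[i - 1][j]
            deletion = (total + 1, subs, dels + 1, ins)
            total, subs, dels, ins = table[i][j - 1]
            insertion = (total + 1, subs, dels, ins + 1)
            table[i][j] = min(diagonal, deletion, insertion, key=lambda c: c[0])

    _, subs, dels, ins = table[-1][-1]
    return subs, dels, ins


def weighted_error_rate(reference, hypothesis,
                        sub_weight=1.0, del_weight=1.0, ins_weight=1.0):
    """Return a weighted error rate as a percentage of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    subs, dels, ins = count_errors(ref, hyp)
    weighted = sub_weight * subs + del_weight * dels + ins_weight * ins
    return weighted / len(ref) * 100


reference = "yes please cancel my order"
with_substitution = "yep please cancel my order"  # plausible guess
with_deletion = "please cancel my order"          # missed word

# Traditional WER: both errors cost the same (20% each).
print(weighted_error_rate(reference, with_substitution))
print(weighted_error_rate(reference, with_deletion))

# Hypothetical voice-agent weighting: a deletion costs twice as much.
print(weighted_error_rate(reference, with_deletion, del_weight=2.0))
```

With equal weights the function reduces to ordinary WER, so it can be used as a drop-in comparison against standard scores.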

Real-world vs. benchmark accuracy: Setting realistic expectations

There's often a significant gap between the accuracy numbers you see in marketing materials and what you'll experience in a production environment. Benchmarks use clean, standardized audio, but the real world is messy.

Companies like Veed and CallSource that build products on top of Voice AI know that real-world performance is the only metric that matters for user satisfaction. Your users aren't speaking in a recording studio—they're on conference calls with spotty internet, in noisy cars, or using low-quality microphones.

WER also penalizes formatting choices. A model that transcribes "I cannot" will score differently than one that outputs "I can't," even when both are correct. Models that add punctuation, capitalize proper nouns, or annotate speaker labels may receive a higher (worse) WER against a bare ground truth, despite producing a more useful transcript.

Ground truth quality is another hidden variable in most published benchmarks. Human-transcribed reference files contain inconsistencies—missed disfluencies, formatting preferences, ordering differences—that inflate WER for models that transcribe more faithfully or differently.

When reviewing benchmark claims, ask: how was the ground truth generated, and was it normalized before scoring? These choices can shift WER by several percentage points, making comparisons across providers misleading without a controlled, identical evaluation setup.
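To make the normalization question concrete, here is a minimal sketch of the kind of text normalization that is commonly applied to both the reference and the model output before scoring. The contraction map is a small, assumed example rather than a complete list, and real evaluation pipelines typically also normalize numbers, spellings, and disfluencies.

```python
import re

# Assumed, illustrative contraction map; extend it for your own data.
CONTRACTIONS = {
    "can't": "cannot",
    "won't": "will not",
    "it's": "it is",
    "i'm": "i am",
}


def normalize(text: str) -> list:
    """Lowercase, strip punctuation, and expand a few contractions."""
    text = text.lower().replace("\u2019", "'")  # curly to straight apostrophe
    text = re.sub(r"[^\w\s']", " ", text)       # drop punctuation except '
    words = []
    for token in text.split():
        words.extend(CONTRACTIONS.get(token, token).split())
    return words


reference = "I cannot attend, sorry."
hypothesis = "i can't attend sorry"

print(reference.split() == hypothesis.split())        # prints "False"
print(normalize(reference) == normalize(hypothesis))  # prints "True"
```

Without normalization this pair differs on three of four reference words (capitalization, the contraction, and the attached comma); after normalization the two transcripts are identical.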

The key takeaway: always test any speech-to-text model with audio that represents your actual use case. This is the only way to set realistic expectations and choose a provider that delivers the quality your application needs.

Human vs AI accuracy: Setting realistic expectations

When evaluating speech-to-text models, human transcription serves as the ultimate benchmark. Professional human transcriptionists achieve near-perfect accuracy in optimal conditions—they bring a lifetime of contextual knowledge that allows them to decipher heavily mumbled words, navigate complex cross-talk, and interpret severe background noise.

Modern Voice AI models have closed this gap dramatically. For example, AssemblyAI's Universal-3 Pro is engineered for high accuracy on challenging audio, getting names, account numbers, medical terms, and accented speech right where other models may approximate.

The trade-off between human-level accuracy and speed becomes critical when building real-time applications. If you're developing a voice agent, you can't wait for a human to transcribe audio; you need immediate, highly accurate speech understanding so your LLM responds to what was actually said.

This is where AssemblyAI's real-time models excel. For voice agents that need immediate, highly accurate speech understanding, AssemblyAI provides the foundational STT component with models like Universal-3 Pro Streaming. Developers can integrate our high-accuracy streaming STT with third-party services for LLM reasoning and text-to-speech (TTS) to build a complete, robust speech-to-speech pipeline. Getting the STT input right is critical, because if the transcription is wrong, the entire downstream agent responds incorrectly.

The question isn't whether AI can match humans perfectly. It's whether AI accuracy is sufficient for your specific use case while delivering the speed and scale your application requires.

Current accuracy landscape and realistic expectations

Industry-standard datasets

Most accuracy claims reference performance on standardized datasets:

LibriSpeech: Clean, read speech from audiobooks. Models typically achieve 95%+ accuracy on this dataset, but it doesn't reflect real-world conditions.

Common Voice: More diverse speakers and accents, representing realistic usage patterns. Accuracy rates are generally 5-10 percentage points lower than LibriSpeech.

Switchboard: Conversational telephone speech, which is significantly more challenging due to crosstalk, hesitations, and informal language.

Understanding these datasets matters when interpreting accuracy claims. A model that performs well on LibriSpeech may struggle with your contact center audio—and vice versa.

The best practice: always test any model against audio that represents your actual use case. Benchmark scores are a starting point, not a verdict.

Factors that impact speech-to-text accuracy

Understanding what affects accuracy helps you optimize your implementation and set realistic expectations. The factors fall into three main categories.

Audio quality factors

- Microphone quality: Higher-quality microphones capture clearer audio signals. Built-in laptop microphones typically produce lower accuracy than dedicated USB microphones or headsets.

- Background noise: Even moderate noise from traffic, air conditioning, or office chatter causes transcription errors—particularly for quieter speakers.

- Audio compression: Heavily compressed formats like low-bitrate MP3s introduce artifacts that confuse speech recognition models.

- Recording environment: Hard surfaces create echo and reverberation; soft furnishings absorb sound and improve clarity.

Speaker-related factors

- Accent and dialect: Models trained on limited accent data may struggle with regional variation, though modern systems handle diverse accents better than earlier generations.

- Speaking pace: Very fast or very slow speech reduces accuracy. Most systems perform best at natural, conversational speeds.

- Pronunciation clarity: Mumbling or slurred speech significantly impacts accuracy regardless of model quality.

- Voice characteristics: Pitch, tone, and speech patterns affect how easily AI systems process a given voice.

Content and context factors

- Vocabulary complexity: Simple conversational language achieves higher accuracy than technical jargon or specialized terminology.

- Proper nouns: Names of people, companies, or places often cause errors, especially if they're outside the model's training vocabulary. Evaluations should therefore track entity-level metrics for proper nouns and structured items (emails, addresses, websites) in addition to general WER.

- Numbers and dates: Disambiguating "fifteen" vs "fifty" or date formats requires context that models don't always have.

- Language mixing: Code-switching between languages within a conversation reduces accuracy for most models.

Industry applications and accuracy requirements

Different use cases have varying accuracy requirements based on their tolerance for errors and the cost of mistakes. Choosing the right metric matters as much as the target number itself.

Contact centers and customer service

Accuracy requirement: 90%+ for automated systems, 85%+ for agent assistance

Contact centers processing thousands of calls daily need high accuracy for sentiment analysis, compliance monitoring, and automated responses. Even small improvements in accuracy can significantly impact customer satisfaction and operational efficiency. For example, a McKinsey report found that deploying speech analytics can lead to customer satisfaction score improvements of 10 percent or more and operational cost savings of 20 to 30 percent. Market data shows that 76% of winning teams prioritize accuracy over cost. Builder confidence in voice AI stands at 82%, yet user satisfaction sits at only 55%, which leaves a sizable gap between what teams expect and what users experience.

Meeting transcription and note-taking

Accuracy requirement: 88%+ for readable transcripts, 92%+ for searchable archives

Meeting transcription tools must balance accuracy with real-time performance. The speed of AI is a key advantage here; research from McKinsey highlights that automated transcription can accelerate analysis time by nearly 400 percent compared to traditional methods. Users typically accept minor errors in live transcripts but expect higher accuracy in final processed versions.

Voice assistants and commands

Accuracy requirement: 95%+ for critical commands, 90%+ for general queries

Voice assistants need extremely high accuracy for important actions like making purchases or sending messages. For voice agents that pass transcripts directly to LLMs, Semantic WER is often a better evaluation metric—meaning preservation matters more than word-level perfection, and a missed word that causes a turn hang is far more damaging than a minor substitution.

Legal and medical transcription

Accuracy requirement: 98%+ due to regulatory and safety requirements

High-stakes domains require near-perfect accuracy because errors can have serious legal or medical consequences. To illustrate the risk, one study found that one in every 250 words in an AI-assisted clinical document contained a clinically significant error. Medical teams increasingly rely on Keyword WER (KW_WER) and Missed Entity Rate alongside traditional WER. AssemblyAI's Universal-3 Pro Medical Mode demonstrates this approach with a 4.9% medical entity error rate versus Deepgram's 7.3%, showing that specialized models tailored for medical terminology can deliver superior accuracy where it matters most. Since a missed drug name or dosage is far more consequential than a missed filler word, these applications often combine AI transcription with human review.
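An entity-focused metric can be sketched in a few lines. The version below is one possible, simplified definition of a missed entity rate (the share of expected key terms that never appear in the transcript); it is an assumption for illustration, not the formula used by any particular vendor, and it relies on plain substring matching.

```python
def missed_entity_rate(reference: str, hypothesis: str, key_terms: list) -> float:
    """Return the percentage of key terms present in the reference
    but absent from the hypothesis (simple substring matching)."""
    ref = reference.lower()
    hyp = hypothesis.lower()
    # Only score terms that were actually spoken according to the reference.
    expected = [term for term in key_terms if term.lower() in ref]
    if not expected:
        return 0.0
    missed = [term for term in expected if term.lower() not in hyp]
    return len(missed) / len(expected) * 100


reference = "Patient takes metformin 500 mg for diabetes and has AFib"
hypothesis = "patient takes met forman 500 mg for diabetes and has afib"
key_terms = ["metformin", "AFib", "GERD"]  # GERD is not in this reference

print(missed_entity_rate(reference, hypothesis, key_terms))  # prints "50.0"
```

Here the overall WER looks modest, yet half of the clinically important terms are wrong, which is the kind of gap an entity-level metric is meant to expose.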

Confidence scoring and accuracy monitoring

No AI model is perfect, so how do you handle the inevitable errors? This is where confidence scores come in. For each word transcribed, a speech recognition model can provide a confidence score—a value typically between 0.0 and 1.0—that represents its certainty about that specific word.

As a developer, you can use these scores to build more robust applications:

- Flag low-confidence words: Automatically highlight words with a confidence score below a certain threshold (e.g., 0.85) in the user interface, signaling to the user that the word may be incorrect.

- Trigger human review: If the average confidence score for a transcript is low, automatically route it to a human-in-the-loop workflow for review and correction. This is critical for high-stakes applications like those built by JusticeText for legal evidence.

- Analyze error patterns: Monitor which types of audio consistently produce low-confidence scores to identify opportunities for improving audio quality or implementing custom vocabulary.

Confidence scores transform accuracy from a simple percentage into an actionable tool for improving application reliability and user trust.
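The first two patterns can be sketched as follows. The word structure (a list of dictionaries with `text` and `confidence` keys), the function names, and the thresholds are assumptions for illustration; adapt them to the response format of whichever API you use.

```python
from statistics import mean


def flag_low_confidence(words, threshold=0.85):
    """Return the words whose confidence falls below the threshold."""
    return [w["text"] for w in words if w["confidence"] < threshold]


def needs_human_review(words, min_average=0.90):
    """Return True when the transcript's average confidence is too low."""
    return mean(w["confidence"] for w in words) < min_average


# Hypothetical word-level output from a speech-to-text API.
words = [
    {"text": "schedule", "confidence": 0.98},
    {"text": "a", "confidence": 0.99},
    {"text": "follow-up", "confidence": 0.93},
    {"text": "with", "confidence": 0.97},
    {"text": "Nguyen", "confidence": 0.62},
    {"text": "on", "confidence": 0.96},
    {"text": "Thursday", "confidence": 0.81},
]

print(flag_low_confidence(words))  # prints "['Nguyen', 'Thursday']"
print(needs_human_review(words))   # prints "True"
```

The right thresholds depend on your audio and your tolerance for errors, so it is worth tuning them against transcripts you have already reviewed by hand.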

Improving speech-to-text accuracy in your applications

Pre-processing optimization

Audio enhancement: Clean up audio before transcription by reducing background noise, normalizing volume levels, and filtering out artifacts.

Format optimization: Use uncompressed or lightly compressed audio formats when possible. WAV files typically produce better results than heavily compressed MP3s.

Segmentation: Break long audio files into smaller segments to improve processing and accuracy, particularly for batch transcription tasks.
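For the segmentation step, a minimal sketch using Python's standard-library `wave` module is shown below. It assumes uncompressed PCM WAV input and splits at fixed intervals; the function name and output naming scheme are illustrative. Fixed-length cuts can land in the middle of a word, so splitting at silences is usually preferable when you have a way to detect them.

```python
import wave


def split_wav(path: str, segment_seconds: float, out_prefix: str) -> list:
    """Split a PCM WAV file into fixed-length segments and return their paths."""
    if segment_seconds <= 0:
        raise ValueError("segment_seconds must be positive")

    out_paths = []
    with wave.open(path, "rb") as src:
        params = src.getparams()
        frames_per_segment = int(src.getframerate() * segment_seconds)
        index = 0
        while True:
            frames = src.readframes(frames_per_segment)
            if not frames:
                break  # reached the end of the source file
            out_path = f"{out_prefix}_{index:03d}.wav"
            with wave.open(out_path, "wb") as dst:
                # Copy channels, sample width, and rate; the frame count
                # in the header is corrected when the data is written.
                dst.setparams(params)
                dst.writeframes(frames)
            out_paths.append(out_path)
            index += 1
    return out_paths
```

For example, `split_wav("meeting.wav", 60, "meeting_part")` (with a hypothetical file name) would write `meeting_part_000.wav`, `meeting_part_001.wav`, and so on, each up to one minute long.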

Implementation best practices

- Keyterms Prompting: Provide a list of domain-specific terms—product names, acronyms, proper nouns—to improve recognition accuracy. Example: Terms: Nguyen, Beauchamp, GERD, AFib. Before: "afib/gerd" → After: "AFib/GERD". The number of supported terms depends on the model: Universal-3 Pro supports up to 1,000 words for pre-recorded audio, while Universal-3 Pro Streaming supports up to 100 words for real-time use cases.

- Contextual guidance via prompting: Models like Universal-3 Pro accept natural language prompts that let you specify the audio's domain, formatting preferences, and how to handle disfluencies—no model retraining required.

- Confidence scoring: Use confidence scores to identify potentially inaccurate transcriptions and flag them for human review or additional processing.

- Multi-pass processing: Run important audio through multiple models or processing passes, then combine results to improve overall accuracy.

Quality assurance strategies

Human-in-the-loop validation: For critical applications, implement human review processes for low-confidence transcriptions or high-importance content.

Error pattern analysis: Track common error types in your specific use case and adjust preprocessing or post-processing to address them.

Continuous monitoring: Monitor accuracy metrics over time to identify degradation or opportunities for improvement.

Measuring and monitoring accuracy in production

Once you've implemented speech-to-text in your application, ongoing measurement ensures consistent performance:

- Establish baselines: Test your implementation with representative audio samples to establish accuracy baselines for your specific use case.

- Choose the right metric for your use case: Traditional WER is appropriate when humans read the output directly. Semantic WER is better for voice agents and LLM-powered pipelines where meaning preservation matters more than exact word matching.

- Track confidence distributions: Monitor the distribution of confidence scores over time—shifting patterns may indicate audio quality changes or model drift.

- User feedback integration: Collect user corrections and feedback to understand where your system struggles most in real-world usage.

- A/B testing: Compare different models, settings, or preprocessing approaches using controlled tests with identical audio samples.
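Tracking confidence distributions can start very simply. The sketch below summarizes a batch of word-level confidence scores and compares it with a baseline batch; the function names, the 0.85 threshold, and the 10-point tolerance are assumptions chosen for illustration rather than recommended values.

```python
from statistics import mean


def summarize(confidences, threshold=0.85):
    """Summarize a batch of confidence scores."""
    return {
        "mean": mean(confidences),
        "low_share": sum(c < threshold for c in confidences) / len(confidences),
    }


def has_drifted(baseline, current, max_low_share_increase=0.10):
    """Return True when the share of low-confidence words grew too much."""
    return current["low_share"] - baseline["low_share"] > max_low_share_increase


# Hypothetical scores collected during baseline testing and in production.
baseline = summarize([0.97, 0.95, 0.91, 0.88, 0.99])
this_week = summarize([0.93, 0.80, 0.72, 0.96, 0.84])

print(has_drifted(baseline, this_week))  # prints "True"
```

A drift flag like this does not say what changed, only that something did; the usual next step is to listen to a sample of the affected audio and check for new noise sources, devices, or vocabulary.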

The future of speech-to-text accuracy

Speech recognition accuracy continues to improve through several technological advances:

- Larger training datasets: Models trained on more diverse, extensive datasets handle edge cases and accents better than previous generations. As recent research demonstrates, leveraging a massive dataset can cause Word Error Rate to drop from 24.3% to just 7.5% for the same model architecture.

- Semantic and task-oriented evaluation: As transcripts increasingly feed directly into LLMs and AI agents, the industry is shifting toward evaluation frameworks that measure meaning preservation rather than word-level accuracy. Open benchmarks like Pipecat's semantic WER framework are standardizing this approach.

- Multimodal approaches: Combining audio with visual cues (like lip reading) or contextual information improves accuracy in challenging conditions.

- Real-time adaptation: Models that adapt to individual speakers or specific contexts during use, learning and improving throughout a conversation.

- Edge processing: Running speech recognition locally on devices reduces latency and can improve accuracy for personalized use cases.

Today's speech-to-text accuracy enables practical applications across industries. Success depends on understanding your specific use case, audio conditions, and user requirements—including which accuracy metric is actually the right signal for how your application uses transcripts.

Ready to test accuracy with your own audio?

Try our API for free and experience how Universal-3 Pro handles your specific use case.

Frequently asked questions about speech-to-text accuracy

How does Word Error Rate relate to user experience?

WER directly impacts user experience: high WER (25%+) creates unreadable transcripts requiring heavy cleanup, while low WER (under 10%) produces output users can trust with minimal editing. For applications where transcripts feed into LLMs or voice agents, Semantic WER is often a better proxy for real-world quality.

What is considered a good Word Error Rate (WER)?

A WER of 5-10% is considered high quality for most applications. Anything above 30% indicates poor performance that will frustrate users and require significant manual correction. Industry surveys confirm that accuracy failures are a primary driver of user frustration with voice AI systems.

How can I make speech-to-text more accurate?

The most effective improvements are better input audio quality (higher-quality microphones, reduced background noise), uncompressed audio formats, and providing the model with domain-specific vocabulary for terms, names, and acronyms unique to your use case.

When should I use Semantic WER instead of traditional WER?

Use Semantic WER when transcripts feed into downstream AI systems rather than being read directly by humans—voice agents and LLM-powered pipelines care about meaning preservation, not word-for-word matching.

How does accuracy affect voice agent performance?

For voice agents, speech-to-text accuracy is foundational—your agent can only respond to what it actually hears, and a missed or misheard word can stall the conversation entirely. This is why voice agents built on high-accuracy, real-time models like Universal-3 Pro Streaming deliver noticeably more natural conversations than those built on general-purpose speech recognition. Superior STT accuracy is the foundation for the entire voice AI stack, from initial speech understanding through LLM reasoning to final response generation.
