Noise cancellation with speech-to-text: The pros and cons

Noise cancellation can improve voice agent conversations but hurt transcription accuracy. Learn when to use it, when to skip it, and why VAD tuning should come first.

If you're building a voice agent that operates in noisy environments such as call centers, drive-throughs, or outdoor kiosks, your first instinct is probably to clean the audio before sending it to your speech-to-text model. But recent research and real-world production experience point to a counterintuitive result: noise cancellation can improve your voice agent's conversational behavior while simultaneously degrading its transcription accuracy. Whether it helps or hurts depends on where and how you apply it in your pipeline.

In this post, we'll break down what noise cancellation actually does in an STT pipeline, when it helps, when it hurts, and how it compares to Voice Activity Detection (VAD) tuning, another approach that's often more effective and entirely free.

How noise cancellation fits into an STT pipeline

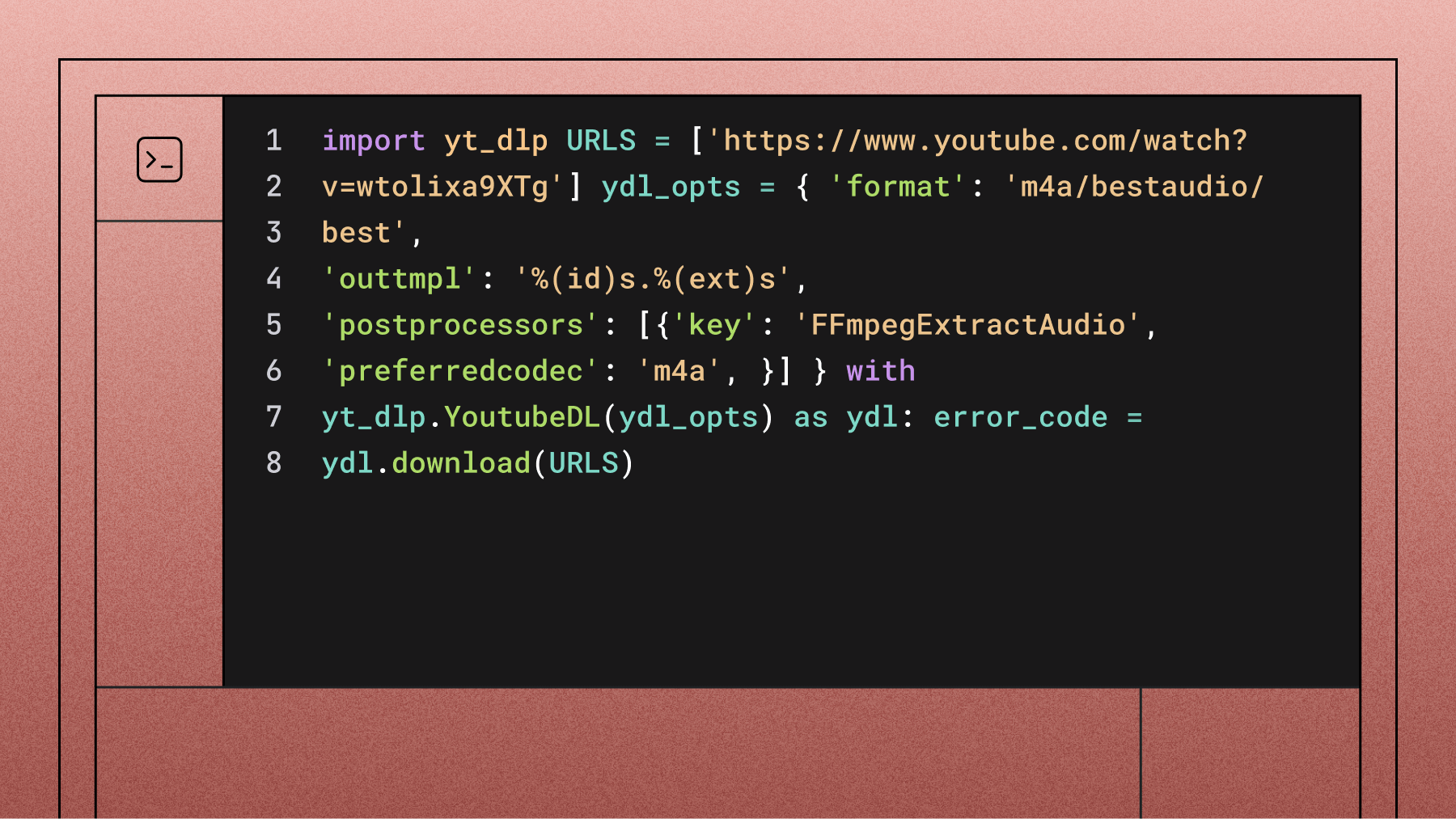

Before diving into pros and cons, it helps to understand where noise cancellation sits in the broader audio processing pipeline. A typical voice agent pipeline processes audio through several stages:

- Acoustic Echo Cancellation (AEC) removes the agent's own voice from the microphone input, preventing the system from "hearing itself." This must run on-device because it's extremely latency-sensitive.

- Noise suppression/cancellation filters background noise—traffic, HVAC hum, crowd chatter, secondary speakers—from the audio signal. This is the stage we're focused on.

- Voice Activity Detection (VAD) analyzes the audio to determine whether a human is currently speaking. This controls turn-taking: when the agent should listen, when it should talk, and when the user has been interrupted.

- Audio segmentation chunks the audio stream into segments based on VAD output, preparing it for the STT model.

- Speech-to-text transcribes the audio segments into text.

The critical architectural question is: which of these stages should receive the noise-cancelled audio?

There are two main approaches. In the first, noise-cancelled audio flows through everything—VAD, segmentation, and STT all operate on the cleaned signal. In the second, you split the pipeline: noise-cancelled audio feeds into VAD and turn-taking decisions, while the original, unprocessed audio goes to the STT model.
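Here's a minimal Python sketch of the two routing options, assuming a simple frame-by-frame loop. `VoicePipeline`, `denoise`, `is_speech`, and `transcribe` are hypothetical names standing in for your NC model, VAD, and STT client, not any vendor's API. AEC and real streaming I/O are left out, and segmentation is deliberately crude (a segment ends at the first non-speech frame).

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Frame = List[float]  # one short chunk of audio samples (e.g. 20 ms)


@dataclass
class VoicePipeline:
    """Toy pipeline: NC -> VAD -> segmentation -> STT (AEC omitted)."""

    denoise: Callable[[Frame], Frame]         # hypothetical NC model
    is_speech: Callable[[Frame], bool]        # hypothetical VAD decision
    transcribe: Callable[[List[Frame]], str]  # hypothetical STT client
    nc_to_stt: bool = False                   # False = split pipeline

    def run(self, frames: Sequence[Frame]) -> List[str]:
        transcripts: List[str] = []
        segment: List[Frame] = []
        for raw in frames:
            clean = self.denoise(raw)
            # Turn-taking always sees the noise-cancelled signal.
            if self.is_speech(clean):
                # Only the STT input differs between the two architectures.
                segment.append(clean if self.nc_to_stt else raw)
            elif segment:
                # Speech just ended: close the segment and send it to STT.
                transcripts.append(self.transcribe(segment))
                segment = []
        if segment:
            transcripts.append(self.transcribe(segment))
        return transcripts
```

Flipping `nc_to_stt` is the only change between the two designs: the VAD decisions are identical, and only the audio handed to `transcribe` differs.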

That distinction makes all the difference.

The pros of noise cancellation

Better turn-taking and barge-in detection

This is where noise cancellation delivers its clearest value. In voice agent conversations, background noise frequently triggers false "speech started" events—the system thinks the user is talking when they're not. A cough gets picked up as "um." Traffic noise causes the agent to stop mid-sentence. The conversation becomes a frustrating series of accidental interruptions.

Noise cancellation addresses this by cleaning the audio signal before the VAD makes its speech/no-speech decision. With cleaner input, the VAD produces fewer false positives.

Krisp's testing data shows that its Background Voice Cancellation (BVC) reduces false-positive VAD triggers by 3.5x on average. When using its BVC-VAD pipeline, Krisp also reports more than a 2x improvement in Word Error Rate on the AMI dataset, a widely used benchmark of roughly 100 hours of multi-party meeting recordings with overlapping speech, multiple speakers, and realistic background noise. For voice agents, this translates directly to smoother conversations, fewer accidental interruptions, and more natural turn-taking.

Reduced hallucination triggers

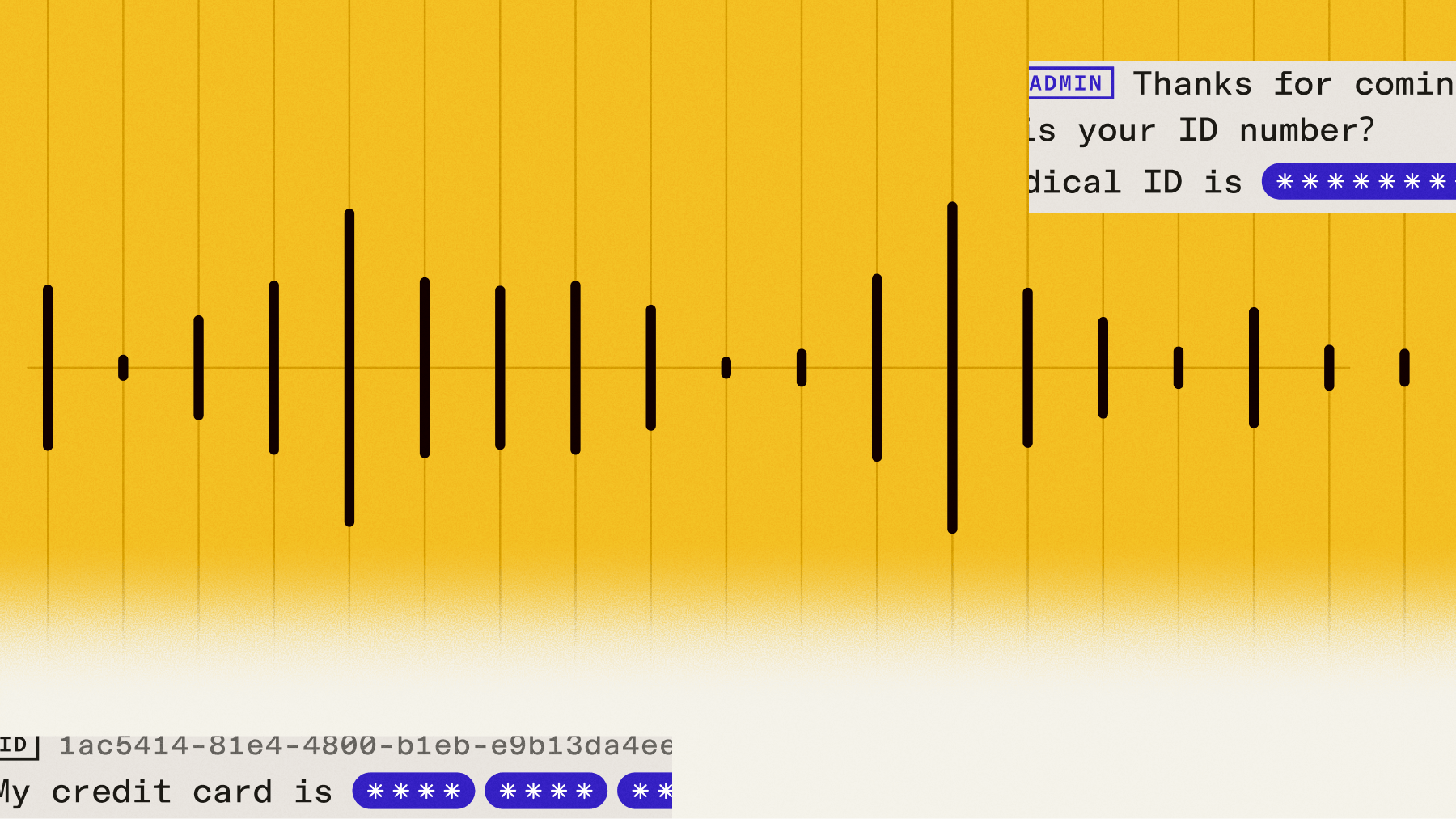

When background noise triggers a false VAD event, the STT model receives an audio segment that contains no actual speech. Modern STT models don't respond well to this—rather than returning silence, they often hallucinate words or phrases that aren't there. By reducing false VAD activations, noise cancellation cuts down the number of phantom audio segments the model has to process, which in turn reduces hallucinated output.

Speech recovery in extreme noise

In genuinely harsh acoustic environments—restaurant drive-throughs with wind, traffic, and machinery running simultaneously—speech can be nearly inaudible in the raw audio. Noise cancellation can recover utterances from noisy audio that would otherwise be completely lost, giving the STT model something to work with where it previously had nothing.

This is particularly valuable in environments where the alternative isn't "slightly worse transcription" but "no transcription at all."

The cons of noise cancellation

The accuracy paradox: noise cancellation can make transcription worse

This is the finding that surprises most people. Modern ASR models are trained on massive datasets that include real-world noisy audio. They've already learned how to handle noise. When you preprocess audio with noise cancellation before feeding it to these models, you're often duplicating work the model already does—but with less context and less accuracy.

A systematic study published in December 2025 reinforced this point. Researchers tested MetricGAN+ speech enhancement on four leading ASR systems (OpenAI Whisper, NVIDIA Parakeet, Google Gemini 2.0 Flash, and ParrotJet) using 500 medical speech recordings across 9 noise conditions. Original noisy audio achieved lower error rates than enhanced audio in all 40 tested configurations, and the degradation ranged from a 1.1% to a 46.6% absolute increase in semantic word error rate.

Even Krisp's own evaluation illustrates this tradeoff. In their BVC-VAD-STT configuration, where noise-cancelled audio was sent to Whisper V3 for transcription, WER increased by roughly 2x compared to using the original audio. Their recommended approach is BVC-VAD mode—noise cancellation improves VAD accuracy, but original audio is preserved for the STT model.

Added latency and cost

Noise cancellation models add 10–15ms of processing latency. Not catastrophic, but meaningful in streaming speech-to-text pipelines where every millisecond affects perceived responsiveness. If the NC model runs server-side rather than on-device, network round-trip time compounds this further.

Commercial NC solutions also add cost. SDK licenses from providers like Krisp are priced per audio-hour and can meaningfully increase your cost of goods sold, especially at scale. For teams processing millions of minutes per month, this is a real line item that needs to be justified by measurable improvements.
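To size that line item, a back-of-the-envelope calculation is enough. This sketch assumes flat per-audio-hour pricing for both the NC SDK and the rest of your pipeline; the rates in the usage lines are hypothetical placeholders, not quotes from Krisp or any other vendor.

```python
from typing import Tuple


def nc_cost_impact(
    minutes_per_month: float,
    nc_price_per_audio_hour: float,
    baseline_price_per_audio_hour: float,
) -> Tuple[float, float]:
    """Return (added monthly NC cost, increase over the baseline pipeline cost in %)."""
    audio_hours = minutes_per_month / 60
    added_cost = audio_hours * nc_price_per_audio_hour
    baseline_cost = audio_hours * baseline_price_per_audio_hour
    return added_cost, 100 * added_cost / baseline_cost


# Hypothetical placeholder rates: $0.01/hour for NC, $0.15/hour for everything else.
added, pct = nc_cost_impact(3_000_000, 0.01, 0.15)
print(f"+${added:,.0f} per month, +{pct:.1f}% over baseline")
```

At 3 million minutes a month (50,000 audio hours), even a small per-hour rate adds up. The percentage is the number to weigh against measured gains in false-positive VAD events or WER.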

Artifacts and "sound-alike" errors

Aggressive noise cancellation doesn't just remove noise—it can distort the speech signal itself. In production testing with drive-through audio, noise cancellation was recovering speech that was previously missed, but the transcripts started picking up "sound-alike" words instead of the correct terms. The NC model was altering the audio just enough that the STT model misrecognized words, trading silence for incorrect output.

This is a particularly insidious failure mode because it looks like the system is working—you're getting more transcribed words—until you realize those words are wrong.

Not a universal fix

Noise cancellation addresses one specific category of problems: persistent background noise and secondary speakers. It doesn't solve short utterance drops (an architectural challenge where models struggle with very brief speech segments), accented speech recognition gaps (a training data problem), or model hallucinations caused by prompt configuration issues.

Treating NC as a blanket solution for "audio quality problems" can lead to expensive investments that don't address the actual root cause.

Noise cancellation vs. VAD tuning: when to use each

One of the most common missteps teams make is reaching for noise cancellation when VAD tuning would've been more effective. These two approaches solve different problems, and understanding the distinction is key to choosing the right STT for voice agents.

Noise cancellation removes unwanted audio content from the signal. It's best suited for persistent, pervasive noise—the kind that's always there: HVAC hum, traffic, crowd noise, a TV playing in the background, secondary speakers in a shared space. When you're dealing with multiple speakers, speaker diarization is also worth considering as a complementary approach.

VAD tuning adjusts how sensitive your system is to speech detection. It's best suited for intermittent noise—a cough, a door slam, keyboard typing, a brief siren—that momentarily triggers a false speech detection event.

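Here's how they compare:

- Best suited for: noise cancellation targets persistent noise and secondary speakers; VAD tuning targets intermittent sounds like coughs, door slams, and keyboard typing.
- Latency: noise cancellation adds roughly 10–15ms (more if it runs server-side); VAD tuning adds none.
- Cost: commercial noise cancellation is typically licensed per audio-hour; VAD tuning is free.
- Risk to STT accuracy: noise-cancelled audio sent to the STT model can raise WER; VAD tuning leaves the STT input untouched.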

A practical decision framework

Start with VAD tuning. It's free, adds no latency, and doesn't risk degrading STT accuracy. Adjust two settings for your specific use case: lower your VAD threshold, and increase min_turn_silence, which controls how long the model waits after detecting silence before deciding the speaker is done. This alone may resolve your issues.
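The sketch below shows what these two settings control, given per-frame speech probabilities from a VAD. The function name, the 20ms frame size, and the convention that a frame counts as speech when its probability is at least `vad_threshold` are assumptions for illustration; check your provider's documentation for how its threshold and `min_turn_silence` are actually defined.

```python
from typing import List, Optional, Sequence, Tuple


def segment_turns(
    speech_probs: Sequence[float],
    vad_threshold: float,
    min_turn_silence_ms: int,
    frame_ms: int = 20,
) -> List[Tuple[int, int]]:
    """Group per-frame speech probabilities into (start_ms, end_ms) turns.

    Assumed convention: a frame is speech when its probability >= vad_threshold.
    A turn ends only after min_turn_silence_ms of consecutive non-speech frames;
    end_ms marks the end of the turn's last speech frame.
    """
    turns: List[Tuple[int, int]] = []
    start: Optional[int] = None        # first frame of the current turn
    last_speech: Optional[int] = None  # most recent speech frame
    for i, prob in enumerate(speech_probs):
        if prob >= vad_threshold:
            if start is None:
                start = i
            last_speech = i
        elif start is not None and last_speech is not None:
            # Silence so far = frames elapsed since the last speech frame.
            if (i - last_speech) * frame_ms >= min_turn_silence_ms:
                turns.append((start * frame_ms, (last_speech + 1) * frame_ms))
                start = None
    # Audio ended mid-turn: close the turn at its last speech frame.
    if start is not None and last_speech is not None:
        turns.append((start * frame_ms, (last_speech + 1) * frame_ms))
    return turns


probs = [0.9, 0.9, 0.0, 0.0, 0.9, 0.9, 0.0, 0.0, 0.0, 0.0]
print(segment_turns(probs, vad_threshold=0.5, min_turn_silence_ms=40))   # [(0, 40), (80, 120)]
print(segment_turns(probs, vad_threshold=0.5, min_turn_silence_ms=100))  # [(0, 120)]
```

With a 40ms minimum, the brief pause in the middle splits one utterance into two turns; raising it to 100ms keeps both bursts in a single turn, which is what you want when users pause mid-sentence.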

Add noise cancellation if VAD tuning isn't enough. If you're in a persistently noisy environment and still experiencing false speech detection or degraded turn-taking, introduce NC—but route it to VAD only. Keep the original audio flowing to your STT model.

Send NC audio to STT only as a last resort. If speech is literally inaudible without enhancement and you're getting no transcriptions at all, NC-to-STT may be necessary. But benchmark carefully: you may be trading fewer missed utterances for more misrecognized ones.
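The three steps above can be written down as a small decision helper, which is handy for documenting why a given deployment runs with or without NC. The enum names and boolean inputs are hypothetical; in practice each input should come from benchmarks on your own audio rather than intuition.

```python
from enum import Enum


class NCMode(Enum):
    VAD_TUNING_ONLY = "no noise cancellation"
    NC_TO_VAD = "noise-cancelled audio to VAD, original audio to STT"
    NC_TO_VAD_AND_STT = "noise-cancelled audio to VAD and STT"


def choose_nc_mode(
    vad_tuning_sufficient: bool,
    persistent_noise: bool,
    speech_inaudible_without_nc: bool,
) -> NCMode:
    # Step 1: if tuning the VAD fixed turn-taking, stop here.
    if vad_tuning_sufficient:
        return NCMode.VAD_TUNING_ONLY
    # Step 3 (last resort) is checked first because it overrides step 2:
    # nothing gets transcribed at all without enhancement.
    if speech_inaudible_without_nc:
        return NCMode.NC_TO_VAD_AND_STT
    # Step 2: persistent noise still breaks turn-taking, so clean the VAD input only.
    if persistent_noise:
        return NCMode.NC_TO_VAD
    # Intermittent noise only: NC is not the right tool, so keep tuning the VAD.
    return NCMode.VAD_TUNING_ONLY
```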

Practical recommendations

Don't apply noise cancellation by default. Evaluate whether your specific deployment environment and audio conditions actually warrant it. Many teams discover that their "noise problem" is actually a VAD sensitivity or prompt configuration issue.

Benchmark before and after. Measure WER, false-positive speech events, and end-to-end conversation quality. NC may improve one metric while degrading another. You need the full picture.
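For the WER part of that comparison, a word-level edit distance is enough to get started. This is a minimal sketch of standard WER (substitutions plus deletions plus insertions, divided by the number of reference words) with only lowercasing as normalization; production evaluations usually also normalize punctuation and numbers. The transcripts in the usage lines are invented to illustrate a sound-alike error, not measured results.

```python
from typing import Sequence


def word_edits(reference: str, hypothesis: str) -> int:
    """Minimum word substitutions + deletions + insertions turning reference into hypothesis."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    # prev[j] = edit distance between the reference words processed so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # reference word deleted
                curr[j - 1] + 1,         # extra word inserted
                prev[j - 1] + (r != h),  # substitution, or a free match
            )
        prev = curr
    return prev[-1]


def corpus_wer(references: Sequence[str], hypotheses: Sequence[str]) -> float:
    """Corpus-level WER: total word edits divided by total reference words."""
    if len(references) != len(hypotheses):
        raise ValueError("need one hypothesis per reference")
    total_words = sum(len(r.split()) for r in references)
    if total_words == 0:
        raise ValueError("references contain no words")
    total_edits = sum(word_edits(r, h) for r, h in zip(references, hypotheses))
    return total_edits / total_words


refs = ["two large fries please", "no onions"]
original_audio = ["two large fries please", "no onion"]  # invented STT output
nc_audio = ["two large flies peas", "no onions"]         # invented STT output
print(corpus_wer(refs, original_audio))  # 1 edit / 6 words
print(corpus_wer(refs, nc_audio))        # 2 edits / 6 words
```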

Use the split pipeline architecture. If you do add NC, route the noise-cancelled audio to VAD and turn-taking logic, but send the original audio to your STT model. This captures the conversation flow benefits without the accuracy penalty.

Tune your VAD first. A lower VAD threshold is free and often more effective than noise cancellation for intermittent noise. Test thresholds between 0.05 and 0.15 on your noisiest audio samples.
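A threshold sweep only needs per-frame VAD probabilities and hand-labelled speech/non-speech frames from those noisy samples. This sketch counts two things per candidate threshold: false-positive speech onsets (the VAD fires when nobody is talking) and missed speech frames. It assumes a frame is detected as speech when its probability is at least the threshold; if your VAD's threshold is defined differently, adjust the comparison to match. The function names are hypothetical.

```python
from typing import Dict, Sequence, Tuple


def score_threshold(
    probs: Sequence[float], labels: Sequence[bool], threshold: float
) -> Tuple[int, int]:
    """Return (false-positive speech onsets, missed speech frames) for one threshold."""
    if len(probs) != len(labels):
        raise ValueError("need one label per frame")
    false_onsets = 0
    missed_frames = 0
    prev_detected = False
    for prob, is_speech in zip(probs, labels):
        detected = prob >= threshold  # assumed convention, see lead-in
        if detected and not prev_detected and not is_speech:
            false_onsets += 1   # VAD started a "speech" event with nobody talking
        if is_speech and not detected:
            missed_frames += 1  # real speech the VAD did not catch
        prev_detected = detected
    return false_onsets, missed_frames


def sweep_thresholds(
    probs: Sequence[float],
    labels: Sequence[bool],
    thresholds: Sequence[float] = (0.05, 0.10, 0.15),
) -> Dict[float, Tuple[int, int]]:
    """Score every candidate threshold on the same labelled frames."""
    return {t: score_threshold(probs, labels, t) for t in thresholds}
```

Pick the threshold that gives the fewest false onsets without a meaningful rise in missed speech, then re-check it against end-to-end conversation quality.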

Match the NC model to the environment. If your NC solution offers configuration options (near-field vs. far-field, suppression strength), tune them for your deployment. Far-field settings work better for drive-throughs and open spaces; near-field for headset-based calls.

Watch for artifact errors. If you start seeing an increase in "sound-alike" misrecognitions after enabling NC, the enhancement may be distorting speech. Dial back the suppression strength or switch to VAD-only mode.

Stay current with model improvements. ASR models are rapidly improving their native noise robustness with each generation. Models like Universal-3 Pro Streaming are closing the gap on scenarios that previously required external preprocessing—what needed noise cancellation six months ago may not need it today.

The bottom line

Noise cancellation is a powerful tool in the Voice AI toolbox, but it's a scalpel, not a sledgehammer. The research is clear: for transcription accuracy alone, modern ASR models perform better on original audio than on noise-enhanced audio. But voice agents aren't only about transcription—they're about conversation. And for conversation flow, smooth turn-taking, and fewer false interruptions, noise cancellation can be transformative.

Here's the insight that often gets lost in the NC debate: the teams shipping the best voice agents aren't choosing between noise cancellation and a great STT model. They're investing in an STT model with strong native noise robustness first, then layering NC surgically—VAD-only, environment-matched, benchmarked—on top. The preprocessing should complement the model, not compensate for it. If you're spending more engineering time tuning your noise cancellation than evaluating your STT provider, you've got the priority order backwards.

Frequently asked questions

Does noise cancellation improve speech-to-text accuracy?

No—in most cases, it actually makes it worse. A December 2025 study found that original noisy audio produced lower error rates than noise-enhanced audio across all 40 tested configurations on leading ASR models. Modern speech-to-text systems like AssemblyAI's Universal-3 Pro Streaming are trained on diverse, real-world audio and have already learned to handle noise natively. Noise cancellation is more effective when applied to VAD and turn-taking logic rather than to the STT input itself.

How does noise cancellation affect voice agent turn-taking?

This is where noise cancellation shines. Background noise frequently triggers false "speech started" events, causing the voice agent to interrupt itself or misjudge when a user is done talking. By cleaning the audio before it reaches the VAD, noise cancellation reduces false-positive speech detection (by 3.5x on average in Krisp's testing), leading to smoother, more natural conversations. The key is routing cleaned audio to VAD only, not to the STT model.

What's the difference between noise cancellation and VAD tuning for voice agents?

They solve different problems. Noise cancellation removes persistent background audio—HVAC hum, traffic, crowd noise—from the signal itself. VAD tuning adjusts how sensitive your system is to detecting speech, which is better for intermittent sounds like coughs, door slams, or keyboard typing. VAD tuning is free, adds no latency, and carries no risk to STT accuracy, so it should always be your first step before considering noise cancellation.

Should I preprocess audio before sending it to a speech-to-text API?

Generally, no. Modern speech-to-text APIs are built to handle noisy, real-world audio without external preprocessing. AssemblyAI's streaming speech-to-text, powered by Universal-3 Pro Streaming, is trained on diverse acoustic conditions and performs best on unprocessed audio. If you're experiencing issues, start by tuning your VAD thresholds and checking your pipeline architecture before adding noise cancellation to the audio sent to STT.

How does AssemblyAI handle noisy audio without preprocessing?

AssemblyAI's Universal-3 Pro Streaming model is trained on large-scale, diverse datasets that include real-world noise conditions—background chatter, traffic, low-quality microphones, and more. This means the model has learned to be noise-robust by default, without requiring external preprocessing. Combined with intelligent endpointing and streaming speech-to-text designed for voice agent pipelines, it handles challenging acoustic environments out of the box while delivering 300ms immutable transcripts.
