How do I transcribe audio in languages like Spanish, French, or German?

Multilingual transcription for Spanish, French, and German audio with automatic language detection, speaker labels, and practical setup tips for global teams.


Global teams regularly work with audio and video content containing multiple languages, creating challenges when you need accurate written records. Whether you're documenting international business meetings where participants speak Spanish, French, and German, or creating accessible content from multilingual media, converting this audio into searchable text requires specialized technology that can handle language switching and maintain speaker identification across different languages.

This guide explains how multilingual transcription works, from automatic language detection to choosing between AI and human services. You'll learn about the key technologies that make it possible, practical implementation strategies, and best practices for getting accurate results when working with Spanish, French, German, and other languages in your audio content.

What is multilingual transcription?

Multilingual transcription is the process of converting spoken audio that contains multiple languages into written text. When you have a recording where people speak Spanish, French, German, or any combination of languages, the system writes down exactly what was said in each original language.

Unlike translation, multilingual transcription doesn't change languages—it preserves them. If someone speaks French in your recording, you'll get French text back, not English.

Automatic language detection

Your transcription system automatically figures out which language is being spoken without you having to tell it. This means you can upload a file where someone starts speaking Spanish, switches to English mid-sentence, then continues in German, and a system with code-switching support can follow each of those switches.

Here's how it works in practice:

- Pattern recognition: The AI listens for sounds, rhythms, and word patterns unique to each language

- Real-time switching: When the language changes, the system adjusts immediately

- No manual setup: You don't need to specify which languages are in your audio beforehand

Speaker diarization across languages

Through speaker diarization, the system can track who's speaking even when the same person switches languages. This means if Maria speaks both Spanish and English during a meeting, the transcript will correctly show that both language segments came from Maria, not two different speakers.

This works because a speaker's voice has consistent characteristics, such as pitch and timbre, that largely stay the same when they switch languages.
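To make the Maria example concrete, here is a minimal Python sketch that takes diarized speaker turns and reports which languages each speaker used. It assumes each turn already carries a speaker label and a language tag; the `speaker`, `language`, and `text` field names are hypothetical, not a specific API schema.

```python
from collections import defaultdict

# Hypothetical diarized turns, each tagged with the language it was spoken in.
utterances = [
    {"speaker": "A", "language": "es", "text": "Buenos días a todos."},
    {"speaker": "B", "language": "en", "text": "Morning, Maria."},
    {"speaker": "A", "language": "en", "text": "Let's start with the budget."},
    {"speaker": "C", "language": "de", "text": "Ich habe die Zahlen."},
]


def languages_by_speaker(records):
    """Map each speaker label to the set of languages that speaker used."""
    result = defaultdict(set)
    for record in records:
        result[record["speaker"]].add(record["language"])
    return dict(result)


for speaker, langs in sorted(languages_by_speaker(utterances).items()):
    print(f"Speaker {speaker}: {', '.join(sorted(langs))}")
```

Speaker A shows up once with both Spanish and English, which is what correct cross-language diarization should produce; two separate labels for the same person would indicate a diarization error.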

Key technologies enabling multilingual transcription

Several technologies work together to make multilingual transcription possible. Understanding these helps you choose the right solution for your needs.

Speech-to-text API capabilities

A speech-to-text API is the core technology that converts your audio into written words. This means you send your audio file to the service, and it sends back a text document with everything that was spoken.

The best APIs handle multiple languages and work with common audio formats like MP3, WAV, and M4A. They can process your audio in real time during live meetings or handle recorded files you upload later.
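As a sketch of what "sending your audio" looks like, the Python below prepares (but does not send) a request to AssemblyAI's `/v2/transcript` endpoint using only the standard library. It assumes the audio is already at a publicly reachable URL and that you have an API key; the `audio_url`, `language_detection`, and `speaker_labels` fields and the `authorization` header reflect our understanding of the v2 REST API, so confirm them against the current API reference.

```python
import json
import urllib.request

API_URL = "https://api.assemblyai.com/v2/transcript"


def build_transcript_request(audio_url: str, api_key: str) -> urllib.request.Request:
    """Prepare, without sending, a transcription request for a hosted audio file."""
    body = {
        "audio_url": audio_url,      # MP3, WAV, M4A, ... at a reachable URL
        "language_detection": True,  # let the service identify the language
        "speaker_labels": True,      # request speaker diarization
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"authorization": api_key, "content-type": "application/json"},
        method="POST",
    )


request = build_transcript_request("https://example.com/meeting.mp3", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
# To actually submit it: urllib.request.urlopen(request)
```

The response to a submitted request includes a transcript `id`, which you then poll at `/v2/transcript/{id}` until the transcript is complete.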

Language detection functionality

Language detection is not a separate API product or endpoint. It is part of the transcription request and is enabled with the `language_detection=True` parameter on the main `/v2/transcript` endpoint. It automatically identifies which language is being spoken in your audio, so you don't have to manually tag sections of your recording as "Spanish," "French," or "German"; the system figures it out.

Because detection runs as part of transcription, there is no separate pre-processing step. How well a given model follows speakers who switch languages mid-conversation varies by model and language pair, so test with representative recordings if your conversations involve frequent code-switching.
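When a transcription request with `language_detection=True` completes, the detected language comes back in the transcript JSON. This sketch assumes the `language_code` and `language_confidence` response fields (verify the names in the API reference) and flags low-confidence detections for a human to check; the sample response is trimmed and made up, and the 0.8 threshold and helper name are hypothetical.

```python
# Trimmed, illustrative transcript response; real responses have many more fields.
sample_response = {
    "id": "example-id",
    "status": "completed",
    "language_code": "es",
    "language_confidence": 0.93,
    "text": "Buenos días a todos...",
}

MIN_CONFIDENCE = 0.8  # hypothetical review threshold; tune it on your own data


def detected_language(response: dict, min_confidence: float = MIN_CONFIDENCE):
    """Return (language_code, needs_review) for a completed transcript."""
    if response.get("status") != "completed":
        raise ValueError(f"transcript not ready: {response.get('status')}")
    code = response.get("language_code")
    confidence = response.get("language_confidence") or 0.0
    return code, confidence < min_confidence


print(detected_language(sample_response))
```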

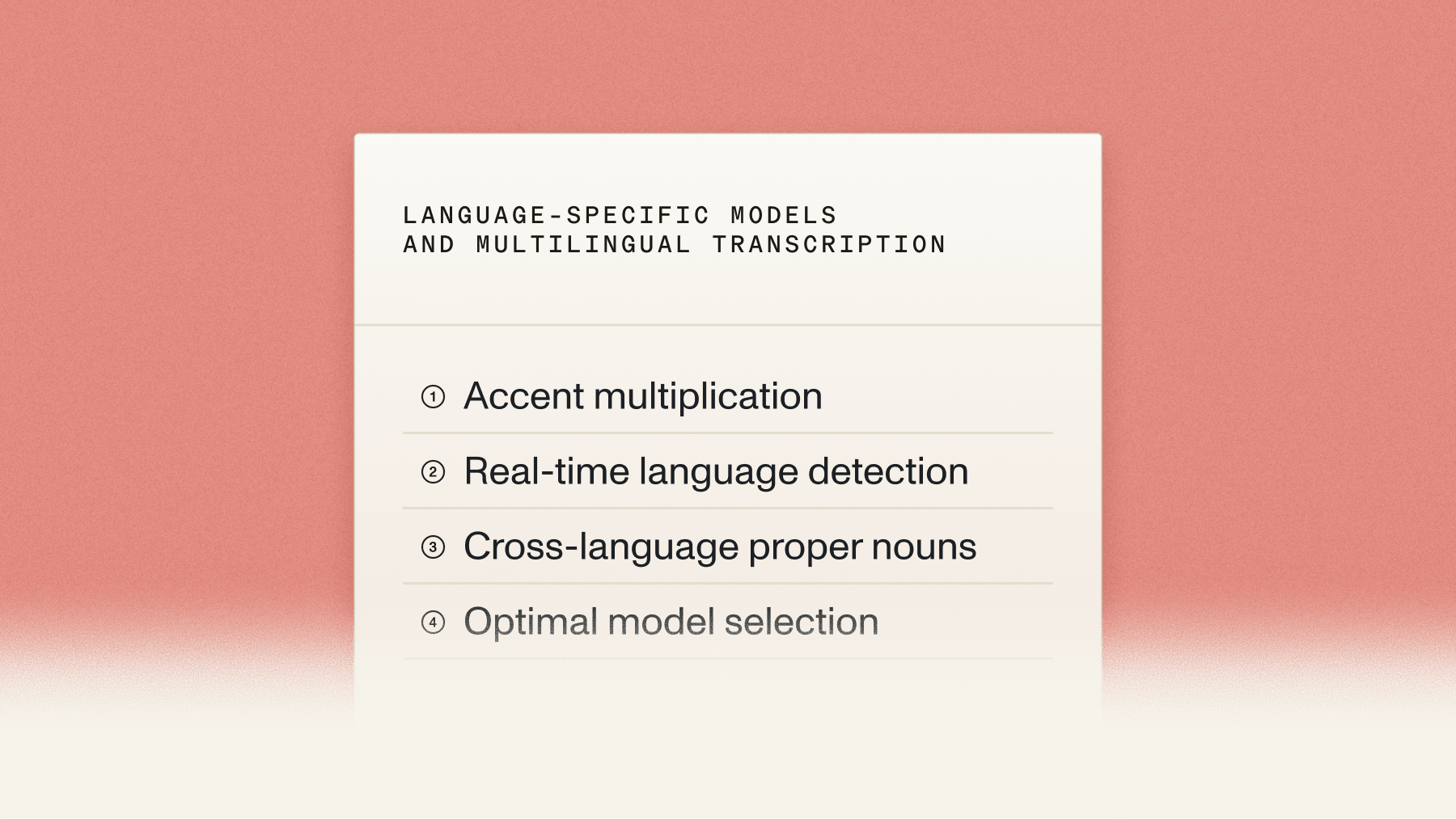

Audio to text for Spanish and other languages

AssemblyAI uses multilingual models rather than a separate user-selected model per language. `Universal-2` supports 99+ languages, and `Universal-3 Pro` is optimized for six languages, including Spanish, French, and German, with built-in support for regional dialects.

Language-specific features include:

- Regional accents: Mexican vs. European Spanish pronunciation differences

- Cultural expressions: Idioms and slang specific to each region

- Grammar patterns: How sentences are structured in each language

Common use cases for multilingual transcription

You'll find multilingual transcription useful in several practical situations where language barriers create communication challenges.

International business meetings

Global teams use multilingual transcription to document meetings where participants speak different languages. When your Tokyo team speaks Japanese, your Berlin engineers contribute in German, and your New York executives respond in English, the transcript captures everything in the original languages.

This creates searchable meeting records where anyone can find specific discussions, regardless of which language was used. Team members can also review the segments spoken in their own language directly, without waiting for a translation.
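Searching across languages usually needs light normalization so that accented and unaccented spellings match ("reunión" vs. "reunion", "Größe" vs. "grosse"). This Python sketch over hypothetical segment records folds case and strips diacritics with the standard `unicodedata` module before a substring match; a production system would more likely use a search engine with language-aware analyzers.

```python
import unicodedata


def fold(text: str) -> str:
    """Casefold and strip accents so 'Reunión' and 'reunion' compare equal."""
    decomposed = unicodedata.normalize("NFKD", text.casefold())
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


# Hypothetical meeting segments, each kept in its original language.
segments = [
    {"speaker": "A", "start_ms": 0, "text": "La reunión empieza a las diez."},
    {"speaker": "B", "start_ms": 4200, "text": "Die Größe des Budgets ist unklar."},
    {"speaker": "C", "start_ms": 9100, "text": "Let's move the meeting to Friday."},
]


def search(segments, query):
    """Return segments whose folded text contains the folded query."""
    needle = fold(query)
    return [s for s in segments if needle in fold(s["text"])]


for hit in search(segments, "reunion"):
    print(hit["start_ms"], hit["speaker"], hit["text"])
```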

Spanish audio transcription for media content

Content creators transcribe Spanish audio to expand their reach and improve accessibility. If you have a Spanish podcast, transcription helps you create subtitles for video versions, write blog posts from episode content, and make your material accessible to deaf and hard-of-hearing audiences.

Media applications include:

- Subtitle creation: Automatic captions for YouTube or social media videos

- SEO optimization: Searchable text content for better discoverability

- Content repurposing: Converting podcast episodes into written articles
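For subtitle creation, timestamped segments map directly onto the SRT caption format. AssemblyAI also offers SRT and VTT exports for completed transcripts, so a sketch like this one is mainly useful when you post-process or re-split segments yourself. It assumes segments with `start` and `end` in milliseconds and a `text` field; that shape is hypothetical.

```python
def ms_to_srt_time(ms: int) -> str:
    """Format milliseconds as an SRT timestamp, HH:MM:SS,mmm."""
    hours, rem = divmod(ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    seconds, millis = divmod(rem, 1000)
    return f"{hours:02}:{minutes:02}:{seconds:02},{millis:03}"


def to_srt(segments) -> str:
    """Render numbered SRT cues, separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{ms_to_srt_time(seg['start'])} --> {ms_to_srt_time(seg['end'])}\n"
            f"{seg['text']}\n"
        )
    return "\n".join(cues)


segments = [
    {"start": 0, "end": 2500, "text": "Bienvenidos al episodio de hoy."},
    {"start": 2500, "end": 6120, "text": "Hoy hablamos de transcripción."},
]
print(to_srt(segments))
```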

Foreign language transcription in customer service

Customer service teams transcribe calls in multiple languages for quality monitoring and training purposes. When customers call speaking French, the transcribed conversation helps supervisors review service quality and create training materials, even if they don't speak French themselves.

This enables consistent service standards across all languages your business supports.

Choosing between AI and human transcription services

Your choice between AI and human transcription depends on your accuracy needs, timeline, and budget constraints.

When to use AI transcription

AI transcription works best when you need fast results and can accept some minor errors. Modern AI models handle clear audio very well and process hours of content in minutes.

Choose AI transcription for:

- High volume processing: When you have many hours of audio to transcribe regularly

- Quick turnaround: Live events or urgent projects needing immediate results

- Clear audio quality: Recordings with minimal background noise and distinct speakers

- Budget-conscious projects: AI costs significantly less than human transcription

When human transcription is necessary

Human transcribers excel with complex content that requires the highest possible accuracy. They understand context, research unfamiliar terms, and make judgment calls when audio quality is poor or speakers have heavy accents.

You need human transcription for:

- Legal proceedings: Court records, depositions, or contract discussions

- Medical documentation: Patient consultations or clinical notes

- Poor audio quality: Recordings with background noise or overlapping speakers

- Technical content: Specialized terminology or industry-specific language

Hybrid approaches

Many organizations combine both approaches for optimal results. AI provides the initial transcript quickly and affordably, then human editors review and correct the most important sections.

This balances speed, cost, and accuracy while ensuring quality where it matters most.
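One way to decide which sections get human attention is to route low-confidence words to an editor. AssemblyAI's word-level output includes a confidence score per word; the sketch below assumes word records with `text`, `start`/`end` in milliseconds, and `confidence` between 0 and 1, and the threshold and padding values are illustrative, not recommendations.

```python
# Hypothetical word-level output (field names assumed, values made up).
words = [
    {"text": "El", "start": 0, "end": 180, "confidence": 0.99},
    {"text": "contrato", "start": 180, "end": 700, "confidence": 0.97},
    {"text": "vence", "start": 700, "end": 1050, "confidence": 0.62},
    {"text": "el", "start": 1050, "end": 1150, "confidence": 0.95},
    {"text": "viernes", "start": 1150, "end": 1600, "confidence": 0.58},
]


def review_spans(words, threshold=0.8, pad_ms=500):
    """Turn low-confidence words into padded, merged time ranges for an editor."""
    spans = []
    for word in words:
        if word["confidence"] >= threshold:
            continue
        start = max(0, word["start"] - pad_ms)
        end = word["end"] + pad_ms
        if spans and start <= spans[-1][1]:
            # Overlaps the previous range: extend it instead of adding a new one.
            spans[-1] = (spans[-1][0], max(spans[-1][1], end))
        else:
            spans.append((start, end))
    return spans


print(review_spans(words))
```

Padding each flagged word gives the editor some surrounding context, and merging overlapping ranges keeps the review queue short.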

Best practices for multilingual transcription

Getting high-quality results requires proper preparation and the right approach for your specific content.

Audio quality optimization

Clear audio is the foundation of accurate transcription across all languages. Background noise, echo, and overlapping speakers significantly reduce accuracy regardless of which language is being spoken.

Recording best practices:

- Use quality microphones: Dedicated mics or headsets outperform laptop built-ins

- Control your environment: Choose quiet spaces and minimize background noise

- Establish speaking protocols: Only one person should talk at a time

- Test audio levels: Ensure consistent volume without distortion
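For the "test audio levels" step, a short script can flag recordings that are clipping or too quiet before you send them for transcription. This sketch uses only Python's standard library and assumes a 16-bit PCM WAV file; treating full-scale samples as clipped, and any dBFS targets you choose, are rules of thumb rather than requirements of any transcription service.

```python
import array
import io
import math
import sys
import wave

FULL_SCALE = 32768  # magnitude of the most negative 16-bit sample


def to_dbfs(level: float) -> float:
    """Convert a sample magnitude to decibels relative to full scale."""
    return 20 * math.log10(level / FULL_SCALE) if level > 0 else float("-inf")


def audio_levels(wav_file) -> dict:
    """Peak and RMS level (dBFS) plus the share of clipped samples in a 16-bit PCM WAV."""
    with wave.open(wav_file, "rb") as wav:
        if wav.getsampwidth() != 2:
            raise ValueError("this sketch only handles 16-bit PCM")
        samples = array.array("h", wav.readframes(wav.getnframes()))
    if sys.byteorder == "big":
        samples.byteswap()  # WAV sample data is little-endian
    if not samples:
        raise ValueError("no audio samples")
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    clipped = sum(1 for s in samples if s >= 32767 or s <= -32768)
    return {
        "peak_dbfs": to_dbfs(peak),
        "rms_dbfs": to_dbfs(rms),
        "clipped_ratio": clipped / len(samples),
    }


# Demo: a one-second 440 Hz tone at half of full scale, written to memory.
tone = array.array(
    "h", (int(16384 * math.sin(2 * math.pi * 440 * n / 16000)) for n in range(16000))
)
if sys.byteorder == "big":
    tone.byteswap()
buffer = io.BytesIO()
with wave.open(buffer, "wb") as out:
    out.setnchannels(1)
    out.setsampwidth(2)
    out.setframerate(16000)
    out.writeframes(tone.tobytes())
buffer.seek(0)

levels = audio_levels(buffer)
print({name: round(value, 2) for name, value in levels.items()})
```

A peak near 0 dBFS or a nonzero clipped ratio suggests distortion, while a very low RMS level suggests the speaker was too far from the microphone.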

File format considerations

Different audio formats affect transcription quality and processing speed. Lossless formats like WAV and FLAC preserve all of the recorded audio detail, potentially improving accuracy. Lossy formats like MP3 are more practical for large files and online streaming.

Format recommendations:

- Maximum quality: WAV or FLAC for critical legal or medical content

- Balanced approach: High-bitrate MP3 for most business applications

- Streaming applications: AAC or Opus for real-time transcription
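The size trade-off behind these recommendations is simple arithmetic: uncompressed PCM takes sample rate × bytes per sample × channels bytes per second, while a constant-bitrate codec takes bitrate ÷ 8 bytes per second. The sample rates and bitrates below are illustrative choices; FLAC is left out because its size depends on the audio content.

```python
SECONDS_PER_HOUR = 3600


def pcm_bytes_per_hour(sample_rate_hz: int, bit_depth: int, channels: int) -> int:
    """Size of one hour of uncompressed PCM (as stored in a WAV file)."""
    return sample_rate_hz * (bit_depth // 8) * channels * SECONDS_PER_HOUR


def cbr_bytes_per_hour(bitrate_kbps: int) -> int:
    """Size of one hour of constant-bitrate compressed audio."""
    return bitrate_kbps * 1000 // 8 * SECONDS_PER_HOUR


formats = {
    "WAV, 44.1 kHz stereo, 16-bit": pcm_bytes_per_hour(44_100, 16, 2),
    "WAV, 16 kHz mono, 16-bit": pcm_bytes_per_hour(16_000, 16, 1),
    "MP3, 128 kbps": cbr_bytes_per_hour(128),
    "Opus, 32 kbps": cbr_bytes_per_hour(32),
}
for name, size in formats.items():
    print(f"{name}: {size / 1_000_000:.1f} MB per hour")
```

An hour of CD-quality stereo WAV comes to about 635 MB, compared with about 57.6 MB for a 128 kbps MP3, which is why compressed formats are usually the practical choice for large archives.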

Language model selection

AssemblyAI's recommended approach is to use its multilingual models, such as `Universal-2` or `Universal-3 Pro`. When you don't know the language in advance, enable `language_detection=True` rather than trying to select a separate single-language model. `Universal-3 Pro` is designed to provide strong accuracy for Spanish, French, and German within one model.

Consider these factors when selecting models:

- Regional variants: How well the model handles European vs. Latin American Spanish

- Industry vocabulary: Medical, legal, or technical terminology

- Code-switching support: Frequent language mixing within conversations
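A simple decision rule follows from these factors: if you know a file contains exactly one language, pin it; otherwise, let the service detect it. The sketch assumes AssemblyAI's `language_code` and `language_detection` request parameters (confirm accepted values in the API reference). The rule itself is a simplification, and the model selection parameter is deliberately left out because its accepted values change between releases.

```python
from typing import Optional, Sequence


def transcription_options(known_languages: Optional[Sequence[str]] = None) -> dict:
    """Pick request options based on what you know about the audio (simplified rule)."""
    options = {"speaker_labels": True}
    if known_languages and len(known_languages) == 1:
        # One known language: pin it instead of asking the service to detect it.
        options["language_code"] = known_languages[0]
    else:
        # Unknown or several languages: let the service detect the language.
        options["language_detection"] = True
    return options


print(transcription_options(["fr"]))        # a French-only support queue
print(transcription_options(["es", "en"]))  # a bilingual sales call
print(transcription_options())              # nothing known in advance
```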

Implementation considerations

Successfully implementing multilingual transcription requires attention to technical setup, security requirements, and workflow integration.

Security and compliance

Multilingual content often involves sensitive business information or personal data across international borders. Ensure your transcription solution meets security requirements for all regions where you operate.

Key security considerations:

- Data encryption: Protection during transmission and storage

- Access controls: User permissions for viewing and editing transcripts

- Compliance standards: GDPR for personal data of people in the EU, HIPAA for protected health information in the US

- Data residency: Where your transcripts are stored geographically

Integration with existing workflows

Your transcription solution should fit into your current processes. AssemblyAI's official integrations include partners such as Recall.ai and telephony-related tools; Microsoft Teams and Slack are not presented as official direct first-party integrations, so plan how you would connect those tools yourself.

Consider how you'll store, share, and search transcripts within your organization's existing systems.
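As one illustration of the storage question, this Python sketch uses the standard `sqlite3` module to keep each transcript alongside its detected language code, so searches can be filtered by language. The table layout, helper names, and sample rows are hypothetical. SQLite's `LIKE` is case-insensitive only for ASCII letters and this sketch doesn't escape `%` or `_` in queries, so a real deployment would likely use a proper full-text index instead.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # use a file path in practice
conn.execute(
    """CREATE TABLE transcripts (
        id TEXT PRIMARY KEY,
        title TEXT NOT NULL,
        language_code TEXT,
        text TEXT NOT NULL
    )"""
)


def save_transcript(conn, transcript_id, title, language_code, text):
    """Insert or update one transcript; the context manager commits on success."""
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO transcripts VALUES (?, ?, ?, ?)",
            (transcript_id, title, language_code, text),
        )


def find(conn, phrase, language_code=None):
    """Return (id, title) pairs whose text contains the phrase, optionally by language."""
    sql = "SELECT id, title FROM transcripts WHERE text LIKE ?"
    params = [f"%{phrase}%"]
    if language_code:
        sql += " AND language_code = ?"
        params.append(language_code)
    return conn.execute(sql + " ORDER BY id", params).fetchall()


save_transcript(conn, "t1", "Weekly sync (Madrid)", "es", "Revisamos el presupuesto del trimestre.")
save_transcript(conn, "t2", "Lyon support call", "fr", "Le client demande un remboursement.")
save_transcript(conn, "t3", "Berlin planning", "de", "Das Budget für das Quartal steht.")

print(find(conn, "presupuesto"))
print(find(conn, "Quartal", "de"))
```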

Cost optimization strategies

Multilingual transcription costs vary based on volume, languages, and accuracy requirements. Optimize spending by using AI for initial transcription and human review only for critical sections.

Budget optimization tips:

- Estimate monthly volume: Calculate your average hours of audio across languages

- Prioritize accuracy needs: Use premium services only where essential

- Consider subscription plans: Volume discounts for predictable usage patterns

- Monitor usage: Identify opportunities to optimize language model selection
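The first two tips reduce to simple arithmetic: total hours × AI rate, plus total hours × review share × human rate. The rates below are placeholders chosen only to illustrate the calculation, not AssemblyAI's or any other vendor's pricing, and the monthly volumes are made up.

```python
# Placeholder rates in US dollars per audio hour. These are NOT real prices.
AI_RATE_PER_HOUR = 0.40
HUMAN_REVIEW_RATE_PER_HOUR = 90.00

# Estimated monthly audio volume per language, in hours (illustrative).
monthly_hours = {"es": 120, "fr": 45, "de": 30}


def monthly_cost(hours_by_language: dict, review_share: float) -> float:
    """AI transcription for all audio plus human review for a share of it."""
    if not 0.0 <= review_share <= 1.0:
        raise ValueError("review_share must be between 0 and 1")
    total_hours = sum(hours_by_language.values())
    ai_cost = total_hours * AI_RATE_PER_HOUR
    review_cost = total_hours * review_share * HUMAN_REVIEW_RATE_PER_HOUR
    return round(ai_cost + review_cost, 2)


for share in (0.0, 0.1, 1.0):
    print(f"review {share:.0%} of audio: ${monthly_cost(monthly_hours, share):,.2f}")
```

With these placeholder numbers, reviewing 10% of 195 hours costs 1,833.00 versus 17,628.00 for reviewing everything, which is the hybrid approach's main cost lever.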

Final words

Multilingual transcription transforms audio and video content containing multiple languages into accessible, searchable text documents. The combination of automatic language detection, specialized language models, and flexible processing options makes it possible to handle complex international communications efficiently. Whether you're documenting global business meetings, creating accessible media content, or maintaining customer service records across languages, modern transcription technology breaks down language barriers while preserving the original meaning and context.

AssemblyAI's Voice AI models deliver industry-leading accuracy for multilingual speech-to-text applications. The platform's automatic language detection capabilities and specialized language models ensure that your Spanish, French, German, and other language content is transcribed with the precision and reliability that global businesses require.

Frequently asked questions

Can AI transcription systems automatically detect when speakers switch between Spanish and English mid-conversation?

Yes, many modern AI transcription systems can detect language switches within the same conversation, and some can follow switches within individual sentences. Support varies by model and language pair, so test with your own recordings if speakers switch languages frequently.

What's the accuracy difference between transcribing Spanish audio versus English audio?

Spanish transcription accuracy is generally comparable to English when the model has been trained on large amounts of Spanish speech. However, accuracy can vary depending on regional accents, audio quality, and whether speakers are mixing languages within sentences.

Should I use separate transcription requests for each language in my multilingual audio file?

No, you should upload the entire multilingual audio file as a single request. Modern transcription systems are designed to handle multiple languages within one file automatically, and separating languages manually often reduces accuracy and creates workflow complications.

How does transcription handle Spanish regional dialects like Mexican Spanish versus Argentinian Spanish?

Advanced transcription systems use models trained on multiple Spanish dialects and can adapt to regional pronunciation differences, vocabulary variations, and accent patterns. However, accuracy may vary slightly between dialects, with more common variants typically performing better.

Can I get timestamps for different languages within the same transcription?

Yes. Transcription services typically return word- or segment-level timestamps, so when the output also labels the language of each segment, you can see exactly when each language segment begins and ends within your audio file. This allows you to locate specific language portions and understand the conversation flow across different languages.
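If your output has word-level timestamps plus a language tag per word, collapsing consecutive same-language words gives you language segments with start and end times. Whether a service returns per-word or per-segment language labels varies, so the `lang` field and the rest of the word shape here are assumptions.

```python
from itertools import groupby

# Hypothetical word-level output with millisecond timestamps and a language tag.
words = [
    {"text": "Hola", "start": 0, "end": 400, "lang": "es"},
    {"text": "a", "start": 400, "end": 480, "lang": "es"},
    {"text": "todos", "start": 480, "end": 900, "lang": "es"},
    {"text": "let's", "start": 1000, "end": 1250, "lang": "en"},
    {"text": "begin", "start": 1250, "end": 1600, "lang": "en"},
]


def language_segments(words):
    """Merge runs of consecutive same-language words into timed segments."""
    segments = []
    for lang, group in groupby(words, key=lambda w: w["lang"]):
        run = list(group)
        segments.append({
            "lang": lang,
            "start": run[0]["start"],
            "end": run[-1]["end"],
            "text": " ".join(w["text"] for w in run),
        })
    return segments


for seg in language_segments(words):
    print(seg["lang"], seg["start"], seg["end"], seg["text"])
```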

