Are there language-specific models for better accuracy?

Speech-to-text accuracy depends on audio quality, accents, models, and setup. Learn how WER works and how to improve transcription results for real teams today.

Speech-to-text accuracy determines whether your Voice AI application succeeds or frustrates users. Many developers focus solely on Word Error Rate percentages from marketing materials, but real-world performance depends on audio quality, speaker characteristics, and how you configure your system. Understanding these factors helps you set realistic expectations and optimize for actual use cases.

This guide explains how speech recognition accuracy gets measured, what performance to expect in different scenarios, and proven methods to improve your results. You'll learn why benchmark claims don't match production performance, which factors matter most for your specific application, and practical steps that deliver measurable improvements without rebuilding your entire system.

How speech-to-text accuracy is measured

Speech-to-text accuracy is how well an AI model converts spoken words into written text. This means comparing what the AI wrote against what a human would write from the same audio. The industry measures this using Word Error Rate (WER), which counts the percentage of words that are wrong, missing, or added incorrectly.

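Lower WER means better accuracy. A system with 5% WER gets roughly 95 out of every 100 reference words right. The "100 minus WER" shortcut is only an approximation, though: because inserted words count as errors, WER can exceed 100% on very poor transcripts.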

WER is no longer the only metric that matters, though. Many transcripts now go directly into AI systems instead of being read by humans, which changes what "accuracy" really means.

Word Error Rate (WER) calculation

WER calculation compares your AI transcript against a perfect human transcript, usually called the reference. You count three types of mistakes: wrong words (substitutions, S), extra words (insertions, I), and missing words (deletions, D). Then you divide total errors by the total number of words in the reference (N): WER = (S + I + D) / N.

Here's how it works:

- Perfect transcript: "The quick brown fox jumps over the lazy dog" (9 words)

- AI transcript: "A quick brown fox jumped over the lazy dog" (9 words)

- Errors: 2 substitutions ("The" becomes "A", "jumps" becomes "jumped")

- WER: (2 errors ÷ 9 words) × 100 = 22.2%
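The calculation above can be sketched in a few lines of Python. This is a minimal illustration, not a production scorer: the function name `word_error_rate` is our own, and it assumes transcripts are compared after lowercasing and splitting on whitespace, with no punctuation handling. It uses word-level edit distance to find the smallest number of substitutions, insertions, and deletions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER as a fraction: minimum word edits / reference word count."""
    # Assumption: lowercase and split on whitespace; real scorers usually
    # apply more normalization (punctuation, numerals, and so on).
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "The quick brown fox jumps over the lazy dog"
hypothesis = "A quick brown fox jumped over the lazy dog"
print(f"{word_error_rate(reference, hypothesis):.1%}")  # prints 22.2%
```

Because the denominator is the reference length, a hypothesis with many extra words can score above 100%.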

Beyond WER: Alternative accuracy metrics

You might need different metrics depending on how you use your transcripts. Semantic WER measures whether the meaning stays the same even if specific words change. For example, "cannot" and "can't" mean the same thing, but the difference counts as an error in regular WER.

This matters when your transcripts feed into AI models that understand meaning rather than exact words.

- Confidence scoring: Shows how certain the AI model is about each word it transcribes

- Character Error Rate: Measures accuracy by individual letters instead of whole words

- Semantic WER: Focuses on meaning preservation rather than exact word matches
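To make the difference between these metrics concrete, here is a rough sketch under stated assumptions. Character Error Rate is computed with the same edit-distance idea as WER, applied to characters. For semantic WER, which has no single standard definition, the sketch substitutes a much cruder stand-in: normalizing known-equivalent forms before scoring. The `EQUIVALENTS` table and all function names are hypothetical.

```python
from typing import List, Sequence

# Hypothetical, deliberately tiny table of equivalent forms. A real
# meaning-aware metric would need far more than a lookup table.
EQUIVALENTS = {"can't": "cannot", "won't": "will not", "it's": "it is"}


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Minimum substitutions, insertions, and deletions to turn ref into hyp."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = curr
    return prev[-1]


def normalize(text: str) -> List[str]:
    """Lowercase, split on whitespace, and expand known-equivalent forms."""
    words: List[str] = []
    for token in text.lower().split():
        words.extend(EQUIVALENTS.get(token, token).split())
    return words


def wer(reference: str, hypothesis: str, normalized: bool = False) -> float:
    """Word error rate; assumes a non-empty reference."""
    if normalized:
        ref, hyp = normalize(reference), normalize(hypothesis)
    else:
        ref, hyp = reference.lower().split(), hypothesis.lower().split()
    return edit_distance(ref, hyp) / len(ref)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate; assumes a non-empty reference."""
    return edit_distance(reference, hypothesis) / len(reference)
```

With these definitions, `wer("I cannot attend", "I can't attend")` counts one error in three words, while passing `normalized=True` yields zero. `cer("jumps", "jumped")` is 0.4, since two character edits are needed against a five-character reference.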

What accuracy to expect: Current benchmarks

The best speech-to-text models can achieve very high accuracy in perfect conditions, such as professional recording studios with expensive microphones and no background noise. But your real-world results will be different.

You'll typically see much lower accuracy when processing phone calls, video conferences, or recordings with background noise. This isn't a flaw in the technology; the audio itself is simply harder to transcribe.

Benchmark vs. real-world performance gap

Marketing materials often show near-perfect accuracy because they test on ideal datasets. These are usually audiobook recordings with clear pronunciation and zero background noise. Your users aren't reading from scripts in recording studios.

You should expect your real-world accuracy to be lower than benchmark claims. The difference between ideal conditions and real use is normal and expected.

Companies building successful products with Voice AI plan for this reality from the start.

Key factors that affect accuracy

Three main things determine whether your speech-to-text system works well or poorly. Understanding these helps you set realistic expectations and find ways to improve performance.

Audio quality factors

Your microphone quality can matter as much as, or more than, the AI model you choose. Even the most advanced AI can't recover information that wasn't captured properly in the first place.

- Microphone distance: Closer microphones capture clearer audio with less background noise

- Background noise: Even moderate office chatter can significantly hurt accuracy

- Audio compression: Heavily compressed files lose audio information the AI needs

- Recording environment: Hard surfaces create echo that blurs word boundaries

A decent USB microphone will give you better results than an expensive AI model running on poor audio.

Speaker-related factors

Different speakers present different challenges for speech recognition systems. Non-native accents remain one of the biggest accuracy hurdles, though modern models handle diversity better than older systems.

Speaking pace affects accuracy too. Very fast or very slow speech can confuse the AI model's timing expectations.

Multiple speakers create the hardest challenge. When people talk over each other, accuracy drops dramatically because the AI can't separate the voices clearly.

Vocabulary and content challenges

Specialized terminology consistently causes recognition errors unless you're using models trained for your specific field. Medical terms, legal language, or technical acronyms will have higher error rates than everyday vocabulary.

Proper nouns like names, companies, or products challenge even advanced models because they're often unique and not well-represented in training data.

- Numbers: "Fifteen" versus "fifty" sound similar and create ambiguity

- Alphanumerics: Phone numbers and addresses need special formatting rules

- Domain terminology: Medical, legal, and technical terms need specialized models

How to improve speech-to-text accuracy

You can significantly improve accuracy through both better audio input and smarter system configuration. Many improvements cost nothing beyond changing your workflow.

Audio input optimization

Start with the basics that give you immediate results:

- Use a quality microphone: Even a basic USB microphone dramatically improves results over built-in laptop mics

- Position correctly: Keep microphones 6-12 inches (roughly 15-30 cm) from the person speaking

- Control environment: Close windows, turn off fans, use soft furnishings to reduce echo

- Choose better formats: Use lossless formats such as WAV or FLAC instead of lossy MP3 when possible

For meetings with multiple people, individual microphones for each person work much better than a single conference room microphone.

System configuration and features

Modern speech recognition models offer settings that significantly impact accuracy. Domain-specific models trained on medical, legal, or financial conversations recognize specialized vocabulary much better than general models.

You can also provide context about expected vocabulary through prompting features. This is particularly effective for company names, product terms, or industry-specific language.

- Keyterms prompting: Boost recognition of important terms, names, or phrases

- Confidence scoring: Identify uncertain words that might need human review

- Post-processing: Use AI models to correct obvious errors based on context

- Multiple models: Run several systems and compare outputs for critical transcriptions
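As an illustration of the confidence-scoring idea in the list above, the sketch below flags low-confidence words for human review. It assumes your speech-to-text system returns word-level results that can be mapped to dictionaries with `text` and `confidence` keys. That shape, the 0.8 threshold, and the function names are all assumptions for this example, not any particular vendor's API.

```python
def words_needing_review(words, threshold=0.8):
    """Return (index, text) pairs for words whose confidence is below threshold."""
    return [
        (index, word["text"])
        for index, word in enumerate(words)
        if word["confidence"] < threshold
    ]


def mark_transcript(words, threshold=0.8):
    """Render the transcript with uncertain words wrapped as [word?]."""
    return " ".join(
        f"[{word['text']}?]" if word["confidence"] < threshold else word["text"]
        for word in words
    )


# Hypothetical word-level output; real systems use their own field names.
sample = [
    {"text": "Schedule", "confidence": 0.98},
    {"text": "fifteen", "confidence": 0.55},
    {"text": "units", "confidence": 0.93},
]
print(mark_transcript(sample))  # prints: Schedule [fifteen?] units
```

The right threshold depends on your audio and on how costly a missed error is, so it is worth tuning against a sample of transcripts that humans have already checked.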

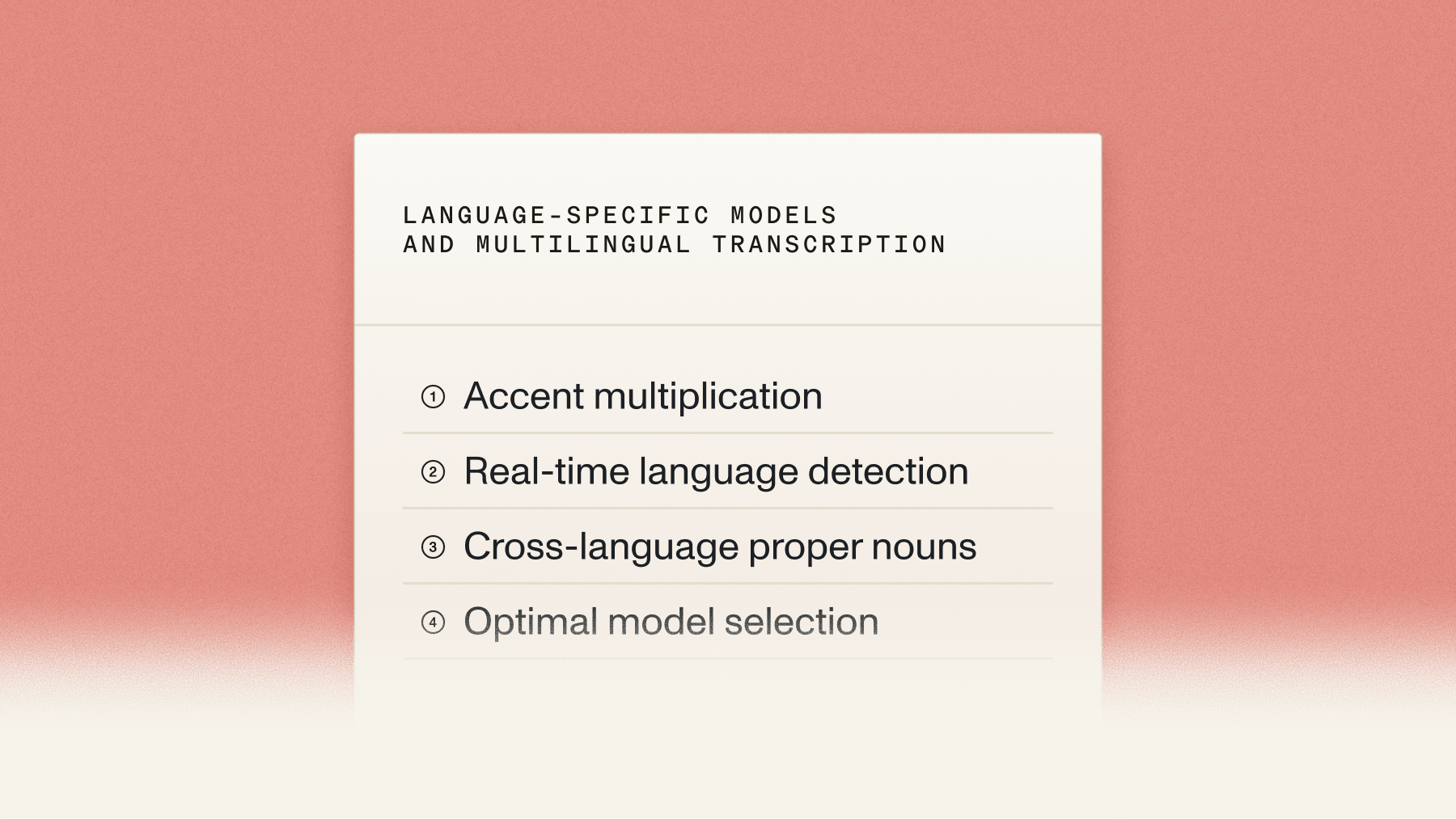

Language-specific models and multilingual transcription

Language-specific models often outperform general multilingual models for accuracy. A model trained only on Spanish will typically do better on Spanish audio than a model trained on 50 languages. This happens because language-specific models can focus all their capacity on understanding one language's nuances.

The challenge comes with code-switching—when speakers alternate between languages within the same conversation. This is common in multilingual communities where people might switch between English and Spanish mid-sentence.

Multilingual speech recognition faces unique challenges:

- Accent multiplication: Every language has multiple accent variations to handle

- Real-time language detection: The system needs to recognize language switches instantly

- Cross-language proper nouns: Names from one language appearing in another create confusion

- Optimal model selection: Different languages work best with different AI architectures

You face a trade-off when handling multiple languages. Separate language-specific models give you maximum accuracy but require detecting when speakers switch languages. Single multilingual models simplify your setup but reduce overall accuracy.
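One way to picture this trade-off is as a routing decision. The sketch below assumes a language-detection step has already run and produced a list of language codes for the recording. The model identifiers are placeholders invented for the example, not real product names.

```python
# Hypothetical model identifiers used only for illustration.
LANGUAGE_SPECIFIC_MODELS = {
    "en": "english-only-model",
    "es": "spanish-only-model",
}
MULTILINGUAL_MODEL = "multilingual-model"


def choose_model(detected_languages):
    """Pick a model name from the languages detected in one recording."""
    languages = set(detected_languages)
    if len(languages) == 1:
        (language,) = languages
        # Use the dedicated model when one exists; otherwise fall back.
        return LANGUAGE_SPECIFIC_MODELS.get(language, MULTILINGUAL_MODEL)
    # No detection result, or several languages (code-switching):
    # route to the multilingual model.
    return MULTILINGUAL_MODEL
```

Here `choose_model(["es"])` selects the Spanish-only placeholder, while `choose_model(["en", "es"])` falls back to the multilingual one. The accuracy of the routing depends entirely on how reliable the upstream language detection is.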

AssemblyAI offers two distinct approaches to this challenge. Universal-2 supports 99 languages for broad multilingual coverage, while Universal-3 Pro delivers the highest accuracy on 6 major languages with advanced prompting capabilities. This lets you choose between broader language support or maximum accuracy based on your specific needs.

Final words

Understanding speech-to-text accuracy measurement and optimization makes the difference between frustrating implementations and successful products. The key insight is that perfect accuracy isn't your goal; useful accuracy is.

A system with good accuracy plus features like confidence scoring, custom vocabulary, and fast processing often serves your needs better than one claiming perfect accuracy without these capabilities. AssemblyAI's Universal-2 delivers industry-leading accuracy across 99 languages for diverse accents and conditions, while Universal-3 Pro offers the highest accuracy on 6 major languages with advanced prompting capabilities and streaming transcription to address real production challenges beyond basic accuracy metrics.

Frequently asked questions

What Word Error Rate percentage is considered good for business applications?

A WER of 5-10% represents high quality for most business applications, while anything above 20% indicates poor quality that will frustrate users. Voice command systems typically need very low WER for reliability, while meeting transcription can tolerate higher WER since humans review the output.

Why does my speech-to-text accuracy drop significantly with phone call audio?

Phone call audio uses heavy compression and a limited frequency range (traditional telephone lines are typically sampled at 8 kHz), both of which remove audio information that speech recognition models need. Phone systems also introduce artifacts, echo, and packet loss that degrade audio quality compared with direct microphone input.

Can speech-to-text models achieve the same accuracy as professional human transcriptionists?

Human transcriptionists maintain very high accuracy for critical tasks by using context, experience, and multiple passes to fill gaps. AI models approach human performance in ideal conditions but struggle with challenging scenarios like overlapping speech or poor audio quality where humans excel.

How do regional accents affect speech recognition accuracy across different English dialects?

Regional accents can reduce accuracy significantly depending on training data representation. Models trained primarily on American English might struggle with Scottish, Indian, or Australian English accents, though modern multilingual models show improved robustness across accent variations.

What preprocessing steps improve speech-to-text accuracy for recorded audio files?

Noise reduction and volume normalization can improve accuracy, and keeping audio in a lossless format avoids further degradation. Converting an already-compressed file to WAV does not restore the information that was lost. Capturing clean audio initially works better than trying to fix poor recordings afterward, since preprocessing can introduce artifacts that confuse speech recognition models.
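As a small illustration of volume normalization, the sketch below applies peak normalization to audio samples. It assumes the audio has already been decoded into floating-point samples between -1.0 and 1.0. The function name and the 0.9 target are illustrative choices, and the sketch does not attempt noise reduction.

```python
def peak_normalize(samples, target_peak=0.9):
    """Scale samples so the loudest one reaches target_peak in absolute value."""
    peak = max((abs(sample) for sample in samples), default=0.0)
    if peak == 0.0:
        # Silent or empty input: nothing to scale.
        return list(samples)
    gain = target_peak / peak
    return [sample * gain for sample in samples]


quiet_recording = [0.1, -0.2, 0.05]
louder = peak_normalize(quiet_recording)
```

Peak normalization applies one gain to the whole recording, so it raises background noise along with speech. It helps with recordings that are uniformly too quiet, not with noisy ones.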
