Streaming speaker diarization: How to identify who's speaking in real time

Streaming speaker diarization identifies who is speaking in real time with low-latency labels. Learn how it works and when to use it for live apps.

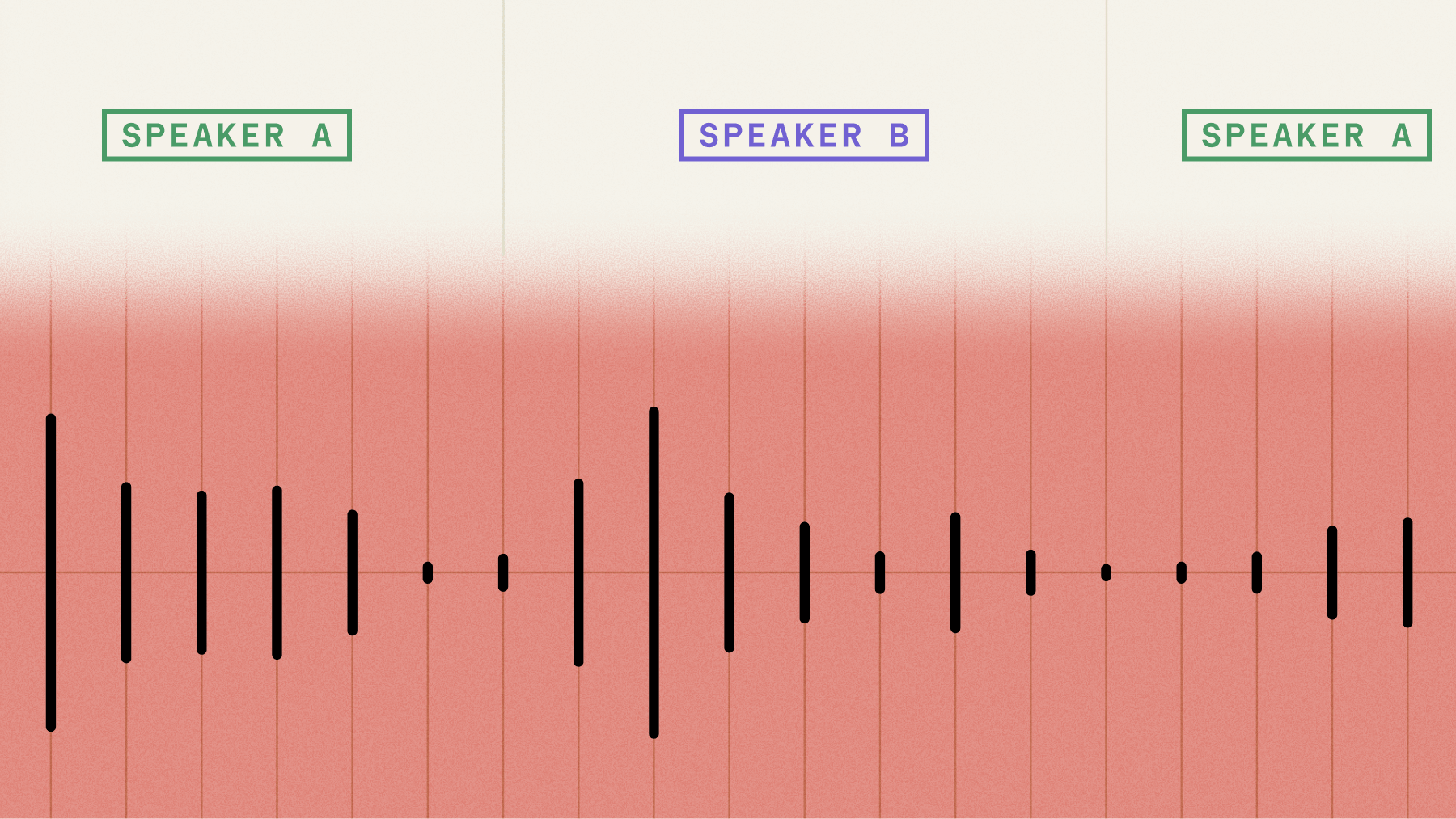

Streaming speaker diarization identifies who's speaking during live audio capture, assigning speaker labels like SPEAKER_A and SPEAKER_B in real time as conversations unfold. Unlike traditional batch processing, which waits for a complete recording, streaming diarization assigns a speaker to each turn shortly after the turn ends, while the conversation is still in progress. This creates a fundamental constraint: once the system assigns a speaker label to audio, that decision is permanent and cannot be revised later.

This real-time capability matters when your application needs to act on speaker identity during conversations rather than afterward. Voice agents routing responses based on who's speaking, live contact center coaching systems, and meeting platforms showing labeled transcripts to participants all depend on immediate speaker attribution. The technology trades some accuracy for speed, but enables entirely new categories of voice applications that weren't possible with batch-only processing.

What is streaming speaker diarization?

Streaming speaker diarization is the real-time identification of who's speaking during live audio capture. The technology assigns speaker labels (SPEAKER_A, SPEAKER_B) as audio arrives, rather than waiting for a complete recording.

Here's the key difference: traditional batch diarization waits for your entire audio file before processing it. Streaming diarization works while the conversation is still going, deciding who said what shortly after each speaker finishes a turn.

Think of a customer service call where the CRM system needs to track which parts came from the agent versus the customer—right now, not after the call ends. That's streaming diarization in action.

The technology faces a critical constraint that shapes everything about how it works. Once it assigns a speaker label to a piece of audio, that decision is final. Unlike batch processing, streaming offers no way to go back and fix mistakes.

Accuracy builds over time: Early in a conversation, speaker assignments might be less stable because the system has limited data to work with. As more audio comes in, the assignments get more reliable.

You need streaming diarization when speaker attribution matters during the conversation itself—for real-time agent coaching, live meeting transcription, or voice agents that need to know who's talking to respond appropriately.

How does streaming speaker diarization work?

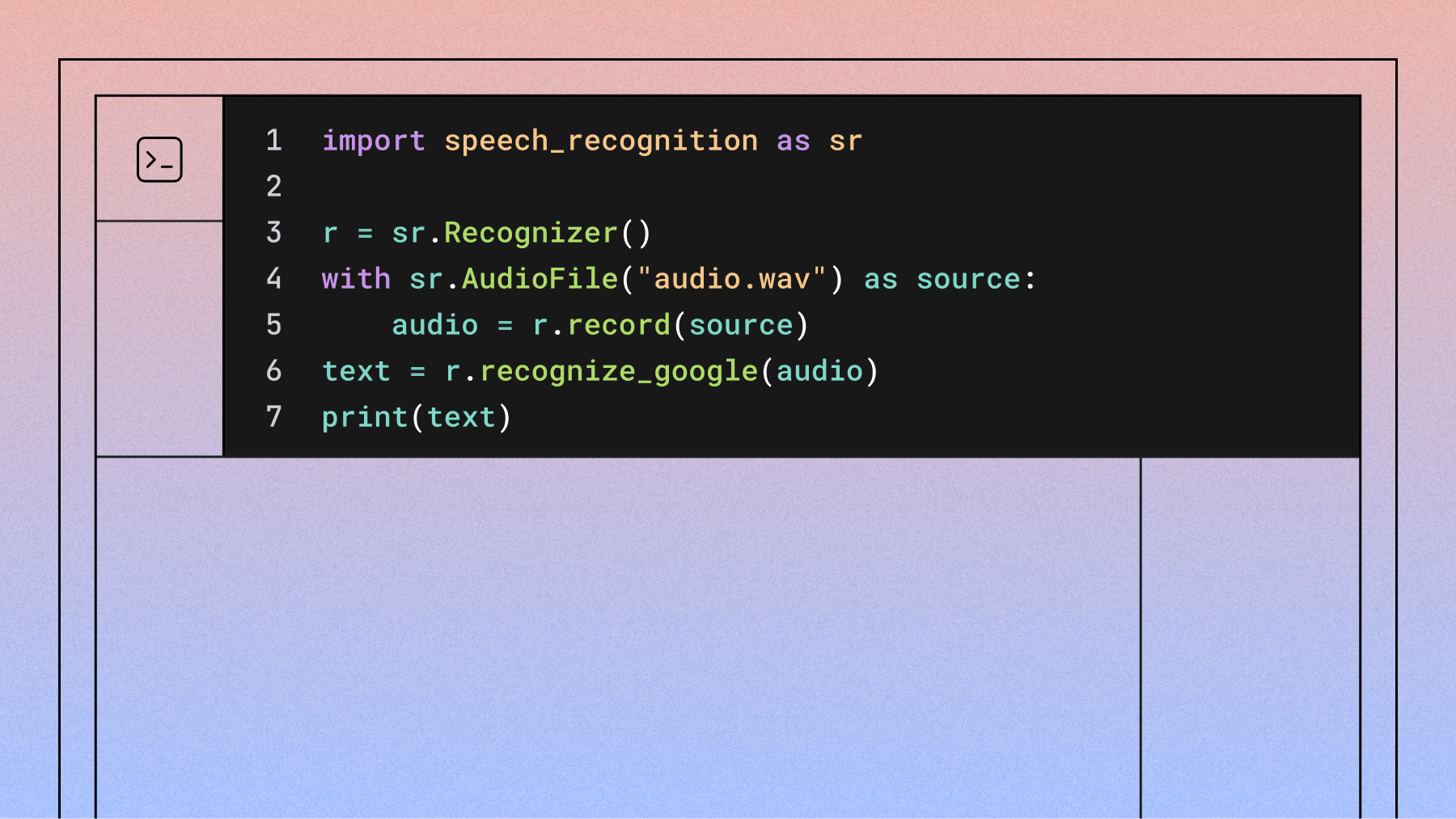

Streaming diarization builds on top of speech-to-text technology to identify speakers in real time. The process follows three main steps: detecting when someone stops talking, creating a voice fingerprint for that turn, and deciding if it matches a known speaker or represents someone new.

The system depends heavily on speech recognition to determine turn boundaries. When the speech-to-text model decides a speaker has finished talking, that triggers the diarization process for that chunk of audio.

Chunked processing and windowing

The system doesn't process random chunks of audio—it works with complete speaker turns. A "turn" means one person speaking from start to finish, like saying "Hello, how can I help you today?" before the other person responds.

This approach gives you two major benefits. First, it keeps natural speech boundaries intact instead of chopping up words mid-sentence. Second, it aligns perfectly with transcription output so you get both the words and the speaker labels together.

Turn boundary accuracy matters: If the speech-to-text gets confused about when turns end, the speaker assignments will be wrong too. The two systems need to work together seamlessly.

The system maintains a "speaker cache"—a memory bank of voice characteristics from recent turns in the conversation. For each new turn, it compares the new voice against this cache to decide if it's from a known speaker or someone new.

Here's what makes streaming different: it can't look ahead. The system must make decisions using only the audio it has received so far, unlike batch processing that can analyze the entire recording before making any assignments.

- Session start challenge: The first couple of turns often get misassigned because there isn't enough voice data yet

- Self-correction: As the conversation continues, assignments become more stable and accurate

- No second chances: Once a turn gets labeled, that label sticks—there's no going back to fix it
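
The turn-by-turn comparison described above can be sketched as a small online assigner. This is an illustration only, not AssemblyAI's implementation: the `OnlineDiarizer` class, the cosine-similarity comparison, and the 0.7 threshold are assumptions made for the example, and the toy three-dimensional vectors stand in for real voice embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class OnlineDiarizer:
    """Hypothetical streaming assigner: sees one turn at a time, never looks ahead."""

    def __init__(self, threshold=0.7):
        self.threshold = threshold  # assumed similarity cutoff for "same speaker"
        self.cache = []             # one reference embedding per known speaker
        self.committed = []         # speaker indices already emitted; never rewritten

    def on_turn(self, embedding):
        # Find the closest known speaker using only what has been heard so far.
        best_idx, best_sim = None, -1.0
        for idx, reference in enumerate(self.cache):
            sim = cosine(embedding, reference)
            if sim > best_sim:
                best_idx, best_sim = idx, sim
        # No close match: treat the turn as a new speaker.
        if best_idx is None or best_sim < self.threshold:
            self.cache.append(list(embedding))
            best_idx = len(self.cache) - 1
        # Commit the decision; later turns cannot change it.
        self.committed.append(best_idx)
        return best_idx


diarizer = OnlineDiarizer()
for emb in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]):
    diarizer.on_turn(emb)
print(diarizer.committed)  # [0, 1, 0]
```

Because `committed` is append-only, a wrong early guess stays in the output even after later turns make the speaker profiles clearer, which is the "no second chances" behavior in miniature.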

Speaker caching and memory management

The speaker cache is where the system stores voice fingerprints from everyone who's spoken so far. When new audio comes in, it extracts a voice fingerprint (called an embedding) and compares it to everything in the cache.

The embedding model itself doesn't learn or change during a conversation. It's like a fixed calculator that always produces the same output for the same voice input. Only the clustering part accumulates memory, building up profiles of each speaker as more turns come in.
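
One way to picture "fixed embedding model, accumulating cluster memory" is a per-speaker profile whose centroid is a running mean of the embeddings assigned to it. The `SpeakerProfile` class and the running-mean update below are assumptions for illustration; the article does not say how the production system aggregates turns.

```python
from dataclasses import dataclass


@dataclass
class SpeakerProfile:
    """Hypothetical per-speaker memory: a centroid plus a turn count."""

    centroid: list
    turns: int = 1

    def update(self, embedding):
        # Incremental mean: new_mean = old_mean + (x - old_mean) / n.
        # The embedding model is untouched; only this stored profile changes.
        self.turns += 1
        n = self.turns
        self.centroid = [c + (e - c) / n for c, e in zip(self.centroid, embedding)]


profile = SpeakerProfile(centroid=[1.0, 0.0])
profile.update([0.0, 1.0])
print(profile.centroid, profile.turns)  # [0.5, 0.5] 2
```

Each additional turn moves the centroid less than the one before, which matches the observation that assignments stabilize as more audio arrives.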

Speaker labels follow arrival order. The first person to speak becomes SPEAKER_A, the second becomes SPEAKER_B, and so on. These labels stay consistent throughout the conversation—SPEAKER_A always refers to the same person.
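
Arrival-order labeling can be sketched as a mapping from internal cluster IDs to letters, handed out the first time each cluster appears. The `ArrivalOrderLabeler` class and the integer cluster IDs are hypothetical; only the SPEAKER_A, SPEAKER_B naming comes from the text above.

```python
import string


class ArrivalOrderLabeler:
    """Hypothetical helper: the first cluster seen becomes SPEAKER_A, the next SPEAKER_B, ..."""

    def __init__(self):
        self._labels = {}  # cluster id -> label, fixed once assigned

    def label_for(self, cluster_id):
        if cluster_id not in self._labels:
            index = len(self._labels)
            if index >= len(string.ascii_uppercase):
                raise ValueError("more speakers than single-letter labels")
            self._labels[cluster_id] = "SPEAKER_" + string.ascii_uppercase[index]
        return self._labels[cluster_id]


labeler = ArrivalOrderLabeler()
print([labeler.label_for(c) for c in [7, 3, 7, 9]])
# ['SPEAKER_A', 'SPEAKER_B', 'SPEAKER_A', 'SPEAKER_C']
```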

Short utterances create problems: Brief responses like "yes" or "okay" don't provide enough voice data for reliable identification. The system might label these as UNKNOWN or incorrectly assign them to the previous speaker.
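
A simple guard against unreliable short turns might look like the sketch below: if a turn is too short or the best match is too weak, emit UNKNOWN instead of guessing. The function name, the one-second minimum, and the 0.7 similarity floor are all assumptions for illustration, not documented thresholds.

```python
UNKNOWN = "UNKNOWN"


def label_turn(duration_s, similarity, candidate_label,
               min_duration_s=1.0, min_similarity=0.7):
    """Return candidate_label only when the evidence looks strong enough.

    duration_s: length of the turn in seconds.
    similarity: match score between the turn and the closest known speaker.
    Both thresholds are illustrative guesses, not production values.
    """
    if duration_s < min_duration_s or similarity < min_similarity:
        return UNKNOWN
    return candidate_label


print(label_turn(0.3, 0.9, "SPEAKER_A"))  # UNKNOWN
print(label_turn(2.0, 0.9, "SPEAKER_A"))  # SPEAKER_A
```

Downstream code should be ready for UNKNOWN turns, for example by displaying them without a name rather than attaching them to whoever spoke last.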

Background noise hurts accuracy: Microphone bleed, cross-talk, and environmental noise all make voice fingerprints less reliable. Clean audio with minimal overlap between speakers works best.

Balancing latency and accuracy in streaming diarization

Streaming diarization forces you to choose between speed and accuracy. More context leads to better speaker identification, but waiting for more context means higher latency. Less context enables faster responses but less stable labels.

The biggest latency factor isn't the diarization itself; it's turn detection. The system must wait for someone to stop talking before it can process their voice. With the Universal-3 Pro Streaming model using aggressive settings (min_turn_silence=100ms), turn delivery takes around 221ms, and the diarization overhead on top of that is minimal.

The context window is your main accuracy control. With only one or two turns in memory, the system struggles to distinguish similar voices. With five or more turns per speaker, the voice profiles become well-defined and assignments stabilize.

Why context matters so much: Early in a conversation, the system is essentially guessing based on limited voice data. As it hears more from each person, it builds stronger voice profiles and makes more confident assignments.

You can improve accuracy by setting the max_speakers parameter when you know how many people are talking. For a customer service call, setting it to 2 helps the system focus on just two voice profiles instead of constantly wondering if there's a third person.
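
To see why a speaker cap helps, consider one plausible interpretation, sketched below: once the cap is reached, a turn that matches nobody well is assigned to the closest existing speaker instead of opening a new one. How AssemblyAI actually applies max_speakers is not described here, so the `assign_with_cap` function, the cosine comparison, and the 0.7 threshold are assumptions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def assign_with_cap(centroids, embedding, max_speakers, threshold=0.7):
    """Return the chosen speaker index, appending to centroids for a new speaker."""
    if not 1 <= max_speakers <= 10:
        raise ValueError("max_speakers must be between 1 and 10")
    sims = [cosine(embedding, c) for c in centroids]
    best = max(range(len(sims)), key=sims.__getitem__) if sims else None
    # Confident match with a known speaker.
    if best is not None and sims[best] >= threshold:
        return best
    # Room left under the cap: open a new speaker.
    if len(centroids) < max_speakers:
        centroids.append(list(embedding))
        return len(centroids) - 1
    # Cap reached: fall back to the closest known speaker.
    return best


centroids = []
print(assign_with_cap(centroids, [1.0, 0.0], max_speakers=2))   # 0
print(assign_with_cap(centroids, [0.0, 1.0], max_speakers=2))   # 1
print(assign_with_cap(centroids, [-1.0, 0.2], max_speakers=2))  # 1
```

Without the cap, the third turn would have become a spurious third speaker; with it, a noisy turn in a two-person call is absorbed by an existing profile.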

When to use streaming speaker diarization

The decision is straightforward: use streaming when you need speaker labels during the conversation, and use batch when you need them afterward. Ask yourself: does your application need to act on speaker identity before the conversation ends?

Streaming is required for:

- Voice agents that route responses based on who's speaking

- Live contact center coaching where supervisors need real-time visibility

- Meeting transcription that shows live labeled text to participants

- Broadcast captioning for live TV or radio shows

Batch works better for:

- Post-call analysis where accuracy trumps speed

- Podcast production that needs clean speaker separation

- Legal transcription requiring high accuracy and revision capability

- Meeting summaries generated after calls complete

Consider a hybrid approach for maximum benefit. Use streaming diarization for real-time features during the conversation, then run batch diarization on the same audio afterward for higher-accuracy records. This gives you both immediate functionality and reliable documentation.
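
The hybrid pattern can be sketched as a session object that forwards each audio chunk to the live connection while also buffering it for a later batch pass. Everything named here is hypothetical: `HybridSession`, the `send_to_stream` callable, and the `run_batch` callable are placeholders for whatever streaming client and batch job your stack uses.

```python
class HybridSession:
    """Hypothetical wrapper: stream audio live and keep a copy for batch reprocessing."""

    def __init__(self, send_to_stream):
        self._send = send_to_stream  # placeholder: forwards a chunk to the live connection
        self._chunks = []            # full-session buffer for the batch pass

    def on_audio(self, chunk: bytes):
        self._chunks.append(chunk)   # keep for later
        self._send(chunk)            # act on it now

    def finish(self, run_batch):
        # placeholder: run_batch receives the complete audio once the call ends
        return run_batch(b"".join(self._chunks))


sent = []
session = HybridSession(send_to_stream=sent.append)
session.on_audio(b"\x00\x01")
session.on_audio(b"\x02\x03")
print(len(sent), session.finish(run_batch=len))  # 2 4
```

For long sessions you would likely write chunks to disk or object storage instead of holding them in memory; the in-memory list keeps the sketch short.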

Speaker count significantly affects streaming performance. The system works most reliably with two speakers, like agent-customer calls. As you add more speakers, accuracy degrades because the system has less audio per person to build reliable voice profiles.

Streaming speaker diarization solutions

Most published speaker diarization benchmarks measure batch processing, not streaming. Streaming numbers are rarely published because they're harder to measure and typically lower than batch results. When evaluating solutions, test with your actual use case rather than relying on benchmark claims.

You enable AssemblyAI's streaming diarization by adding speaker_labels: true to any streaming connection. You can set max_speakers (1-10) to hint at the expected number of people and improve accuracy. It works with Universal-3 Pro Streaming and all multilingual streaming models.
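
As a rough sketch of what setting these options could look like, the helper below builds a connection URL with the two parameters named above. Only the parameter names speaker_labels and max_speakers and the 1-10 range come from this article; the placeholder URL, the `build_streaming_url` helper, and the choice to pass options as query-string values are assumptions, so check the official API reference for the real endpoint and format.

```python
from urllib.parse import urlencode

# Placeholder endpoint for illustration only; not a real service URL.
PLACEHOLDER_URL = "wss://streaming.example.invalid/ws"


def build_streaming_url(base_url, max_speakers=None):
    """Return base_url with diarization options appended as query parameters."""
    params = {"speaker_labels": "true"}
    if max_speakers is not None:
        if not 1 <= max_speakers <= 10:
            raise ValueError("max_speakers must be between 1 and 10")
        params["max_speakers"] = str(max_speakers)
    return f"{base_url}?{urlencode(params)}"


print(build_streaming_url(PLACEHOLDER_URL, max_speakers=2))
# wss://streaming.example.invalid/ws?speaker_labels=true&max_speakers=2
```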

The feature is available in public beta with ongoing infrastructure scaling and model improvements. Real-world feedback from companies like Goodcall highlights strong performance: "Turn detection latency is best we have seen, transcript quality in a noisy environment unmatched."

Evaluation criteria for streaming solutions:

- Test with your actual audio conditions, not ideal laboratory samples

- Measure how quickly speaker labels stabilize in real sessions

- Check short utterance handling—can it identify brief responses?

- Verify maximum speaker count support for your use case

- Understand pricing models for your expected volume
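
For the label-stabilization check in the list above, one rough measure is the turn after which streaming labels stop disagreeing with a hand-labeled reference. The sketch below is an assumption about how you might score your own test sessions, not a standard benchmark metric: it maps each predicted label to the reference speaker it most often coincides with, then reports how many turns had passed when the last mismatch occurred.

```python
from collections import Counter, defaultdict


def stabilization_turn(predicted, reference):
    """Return the number of turns up to and including the last mislabeled turn.

    predicted: streaming labels per turn, e.g. "SPEAKER_A".
    reference: hand-labeled speakers per turn, e.g. "agent".
    A result of 0 means no turn disagreed with the majority mapping.
    """
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    # Majority vote: which reference speaker does each predicted label usually mean?
    votes = defaultdict(Counter)
    for p, r in zip(predicted, reference):
        votes[p][r] += 1
    mapping = {p: counts.most_common(1)[0][0] for p, counts in votes.items()}
    # Find the last turn where the mapped label disagrees with the reference.
    last_error = -1
    for i, (p, r) in enumerate(zip(predicted, reference)):
        if mapping[p] != r:
            last_error = i
    return last_error + 1


predicted = ["SPEAKER_A", "SPEAKER_A", "SPEAKER_B", "SPEAKER_A", "SPEAKER_B"]
reference = ["agent", "customer", "customer", "agent", "customer"]
print(stabilization_turn(predicted, reference))  # 2
```

Running this across many recorded sessions gives a distribution rather than a single number, which is more informative when comparing vendors on your own audio.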

AssemblyAI's improvements to its speaker embeddings specifically targeted the failure modes that hurt streaming performance most: quiet segments, short responses, background noise, and overlapping voices. Since streaming can't revise its decisions, getting these edge cases right matters more than in batch processing.

Final words

Streaming speaker diarization enables voice applications where you need to know who's speaking while the conversation happens, not just afterward. The core trade-off is clear: you sacrifice some accuracy for real-time delivery, since the system can't revise its decisions after seeing the complete recording.

AssemblyAI provides this capability through a simple speaker_labels: true parameter on Universal-3 Pro Streaming and its multilingual streaming models. While currently in public beta with ongoing improvements, it addresses the key challenge of building voice applications that need immediate speaker attribution rather than post-conversation analysis.

Frequently asked questions

How fast can streaming speaker diarization identify speakers?

With AssemblyAI's Universal-3 Pro Streaming model and min_turn_silence=100ms, you get turn delivery in around 221ms with minimal additional overhead from speaker identification. The main delay comes from waiting for someone to finish speaking, not from processing their voice. Note that other streaming models use different turn detection systems and may have different latency characteristics.

Can streaming diarization separate overlapping speech?

No—when two people talk simultaneously, streaming diarization assigns the entire overlapping segment to one speaker. If you frequently deal with cross-talk, use separate microphones for each speaker to eliminate the overlap problem entirely.
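
With one microphone per speaker, attribution comes from the channel rather than from voice matching, and the remaining work is interleaving the per-channel transcripts by time. The sketch below assumes each channel is transcribed separately into (start_seconds, text) pairs; the `merge_channels` helper and that data shape are hypothetical.

```python
def merge_channels(channel_turns):
    """Interleave per-channel turns into one timeline.

    channel_turns: dict mapping a speaker name to a list of (start_seconds, text).
    Returns a list of (start_seconds, speaker, text) sorted by start time.
    """
    merged = [
        (start, speaker, text)
        for speaker, turns in channel_turns.items()
        for start, text in turns
    ]
    merged.sort(key=lambda turn: turn[0])
    return merged


timeline = merge_channels({
    "agent": [(0.0, "Hello"), (2.5, "Sure")],
    "customer": [(1.2, "Hi"), (2.4, "I need help")],
})
for start, speaker, text in timeline:
    print(f"{start:>4.1f}s {speaker}: {text}")
```

Overlapping turns simply appear next to each other in the merged timeline, each with its own speaker, instead of being collapsed into one label.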

How many speakers can streaming diarization handle accurately?

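The AssemblyAI max_speakers parameter accepts values from 1 to 10. In practice, streaming diarization works most reliably with two speakers, such as agent-customer calls, and accuracy degrades as more speakers join because the system has less audio per person to build reliable voice profiles.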

Why is batch diarization more accurate than streaming?

Batch diarization can analyze the complete recording before making any speaker assignments, allowing it to revise and optimize labels across the entire audio file. Streaming must commit to labels immediately with only partial context, limiting its ability to correct early mistakes.

Can prompting improve streaming diarization for short responses?

No—prompted speaker attribution is an experimental feature designed for async (pre-recorded) transcription and does not reliably work for streaming. The streaming API processes shorter audio segments that don't provide enough context for prompt-based speaker attribution to function properly. For streaming applications with short utterances, rely on the automatic speaker diarization feature rather than prompting.
