Turn detection vs forced endpoints in voice AI: Why getting this wrong tanks your UX

Voice agent turn detection explained: compare model-based detection with VAD forced endpoints, and tune silence thresholds and confidence scores to stop interruptions and delays.


Turn detection determines when a user has finished speaking in a voice conversation, so it directly controls whether your AI agent responds at the right moment or creates awkward interruptions and delays. It analyzes speech patterns and silence to decide when your agent should start responding, which makes it fundamental to natural conversation flow.

Getting turn detection wrong ruins the user experience through two critical failures: cutting users off mid-sentence while they're still speaking, or leaving uncomfortable silence after they've clearly finished. The configuration approach also differs completely between models: Universal-3 Pro Streaming uses punctuation patterns, while Universal-streaming models use confidence scores. Using the wrong parameters for your chosen model guarantees conversation problems that frustrate users and break engagement.

What is turn detection in voice agents

Turn detection is the system that identifies when a user has finished speaking in a conversation. This means your voice agent knows when it's appropriate to start responding instead of waiting or interrupting.

The process works like this: audio input arrives, turn detection analyzes the speech and silence patterns, then decides whether to trigger response generation. This happens continuously in real-time during every conversation.

Think of it like knowing when to speak in human conversation: jump in too early and you're rude; wait too long and you get awkward silence.

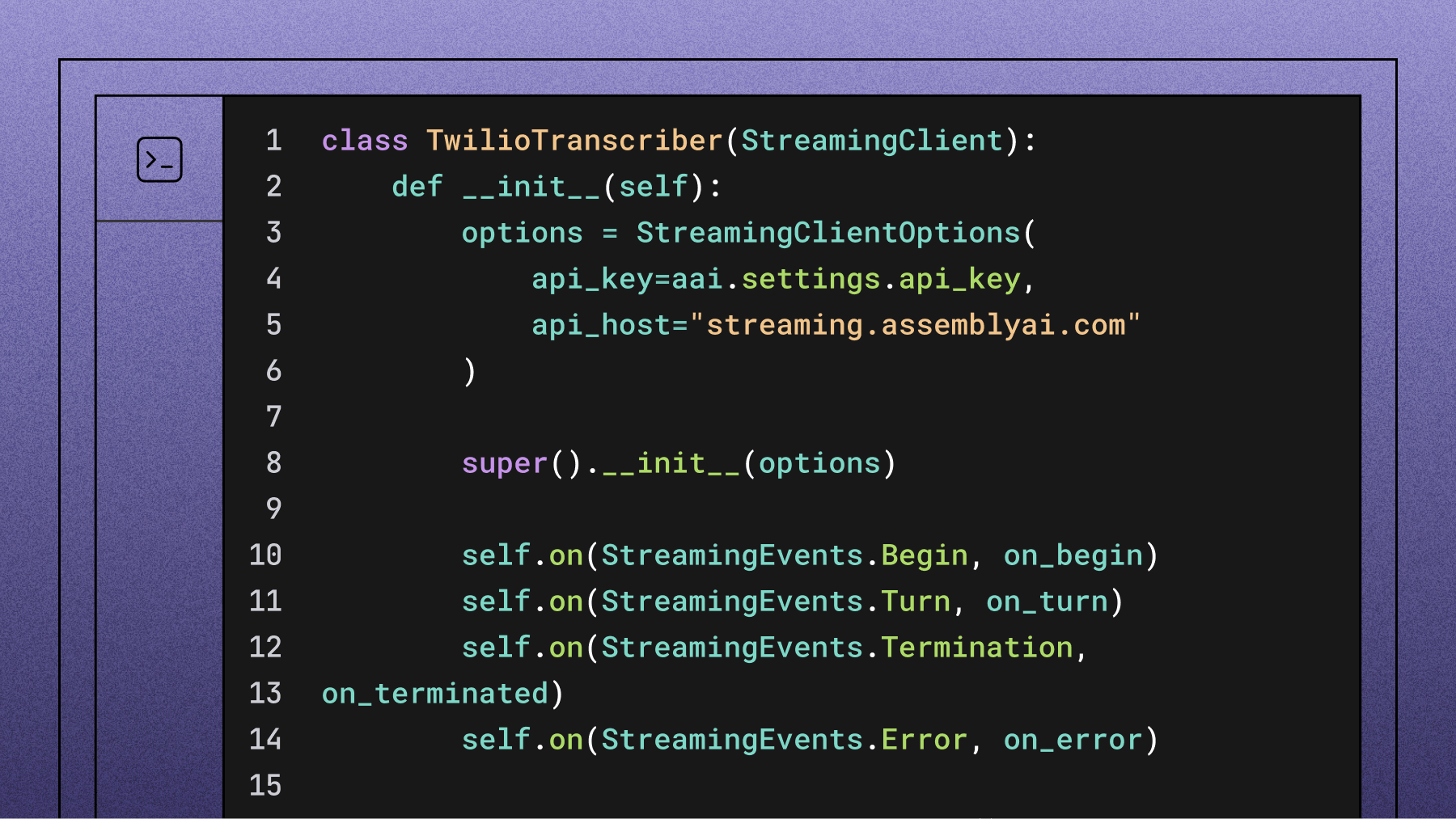

Different speech-to-text models handle this differently. Universal-3 Pro Streaming (u3-rt-pro) uses punctuation patterns to detect turn completion. Universal-streaming models use numerical confidence scores. Both can use Voice Activity Detection (VAD) as an alternative.

Automatic detection vs forced endpoints

You have two fundamental approaches to handle when turns end in your voice agent.

Automatic detection uses AI models to predict speech completion. The model analyzes patterns in speech and silence to make intelligent guesses about whether the user is done talking.

Forced endpoints require explicit signals to mark turn completion. Instead of waiting for AI to decide, you set clear rules like "end the turn after X milliseconds of silence" or "end when the user releases a button."

How automatic turn detection works

U3-rt-pro uses punctuation-based detection. When silence reaches your configured duration, the model checks the transcribed text for terminal punctuation like periods, question marks, or exclamation points. Find terminal punctuation and the turn ends. No terminal punctuation means the user is still talking.
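The decision rule in the paragraph above can be sketched as a small function. This is a simplified model written for illustration, not AssemblyAI's implementation; the function name is hypothetical, the 100 ms minimum matches the u3-rt-pro default mentioned later, and the 1000 ms ceiling is an assumed example value.

```python
# Simplified model of the punctuation-based check described above.
# NOT AssemblyAI's implementation; the 1000 ms ceiling is a hypothetical value.
TERMINAL_PUNCTUATION = (".", "?", "!")


def punctuation_turn_ended(transcript: str, silence_ms: int,
                           min_turn_silence_ms: int = 100,
                           max_turn_silence_ms: int = 1000) -> bool:
    """Return True if the turn should end under punctuation-based rules."""
    if silence_ms < min_turn_silence_ms:
        # Not enough silence yet, so the text is not even checked.
        return False
    if silence_ms >= max_turn_silence_ms:
        # Safety-net timeout: end the turn whatever the punctuation says.
        return True
    # Between the two thresholds, terminal punctuation decides.
    return transcript.rstrip().endswith(TERMINAL_PUNCTUATION)


print(punctuation_turn_ended("What's my account balance?", silence_ms=150))  # True
print(punctuation_turn_ended("I want to check my,", silence_ms=150))         # False
```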

The system prompt controls this behavior directly. The default prompt tells the model exactly when to use periods versus commas, giving you control over turn boundaries through natural language instructions.

Universal-streaming works with confidence scores instead. The model generates a number between 0 and 1 indicating how confident it is that the user finished speaking. Above your threshold, the turn ends. Below the threshold, it keeps waiting.
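For comparison, here is a minimal sketch of the confidence-threshold rule under the same assumptions. The function is hypothetical; 0.7 comes from the starting range suggested later in this article, 400 ms is the universal-streaming min_turn_silence default mentioned below, and the 1000 ms ceiling is an illustrative value.

```python
# Simplified model of confidence-threshold end-of-turn logic (universal-streaming style).
# Not AssemblyAI's implementation; default values are illustrative assumptions.
def confidence_turn_ended(end_of_turn_confidence: float, silence_ms: int,
                          end_of_turn_confidence_threshold: float = 0.7,
                          min_turn_silence_ms: int = 400,
                          max_turn_silence_ms: int = 1000) -> bool:
    """Return True if the turn should end under confidence-threshold rules."""
    if not 0.0 <= end_of_turn_confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if silence_ms < min_turn_silence_ms:
        return False  # too early to decide
    if silence_ms >= max_turn_silence_ms:
        return True   # timeout ends the turn regardless of confidence
    # Treating a score equal to the threshold as "done" is an assumption here.
    return end_of_turn_confidence >= end_of_turn_confidence_threshold


print(confidence_turn_ended(0.85, silence_ms=500))  # True
print(confidence_turn_ended(0.50, silence_ms=500))  # False
```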

Key differences between models:

- U3-rt-pro: Uses punctuation patterns and prompt engineering

- Universal-streaming: Uses numerical confidence thresholds

- Both: Support VAD-based forced endpoints as backup

VAD forced endpoints bypass model intelligence entirely. When VAD detects silence, it immediately sends an endpoint signal. With u3-rt-pro, this still triggers a final transcription of buffered audio.
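To make "bypass model intelligence" concrete, the sketch below uses a crude energy-based VAD: once speech has started, enough consecutive quiet frames fire an endpoint with no look at the words at all. The frame size, energy threshold, and 300 ms silence window are hypothetical values, and production VADs are typically model-based rather than a simple RMS threshold.

```python
import math
from collections.abc import Iterable, Iterator


def rms(frame: list[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1, 1])."""
    return math.sqrt(sum(s * s for s in frame) / len(frame)) if frame else 0.0


def vad_forced_endpoints(frames: Iterable[list[float]], frame_ms: int = 20,
                         energy_threshold: float = 0.02,
                         silence_ms_to_end: int = 300) -> Iterator[int]:
    """Yield the index of each frame at which a forced endpoint fires."""
    in_speech = False
    silence_ms = 0
    for i, frame in enumerate(frames):
        if rms(frame) >= energy_threshold:
            in_speech = True       # speech resets the silence counter
            silence_ms = 0
        elif in_speech:
            silence_ms += frame_ms
            if silence_ms >= silence_ms_to_end:
                yield i            # endpoint: no model judgment involved
                in_speech = False
                silence_ms = 0


speech = [[0.5] * 320] * 10    # 10 loud frames (200 ms at 20 ms/frame)
silence = [[0.0] * 320] * 20   # 20 silent frames (400 ms)
print(list(vad_forced_endpoints(speech + silence)))  # [24]
```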

When forced endpoints make sense

Some scenarios work better with predictable boundaries than intelligent detection.

Push-to-talk interfaces make model detection redundant since the button release clearly signals turn completion. Compliance scenarios need guaranteed complete message delivery without interruption risk.

Structured data collection benefits from explicit boundaries. When gathering phone numbers or account details, you want clean segmentation between each piece of information.

High-noise environments can confuse intelligent detection, but simple silence thresholds cut through background chaos.

Common forced endpoint patterns:

- Pipecat Mode 1: VAD controls boundaries, model provides fast finals

- Push-to-talk: Button release triggers immediate endpoint

- Timeout-based: Fixed silence duration ends turns
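The push-to-talk pattern is the simplest of these to sketch: the button owns the turn boundary, so pauses inside the held period never end a turn. The class below is a hypothetical illustration, not part of any SDK; it just buffers audio bytes while the button is held and emits the turn on release.

```python
from dataclasses import dataclass, field


@dataclass
class PushToTalkTurns:
    """Hypothetical push-to-talk endpointing: the button, not silence, ends turns."""
    finished_turns: list[bytes] = field(default_factory=list)
    _buffer: bytearray = field(default_factory=bytearray)
    _pressed: bool = False

    def press(self) -> None:
        self._pressed = True
        self._buffer.clear()

    def audio(self, chunk: bytes) -> None:
        # Audio only counts while the button is held; pauses are irrelevant.
        if self._pressed:
            self._buffer.extend(chunk)

    def release(self) -> bytes:
        # Releasing the button is the endpoint, immediately.
        self._pressed = False
        turn = bytes(self._buffer)
        self.finished_turns.append(turn)
        return turn


ptt = PushToTalkTurns()
ptt.audio(b"noise")          # ignored: button not held
ptt.press()
ptt.audio(b"call me ")
ptt.audio(b"at 555 0123")
print(ptt.release())         # b'call me at 555 0123'
```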

Critical warning: don't combine VAD endpoints with model detection simultaneously in u3-rt-pro. Both systems try to end the same turn, creating race conditions and unpredictable timing.

Why bad turn detection destroys voice UX

Poor configuration creates two major failure patterns that break the conversation experience.

The first problem is cutting users off mid-sentence. Your agent interrupts before the user finishes their thought, creating frustration and confusion.

The second problem is awkward response delays. After the user clearly finishes speaking, the agent sits in uncomfortable silence before responding.

Both failures have specific technical causes you can fix through proper configuration.

Cutting users off mid-sentence

This happens when your detection settings are too aggressive for natural speech patterns.

The most common cause is min_turn_silence set too low. Values under 100ms can split entities like phone numbers or email addresses mid-phrase. A user saying "Call me at 555-0123" gets cut off after "555" because the brief pause between the number groups triggers turn detection.
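The effect of the threshold on entity splitting can be simulated with word-level pauses. The timings below are hypothetical, and the sketch takes the worst case where every pause that reaches min_turn_silence ends a turn (as if the completion check always passed), so it shows the mechanism rather than real model behavior.

```python
def split_turns(words: list[tuple[str, int]], min_turn_silence_ms: int) -> list[str]:
    """Split (word, pause_after_ms) pairs wherever a pause reaches the threshold.

    Worst case: every qualifying pause is treated as a turn end, as if the
    completion check always passed once it ran.
    """
    turns: list[str] = []
    current: list[str] = []
    for word, pause_after_ms in words:
        current.append(word)
        if pause_after_ms >= min_turn_silence_ms:
            turns.append(" ".join(current))
            current = []
    if current:
        turns.append(" ".join(current))
    return turns


# Hypothetical timings: an 80 ms pause between the two number groups.
utterance = [("Call", 40), ("me", 30), ("at", 20), ("555", 80), ("0123", 600)]
print(split_turns(utterance, 50))   # ['Call me at 555', '0123']
print(split_turns(utterance, 100))  # ['Call me at 555 0123']
```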

Pipecat's smart turn analyzer creates another cutting-off scenario. The analyzer buffers about 8 seconds of audio. Multiple hesitations like "Yes... And— Do you have—" fill this buffer. When the user finally asks a clear question, the buffer is full of incomplete patterns. The system predicts the turn is still incomplete, hits the 3-second timeout ceiling, and cuts them off.

Solutions for different causes:

- Entity splitting: Increase min_turn_silence to 100ms minimum

- Buffer overflow (Pipecat integration): Switch to Mode 2 for hesitant speakers

- Filler words: Use Strict Rules prompt to handle "um" and "uh" properly

Awkward silence and response delays

Long delays after a clearly completed statement confuse users and break conversation flow.

Users finish with statements like "What's my account balance?" then wait through 3+ seconds of silence. They assume the system didn't hear them and start repeating just as the response finally arrives.

This usually happens when min_turn_silence is set too high. Values over 400ms force users to wait through unnecessary silence before turn detection even begins checking for completion.

Sometimes max_turn_silence becomes the primary trigger instead of intelligent detection. This means your model-based system is failing to recognize obvious completion signals.

Quick fixes:

- Reduce min_turn_silence to 100ms for responsive conversation

- Verify format_turns: true for proper turn event delivery

- Check that max_turn_silence isn't firing first as primary endpoint
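One way to check the last item is to measure, per turn, the silence between the user's last word and the turn-end event, then see how often it lands at the max_turn_silence ceiling. The sketch assumes you record those durations from your own timestamps; the function name, the 50 ms tolerance, and the sample values are hypothetical. A high share suggests the timeout, not model detection, is ending most turns.

```python
def timeout_share(trailing_silence_ms: list[int], max_turn_silence_ms: int,
                  tolerance_ms: int = 50) -> float:
    """Fraction of turns whose end landed at (or near) the max_turn_silence ceiling.

    trailing_silence_ms: silence measured between the user's last word and the
    turn-end event, one value per turn, taken from your own timestamps.
    """
    if not trailing_silence_ms:
        return 0.0
    ceiling_hits = sum(
        1 for s in trailing_silence_ms if s >= max_turn_silence_ms - tolerance_ms
    )
    return ceiling_hits / len(trailing_silence_ms)


# Hypothetical measurements from five turns, with max_turn_silence = 1000 ms.
print(timeout_share([180, 220, 1000, 990, 150], max_turn_silence_ms=1000))  # 0.4
```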

Reducing min_turn_silence can decrease perceived response delay, though actual results vary based on audio conditions and system configuration.

Configuration parameters that determine UX

Your parameter choices directly control whether conversations feel natural or broken. Different models use different parameters, so using the wrong ones guarantees problems.

Core parameters by model:

- min_turn_silence: Minimum quiet time before checking for completion (all models)

- max_turn_silence: Forced timeout regardless of detection (all models)

- end_of_turn_confidence_threshold: Confidence trigger level (universal-streaming only)

- format_turns: Enables turn-complete event delivery (required for all)

- vad_force_turn_endpoint (Pipecat integration only): Switches between Mode 1 and Mode 2

Note: min_end_of_turn_silence_when_confident is deprecated. Use min_turn_silence instead.
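A pre-flight check can catch the model/parameter mismatches listed above before a session starts. This is a hypothetical helper, not part of any SDK: it assumes the model string "u3-rt-pro" identifies Universal-3 Pro Streaming, renames the deprecated key, and enforces format_turns and a sane min/max ordering.

```python
DEPRECATED_PARAMS = {"min_end_of_turn_silence_when_confident": "min_turn_silence"}


def validate_turn_config(model: str, params: dict) -> dict:
    """Hypothetical pre-flight check for turn-detection parameters.

    Assumes the model string "u3-rt-pro" identifies Universal-3 Pro Streaming.
    """
    cfg = dict(params)
    # Rename deprecated keys; an explicit new-style value wins if both are present.
    for old, new in DEPRECATED_PARAMS.items():
        if old in cfg:
            value = cfg.pop(old)
            cfg.setdefault(new, value)
    if model == "u3-rt-pro" and "end_of_turn_confidence_threshold" in cfg:
        raise ValueError("end_of_turn_confidence_threshold is universal-streaming only")
    if cfg.get("format_turns") is not True:
        raise ValueError("format_turns must be true")
    min_s, max_s = cfg.get("min_turn_silence"), cfg.get("max_turn_silence")
    if min_s is not None and max_s is not None and max_s < min_s:
        raise ValueError("max_turn_silence must not be below min_turn_silence")
    return cfg


print(validate_turn_config("u3-rt-pro", {
    "min_end_of_turn_silence_when_confident": 100,
    "max_turn_silence": 100,
    "format_turns": True,
}))  # {'max_turn_silence': 100, 'format_turns': True, 'min_turn_silence': 100}
```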

Confidence thresholds

The end_of_turn_confidence_threshold parameter only applies to universal-streaming models. It won't work with u3-rt-pro, so don't set it there.

For universal-streaming, this number between 0 and 1 determines when turns end. Higher values wait for clearer completion signals. Lower values trigger more aggressively on smaller pauses.

Start with 0.7-0.8 for most voice agents. Test against sample conversations and adjust based on whether you see too many interruptions or too many delays.
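Testing against sample conversations can be as simple as a threshold sweep over hand-labeled turn ends. In the sketch below, each sample pairs an end-of-turn confidence with whether the speaker had actually finished; the samples are hypothetical, not measured data. Scores at or above the threshold on unfinished speech count as interruptions, and scores below it on finished speech count as delays (the agent falls back to the timeout).

```python
def sweep_thresholds(samples: list[tuple[float, bool]],
                     thresholds: list[float]) -> dict[float, tuple[int, int]]:
    """Count interruptions and delays per candidate threshold.

    samples: (end_of_turn_confidence, speaker_actually_finished) pairs that you
    label by hand from recorded conversations.
    """
    results = {}
    for t in thresholds:
        # Ended the turn although the speaker was not done: an interruption.
        interruptions = sum(1 for c, done in samples if c >= t and not done)
        # Missed a real completion: the agent waits for the max_turn_silence timeout.
        delays = sum(1 for c, done in samples if c < t and done)
        results[t] = (interruptions, delays)
    return results


# Hypothetical labeled samples, not measured data.
samples = [(0.92, True), (0.81, True), (0.74, False),
           (0.66, True), (0.35, False), (0.58, False)]
for t, (cut_offs, waits) in sweep_thresholds(samples, [0.6, 0.7, 0.8]).items():
    print(f"threshold {t}: {cut_offs} interruptions, {waits} delays")
```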

U3-rt-pro handles confidence through punctuation instead of numbers. Periods and question marks signal high confidence the turn is complete. Commas and missing punctuation signal the user will continue speaking.

Silence duration settings

min_turn_silence controls responsiveness, while max_turn_silence provides a safety net.

For voice agents using u3-rt-pro, the default min_turn_silence of 100ms is recommended. For older universal-streaming models, the default is 400ms, which can create noticeable delays in quick exchanges. Values below 100ms risk splitting multi-word entities mid-phrase.

max_turn_silence acts as the absolute timeout. In Pipecat's Mode 1, set it equal to min_turn_silence, since VAD provides the real ceiling. In Pipecat's Mode 2, the defaults work well. For non-voice applications like meeting transcription, use 1000-2000ms.

format_turns must be set to true. This enables proper turn-complete event delivery without adding latency.

Aggressive vs balanced vs conservative tuning

In the Pipecat integration, real deployments typically choose between Mode 1 and Mode 2 rather than building custom tuning profiles.

Mode 1 (Pipecat integration) uses VAD as the turn boundary controller with model detection providing fast finals. This offers the quickest response times but may cut off thinking pauses.

Mode 2 (Pipecat integration) lets the speech model control turn detection while VAD only handles barge-in detection. This produces more natural boundaries but adds slight latency.

Mode comparison (Pipecat integration):

- Mode 1: VAD ceiling, ~280-330ms total latency, good for quick exchanges

- Mode 2: STT-controlled, slightly higher latency, better for complex queries

- Non-agent: Longer timeouts, optimized for transcription rather than conversation

Mode 2 (Pipecat integration) requires u3-rt-pro specifically because it needs the SpeechStarted event for proper barge-in handling.
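The mode rules above can be summarized as settings. This is a hypothetical helper returning a plain dict: the key names mirror this article's parameter names, it is not Pipecat's constructor signature, and mapping Mode 1 to vad_force_turn_endpoint=True is an assumption based on the mode descriptions.

```python
def pipecat_mode_settings(mode: int, model: str, min_turn_silence_ms: int = 100) -> dict:
    """Hypothetical summary of the Mode 1 / Mode 2 rules described above.

    The key names mirror this article's parameter names; this is not Pipecat's
    constructor signature. Mapping Mode 1 to vad_force_turn_endpoint=True is an
    assumption.
    """
    if mode == 1:
        # VAD provides the real ceiling, so cap the model's timeout at the minimum.
        return {"vad_force_turn_endpoint": True,
                "min_turn_silence": min_turn_silence_ms,
                "max_turn_silence": min_turn_silence_ms}
    if mode == 2:
        # The STT model owns turn boundaries; VAD only handles barge-in.
        if model != "u3-rt-pro":
            raise ValueError("Mode 2 needs u3-rt-pro for the SpeechStarted event")
        # max_turn_silence is left out so the service default applies.
        return {"vad_force_turn_endpoint": False,
                "min_turn_silence": min_turn_silence_ms}
    raise ValueError("mode must be 1 or 2")


print(pipecat_mode_settings(1, "u3-rt-pro"))
```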

Prompt engineering for turn detection

U3-rt-pro's punctuation-based approach lets you control turn detection through natural language instructions. This capability doesn't exist in confidence-score models.

The system prompt directly tells the model when to use terminal punctuation (ending turns) versus continuation punctuation (keeping turns open).

Testing shows the Strict Rules prompt significantly reduces false interruptions compared to no prompt.

Prompt performance patterns:

- Strict Rules: Fewer false interruptions, maintains accuracy for real completions

- No prompt: More interruptions on incomplete statements

- Custom prompts: Often underperform the tested default

The best-performing Strict Rules prompt includes explicit rules about punctuation usage and filler word handling. It specifically instructs the model that words like "um," "uh," "so," and "like" indicate the speaker will continue.
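The effect those rules aim for can be mirrored in a small client-side heuristic, useful for spot-checking transcripts in tests. This is an illustration only: it does not replace the prompt, is not how the model works internally, and the function name is hypothetical. It treats a turn as complete only when it ends in terminal punctuation and the last word is not one of the filler words named above.

```python
import re

FILLER_WORDS = {"um", "uh", "so", "like"}
TERMINAL_PUNCTUATION = (".", "?", "!")


def looks_complete(transcript: str) -> bool:
    """Client-side heuristic mirroring the prompt rules described above.

    A turn counts as complete only if it ends in terminal punctuation and its
    last word is not a filler that signals the speaker will continue.
    """
    text = transcript.strip()
    if not text.endswith(TERMINAL_PUNCTUATION):
        return False
    words = re.findall(r"[a-z']+", text.lower())
    return bool(words) and words[-1] not in FILLER_WORDS


print(looks_complete("What's my account balance?"))  # True
print(looks_complete("I was thinking, um."))          # False
```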

Building better voice agents with reliable turn detection

Success depends on matching your model choice to your configuration approach. U3-rt-pro and universal-streaming need completely different parameter strategies.

Start with the fundamentals: choose between automatic detection (letting AI decide) or forced endpoints (using explicit signals). Your use case determines the right approach.

For natural conversation, automatic detection feels more human-like. For structured data collection or compliance scenarios, forced endpoints provide predictable boundaries.

Configuration checklist:

- Model-specific parameters: Use confidence thresholds only with universal-streaming

- Voice agent timing: Set min_turn_silence to 100ms for responsiveness

- Event delivery: Enable format_turns: true for proper turn handling

- Backup timeout: Configure max_turn_silence as safety net

The SpeechStarted event in u3-rt-pro enables the fastest possible interruption handling. This event fires before any transcript arrives, letting you implement immediate barge-in detection.
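A barge-in handler only needs to react to that event while the agent is talking. The sketch assumes incoming messages are parsed into dicts with a "type" field equal to "SpeechStarted"; check the API reference for the exact message shape. The stop_playback and agent_is_speaking callbacks are hypothetical hooks into your own audio pipeline.

```python
from collections.abc import Callable


def make_barge_in_handler(stop_playback: Callable[[], None],
                          agent_is_speaking: Callable[[], bool]) -> Callable[[dict], None]:
    """Return a message handler that cuts agent audio as soon as the user starts talking."""
    def on_message(message: dict) -> None:
        # Act on speech onset itself; no transcript text is needed yet.
        if message.get("type") == "SpeechStarted" and agent_is_speaking():
            stop_playback()
    return on_message


stopped = []
handler = make_barge_in_handler(lambda: stopped.append(True), lambda: True)
handler({"type": "SpeechStarted"})
print(stopped)  # [True]
```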

You can update parameters like min_turn_silence during active conversations without reconnecting. This enables adaptive agents that adjust to individual speaker patterns in real-time.
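An adaptive agent needs a rule for choosing the new value. The sketch below is one hypothetical heuristic: set min_turn_silence just above the speaker's median mid-turn pause, clamped between the 100ms floor and the 400ms point where this article says delays become noticeable. The median rule and 20ms margin are assumptions; sending the value uses the streaming API's configuration-update mechanism, whose exact message format is not shown here.

```python
import statistics


def adapt_min_turn_silence(mid_turn_pauses_ms: list[int], floor_ms: int = 100,
                           ceiling_ms: int = 400, margin_ms: int = 20) -> int:
    """Pick a min_turn_silence just above this speaker's typical mid-turn pause.

    The 100 ms floor and 400 ms ceiling follow the guidance above; the median
    heuristic and 20 ms margin are illustrative assumptions.
    """
    if not mid_turn_pauses_ms:
        return floor_ms
    typical = statistics.median(mid_turn_pauses_ms)
    return int(min(ceiling_ms, max(floor_ms, typical + margin_ms)))


print(adapt_min_turn_silence([60, 90, 150]))  # 110
```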

Final words

AssemblyAI's streaming transcription models, particularly Universal-3 Pro Streaming, provide the foundation for reliable turn detection: accurate real-time transcription, customizable prompting, and an event-driven architecture that supports both intelligent detection and forced endpoints, depending on your voice agent's requirements.

Frequently asked questions

Can I combine VAD forced endpoints with automatic model detection?

Yes, but only through Pipecat's Mode 1, where VAD controls turn boundaries and the model provides fast finals. Outside that setup, don't enable both mechanisms simultaneously in u3-rt-pro, as this creates race conditions and unpredictable timing behavior.

How do I fix voice agents that cut users off mid-sentence?

Check whether min_turn_silence is below 100ms, and if so, increase it to prevent entity splitting. For Pipecat users experiencing buffer overflow with hesitant speakers, switch to Mode 2, which avoids the cumulative hesitation buffer issue.

What causes response delays after users finish speaking?

Usually min_turn_silence set too high (over 400ms) or max_turn_silence firing as the primary endpoint instead of intelligent detection. Reduce min_turn_silence to 100ms and verify your model-based detection is working properly.

Does the system prompt affect turn detection accuracy?

Only in u3-rt-pro, where punctuation placement controls turn boundaries. The Strict Rules prompt reduces false positive interruptions compared to no prompt while maintaining accuracy for real completions. Universal-streaming models don't support custom prompting for turn detection.
