This comprehensive guide compares the top 8 speech-to-text APIs in 2025, evaluating their accuracy, latency, features, and pricing to help developers choose the right Voice AI solution for their applications. We'll cover everything from integration basics to advanced features like speaker diarization and real-time streaming, plus open-source alternatives and implementation best practices.

A speech-to-text API is a cloud service that converts spoken audio into written text using AI models. These APIs process audio files or live audio streams and return transcriptions, typically as JSON with timestamps and confidence scores.
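
To make the response shape concrete, here is a minimal sketch of reading timestamps and confidence scores out of a JSON transcript. The field names (`text`, `words`, `start_ms`, `end_ms`, `confidence`) and the sample body are assumptions for illustration; each provider documents its own schema.

```python
import json

# Hypothetical response body; real field names vary by provider.
SAMPLE_RESPONSE = """
{
  "text": "hello world",
  "words": [
    {"text": "hello", "start_ms": 0, "end_ms": 420, "confidence": 0.98},
    {"text": "world", "start_ms": 480, "end_ms": 910, "confidence": 0.61}
  ]
}
"""


def low_confidence_words(response_json: str, threshold: float = 0.8) -> list:
    """Return the word entries whose confidence falls below the threshold."""
    payload = json.loads(response_json)
    return [w for w in payload.get("words", []) if w["confidence"] < threshold]


# Print each flagged word with its time range so it can be reviewed by hand.
flagged = low_confidence_words(SAMPLE_RESPONSE)
for word in flagged:
    print(f'{word["text"]} ({word["start_ms"]}-{word["end_ms"]} ms): {word["confidence"]:.2f}')
```

Flagging low-confidence words like this is one simple way to route uncertain segments to human review.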

Start by testing APIs with your actual audio data. Accuracy varies significantly based on audio quality, accents, and specialized vocabulary that your application might encounter.

Test speech-to-text on your audio

Compare accuracy and latency for batch and streaming in a no-code Playground. Upload a clip, switch modes, and view timestamps and confidence instantly.

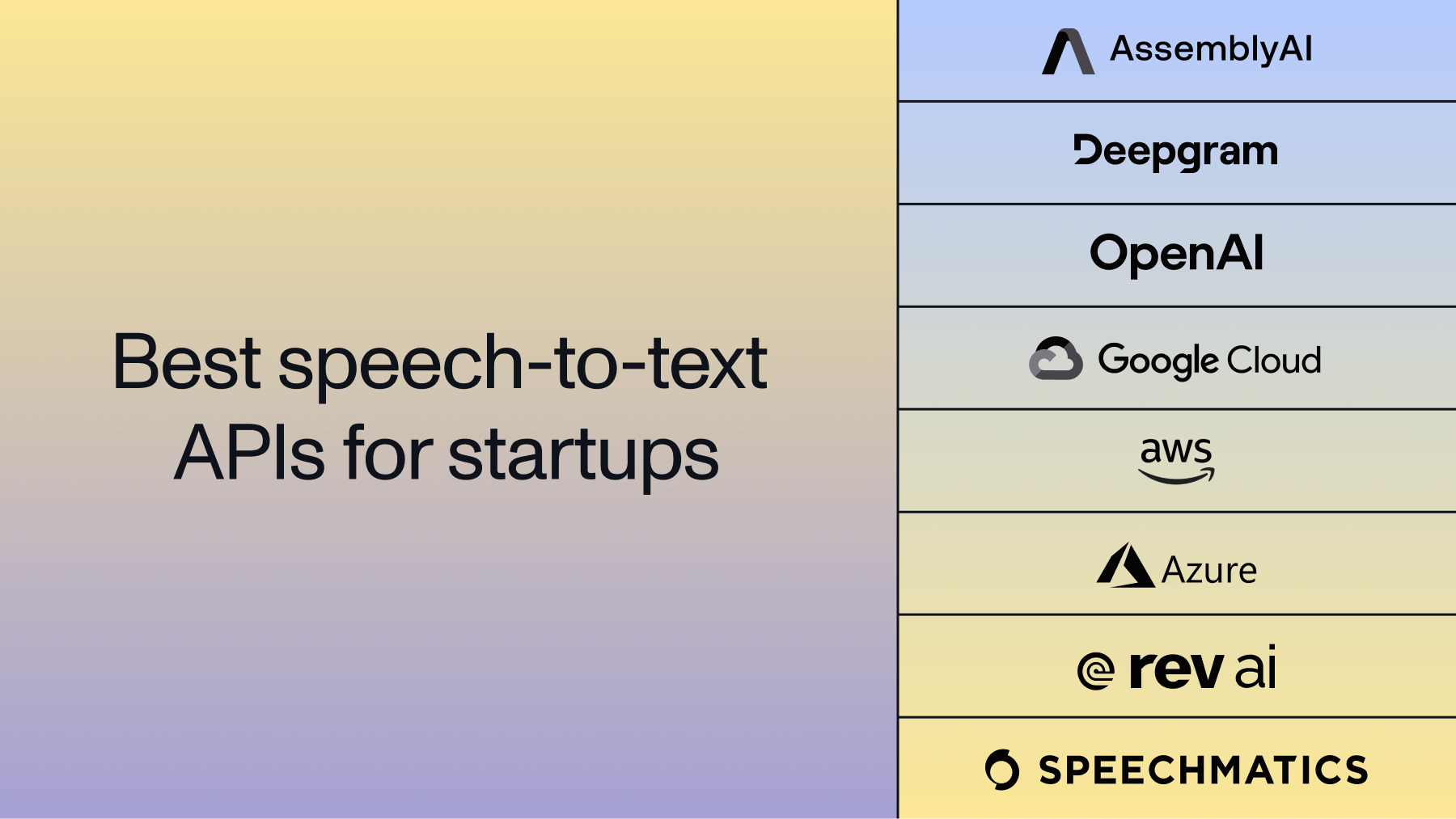

Top 8 speech-to-text APIs in 2025

These APIs represent the current leaders in speech recognition technology, each with unique strengths for different applications.

1. AssemblyAI

AssemblyAI's Voice AI platform delivers high accuracy through its Universal models: Universal-3-Pro for highest accuracy, Universal-2 for broad language support, and Universal-3 Pro Streaming for real-time applications. The platform goes beyond basic transcription with built-in speech understanding capabilities like sentiment analysis and entity detection.

Developers appreciate AssemblyAI's straightforward integration process and comprehensive documentation. The API handles both batch and real-time streaming transcription with consistent accuracy across both modes.

Main features:

- Universal-3-Pro, Universal-2, and Universal-3 Pro Streaming models optimized for different use cases

- Built-in speaker diarization for multiple speakers

- PII redaction for compliance requirements

- Sentiment analysis and entity detection

- Auto chapters and key phrases

- Summarization

- Topic detection

Ideal for:

- AI meeting assistants and notetakers

- Call center analytics platforms

- Content creation and podcasting applications

- Healthcare transcription with business associate agreements

Pricing:

- Pay-as-you-go hourly rates

- Volume discounts for high usage

- Free tier with initial credits

Build with AssemblyAI's speech-to-text

Sign up for an API key and start transcribing files or streaming audio with high accuracy. Access speaker diarization, sentiment analysis, entity detection, and PII redaction from one API.

2. Deepgram

Deepgram's Nova-2 model emphasizes speed and efficiency, achieving low latency for streaming transcription. Its end-to-end deep learning approach processes audio directly without intermediate steps.

Deepgram's batch API also handles large-scale processing efficiently for recorded content.

Pricing:

- Nova-2 per-minute rates for pay-as-you-go

- Growth plans with volume discounts

- Enterprise custom pricing available

3. OpenAI Whisper

Whisper stands out as the major open-source option, offering complete control over deployment and data privacy. The model's zero-shot capability means it performs well across many languages without language-specific fine-tuning.

While Whisper lacks real-time streaming capabilities, its transformer architecture delivers strong accuracy for batch transcription. Organizations can self-host for unlimited processing or use OpenAI's hosted API.

Pricing:

- OpenAI API per-minute rates

- Self-hosted option is free but requires infrastructure

- No streaming transcription available

4. Google Cloud Speech-to-Text

Google's speech API benefits from extensive training data across many languages and integrates deeply with other Google Cloud services. The AutoML feature allows teams to create custom models for specialized vocabulary.

Multi-channel recognition processes stereo audio with separate speaker channels, which works well for call center recordings. The API's complexity can be overwhelming for simple transcription needs.

Pricing:

- Standard per-minute rates for initial usage

- Enhanced models at premium rates

- Medical and video models available

5. Amazon Transcribe

Amazon Transcribe integrates seamlessly with AWS services, making it natural for teams already using S3, Lambda, or other AWS infrastructure. The medical transcription model understands clinical terminology.

Performance varies based on audio quality and content type. The service works best for AWS-native applications where integration simplicity is more important than absolute accuracy.

Pricing:

- Standard per-minute rates

- Medical transcription at premium rates

- Call Analytics features available

6. Microsoft Azure Speech Services

Azure Speech Services offers extensive customization through its Custom Speech portal, where teams can train models on their specific audio data. The pronunciation assessment feature scores a speaker's pronunciation, which suits language-learning applications.

Integration with Microsoft's ecosystem provides advantages for Teams, Office, and Dynamics users. The service supports many languages but requires more configuration than some competitors.

Pricing:

- Standard per-hour rates

- Custom models at premium rates

- Batch transcription options available

7. Rev AI

Rev AI focuses exclusively on English transcription, achieving high accuracy through models trained on professionally transcribed audio. The async API handles file uploads efficiently while streaming provides real-time transcription.

The narrow language focus means Rev AI excels at English but lacks support for global applications. Its straightforward API design appeals to developers who need reliable English transcription.

Pricing:

- Machine-only per-minute rates

- Async API with premium rates

- Volume discounts available for high usage

8. Speechmatics

Speechmatics uses self-supervised learning for speech recognition, which helps its models adapt to new accents and languages rapidly. The platform offers both cloud and on-premise deployment options.

Real-time transcription maintains consistent latency across supported languages. Custom deployments can optimize for specific use cases, though this requires working directly with their sales team.

Pricing:

- Custom pricing based on usage volume

- On-premise licensing available

- Contact sales for detailed quotes

Open-source speech-to-text alternatives

Open-source speech recognition engines offer complete control over your transcription pipeline, eliminating vendor dependencies and recurring API costs. These solutions work best when you have technical expertise for deployment and maintenance.

Whisper leads the open-source space with its transformer-based architecture that handles many languages without fine-tuning. The largest model achieves commercial-grade accuracy but requires significant GPU resources to transcribe at or near real-time speed.

Vosk runs efficiently offline on mobile devices and embedded systems, supporting multiple languages with compact models. It's ideal for privacy-focused applications that can't send audio to cloud services.

Kaldi remains the research standard with extensive customization options and active academic development. The learning curve is steep, but Kaldi offers unmatched flexibility for specialized applications.

wav2vec 2.0 from Meta uses self-supervised learning to achieve strong performance with minimal labeled training data. This makes it valuable for low-resource languages or domain-specific applications.

How to get started with speech-to-text APIs

Getting started with speech-to-text APIs requires just a few lines of code and an API key. Most providers offer free tiers or credits that let you test their services immediately.

First, sign up for an API key from your chosen provider. Then prepare your audio in a supported format. Most APIs accept MP3, WAV, or M4A files at a range of sample rates.
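
Before uploading, it can help to confirm what a file actually contains. The sketch below uses Python's standard `wave` module to read the channel count, sample rate, and duration of a WAV file. The helper names `describe_wav` and `make_silent_wav` are made up for this example, and the silent clip stands in for your own recording.

```python
import io
import wave


def describe_wav(source) -> dict:
    """Read basic properties from a WAV file path or file-like object."""
    with wave.open(source, "rb") as wav:
        frames = wav.getnframes()
        rate = wav.getframerate()
        return {
            "channels": wav.getnchannels(),
            "sample_rate_hz": rate,
            "sample_width_bytes": wav.getsampwidth(),
            "duration_s": frames / rate,
        }


def make_silent_wav(seconds: float, rate: int = 16000) -> io.BytesIO:
    """Build an in-memory mono 16-bit WAV of silence for demonstration."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit PCM
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(seconds * rate))
    buf.seek(0)
    return buf


# In practice, pass a path such as "meeting.wav" instead of the demo clip.
info = describe_wav(make_silent_wav(1.5))
print(info)
```

The `wave` module only reads uncompressed WAV, so compressed formats such as MP3 or M4A would need a different tool.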

Integration steps:

- API setup: Register for an account and obtain authentication credentials

- Audio preparation: Ensure your audio files are in supported formats

- Basic integration: Use REST endpoints for batch processing or WebSocket for streaming

- Error handling: Implement retry logic for network issues and rate limits
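
The error-handling step above can be sketched as a retry wrapper with exponential backoff and jitter. `TransientError` and `flaky_submit` are stand-ins invented for this example. In a real integration you would raise or catch whatever your HTTP client reports for timeouts and rate-limit responses.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for a retryable failure such as a timeout or rate limit."""


def with_retries(call, max_attempts: int = 5, base_delay: float = 0.5, sleep=time.sleep):
    """Run call(), retrying on TransientError with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Double the delay each attempt and add jitter to avoid retry bursts.
            delay = base_delay * 2 ** (attempt - 1) + random.uniform(0, base_delay)
            sleep(delay)


# Demo: a simulated request that fails twice, then succeeds.
attempts = {"count": 0}


def flaky_submit():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("simulated rate limit")
    return {"status": "queued"}


# The no-op sleep keeps the demo instant; omit it to get real delays.
result = with_retries(flaky_submit, sleep=lambda seconds: None)
print(result, "after", attempts["count"], "attempts")
```

Retrying only on errors known to be transient keeps permanent failures, such as an invalid API key, from being retried pointlessly.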

Best practices:

- Test with your actual audio data to verify accuracy

- Use webhooks for asynchronous processing instead of polling

- Implement proper error handling for production applications

- Monitor usage to optimize costs and performance
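
As a rough illustration of the webhook practice above, here is a minimal receiver built on Python's standard `http.server`. The payload fields `status` and `transcript_id` are assumptions for this sketch. Check your provider's webhook documentation for the real schema and for any signature verification it supports.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional


def completed_transcript_id(body: bytes) -> Optional[str]:
    """Return the transcript ID from a webhook body if the job completed."""
    try:
        payload = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return None
    if not isinstance(payload, dict):
        return None
    if payload.get("status") == "completed":
        return payload.get("transcript_id")
    return None


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        transcript_id = completed_transcript_id(self.rfile.read(length))
        if transcript_id is not None:
            print("ready to fetch transcript", transcript_id)
        # Acknowledge quickly; do heavy work outside the request handler.
        self.send_response(200)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Start the receiver; call this from your own entry point."""
    HTTPServer(("", port), WebhookHandler).serve_forever()
```

With a receiver like this, the provider notifies your service when a job finishes, so your application does not have to poll for status.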

Start building with AssemblyAI

Get your API key and try batch or real-time transcription, then set up webhooks and robust error handling as outlined above. Be up and running in minutes.

Frequently asked questions

What's the difference between batch and real-time speech-to-text APIs?

Batch APIs process pre-recorded audio files asynchronously, while real-time APIs transcribe live audio streams with minimal delay. Choose batch for podcasts or recorded meetings, and real-time for live captioning or voice assistants.
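
One practical difference is how audio is sent. A batch request uploads the whole file, while a streaming client sends small chunks as they are captured. The sketch below splits raw PCM audio into fixed-duration chunks. The 100 ms chunk size and the 16 kHz mono 16-bit defaults are assumptions, so use whatever your provider's streaming documentation recommends.

```python
def pcm_chunks(pcm: bytes, sample_rate: int = 16000, sample_width: int = 2,
               channels: int = 1, chunk_ms: int = 100):
    """Yield consecutive chunks of raw PCM audio, each covering chunk_ms milliseconds."""
    # Work in whole frames so a chunk never splits a sample.
    frames_per_chunk = sample_rate * chunk_ms // 1000
    chunk_size = frames_per_chunk * sample_width * channels
    if chunk_size <= 0:
        raise ValueError("chunk_ms is too small for this sample rate")
    for offset in range(0, len(pcm), chunk_size):
        yield pcm[offset:offset + chunk_size]


# Demo: 1.05 seconds of 16 kHz mono 16-bit silence (33,600 bytes).
audio = b"\x00" * 33600
chunks = list(pcm_chunks(audio))
print(len(chunks), "chunks; first", len(chunks[0]), "bytes; last", len(chunks[-1]), "bytes")
```

In a streaming client, each chunk would be written to the open connection as it becomes available instead of being collected in a list.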

How much does a speech-to-text API typically cost?

Speech-to-text APIs charge either per minute or per hour of audio processed, with rates varying based on features and accuracy levels. Free tiers usually include limited monthly minutes for testing purposes.
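
Because some providers quote per-minute prices and others per-hour prices, it helps to normalize them before comparing. The sketch below does that arithmetic. The prices are placeholders chosen for illustration, not quotes from any provider.

```python
def hourly_rate(price: float, unit: str) -> float:
    """Convert a price quoted per minute or per hour of audio to a per-hour rate."""
    if unit == "minute":
        return price * 60
    if unit == "hour":
        return price
    raise ValueError(f"unsupported billing unit: {unit}")


def monthly_cost(audio_hours: float, price: float, unit: str) -> float:
    """Estimate the cost of transcribing audio_hours of audio at the given price."""
    return audio_hours * hourly_rate(price, unit)


# Placeholder prices for two hypothetical providers, 200 audio hours per month.
per_minute_provider = monthly_cost(200, 0.005, "minute")
per_hour_provider = monthly_cost(200, 0.25, "hour")
print(f"per-minute pricing: ${per_minute_provider:.2f}")
print(f"per-hour pricing:   ${per_hour_provider:.2f}")
```

A real estimate should also account for volume discounts and any add-on features billed separately.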

Can speech-to-text APIs handle technical terminology and industry jargon?

Yes. Many speech-to-text APIs offer custom vocabulary features that help them recognize specialized terms, improving accuracy for domain-specific content. Some providers also offer pre-trained models for medical, legal, or financial terminology.

What audio formats do speech-to-text APIs support?

Most APIs accept common formats like MP3, WAV, M4A, MP4, and FLAC with various sample rates. Some providers automatically convert formats while others require specific formats for optimal performance.

How do I measure the accuracy of a speech-to-text API?

Calculate Word Error Rate (WER) by comparing transcription output to a human-verified reference transcript: count the word substitutions, deletions, and insertions, then divide by the number of words in the reference. Your actual accuracy depends on audio quality and content type, so published benchmarks may not match results on your own data.
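
As a minimal sketch, WER can be computed as (S + D + I) / N, where S, D, and I are the substitutions, deletions, and insertions in the cheapest word-level alignment and N is the number of reference words. The normalization here (lowercasing and whitespace splitting only) is an assumption. Evaluation setups often also strip punctuation and normalize numbers.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Compute WER using word-level edit distance against the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # aligning i reference words to an empty hypothesis = i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One substitution (brown -> red) and one insertion (jumps) over 4 reference words.
print(word_error_rate("the quick brown fox", "the quick red fox jumps"))
```

Running this over a sample of your own recordings gives a per-provider WER that you can compare directly.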